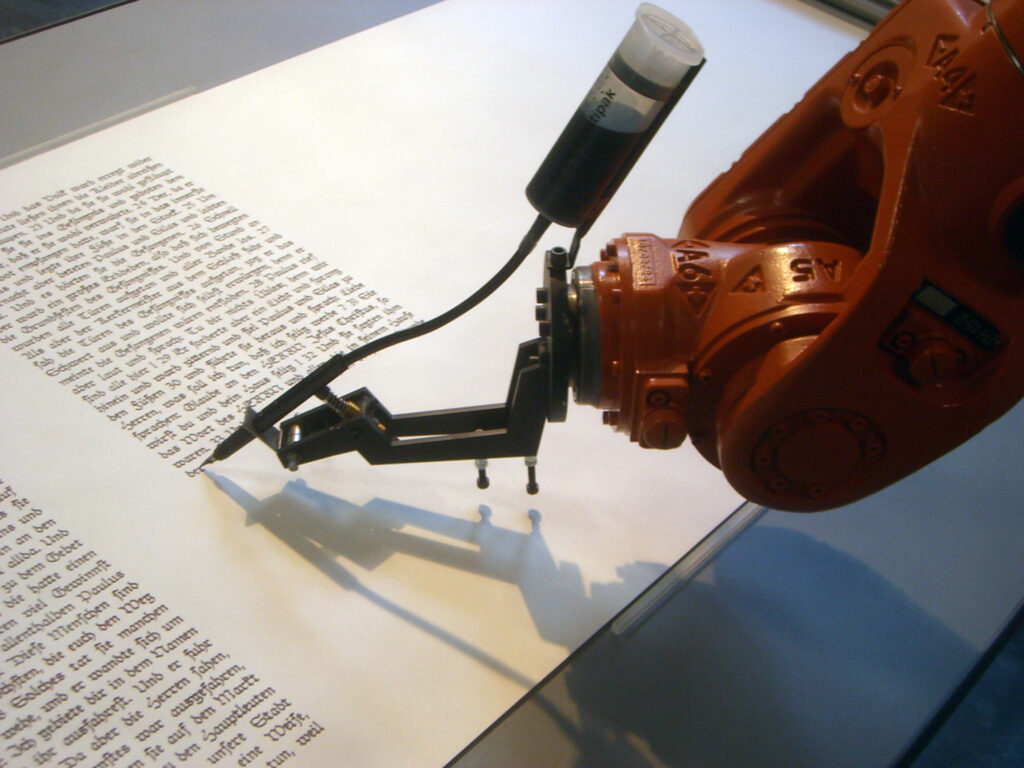

As 2022 drew to a close, a great deal of popular attention was drawn to the latest artificial intelligence chatbot, ChatGPT, which was released in November 2022 by OpenAI. (As an aside, the company has had a very interesting background and funding, which has produced a number of important AI advances.) Machine learning, natural language processing, and textual creation have made significant advances over the past decade. When the first auto-generation of content from structured data became commercially viable about a decade ago, it was reasonably easy to discern machine-generated content. This is increasingly no longer the case. Content distributors and assessors of content, be that for scholarly peer review, for academic credentialling, or simply those who consume content, should be aware of the capabilities of these tools and should not be dismissive of them.

Given the range of questions in scholarly communications around the application of AI, I thought it might be interesting to see what ChatGPT’s responses would be to some of these, along with other literary/tech questions, and to share them with you. You can review for yourself whether you think the responses are good ones or not and, if you didn’t know the source of the responses, whether you could tell that they were derived from a machine. Copied below are the questions and responses. I have not edited the responses in any way from what was output by ChatGPT. It seems we’ve moved well beyond the “Turing Test” for assessing the intelligence of a machine. You may notice there is something formulaic to some of the responses, but it’s only discernible after looking over several of them. It is important to remember, though, that the machine doesn’t “know” whether the answers are correct or not, only that they are statistically valid responses to the questions posed.

(NOTE: Yesterday, after this post was prepared, reports circulated that Microsoft was in discussions to acquire a 49% stake in the for-profit arm of OpenAI. It has been speculated that Microsoft would embed OpenAI’s technology in its suite of Office365 applications. If that were to happen, the lines would become interestingly blurred between human and machine authorship.)

TAC: What is the best question to test an AI chatbot with?

ChatGPT Response:

There is no one “best” question to test an AI chatbot, as different chatbots may have different capabilities and be designed to handle different types of tasks or interactions. However, here are a few ideas for questions that could be used to test an AI chatbot’s capabilities:

- “Can you provide information about a specific topic or answer a general knowledge question?”

- “Can you carry on a conversation and respond appropriately to follow-up questions or comments?”

- “Can you understand and respond to natural language input, even if it includes slang, colloquialisms, or typos?”

- “Can you perform a specific task or action, such as booking a reservation or searching for information online?”

- “Can you recognize and respond to different user intents or emotions?”

Ultimately, the best questions to test an AI chatbot will depend on the specific capabilities and goals of the chatbot.

TAC: Is it ethical for an author to use AI in developing a scholarly article?

ChatGPT Response:

Whether it is ethical for an author to use AI in developing a scholarly article depends on the specific context and the expectations of the research community in which the article will be published.

There is no inherent ethical issue with using AI in research or writing, as long as the AI is used appropriately and ethically. For example, AI can be used to analyze large amounts of data or to generate text, which can be useful for identifying trends or patterns that might not be evident to a person.

However, it is important for researchers to be transparent about the role of AI in their work and to ensure that the use of AI is consistent with the research community’s standards and expectations. For example, if an AI program is used to generate text, the authors should clearly state this in the article and provide appropriate credit to the AI program.

Additionally, researchers should be mindful of the potential limitations of AI and consider the risks and benefits of using it in their work. For example, AI may not be able to fully capture the complexity of human thought or language, and it may be necessary to supplement AI-generated results with additional analysis or interpretation by human researchers.

Overall, it is important for researchers to use AI ethically and transparently, and to consider the potential implications of using AI in their work.

TAC: During peer review, if an author is found to have used ChatGPT in producing the paper, should the paper be rejected?

ChatGPT Response:

The use of AI, such as ChatGPT, in the production of a scholarly paper does not necessarily mean that the paper should be rejected during the peer review process. However, the use of AI should be disclosed to the reviewers and the editor, and the reviewers should consider the extent to which the use of AI has influenced the content of the paper.

If the use of AI has significantly influenced the content of the paper, the reviewers may consider this when evaluating the paper. For example, if the AI has been used to generate large portions of the text or to analyze data in a way that is central to the paper’s findings, the reviewers may want to scrutinize the work more carefully to ensure that the AI has been used appropriately and that the paper’s conclusions are supported by the data.

Ultimately, the decision to accept or reject a paper during the peer review process should be based on the quality and rigor of the research, and the extent to which the paper makes a meaningful contribution to the field. If the use of AI has not significantly influenced the content of the paper, or if the paper meets the standards of the research community, it is unlikely that the paper would be rejected solely on the basis of the use of AI.

TAC: Can AI-derived text be novel and creative?

ChatGPT Response:

There is ongoing debate about the extent to which AI-derived text can be considered novel and creative. Some argue that AI-generated text is simply a reflection of the data and algorithms it was trained on, and therefore cannot be considered truly novel or creative in the same way that a human-generated work can be. Others argue that AI can be used to produce works that are original and creative, particularly if the AI is given some degree of autonomy in the creative process.

It is important to note that the level of creativity and novelty in AI-generated text can vary depending on the specific AI system and the parameters it was trained on. Some AI systems are designed to produce text that is very similar to human-written text, while others may produce text that is more experimental or unusual.

Ultimately, whether AI-generated text is considered novel and creative may depend on the expectations and standards of the audience or research community in which the text is being presented. It is possible that some audiences may consider AI-generated text to be creative and original, while others may not.

TAC: Whose responsibility is it to acknowledge to the reader the role of AI in the authorship of published works, the author or the publisher?

ChatGPT Response:

It is generally the responsibility of the authors to acknowledge the role of AI in the authorship of published works. Authors should be transparent about the extent to which AI was used in the production of their work, and should disclose this information to the publisher and the readers of the work. This can be done through the use of footnotes, citations, or other methods of attribution.

Publishers also have a role to play in ensuring that the use of AI in published works is disclosed and properly attributed. Publishers should establish clear guidelines for authors to follow when using AI in their work, and should review submissions to ensure that the use of AI is properly disclosed and attributed.

Overall, it is important for both authors and publishers to be transparent about the role of AI in the production of published works, in order to ensure that readers have a full understanding of the work and the extent to which it was produced using AI.

TAC: How big a problem is predatory publishing?

ChatGPT Response:

Predatory publishing, also known as deceptive or illegitimate publishing, is a significant problem in the academic world. Predatory publishers operate by charging authors fees to publish their work without providing the editorial and publishing services that legitimate publishers do, such as peer review and copyediting. As a result, the published work may not meet the standards of quality and integrity that are expected in the academic community.

Predatory publishing can undermine the integrity of the scientific record, and it can also deceive authors, particularly those who are new to the publishing process or who may not be familiar with the practices of legitimate publishers. It is important for authors to be aware of predatory publishers and to do their due diligence when considering where to submit their work for publication.

TAC: How will the OSTP Nelson memo affect publishing in 2023?

ChatGPT Response:

I’m sorry, but I don’t have information about the OSTP Nelson memo or how it might affect publishing in 2023. My training data only goes up until 2021, and I am not able to browse the internet or access any new information.

TAC: Should AI-driven text be copyrightable?

ChatGPT Response:

Under most copyright laws, original works of authorship, including literary works, are eligible for copyright protection. This includes works that are produced using artificial intelligence (AI). In general, if a work is original and has been fixed in a tangible form (such as a written or recorded work), it is eligible for copyright protection, regardless of whether it was produced by a person or by AI.

However, there may be certain legal issues that arise when it comes to AI-generated works and copyright. For example, if an AI program is used to generate a work, it is not clear who the “author” of the work is. Some countries have specific laws addressing this issue, while others do not.

It is also possible that a work produced by AI could be considered a “derivative work,” which is a work based on one or more preexisting works. In this case, the copyright in the derivative work would be held by the person or entity that created the work, but they would need to obtain permission from the copyright holder of the preexisting work in order to use it as a basis for the derivative work.

Overall, the question of whether AI-generated works are eligible for copyright protection is a complex legal issue that may vary depending on the specific circumstances and the laws of the country where the work was created. It is important to consult with a qualified attorney if you have questions about copyright and AI-generated works.

TAC: Should markup in publishing be used more for conveying appearance or semantics?

ChatGPT Response:

Markup in publishing refers to the use of codes or symbols in a document to indicate how the document should be structured or formatted. There are two main types of markup: structural markup, which is used to indicate the structure and organization of a document, and presentational markup, which is used to indicate how a document should be formatted or styled.

In general, it is generally considered more effective and efficient to use markup to convey semantics (i.e., meaning and structure) rather than appearance. This is because semantics are more enduring and less prone to change over time, while appearance is more subjective and can be affected by factors such as the device or browser being used to view the document.

By using markup to convey semantics, it is possible to create documents that are more easily understood by both humans and machines, and that are more adaptable to different formats and platforms. This can help to ensure that the content of a document is more widely accessible and more useful in the long term.

Overall, markup languages play an important role in publishing, and can be used to convey both the appearance and semantics of content. The extent to which markup is used to convey semantics may depend on the specific needs and goals of the publication, as well as the preferences of the authors and publishers.

TAC: When will open access overtake subscription publishing of journal articles?

ChatGPT Response:

It is difficult to predict when open access (OA) publishing will overtake subscription publishing as the dominant model for journal articles. The adoption of OA publishing has been increasing in recent years, with many researchers, institutions, and funders expressing support for OA as a way to increase the accessibility and impact of research.

There are several factors that could influence the adoption of OA publishing, including the availability of funding to support OA publication fees, the policies of research funders and institutions, and the attitudes of researchers and publishers.

One of the main challenges to the widespread adoption of OA publishing is the cost. Many OA journals charge publication fees, which can be a barrier for some authors, particularly those at institutions or in countries with limited research funding. However, there are also a number of OA journals that do not charge publication fees, and there are initiatives underway to support OA publishing, such as the Plan S initiative, which aims to make all research funded by public grants fully OA by 2024.

It is likely that OA publishing will continue to grow in importance and adoption in the coming years, but it is uncertain when it will overtake subscription publishing as the dominant model for journal articles.

TAC: How will publishers adapt to a fully open access ecosystem?

ChatGPT Response:

The transition to a fully open access (OA) ecosystem is likely to be challenging for many publishers, as it requires a significant shift in the way they generate revenue. In a fully OA ecosystem, most or all research articles are made freely available to the public, and the costs of publishing are typically covered by grants or other forms of funding, rather than by subscription fees or paywalls.

One way that publishers could adapt to a fully OA ecosystem is by shifting their business models to focus on providing value-added services to authors, rather than on generating revenue from subscriptions. This could include offering high-quality editing and production services, promoting research through marketing and outreach efforts, and providing data management and preservation services.

Another option for publishers is to explore new sources of funding, such as grants from foundations or government agencies, or by partnering with universities or other research institutions.

Overall, the transition to a fully OA ecosystem is likely to require a combination of these strategies, as well as a willingness to experiment with new business models and revenue streams.

TAC: What is the role of a library if all the content is freely available?

ChatGPT Response:

Even if all content were to become freely available, libraries would still play a critical role in the research ecosystem. Here are a few ways that libraries could continue to be valuable in a fully open access (OA) environment:

- Collection development: Libraries would still be responsible for acquiring and curating collections of research materials, and for providing access to these collections to their users. This could involve selecting, purchasing, and preserving OA content, as well as traditional print materials.

- Research support: Libraries could continue to provide a range of services to support researchers, such as research consultation, data management and preservation, and training in research skills.

- Access to specialized resources: Libraries often have access to specialized resources and collections that may not be freely available to the general public, such as historical documents or rare books.

- Community engagement: Libraries can serve as hubs for community engagement and learning, hosting events and workshops, and providing access to resources and technology for local residents.

- Preservation: Libraries play a critical role in preserving the intellectual record of humanity by collecting, organizing, and storing research materials for the long term. In a fully OA environment, this role would become even more important as libraries work to ensure that research is preserved and remains accessible to future generations.

TAC: What is the best AI text development tool right now?

ChatGPT Response:

It is difficult to identify a single “best” AI text development tool, as the appropriate tool for a given task will depend on a variety of factors, including the specific needs and goals of the user, the type of text that is being generated, and the resources and expertise available.

There are many different AI text development tools available, ranging from simple online generators to more complex software programs. Some examples of AI text development tools include:

- GPT-3 (Generative Pre-trained Transformer 3): GPT-3 is a large, advanced language model developed by OpenAI that can generate text in a variety of styles and languages. It can be used for tasks such as translation, summarization, and text generation.

- ChatGPT: ChatGPT is an open-source chatbot that uses the GPT-3 language model to generate responses to user inputs. It can be used to create chatbots for customer service, marketing, or other applications.

- Hugging Face: Hugging Face is a platform that provides access to a wide range of natural language processing (NLP) models, including language models, machine translation models, and text classification models. Users can use the platform to train, fine-tune, and deploy NLP models for a variety of tasks.

- AI Dungeon: AI Dungeon is an AI-powered text adventure game that uses a language model to generate interactive stories in real-time. Users can input their own responses and choices to shape the direction of the story.

There are many other AI text development tools available, and the best tool for a given task will depend on the specific needs and goals of the user. It is important to carefully consider the capabilities and limitations of different tools before choosing one.

TAC: That is a modest answer. Which do you think is strongest for the creation of journal articles?

ChatGPT Response:

As a language model, I am not able to make judgments about the relative strengths or weaknesses of different AI text development tools. There are many different AI text development tools available, and the best tool for a given task will depend on the specific needs and goals of the user, as well as the resources and expertise available.

For the creation of journal articles, it may be useful to consider tools that are specifically designed for research or academic writing, or that have advanced language modeling capabilities. Some examples of AI text development tools that might be relevant for journal article writing include:

- GPT-3 (Generative Pre-trained Transformer 3): GPT-3 is a large, advanced language model developed by OpenAI that can generate text in a variety of styles and languages. It could potentially be used to generate text for journal articles, although it is important to carefully consider the limitations of any AI tool and to supplement the output with additional analysis and interpretation by human researchers.

- Hugging Face: Hugging Face is a platform that provides access to a wide range of natural language processing (NLP) models, including language models, machine translation models, and text classification models. Users can use the platform to train, fine-tune, and deploy NLP models for a variety of tasks, including research and academic writing.

- AI Dungeon: AI Dungeon is an AI-powered text adventure game that uses a language model to generate interactive stories in real-time. While it is not specifically designed for research or academic writing, it could potentially be used as a tool for generating ideas or inspiration for journal articles.

Ultimately, the best AI text development tool for journal article writing will depend on the specific needs and goals of the user, and it is important to carefully consider the capabilities and limitations of different tools before choosing one.

TAC: In Borges’ The Library of Babel, what is the meaning of the hexagon?

ChatGPT Response:

In “The Library of Babel,” a short story by Argentine author and poet Jorge Luis Borges, the hexagon is a symbol of the universe and the library in which it is contained. The library is described as a vast and nearly limitless space that is filled with every book that has ever been written, as well as every book that will ever be written. The library is organized in the shape of a giant, interconnected network of galleries and rooms, with each gallery or room containing a specific set of books. The structure of the library is said to be based on the shape of a hexagon, with each hexagonal room connected to six others.

The symbolism of the hexagon in “The Library of Babel” is open to interpretation, but it is often seen as a representation of the interconnectedness and complexity of the universe. The hexagon is a repeating, geometric shape that is found in many natural systems, and it is often used to symbolize balance and harmony. In the context of the library, the hexagon could represent the idea that every book and every piece of knowledge is connected to many others, and that the library as a whole is a vast, interconnected web of information.

Overall, the meaning of the hexagon in “The Library of Babel” is likely to be interpreted differently by different readers, but it is generally seen as a symbol of the vastness and complexity of the universe and the library that contains it.

TAC: What is one of the current technological limitations of AI systems?

ChatGPT Response:

One of the current technological limitations of AI systems is their inability to fully understand and replicate human-like reasoning and common sense. While AI systems can be trained to perform many tasks effectively, they often lack the ability to understand and interpret complex or nuanced situations in the same way that a human can.

For example, AI systems may struggle to understand and interpret figurative language or irony, or to apply their knowledge in novel or unexpected situations. They may also have difficulty understanding the context or implications of certain words or phrases, or the relationships between different concepts or pieces of information.

Another limitation of AI systems is their reliance on large amounts of data for training and evaluation. While AI systems can be highly effective at learning from large datasets, they may struggle to generalize their knowledge to new situations or to learn from smaller amounts of data.

Other technological limitations of AI systems include their susceptibility to bias, their inability to fully replicate human creativity or intuition, and their reliance on specific hardware and software environments.

TAC: What will people do when robots can do everything?

ChatGPT Response:

As robots and artificial intelligence continue to advance, it is possible that they will be able to perform a wider range of tasks and duties, potentially leading to some job displacement in certain industries. However, it is unlikely that robots will be able to fully replace all human labor, as there are many tasks that require human-like qualities such as creativity, empathy, and adaptability.

It is also important to consider the ethical implications of replacing human labor with robots, as this could have significant social and economic impacts. Therefore, it is important for society to carefully consider how to integrate new technologies in a way that is beneficial for all stakeholders.

One potential solution is to focus on retraining and upskilling workers for new roles that may be more suitable for humans, such as roles that involve managing and maintaining the technology, or roles that require more uniquely human skills and qualities. Another option is to consider redistributing wealth and resources in a way that allows people to pursue other interests and activities outside of paid work, such as volunteering, learning, or leisure activities.

Discussion

14 Thoughts on "Thoughts on AI’s Impact on Scholarly Communications? An Interview with ChatGPT"

ChatGPT seems to favor the Oxford comma. Maybe there’s hope for us yet.

Me: In Borges’ The Library of Babel, what is the meaning of the hamburger?

ChatGPT Response: The hamburger in Borges’ The Library of Babel is a metaphor for the infinite and chaotic nature of existence. It represents the idea that no matter how much knowledge is available, it is still impossible to make sense of the world. The hamburger is a reminder that the universe is chaotic and unpredictable, and that any attempt to make sense of it is ultimately futile.

Pfff …

In a world of widespread AI generated text, I think systems that support authenticity will need to be more robust and widely used. Although there is some level of authenticity when visiting a website of a publisher, library, or newspaper, when content is distributed off of these primary sites (such as to publishers’ linking and discovery partners) the application of ORCIDs and RORIDs will be more important. Perhaps blockchain could be of use for this purpose as well.

I’m impressed that ChatGPT considered differences among countries in its answer to the copyright question. I find that the overwhelming number of American humans discussing copyright law in international forums like this one just assume the entire world has American copyright laws, so I consider ChatGPT beat out the average human on this one.

This powerful technology forces us to consider some fundamental questions. What opportunities does this next generation of AI give to researchers and publishers, as well as bad actors (e.g. papermills)? And what does it mean for academia in general?

Are we on the verge of a huge paradigm shift?

Academics judge published articles as to their value. One must consider that an AI generated article might prove of greater value than an article authored by an academic. The reader will be influenced by where the article is published. Reviewers have the same challenge. Thus, is the article in question, whether AI or human generated, of real significance or part of today’s publish/perish persiflage?

It would be of interest if Todd could do a follow-up piece by having an AI review articles submitted for publication or published. The ramifications for academia and the publishing industry might be instructive. Subsequent reviews of the same articles as the AIs improve could have ramifications.

An AI program that is based on a statistical map does a good job providing a summary of the corpus of literature on any topic. But I do wonder how it deals with topics in which the consensus of the free-and-open literature supports a particular view, while there is scientific consensus for a competing view (e.g. anthropogenic climate change; vaccines and autism, etc.). Similarly, how would it deal with something more mundane, say the OA-citation advantage postulate, where the vast majority of journal articles, based on poorly controlled observational methods, is upended by a couple of robust RCTs?

These topics require a deeper understanding of how evidence and methodology are used in scientific knowledge, something that is not programmed into ChatGPT. Moreover, I could imagine powerful individuals/nation states using programs like ChatGPT to purposely flood the open web with misinformation for the sole purpose of distorting the search and discovery process (e.g. the cultural history of Ukraine from a Kremlin perspective).

ChatGPT is down right now (its servers are overwhelmed with searches), but I’d be interested in your thoughts on how these programs deal with scientific and other academic controversies.

Excellent points which can be applied to many humanistic, scientific and journalistic queries that might be posed to an AI-based program. “Correct” answers/facts/theories are often elusive.

For those of you who are not familiar with it, or who have not reread it in a long time, this is a great time to pull out Karel Čapek’s play “R.U.R.”, which premiered in January 1921. It is this play from which the word “Robot” originates. To learn more about the play, please see this article from MIT Press: https://thereader.mitpress.mit.edu/origin-word-robot-rur/.

I will check it out. Thanks; I appreciate the reference.

Let me “peer review” at least one answer here (though I cringe at saying I’m a peer to ChatGPT!) and point out that it got at least one answer completely wrong.

It isn’t at all difficult to predict when open access publishing will overtake subscription publishing as the dominant model for journal articles because … it already has. Given such a significant factual error, as a reader my trust in this manuscript, which fails to document its sources, is irreparably impacted. Recommendation: Reject

“Open Access surpasses subscription publication globally for the first time”

February 24, 2021

https://www.dimensions.ai/blog/open-access-surpasses-subscription-publication-globally-for-the-first-time/

See

AI et al.: Machines Are About to Change Scientific Publishing Forever https://pubs.acs.org/doi/10.1021/acsenergylett.2c02828

(See Appendix in the supporting information to write a Scientific Perspective article)

the problem with chatGPT is in this phrase in the introduction to this article: “the machine doesn’t “know” whether the answers are correct or not, only that they are statistically valid responses to the questions posed”

chatGPT doesn’t give answers or responses, rather it assembles linguistic chunks of texts taken from everywhere. the assemblage has statistical correspondence with the presence of those chunks in the sources used in the learning phase.

it would be different if these answers were the result of a specific search of specific content on the web, then clustered and classified, and then summarized. this would be useful AI, but we do not have that here.

and because of these limitations, there are all the higher level problems mentioned by Phil Davis here above.

Beyond the level of undergrad term papers, a thesis/dissertation or academic paper/article requires enough original thought (generally beyond standard Wikipedia-level writing) or creativity of expression to make this AI solution only semi-useful. The problem isn’t just the reasoning methodology but finding and interpreting sources. In history and social science there are not always “accurate” answers about, for example, the character of famous figures like Julius Caesar or Winston Churchill. Bland, non-committal, positions and language aren’t always effective or desired.

That said, this is clearly a good first step toward more sophisticated AI.