Editor’s Note: Today’s post is by Jonny Coates. Jonny, originally an immunologist, is a leading expert in preprints and metascience, focusing on science communication, trust, and research culture reform.

For more than two decades, the open access (OA) movement has been one of the most influential reform efforts in scholarly communication. It reshaped policies, business models, and expectations around the dissemination of research. Yet the movement has also faced persistent criticism, including from longtime observer and journalist Richard Poynder, who in 2023 announced that he would no longer cover open access after concluding that the movement had failed to achieve its original goals.

In an ebook outlining his reasoning, Poynder argued that open access not only fell short of its ambitions, but in some cases produced unintended consequences, including the rise of predatory publishing, the normalization of pay-to-publish models, growing inequities, and new opportunities for publishers to extract revenue through “double dipping”.

Whether or not one agrees with Poynder’s conclusion, his critique raises an uncomfortable but important question for other reform efforts in scholarly publishing. In particular, the preprint movement, once seen as a pragmatic, low-cost, researcher-driven route to openness, now faces its own moment of uncertainty.

In 2023, bioRxiv marked a decade of rapid growth in life-science preprints. Yet only two years later, the landscape looks less secure. The Chan Zuckerberg Initiative (CZI), long the most significant funder of preprint infrastructure, has largely withdrawn from the space. Advocacy organizations have shifted their priorities and lost their expertise, and adoption rates in several fields appear to be plateauing. At the same time, new initiatives such as Publish-Review-Curate (PRC) are emerging, potentially fragmenting effort and authority within the ecosystem. Questions have already been raised about the current trajectory of the preprint movement.

I revisit Poynder’s arguments about why OA faltered to examine whether the preprint community is at risk of repeating some of the same mistakes and how it might course-correct.

Argument 1: Advocates did not take ownership of their own movement and allowed co-option by legacy publishers

In his book, Poynder states that “the fundamental problem was that OA advocates did not take ownership of their own movement. They failed, for instance, to establish a central organization (an OA foundation, if you like) to organize and better manage the movement; and they failed to publish a single, canonical definition of open access.”

The general definition of a preprint is “a complete, draft version of a scientific or scholarly manuscript shared publicly on online repositories before formal peer review and publication in a journal.” However, this definition is contested, often viewed as dated and inaccurate. Evidence shows that preprints are not “draft” reports, and many are peer reviewed before posting (by colleagues or journals when preprints are submitted).

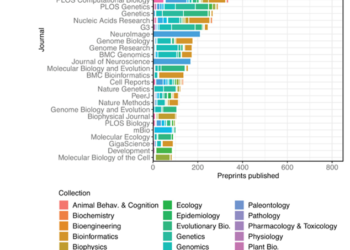

Traditional publishers increasingly dominate the space. The largest server, Research Square, is owned by Springer Nature, and each of the major publishers now operates its own server. Society-owned servers are using publisher-owned platforms, as shown by the recent announcement that chemRxiv would be moving to Wiley’s Research Exchange Preprints platform.

However, traditional publishers’ co-option extends beyond infrastructure. As Poynder states in his book, “OA advocates failed to anticipate – and then for too long ignored – how their advocacy was allowing legacy publishers to co-opt open access.” Efforts such as PRC are publisher-driven and threaten to divert attention, funding, and resources away from the basic advocacy that this model relies upon. This fragmentation threatens the entire movement. It is also a clear co-option of the preprinting movement to benefit a publisher-dominated model. As with the OA movement, this particular co-option originates not just from the legacy publishers but also from those that are more innovative. Indeed, even key advocacy efforts are currently much more aligned with publisher interests than with those of researchers.

There is hope. The most well-known bioscience preprint server, bioRxiv, is owned by the non-profit organization openRxiv, which has greater community governance and focus. This server also integrates with multiple third parties, allowing for experimentation within the scholarly communication landscape, free from restrictive and publisher-dominated workflows, such as PRC. More recently, arXiv is also becoming an independent non-profit.

The preprint movement reflects Poynder’s first argument: it lacks a central strategic organization and faces increasing fragmentation and hijacking by publisher-aligned interests. However, it has not yet completely fallen, and openRxiv offers a particular ray of hope. A significant renewed effort in advocacy and ownership will be essential to avoid falling afoul of this argument.

Argument 2: Lack of sustainability

Poynder argues that the OA movement did not “give sufficient thought to how OA would be funded”. Indeed, this is why we’ve witnessed the rise of article processing charges (APCs) and transformative agreements.

Preprint servers have relied on funding from grants and funding bodies such as the Wellcome Trust, the Howard Hughes Medical Institute (HHMI), CZI, and the Gates Foundation. As funders withdraw, servers are left to determine other sources of funding. Some are turning to charging institutions whilst also continuing to seek support from funders. Library budgets continue to shrink, and so this funding model may not be sustainable over the long term.

Although preprint servers are an order of magnitude cheaper than journals, they still have costs. Researchers who set up preprint servers on the OSF platform (run by the Center for Open Science (COS)) discovered this when COS began charging for the use of the infrastructure, resulting in several preprint servers shutting down. The rise of AI has also caused some preprint servers without screening processes (primarily OSF and psyArXiv) to temporarily stop accepting submissions. Even those servers with current screening in place are having issues with AI-generated content. For now, many servers rely on volunteers to screen preprints, but where in-house staff are used, this incurs additional costs.

Sustainability is a perennial issue that the preprint movement has not yet solved. Publisher-owned servers benefit from more secure funding (and this is one way publishers could use their profits to benefit the scientific community). However, this removes preprint servers from researcher governance and control. Efforts such as PRC risk further escalating costs in two ways. First, the services themselves are funded by organizations or funders, taking funding away from preprint servers and other efforts. Second, they introduce article review charges in addition to APCs (or article “curation” charges); reviewing charges have already been suggested. These costs are likely to be borne by authors, and therefore funders or institutions, further spreading an already thin funding source.

Poynder summarizes the sustainability problem well: “But why do I think scholar-led initiatives are unlikely to prove sustainable? Because in the main they are reliant on money from libraries and funders.” Preprints are much more sustainable than many OA approaches due to significantly lower costs and less specialization, but they do (currently) rely on libraries and funders.

Argument 3: Mandates and top-down control

Poynder puts this pointedly, describing OA efforts as “morph[ing] into a top-down system of command and control” and describing mandates as “something that resembles an administrative power grab more than a principled researcher-led movement focused on sharing”. This is a strong view of mandates, but one that (anecdotally) many researchers share.

Preprints are often promoted as restoring author control. However, it could be argued, as Poynder does, that mandating preprints removes this choice and turns the movement into a top-down effort rather than the necessary cultural shift that grassroots efforts can achieve.

Preprint mandates are increasing, too. HHMI, the Gates Foundation, and Aligning Science Across Parkinson’s have all introduced policies mandating preprints. These policies are typically motivated by legitimate goals, and yet they also illustrate how quickly a voluntary practice can become institutionalized. Perhaps a good example of supporting preprinting without mandates can be found in the EMBO policy, which supports the citation of preprints in fellowship applications but does not mandate their use by grantees. This approach can reward desired behaviors without removing researcher choices. Naturally, over time, researchers who engage with the desired behaviors will be advantaged, and others will follow suit to avoid being disadvantaged.

A significant issue with mandating preprint posting is that such efforts do not always include the necessary education, training, or outreach. This forces compliance without helping researchers understand why they should comply or how such actions are beneficial. Additionally, funders spend funds and resources on ensuring compliance with mandates, which can be challenging and expensive.

Here, the preprint space is actively pursuing mandates for preprinting. Advocates largely see these mandates as a positive development, but there is little evidence about how researchers perceive them.

Argument 4: No overarching strategy

The OA movement had “no coherent overarching strategy,” as Poynder states. “This could have perverse effects, which has in fact been an abiding feature of OA initiatives.”

As discussed under the first argument, the preprint movement never had a truly centralized organization. Key organizations that could have filled this role never provided a fully overarching strategy, and now, as preprint adoption is stalling, are focussed much more on efforts benefiting publishers. This also highlights a larger issue with preprint advocacy: current efforts have plateaued, and there is little willingness to attempt different outreach and educational activities.

Plan U represents a vision for preprints (see Argument 3 above re: mandates), but was never backed up with an organization to deliver that vision and is not a strategic document. An updated vision was presented by Richard Sever in 2023, but again, there is no clear (public) strategy on achieving this vision. If a coalition can be formed between preprint servers, researchers, institutions, and funders, then it may be possible to develop a more coherent and overarching strategy.

As discussed, some preprint-related efforts such as PRC are being developed in a manner that is likely to cause perverse effects, suggesting that the preprint movement is not only suffering from a lack of strategy but is now positioned to inflict damage: “an abiding feature of OA initiatives”.

openRxiv may attempt to provide this overarching strategy, but the fragmentation of the landscape and competing interests of those who have consolidated power could prevent progress. Regardless, the preprint movement does not have an overarching strategy and therefore falls into the same issue that the OA movement suffered from.

Argument 5: Loss of evidence-driven approaches and objectivity

Poynder determined that “many OA advocates seemed to stop being objective in their advocacy, as the “cause” began to take precedence over rational discussion, objective weighing of facts, and clear-headed assessment of the advantages and disadvantages of open access.” Unfortunately, this is becoming true of the preprint movement, too.

There is a small minority who are concentrating power within the movement and using this to further their own agendas, without self-reflection or accepting criticism. Often, these individuals have conflicts of interest, such as creating and running the services that they promote. Additionally, new efforts are often created and developed in closed, invitation-only meetings that limit participation. PRC is a prime example of a new effort developed in a manner inconsistent with open science practices and resistant to criticism. Significant evidence now exists demonstrating preprints are comparable to their peer-reviewed counterparts. However, the PRC effort centralizes peer review to advance the preprint review services reliant on such a model.

As part of this argument, Poynder stated that there is a “lack of consideration for what researchers want.” Indeed, many advocates push a one-size-fits-all approach, ignoring vital disciplinary differences. There is an increasing detachment between those involved in the preprint movement and the average researcher. Many key individuals within the movement lack a life science background, limiting their genuine understanding of the experience and motivations of these researchers. This gap leads to misguided reform efforts that add administrative burdens and increase workloads. Collectively, this can alienate researchers from the very efforts designed to improve science. In private correspondence, many researchers have raised concerns with the current directions of the open science movement.

There are several surveys of researchers’ perceptions toward preprints, yet these are not modifying advocacy efforts. For example, persistent myths and misconceptions surrounding preprints have remained since 2013, yet advocacy efforts have not substantially changed to more directly tackle researchers’ concerns. However, in some cases, this may finally be changing. In 2025, a collaboration between the preprint and assessment reform movements began — albeit with a closed meeting and a degree of moving away from preprints towards the PRC model. It remains to be seen if this will cause long-term detriment or be beneficial.

Despite this, the preprint movement is largely backed by evidence, and some of the most prominent people involved remain objective and self-reflective. Although the preprint movement does not quite meet this argument, it is beginning to waver.

“Before any new OA initiative, policy or mandate is introduced, those proposing it should be duty bound to…do due diligence on the dangers of it creating new problems or having perverse effects,” Poynder states. Those involved in academic reform efforts would do well to remind themselves of this, as when perverse effects occur, they damage the whole movement.

Argument 6: Reform movements act independently

This is not an argument that Poynder specifically made, but it is one that I believe impacts all reform efforts within academia. Many initiatives that aim to improve scholarly communication, or academia more broadly, operate in parallel rather than in coordination, limiting their overall effectiveness and slowing systemic change.

One of the key concerns for researchers adopting preprints is that they are not recognized in assessments for hiring or promotion. Yet despite this, the preprint and assessment reform movements have only very recently come together to directly collaborate. Without alignment between these movements, researchers face conflicting signals: they are encouraged to share work as preprints, yet evaluated using criteria that do not fully acknowledge those practices.

Another example is in the open access movement. Green OA, which includes the use of preprints and repository-based sharing, is often under-represented in broader open access conversations, which instead focus on Gold or Diamond OA publishing models. Therefore, policies and advocacy efforts may prioritize journal-based solutions while overlooking approaches that could be implemented more quickly and at lower cost.

Providing only partial solutions is not a strategic approach to systemic change and risks weakening the impact of reform efforts. When multiple initiatives pursue similar goals in isolation, progress becomes fragmented and slower than it could be through coordinated action. The existence of several separate reform agendas can also create confusion for researchers, who may struggle to understand which practices are expected, rewarded, or permitted within their institutions. Greater collaboration between movements would help create consistent incentives and make it easier for researchers to adopt more open and transparent forms of scholarly communication.

Avoiding another missed opportunity

The experience of open access suggests several lessons for the future development of preprints.

Community governance matters. Infrastructure that remains under academic or non-profit control is less likely to be reshaped purely by commercial incentives. This also helps to ground any efforts in genuinely serving researcher needs.

Equity must be considered early rather than retroactively. Funding models built around author payments risk reproducing the same structural inequalities already visible in APC-funded open access. It is vital that a global approach is taken, which actively includes voices from regions beyond Western Europe and the USA.

Policy debates and advocacy efforts need intellectual pluralism. Reform efforts are healthier when dissenting views and empirical evidence are taken seriously rather than treated as obstacles to momentum.

The behavior of influential actors matters. The trajectory of scholarly communication reform is often determined less by technical possibilities than by the priorities of those shaping policy, discussions, and infrastructure.

Cohesion and strategy are important. Increasing fragmentation and loss of support for vital advocacy are only damaging to the movement. Cohesion and overarching strategy provide consistent incentives and make it easier for researchers to adopt preprinting.

A pivotal moment

So, have we learned any lessons from the OA movement, and is the preprint movement about to fail in the same way?

Right now, the preprint movement sits on the precipice, and the decisions made in the next few years will prove pivotal in determining whether preprints become a stable, community-governed part of the scholarly ecosystem or drift into the same patterns of fragmentation, inequity, and co-option that have complicated the open access transition.

But another key question to ask is: is the preprint movement healthy? What’s clear is that the movement is not financially healthy, the range of voices and the level of transparency are shrinking, adoption is stalling, and the needed cultural shift has not spread beyond a minority. So whilst the movement has not failed yet, it is definitely unhealthy.

It’s not too late, but what is needed may not be attractive to funders, nor those co-opting the effort for their own agendas.

Discussion

16 Thoughts on "Guest Post — From Open Access to Preprints: Are We Repeating the Same Mistakes in Scholarly Publishing?"

I would like to suggest a sixth lesson for the future, linked to both community governance and equity: open infrastructures cannot rely on grants and good will for long-term sustainability.

The key to long term sustainability is formulating a value proposition: who finds preprints to be so important to their immediate goals that they are willing to give money to make it happen?

If the preprint movement is averse to top-down mandates (where e.g. HHMI signals that preprints are sufficiently valuable to their mission that they’re willing to mandate them for all investigators AND support the accompanying infrastructure), then the value must accrue to individual researchers: what hair-on-fire problem does preprinting solve for researchers, and how much are they willing to pay to solve that problem?

Without an answer to that question – or if the answer is $0 – then preprinting will remain niche and at the mercy of grants and good will.

I agree! My comment is less about preprints specifically than it is about open infrastructure and standards generally. As a community we are great at spinning such things up. Unfortunately we often forget to think about long-term support and sustainability. When those infrastructures then have to start charging for services to ensure they can continue operating, they’re often on the receiving end of negative comments and in some cases accusations of bad faith. Witness the recent moves by Open Alex to start generating enough income from their enhanced services to keep themselves going (https://openalex.org/pricing).

It’s the same reason I keep challenging people about what ‘diamond’ means to them. Open doesn’t mean Free. There’s always a cost somewhere in the process of setting up and then running a service (infrastructure, standard, publishing operation, whatever).

I very much agree! I’ve got a whole other article I’d like to write about open infrastructures and how funding bodies keep abandoning them in search of shiny new things. There have been some great examples over the last few years (unfortunately). On the point of good will – this is something I didn’t mention in this article but, in private conversations I’ve had with researchers, it is something that the wider open science space is losing – at least from some – but that again would be a different article (I’ve definitely got one in me on the current threats to the preprint space, free from the restrictions I gave myself in this one).

See also from 2019, “Building for the Long Term: Why Business Strategies are Needed for Community-Owned Infrastructure” https://scholarlykitchen.sspnet.org/2019/08/01/building-for-the-long-term-why-business-strategies-are-needed-for-community-owned-infrastructure/

Thanks David – that’s a great piece. Another challenging part of the dynamic is that researchers expect everything for free, especially if it’s from a non-profit or based on open infrastructure, which makes getting revenue from the actual users of the infrastructure really hard.

As an imperfect example, back in my Axios Review days I often heard that students and PIs would rather waste a year or more shopping an article around multiple journals than pay $250 for us to find somewhere that wanted to publish it. Jump over to any other industry and they’d pay you $25,000 rather than waste a year of staff time.

OVERARCHING STRATEGY

Here is the truth. The ultimate goal of research is to advance our knowledge of the world we live in, the payoff being that we will be better able to manage our affairs, which include our economic and physical health. However, the research environment differs in dictatorships and democracies. If researchers want funding, then in dictatorships they must align their research with the dictator’s goals. In democracies, they must align with the politicians who depend for support, statistically, on the goals of the average elector. Thus, the politicians are not able to attend to, or even be aware of, the researchers’ “truth.” The preprint servers have come to play an important role in inter-researcher communication. This to me counts more than what I read in formal publications. While we await the desired massive reforms of our research systems, preprint servers will continue to provide a vital role. With this in mind, yes, “If a coalition can be formed between preprint servers, researchers, institutions, and funders, then it may be possible to develop a more coherent and overarching strategy.”

Jonny – I like your approach of using Poynder’s thesis as a framework – this kind of reflection is healthy and he got a lot right. FWIW, I see the preprint movement, in part, as a response to the failures of the open access movement rather than some natural outgrowth or progression from it. All the more reason to be mindful of our past missteps.

I share many of your concerns, particularly around co-option and sustainability, but allow me to push back on some points:

1) You characterize PRC as a publisher-driven effort multiple times, but the primary real-world implementation of PRC is the model of eLife – a funder-supported nonprofit that has actually alienated much of the traditional publishing establishment by refusing to make accept/reject decisions. Hardly a publisher power grab. Moreover, the broader PRC concept emerged largely from funder- and researcher-oriented conversations and is being carried forward today by a similar constituency. If there are signs that commercial publishers are eager to adopt this model, I haven’t seen them.

2) You note that publisher-owned servers have more secure funding and rightly acknowledge preprint servers’ dependence on grants and libraries as a liability. And you state that PRC efforts threaten to divert resources away from core infrastructure. But bioRxiv’s growing integration ecosystem – which you call out as a plus – is precisely what enables PRC-style experimentation and may ultimately allow for some diversification of the value chain around preprints, including review services. Couldn’t this be part of the solution, rather than a parasitic drain?

3) In argument 3, you frame preprint mandates as top-down command-and-control, but in argument 4, you lament the lack of strategic organization to deliver on the vision of Plan U, which is an explicit call for funder preprint mandates. I don’t disagree with your general point that mandates can be harmful if they’re not done well – policy must be thoughtful and messaging is everything. You cite EMBO policy as a positive alternative, but I have not seen any evidence that such soft incentive structures change behavior “naturally, over time” – and they risk amplifying inequities. Do you have ideas for a type of coordinated action that you would consider legitimate but not coercive?

4) You are very right that researchers are often “encouraged to share work as preprints, yet evaluated using criteria that do not fully acknowledge those practices”. Perhaps a shameless plug, but HHMI’s policy not only requires preprint posting, it makes the preprints the primary unit of assessment – a real-world example of preprint and assessment reform movements working in lockstep (argument 6). One point to make here is that it is difficult to evaluate researchers on behaviors you don’t require but only encourage. And it is basically impossible to shift an assessment system toward preprints without first mandating them.

Regarding point 2, the issue with the Publish-Review-Curate model is treating it as P-R-C vs. PRC. I think it is important to hyphenate the three components so that some preprints don’t get value just because they’re reviewed. Taking peer-review at face value will lead to the same consequences that we face in traditional journal publishing today.

Hi Michele,

I’ve very much aimed to take a step back and try to have an objective look at the preprint movement, so I’m glad you liked the use of Poynder’s thesis as a framework for that. I’m hoping to stimulate some discussion and (imo, much needed) self-reflection. I would also hope that my years of advocacy and efforts in supporting preprints and many of the services now bound into PRC would signal that I do strongly support individual efforts and experimentation. This article (and the concerns I’ve not raised here) is not some outburst of discontent, but an honest effort to pause and reflect.

1 – I’m drawing this, in part, from the data in my report earlier this year where I looked at preprint evaluators and where those preprints were subsequently published. That shows eLife is the primary beneficiary of a PRC model. They’re also one of the largest supporters of PRC, driving discussions towards this specific focus. And whilst I do support eLife, they are still a publisher. Even if they’re different from the traditional publishers that doesn’t mean they can’t inadvertently cause harm – which is my concern with PRC. Traditional publishers are not yet adopting this model but very easily could – they don’t need to do much other than review and publish preprints to achieve PRC. So in one sense, many actually already are, just without explicitly saying that they engage in this model. I know many PRC advocates would argue that’s incorrect but how many of them are actually separating out the Review and Curation steps? And when they’re not genuinely separated is that PRC? If not, then eLife isn’t doing PRC. If yes, then how is PRC different from posting a preprint and it being reviewed/published by a journal? Even the PRC effort itself is full of contradictions, leading to even more confusion that just wasn’t necessary.

When I say hijacking I mean that over the past year and a half it has very much felt that PRC is not just another experiment around preprinting but is positioning to be “the” thing. This is reflected in how much it’s dominating discussions in the space and the shift I’ve seen around advocacy. Things are firmly moving away from preprints and towards reviewed preprints. As someone who’s been on the frontlines of advocating for preprints for a long time now, my experience tells me that this is not a positive switch.

I like eLife’s model. I like some of the efforts to improve peer review (Review Commons is a great idea to centralise peer review activity, PREreview are great at actually working to improve equity as examples). But I don’t believe we needed yet another entire movement with its own agenda in this space. Put that in the context of how few preprints are actually reviewed by a non-journal and it makes even less sense to me that this model is taking up so much discussion time – time away from discussing other things. I’ve heard from researchers that PRC is just causing yet more confusion in an already over-confused OS space. There are too many acronyms, too many (often rather similar) efforts and it’s impossible to know what is right to do. Again, basing this on my experience in advocacy I do think this will all amount to PRC being a net negative when we look back in however many years.

2- I didn’t have the space for proper nuance in this (it’s a long article) but I don’t believe that we needed a whole new effort in PRC to support experimentation. I’d also strongly argue that in the last year and a half PRC has been shifting from “another experiment” to something that seems to be trying to become “the” model. It restricts things to peer review rather than wider experimentation and is very much dominating discussions and the preprint space currently. This takes away from other possible experiments, takes up discussion time at meetings and conferences and takes the limited funding in this space to advance PRC instead of discussing more modern solutions.

bioRxiv does absolutely enable this kind of experimentation and I hope other efforts – AI tools, much wider trust signals etc – really make the most of this. I have for a very long time now supported preprint peer review as an activity. The problem is PRC is a very fixed workflow that – in practice – will almost certainly mean that the only preprints that “count” are those that are reviewed and curated. Indeed, we already see this reflected in some policies that only support peer reviewed preprints. This undermines one of the key advantages of preprints and risks playing into the hands of publishers who market peer review as a vital differentiator for trust. I could (maybe should) write an entire article on PRC outlining my concerns with the movement and why I strongly believe it will be damaging to preprinting.

3 – This perhaps is due to a lack of nuance. I wasn’t saying that I thought Plan U was necessarily the best approach; I was simply using it to highlight that there were attempts to provide arguments for a strategy but that these, ultimately, were not backed up with the organisations/direction required – a common issue across all reform ideas and not one limited to academia either.

I think having very clear guidance that rewards preprints and open data etc over applicants who don’t engage in such behaviour would be a good step. The point I’m trying to get at in this is that forcing people may lead to compliance but that policies are very changeable – just look at DEI and how many organisations completely abandoned their values in the past few years. The longer lasting change – the cultural shift we need – comes from advocacy and education – two things the preprint space is very much lacking at the moment. I’d love to see undergraduates and graduates being taught the history of publishing, the metascience behind peer review and communication, and why OS efforts are actually important. I’d love to see better efforts in highlighting the voices and efforts in these endeavours beyond the normal things we keep seeing. In terms of inequities, this is a concern I have with PRC. I struggle to see how a real-world implementation will not result in additional costs and a two-tier structure to preprinting whereby only those preprints that are “reviewed” and “curated” count. Prestige will remain where you were reviewed or curated (i.e. journal brands).

4 – Not sure what worth this is but I am putting together a self-directed course on preprints and one of the sessions is around preprint policies. In that I’m taking different policies as case studies and intend to use HHMI as you discuss here – a great example of a more co-ordinated policy that is backed with the necessary training too (something else I think is so often missing in these discussions, but also something HHMI actually really follow through with well).

I agree that it is difficult to shift assessment without mandates but that doesn’t necessarily mean that we shouldn’t try. If you’re evaluating a researcher and have two similar cases that differ only by the presence of a preprint (or open data etc), then that presence should be an advantage, as in one case you can confirm output/quality whereas in the other you simply trust blindly. Many non-academic careers require evidence of your ability to do the role you’re applying for, so I don’t think this would be unfair if clearly stated in application materials. By all means, I’m not an expert on evaluating researchers but I do think we can assess without mandating. It may be that mandates for preprints end up producing different results to those in OA. On this point I was really looking at this simply from Poynder’s argument in OA, not my opinion on mandates. That said, I have heard increasing complaints from researchers that they are just being given more and more compliance/admin burden. One definite concern I have with mandating, however, is “that such efforts do not always include the necessary education, training, or outreach”. HHMI (again) is a great example where this does happen but it’s not the norm.

Being mindful of our past missteps is precisely one of the things that led me to actually share this article (I’d been thinking about much of this for quite a while). I fear that, as a movement, we’ve tipped into forgetting some of those mistakes and that we are – at least in part – repeating them. I think it’s vital that we have some of these conversations, as difficult as they may be. I just wish more people within the movement would openly challenge some of the behaviours (that are not in keeping with the spirit of what we’re asking of others for example) and openly reflect on what is working and what isn’t/could be improved. I often feel like a lonely voice.

I’m glad to see that my scribbles on open access are still being read.

It might be worth noting, however, that while the above discussion presents the “preprint movement” as a separate and later phenomenon to the open access movement (even, it seems, a response to the failures of the OA movement) the chronology assumed would seem to be back to front. In fact, the preprint model (as practised online) dates from the launch of arXiv in 1991, eleven years before the OA movement was founded at the BOAI meeting. It was also used as the model for Stevan Harnad’s 1994 “Subversive Proposal” (eight years before BOAI) and arXiv’s apparent success was a major reason why the BOAI meeting was called. In other words, the practice of sharing preprints preceded the OA movement, not the other way round.

Does the chronology matter? I don’t know but characterising it in the way the above discussion has done gives the impression that since it came after the OA movement the “preprint movement” can succeed where the former failed – if it learns the right lessons. Yet, as Jonny Coates points out, the issues and mistakes are pretty much the same in both cases. Given that the OA movement and the “preprint movement” are in effect the same thing this is unsurprising. It is also far from clear that the research community is any more able to resolve the issues, or correct the mistakes, today than it was yesterday.

This is in part because many of the obstacles the open access movement has faced cannot be resolved by the research community on its own – not least the funding problem discussed above.

1991? Please, arXiv was a latecomer to the model. According to Lariviere et al., 2013, the use of preprints can be traced back at least as far as 1922. In the mid-1960s, the NIH ran Information Exchange groups that circulated more than 1.5 million preprints. The National Bureau of Economic Research Working Papers archive goes back to 1973. The American Mathematical Society sponsored a short-lived repository for preprints in the mid-1990s, and the Chemistry Preprint Server was around in the early 2000s. In the Humanities, the Social Science Research Network was founded in 1994 and Research Papers in Economics was launched in 1997.

Lariviere, V., Sugimoto, C.R., Macaluso, B., Milojevic, S., Cronin, B. and Thelwall, M. (2013) arXiv e-prints and the journal of record: An analysis of roles and relationships. arXiv:1306.3261

Thanks for responding David. Just to note that I said, “as practised online”. Most of the services you mention (I assume) either predated online or (judging by the dates you cite) came after 1991, although I believe the National Bureau of Economic Research Working Papers did indeed go online in 1973. But I am happy to be corrected.

My point was that arXiv predated the open access movement and was an important model for it. As such, I do not think we can fairly say that the preprint movement was a response to the failures of the open access movement. (In fact, the roots of arXiv go back to 1989 when an electronic mailing list was set up to share theoretical physics preprints, or “e-prints” as they were then called).

Really I’m referring to efforts post-2010 here – for space this does ignore the history of preprints. Preprinting of course dates back to at least the 1960s as a formal effort in the Life Sciences, and there is evidence of even earlier efforts in other fields (1920s/30s). There were attempts through the 1990s and early 2000s, but for the Life Sciences it wasn’t until the 2010s that preprints really became a viable effort. There’s also an argument for looking at the earlier failures of preprinting, but I would argue that those lessons have generally been learned.

Mainly, I thought you broke down OA in a pragmatic way that would be a useful exercise to apply to my own area of focus. It helped me shape some of the concerns I’ve had and also to take that step back (as I actively advocate for preprint adoption and so can, at times, suffer from being too close to it).

UNQUANTIFIED BENEFITS

Quite right David. In the 1960s my PhD supervisor in the UK had the NIH Information Exchange papers piled up in his office. I dipped into them regularly. An immediate thought was of those in laboratories world-wide that were excluded. Now you can dip in for free with a cell-phone, whether in a laboratory or in a Himalayan village!

Not only that. You can contribute from afar and, surprise, surprise, soon you are receiving emails from others (such as “retirees” like me) offering advice. Sometimes that advice can take the form of a formal comment (in Preprint exchanges like bioRxiv that have a comment section). More often, I suspect, it is a private interaction that is not calibrated by those seeking to quantify (in terms of advancing knowledge or publisher profits).