(This post is based on a presentation given at the 6th World Conference on Research Integrity, in Hong Kong, June 2019.)

My objective with this small research project was to get an idea of whether (and, if so, to what extent) articles published in predatory journals are being cited in the legitimate scientific literature.

To that end, I identified seven journals that had revealed their predatory nature when they were exposed by one of four different “sting” operations, each of which had clearly demonstrated that the journal in question will (despite its public claims of peer-reviewed rigor) either publish nonsense in return for payment of article-processing charges, or take on as an editor someone with no qualifications.

I then searched for citations to articles published in these journals in three large aggregators of scientific papers:

- The Web of Science, a massive index of scholarly journals, books, and proceedings that claims to index over 90 million documents

- The ScienceDirect database of journals and books published by Elsevier, which claims to include over 15 million publications

- PLOS ONE, an open-access megajournal that has published roughly 200,000 articles in its history

(Here it’s important to note that Web of Science indexes both the Elsevier journal list and PLOS ONE, which means that findings in either of the latter two databases will represent a subset of the findings in Web of Science. Searching them separately provides additional context for the Web of Science results but does not add to them.)

In the interest of avoiding exposing both myself and my institution to possible litigation, I’m going to avoid naming these journals publicly. Instead, I’ll assign them the letters A through G. I will disclose, however, that six of these seven journals publish in the medical and biosciences — which is, itself, a matter of particular concern.

Journals A, B, C, and D demonstrated their predatory nature by falling for the “Star Wars” sting. In this sting operation, an investigator wrote a putatively scientific paper that actually consisted, in the investigator’s words, of “an absurd mess of factual errors, plagiarism, and movie quotes.” The paper purported to discuss the structure, function, and clinical relevance of “midi-chlorians… the fictional entities which live inside cells and give Jedi their powers in Star Wars.”

Despite the paper’s obviously fictional and even absurd nature, it was accepted for publication by all four journals. This demonstrates that, whatever they claim, these journals exercise no meaningful editorial oversight or peer review: they will publish anything submitted as long as the author is willing to pay an article processing charge, and will then falsely represent that article to the scholarly world as legitimate, peer-reviewed science.

Another journal betrayed its predatory nature by falling for the “Chocolate Makes You Lose Weight” sting. This sting was perpetrated by science journalist John Bohannon, who put together an actual clinical study of the impact on weight loss of eating one chocolate bar per day. By purposely using a fundamentally flawed research design and subsequently p-hacking the resulting data set, Bohannon and his colleagues were able to make it seem as if their study had demonstrated eating chocolate will make you lose weight.

The article was accepted and published (without a single word changed from its submitted draft, according to Bohannon) in Journal E.

The “Seinfeld” sting followed roughly the same parameters as the previous two, except that in this case the nonsense paper that was submitted for publication purported to discuss the fictional disorder “uromycitisis,” which was invented as part of the story line of a popular American television sitcom. This paper was accepted and published by Journal F.

The final predatory journal under examination for this project was Journal G, which agreed to take on as an editor a fictional individual named Anna O. Szust. According to the fake CV provided by the perpetrators of this sting operation, the fictional “Dr. Szust” had never published a scholarly article and had no experience as either a reviewer or an editor. Not only did this journal accept “Dr. Szust” as a member of its editorial board; amazingly, she is still listed as a member of that board today, even after her nonexistence has been widely publicized. (The name is a pun, by the way: combined with her middle initial, Szust becomes oszust, Polish for “fraud.”)

Searches were conducted in August 2018 and then repeated in October 2018 as a control. In all cases, the October results were either the same or slightly higher.

Summary of Findings

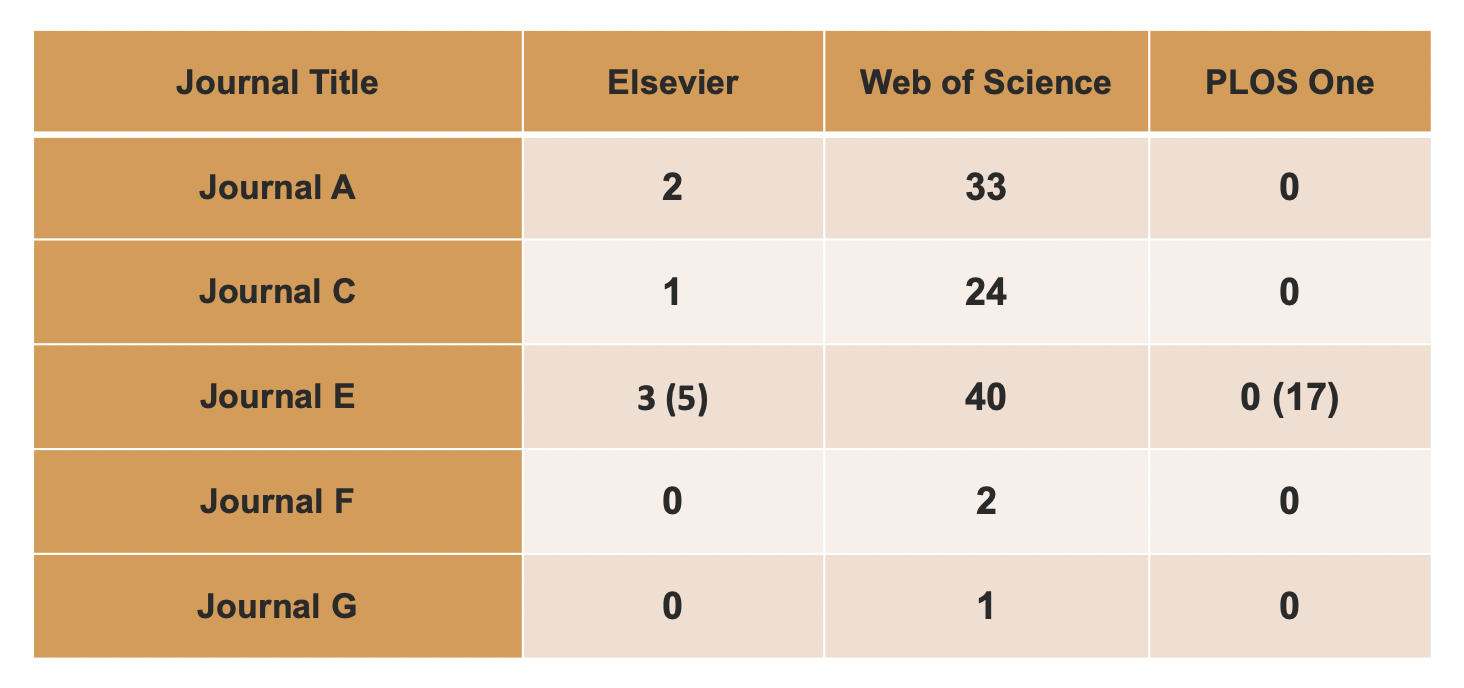

When I searched Web of Science, ScienceDirect, and PLOS ONE for citations to articles published in these seven journals, I found that two of the seven (Journal B and Journal D) had never been cited by articles in those aggregations.

Of the remaining five, Journal E requires some explanation, because it hasn’t always been a predatory journal. It was established in 2008 and published by a highly reputable open access publisher until the end of 2014, at which point it was sold to another publisher, which was the journal’s home at the time the “Chocolate Makes You Lose Weight” sting was carried out. While this journal continues to exist and its website shows volumes through 2018, it hasn’t actually published any articles since 2016. These facts will become important in the next section.

The Good News

The findings indicated in Figure 1 are mostly self-explanatory; however, some explanation with regard to Journal E is called for.

Of the three aggregations, PLOS ONE contained the fewest citations to predatory journals. The only predatory journal cited there was Journal E, which was cited in 17 PLOS ONE articles, and none of the Journal E articles they cited had been published after its sale in 2014.

Elsevier ScienceDirect contained 61 citations to Journal E in total, 31 of them in articles published since the sale. However, of those 31 citing articles in Elsevier journals, 26 cited pre-sale articles in Journal E; in two cases only abstracts were available online, making it impossible to determine the publication dates of the cited articles; and in three cases, the citations were to post-sale articles. In other words, at most five articles in Elsevier journals were found to have cited articles that Journal E published after its sale.
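The upper bound of five follows from splitting the 31 post-sale citing articles into the three groups just described and assuming the worst case for the two whose cited articles could not be dated. A minimal arithmetic check in Python, using only the counts reported above (the variable names are illustrative, not taken from the underlying dataset):

```python
# Counts reported above for ScienceDirect articles citing Journal E since its sale.
citing_since_sale = 31
cited_pre_sale = 26   # cited Journal E articles published before the sale
date_unknown = 2      # only abstracts available, so the cited article's date is unknown
cited_post_sale = 3   # cited Journal E articles confirmed as published after the sale

# The three groups should account for every citing article.
assert cited_pre_sale + date_unknown + cited_post_sale == citing_since_sale

# Worst case: both undated citations actually point to post-sale articles.
max_post_sale = cited_post_sale + date_unknown
print(max_post_sale)  # → 5
```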

The most concerning result was that Web of Science contained 40 citations to post-sale articles published in Journal E.

However, in interpreting this data, it’s again very important to bear in mind that Web of Science claims to index “over 90 million records,” while Elsevier’s ScienceDirect includes “over 15 million publications”; in both cases the indexed documents include book chapters as well as journal articles. PLOS ONE has published just under 200,000 articles, making its archive a radically smaller data set.

In any case, this table represents the good news: the predatory journals under examination have rarely been cited in legitimate publications indexed by these large compendia of scholarly and scientific literature.

However, there’s also bad news.

The Bad News

Another context in which this data should be considered is that of the predatory journals’ output itself: for example, one of them has had fully 36% of its published articles cited in the mainstream scholarly literature; another has had 25% of its articles cited. Journal E has had only 6% of its post-sale articles cited in the legitimate literature — however, as Figure 2 shows, given this journal’s prodigious output during the two years under examination, that small percentage represents the largest number of articles cited.

And it’s important to bear in mind that this study examined only seven of the most egregious predatory journals in a population of over 12,000 such publications in the marketplace.

Another particularly disturbing aspect of this data is the fact that these three predatory journals all publish in the field of medicine. The fact that these journals’ articles are being regularly cited by articles in the legitimate medical literature should give all of us serious pause.

Conclusion

In conclusion: the data from this study of seven of the most egregiously predatory journals demonstrates the ability of such journals to contaminate the scientific and scholarly discourse. This is clearly a problem.

Other important questions remain, though. These include:

- How big is the problem? What percentage of the articles published by the 12,000 or so predatory journals currently operating represents research that is so deeply flawed that it would have been rejected by competent editors and reviewers? Of those fundamentally flawed articles, how many are being cited in legitimate publications?

- How severe is the real-world impact of the problem? To what degree is bad science, masquerading as good science, undermining the quality of scientific discourse, policy formation, and medical care?

These are urgent questions that call for further research.

The full dataset on which this report is based can be found here.

Discussion

51 Thoughts on "Citation Contamination: References to Predatory Journals in the Legitimate Scientific Literature"

Interesting, and yes, potentially concerning. However I wonder how many preprints are now being cited? These may be assumed to be of similar quality to the articles published in these fraudulent journals (i.e. unreviewed, unrevised research), and their prevalence in the published literature is surely going to increase*. (I am not comparing them to predatory journals, and I know that MedRxiv is introducing submission checks and they will be adding reviews, have links to published articles etc., but as things stand now, they often have the “look and feel” of a credible/reviewed article and their easy availability makes using/citing them attractive.) (*I think some journals have a “no-preprint” policy for references?)

I wrote about this subject here:

https://scholarlykitchen.sspnet.org/2018/03/14/preprints-citations-non-peer-reviewed-material-included-article-references/

I have approached several of the groups promoting the use of preprints to see if they would be willing to help put together a set of standards for citing preprints in a manner that clearly marks them as non-peer reviewed material (as should also be the case for non-peer reviewed materials like websites, datasets, and some books). So far there’s been no uptake on their end. I do have a few journals I’ve worked with that flag all preprint citations in the style suggested by the NIH.

Nowadays, even decent journals are not that decent:

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC6558577/

In detail: www.xufangbai.com

Sorry, Xufang Bai, but I have no idea what point you’re trying to make here. Are you suggesting that the Journal of Geriatric Cardiology is not a decent journal?

Decent journals publish a lot of garbage (mates of mates etc.) and cost enormous amounts to read 2- to 25-page articles unless you subscribe or belong to a library. Nature and Science have published some shockers and never retracted them. Your research is terribly flawed without proper controls, and highly biased by selecting 7 egregious predatory journals out of 12,000 such open-access publications and condemning all 12,000 without naming the seven. I wonder who is paying you to perform and write up your flawed and ‘click-bait’ research survey. I would rather trust the authors and their citations in my field of interest, and assess the follow-up research of the cited authors, including those who publish in the many reliable and reputable open-access journals that provide a great service to science and medicine. If I saw ‘Star Wars’ in the title I would immediately pass it by and think that it’s just another fraudulent ‘sting operation’ paper, or somebody trying to attract readers with a ‘sexy’ come-over-here title. I would have preferred that you made a list and named those open-access journals that you might think are reliable and reputable, if there are any in your consideration.

The problem is increasing at an alarming rate, which is why we have decided to build a platform where researchers, publishers, and the wider academic community can check whether a journal is of a questionable nature. Fidelior is the platform we are currently testing, and from responses thus far, there is clearly a need for a system that does the hard work of checking and highlighting possibly questionable journals cited within texts. The platform is growing and more tools will be added in due time to support the academic community even more. We welcome anyone to come and have a look, test it, and see how the reporting looks inside the system.

The link to be used is: http://test.fidelior.net/#home

Just register in order to gain full system access.

Please provide us with any comments or suggestions you think would be helpful in order for us to improve the system even more.

Hallo. Thanks for Fidelior. A suggestion: we urgently need a list of predatory conferences. These conferences are more difficult to determine than journals and are springing up all over the place.

Dear Hesma, thank you for this suggestion. We, at Fidelior Research, have been looking into the matter for a while now. We know that predatory operations have strong connections to other sorts of cyber-crime. Evaluating predatory conferences is indeed a very challenging task, because there are limited resources (e.g. metrics, lists, etc.) to support decisions. We therefore hope to build a scholarly community and develop a meaningful knowledge base. Please email me at info@fidelior.net. We have compiled a comprehensive list of readings on the issue of predatory conferences, which I can share.

I fully endorse the concerns raised, but my worry is that these problems are not restricted to predatory journals. I have gathered some examples where journals from well-known publishing groups are not strictly following rigorous review, or where not all reviewers are giving the review process their full attention. Articles are being published with gross errors, and their citations have clearly questionable content. This is particularly common in what I call “kit-based” research (kits of SNPs and other gene mutations are widely used in so-called molecular and genetic studies), where investigators from entirely different fields manage to get presentable results, but their weak analytical skills make the data interpretation questionable. On the other hand, such publications provide easy material for teaching graduate students how to critically review research.

There’s no question that the publication of questionable research is a problem throughout the scholarly literature, and that a solution to that problem is urgently needed–particularly where the research has the potential to significantly impact human health. This study examined only one particular manifestation of the problem, but a particularly egregious one.

It is for exactly this reason that an electronic platform is needed. One problem is that no international standard exists for determining whether a journal is questionable. In order for the entire academic community to work together to clean out predatory practices, such an international standard is required, with criteria to determine whether a journal is following the correct procedures and peer review mechanisms. Fidelior aims to establish an advisory board of international stature to support this process and establish an international criteria standard, which will ultimately lead to policy changes at the country, university, and research levels.

Lazy journal editors (and reviewers)! One might at least wish that the editors were outed to their academic deans, who no doubt fund grad student assistants or approve faculty release time for such inattention to simple standards of citation.

Please note, though, that in the case of predatory journals the problem isn’t that their editors are lazy. The problem is that their editors are a fiction (in some cases literally, as the “Dr. Fraud” sting made clear). The entire business model of these journals is based on publishing whatever is submitted, as long as the submission is accompanied by money. So it’s not that the editing is sloppy; it’s that editing doesn’t happen, because if it did it would interfere with the revenue flow. Poorly managed journals are fundamentally different animals from predatory journals, and it’s important not to confuse the two.

I’m not sure that’s the right take here. I’ve never seen journal reviewer instructions that expect reviewers to verify that every citation is a high quality paper. I’ve certainly seen reviewers call out dubious claims that come with a questionable citation; however, I don’t think anyone expects every reference to be validated by volunteers. Further, many of these so-called predatory journals go out of their way to look like legit journals, and publishers do no favors by abbreviating journal names to within an inch of their lives.

Overall, I agree that given the level of fraud and concern, more attention should be paid to citations, particularly as it is the currency of scholarly publishing: https://scholarlykitchen.sspnet.org/2017/10/05/turning-critical-eye-reference-lists/

Wouldn’t it make sense to have a public database (or 10…) that kept track of papers flagged as invalid? Not just “we don’t like it”, but “proven false”, “proven misleading”, “faked data”, “retracted”, and such. Proof of the claim would, of course, have to include supporting citations.

There are obvious issues with such an approach (there is more than one way to commit fraud), but (like Wikipedia) a consensus approach can be taken as a start, with the understanding that politics can be involved. And having multiple, independent databases/references would tend to ameliorate the ‘faux consensus’ problem.

This does not require that all papers be included; only that retracted and/or disproven papers be added. Note that “disproven” does not mean “superseded”. We wouldn’t want Newton’s laws of gravity and motion to be classified as “disproven” just because Einstein was more correct.

There should also be an appeal process, of course.

Nor am I advocating a new government agency to maintain such a dataset, although the government having its own such list would not be ruled out. I personally don’t want any government (or single academician) determining “truth”. This is something that universities (like Harvard and Yale, with their billions) should be expected to finance, “charitably” and preferably through independent third parties (like maybe Wikipedia itself?)

This is just off the top of my head. And yes, I know how likely this is, and how likely it is to be done appropriately. I’m not holding my breath.

The solution may not be as simple as finding a means to screen out citations from questionable journals. What about the problem of quality work that was submitted by mistake to questionable journals by the junior author on a reputable research team? Especially during 2012-16, a substantial number of reputable biomedical researchers unknowingly submitted to questionable journals. Hundreds and hundreds of publications in OMICS Group journals arose from federally (or Europe PMC Funders group) funded scientists, as shown here proudly by OMICS: https://www.omicsonline.org/NIH-funded-articles.php

This particular list is just a *subset* of federally funded OMICS Group publications where the authors took the time to submit their published manuscripts to PMC (under public access policies); for this subset, the manuscripts are now permanently archived and discoverable because they show up in PubMed searches, and may be cited. PubMed may have played a role in building up the reputation of some questionable journals, by not flagging them or by listing them as published in the USA when they are well known to be published in India.

The following URL shows, as of today, 128 federally funded studies archived in PMC from a single OMICS journal alone — the Journal of AIDS & Clinical Research — with many authors representing prestigious research institutions:

https://www.ncbi.nlm.nih.gov/pubmed?term=%22J+AIDS+Clin+Res%22%5Bjour%5D

If you click on the articles, you may notice important researchers in the field of HIV/AIDS; and that many of these publications were subsequently cited by articles in reputable journals. Should reputable journals be excluding such citations? Should editors tell the authors that they cannot cite their own work or the work of their peers if published in a questionable journal?

Should the NLM list this journal publisher as in India instead of allowing them to falsely claim Sunnyvale, California? https://www.ncbi.nlm.nih.gov/nlmcatalog?term=%22J+AIDS+Clin+Res%22%5BTitle+Abbreviation%5D

Finally someone did the study! Thanks, Rick. All these years of hand-wringing about predatory journals & nobody had any idea if those papers were ever being cited. Thanks also for using solid criteria for predatory rather than that questionable old list.

Now we have a little data indicating that there are a handful of authors of legit papers that cite predatory journals. The question that comes up immediately is whether those authors actually read the articles they’re citing (or were the citations added in as part of a too-shallow lit review). I would also be very interested in what the citation patterns would look like extended to a larger set of poorly or not-at-all edited publications.

Yup, agreed on both counts. Though I’d caution (again and again and again) against conflating the problem of poorly-edited or low-quality journals with the problem of genuinely fraudulent ones. I’m not saying there’s no fuzziness at all in the boundary that separates them, but we are actually talking about two different classes of problem: one is high quality vs. low quality articles, and the other is honest vs. dishonest publishing practices. A journal that is operating in a fundamentally honest way but not doing a great job represents an entirely different class of problem from that represented by a journal that offers fake credentialing for money.

It would be interesting to see the articles that were citing these obviously false articles. I struggle to think of any reason why somebody would cite a paper on something as obviously bogus as the examples above.

What concerns me are the articles published in predatory journals that address very real topics but are poorly done or false, and aren’t as obviously bogus. IMHO those have a greater chance of getting cited by researchers than the articles on midichlorians and uromycitisis, and a greater potential to taint research.

Whoops, OK, after re-reading I see that you weren’t specifically looking for citations to those bogus articles. You were looking for any article that cited an article published in the journals that also published those bogus articles. I read too fast.

Still it taints our research that we use to treat people.

Is it okay to review a manuscript submitted to a predatory journal?

It’s your choice, obviously, but if you’re confident it’s a predatory journal, then my advice would be not to support it in any way.

Is it clear whether these citations include research critical of the articles published in predatory journals? Or are these citation numbers references to these works regardless of how they are evaluated?

Apologies, but I’m not sure I understand the question. Can you clarify?

I think the point that Emily is trying to make is the same question I have about this study: you can cite a paper to support it and say it’s great, or you can cite a paper to discredit it. Both count as one citation in your analysis. Did you look into the context around the citations to see which category these fall into? There is at least some hope that scholars are calling out bad work in the literature. I also wonder how many are self-citations.

Thanks, Martyn. That is indeed what I was getting at.

As I recall from seeing presentations by scite.ai (a startup looking to use AI to determine the nature of citations), nearly all citations are “neutral” in nature, a very small percentage are “positive” or “supportive”, and a very very tiny percentage are “negative”. While it would be interesting to run their algorithms on this data set, I suspect it may not deviate much from the norm.

Regardless, it raises the question of citation practices, and whether one should be citing, even in a negative manner, things that one knows haven’t been peer reviewed? Traditionally, the practice is that anything not peer reviewed should not be listed in a paper’s citations (they can be mentioned as things like “personal communications” instead). I discussed this at length here: https://scholarlykitchen.sspnet.org/2018/03/14/preprints-citations-non-peer-reviewed-material-included-article-references/

Is an author required to cite any random rant or piece of doggerel one finds on the internet that contradicts the reality of one’s findings? If someone hands me a deranged flier on the subway claiming odd things about the causes of cancer, should I be citing that in my formally published epidemiology paper?

While the concerns are valid and this research is worthwhile, it raises another, often ignored, issue:

Simply that occasionally, good research may inadvertently be published in a predatory outlet. It is very likely that those who cite such works and even the reviewers have checked the source and found the material credible and sound despite the shady character of the publisher.

This shows that ultimately each article may be evaluated on its own merit and not solely based on the publication outlet. Yes, peer review is important, but it is not necessarily without occasional imperfections either.

I have been working with journals for almost 20 years and I can’t think of an occasion where the peer reviewers carefully went through the references of a paper to determine the credibility of the cited article. Peer reviewer time is limited and this level of checking is generally not expected.

Which is why we need an automated tool to flag questionable references for further investigation:

https://scholarlykitchen.sspnet.org/2019/09/25/fighting-citation-pollution/

I think perhaps your reply misses the point. As Dr. O. S. Aasaolu says, “occasionally, good research may inadvertently be published in a predatory outlet”. I have noticed plenty of examples of legitimate, but generally boring research published in questionable journals, including the ‘OMICS Group’ as mentioned above.

Hence regardless of whether peer reviewers notice or not, such citations may still be valid and necessary.

It is primarily the job of the authors of the manuscript to judge the quality of the research they cite. If the authors cite low quality or potentially fraudulent studies, then the quality of the manuscript should be judged accordingly – by the reader! I have also noted countless examples in reputable journals where citations do not support the arguments being made by the authors, along with simple errors in citation (wrong studies being cited). A tool for peer reviewers that flags citations of predatory journals might not make any difference at all, since the questionable/fraudulent research isn’t being cited, except as examples of questionable research.

Peer review is not something that starts and ends before publication.

It is a big mistake to assume that, just because a manuscript is published, even in a reputable journal, nothing funny has gone on. (Common examples are HARKing, outcome switching, and publishing research involving children without ethics approval – the latter of which involved journals published by the BMJ, Oxford University Press, Springer Nature and Elsevier.)

Agree. The “concerned too” reply above provides links to substantive examples of good research in OMICS journals which now appear in PubMed and are cited by others in legit journals (also in PMC). No commenter has provided advice on how journals might deal with this problem of good work in bad journals.

As has been noted elsewhere, the proposed solution is a service that scans reference lists for known offenders.

https://scholarlykitchen.sspnet.org/2019/09/25/fighting-citation-pollution/

Those references get flagged for further attention, which means either the editors can evaluate them or they can query the authors about them. Seems like a reasonable approach that would allow legitimate research to get through where justified.

The idea that I (and others) have proposed is to flag any citations to journals that have proven that they do not perform peer review. Once flagged, they can be reviewed by the editors or brought to the attention of the authors for justification. Then the authors and editors can make that judgement call.
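A minimal sketch of how such reference-list screening might work, assuming each reference has already been parsed into a record with a journal title and that a curated watchlist of journal titles and abbreviations exists. The function names, the record format, and the journal names below are hypothetical; this is not Fidelior's tool or any existing service. Titles are normalized before comparison because, as noted above, heavily abbreviated journal names make naive string matching unreliable:

```python
import re
import unicodedata


def normalize_title(title: str) -> str:
    """Lowercase a journal title, strip accents and punctuation, collapse spaces."""
    text = unicodedata.normalize("NFKD", title)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^\w\s]", " ", text.lower())
    return " ".join(text.split())


def flag_references(references, watchlist):
    """Return (position, journal) pairs for references whose journal is on the watchlist.

    references: list of dicts with a "journal" key (hypothetical record format).
    watchlist: titles/abbreviations of journals shown not to perform peer review.
    """
    known = {normalize_title(title) for title in watchlist}
    flagged = []
    for position, ref in enumerate(references):
        journal = ref.get("journal", "")
        if normalize_title(journal) in known:
            flagged.append((position, journal))
    return flagged


# Hypothetical example data; these journal names are invented for illustration.
watchlist = ["Journal of Imaginary Findings", "J Imaginary Find"]
references = [
    {"journal": "J. Imaginary Find."},
    {"journal": "Nature"},
]
print(flag_references(references, watchlist))  # → [(0, 'J. Imaginary Find.')]
```

Matches are only flagged, never rejected, in keeping with the proposal above: an editor or author still makes the judgment call. Exact matching on normalized titles will miss any abbreviation not on the watchlist, so a production service would likely need a much more complete mapping of title variants.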

“It is a big mistake to assume that, just because a manuscript is published, even in a reputable journal, nothing funny has gone on.”

The difference being that legitimate publishers make an honest effort to weed out such manuscripts and when errors are found, have a process in place for corrections and retractions. The publishers being discussed here make no such efforts.

For what it’s worth, I’ve started including a citation review as part of what I do whenever I serve as peer reviewer for an article. I don’t go to the extent of reading every cited article and trying to analyze its quality, but I do examine all cited journals to see if they appear legitimate, and I include my findings in my report.

Interesting. Is it known what percentage of these citations are author self-citations?

What is the percentage of scientific research in the last decade that is peer-reviewed?

Australia and India threw out thousands of research papers. Most of the countries that research comes out of have a high level of discrimination in society, and scientists come out of these social environments. Have they had any training in removing prejudicial biases in order to produce unbiased research? Do they know Immanuel Kant’s Critique? Science has eradicated religion (catechism helps to teach practitioners to remove prejudicial biases) and now carries all of the irrationality and vulnerabilities of untrained human beings that it once ascribed to religion.

When will North America have a scientific review of science itself? The third most quoted psychologist just became the second most revoked psychologist with a possibility of becoming the first.

When will science publish only research that is peer-reviewed, or clearly flag non-peer-reviewed research in its articles? Corruptibility increases the higher the intelligence quotient. Corruption is highest in the top universities. Science is losing its credibility. Where does science begin transparency?

Journal citations are an increasingly outdated metric for this and other reasons. They arose from an era where the friction of paper-based distribution enabled a trust-based infrastructure. In a world of friction-free, open dissemination we need to transition to new techniques based on verification, attribution, and network analysis to reach conclusions about authenticity and value. It’s not clear that retrofitting citations or building new human-curated journal authority lists will be potent enough for our era.

Richard Wynne

Rescognito, Inc.

Citations, however, do serve a purpose beyond driving metrics that allow academics to abdicate their responsibilities as far as evaluating candidates for jobs, tenure, and funding. They are (as argued here https://scholarlykitchen.sspnet.org/2016/11/07/does-democracy-need-footnotes/), “about the productive and persuasive relationship of evidence to argument.”

Diluting the scientific record is the issue here, not the pernicious side effect that has on metrics.

Our team has studied predatory publishing in nursing. Concerned about the dissemination of information from predatory journals into the legitimate nursing literature, we analyzed the citations to articles published in 7 predatory nursing journals. These 7 journals had published the largest number of articles in nursing, and in earlier studies we confirmed that they had questionable peer review and other editorial practices. Our medical librarian developed a way of searching for each predatory journal's title in the reference lists of articles indexed in Scopus. We found 814 articles in legitimate nursing journals that cited at least one article from a predatory nursing journal!

(Our study: "Citations of articles in predatory nursing journals," Nursing Outlook, https://doi.org/10.1016/j.outlook.2019.05.001 [ahead of print].)

Thank you, Marilyn. My colleagues and I also recently wrote a blog post in which we referenced 7 citation pollution studies, including one of your papers, and summarized the key research findings on the topic: http://test.fidelior.net/2019/10/15/predatory-journal-papers-are-permeating-and-polluting-the-scholarly-system-via-citations-how-serious-is-the-problem-what-can-be-done/

Indeed, there is a need for more studies that show the extent of citation pollution in different subject disciplines. We hope that Fidelior’s Citation Reports tool will assist researchers in their analyses of pseudo-science citations, and encourage more research on the issue.

In a world of friction-free, open dissemination we need to transition to new techniques based on verification, attribution, and network analysis to reach conclusions about authenticity and value. It’s not clear that retrofitting citations or building new human-curated journal authority lists will be potent enough for our era.

Seems like this is turning into a losing cause. Rick, in this post, you decided not to even say the names of your 7 studied predators, Beall folded his list apparently after he or his university tired of threats and harassment, and Cabell’s list isn’t available to individuals.

I'm not sure I'd describe it as a "lost cause," but it is certainly one that is fraught with legal risk. Beall's list no longer exists because of lawsuits threatened by publishers he had deemed "predatory." Rick, as someone who has faced legal threats for his previous analyses (https://scholarlykitchen.sspnet.org/2013/04/03/ssp-board-decides-to-reinstate-removed-posts/), seems prudently cautious in not wanting to get caught up in further entanglements. Cabell's list is not given away for free because it needs to be paid for somehow (including covering those potential legal liabilities).

I think the question is really whether the community sees predatory publishing as problematic enough to invest funding in addressing it. Legitimate publishers don't seem to see much ROI in doing so (other than when a predator infringes on or tries to falsely represent one of their titles). No funders seem willing to step up and cover the costs of an open list or other approaches, and neither have universities or libraries.

I’ll just add a couple of things to David’s response:

1. Anyone who wants to see the names of the journals in question should follow the link I’ve provided to my full dataset, which includes that information. I just didn’t want to put their names in the public blog post, because some predatory publishers are quite litigious. (Of course, you can also follow the links I’ve provided to the write-ups of the relevant “sting” operations, each of which identifies the journals that were caught.)

2. I don’t think it’s true that Cabell’s Blacklist isn’t available to individuals. If you were to contact them and request a quote for an individual subscription, my guess is that they’d be happy to provide one.

Cabell's Blacklist is a start, but I am frustrated by them. I have a hard time judging the quality of their Blacklist when their Whitelist is missing so many journals. We were looking at linking the Whitelist to our publishing page so that authors could use one site to see acceptance rates, type of review, time to publication, and so on. Unfortunately, the Whitelist lacks a great many legitimate titles. A simple search for JAMA reveals only JAMA Psychiatry and no other AMA publications. When asked about this, Cabell's responded that their "Whitelist does not currently cover medical subjects, that's the only reason why those titles and thousands of other good quality journals are not yet indexed." I realize they are working on it, but it also makes me question how complete their Blacklist is.

My belief is that citations of articles are real ingredients of any research output, and they can be criticized or supported openly, to the detriment of the authors' credentials rather than the publishers' (the money makers, so to speak).

Over the next few weeks we’ll be updating our citation extraction API to provide an option to flag citations to suspect sources. It’ll work with Word, PDF and XML files, and Web pages.