Editor’s note: Today’s guest post is by Ben Kaube (Cassyni) and Steve Smith (STEM Knowledge Partners). Reviewer credit to Chef Alice Meadows.

Last summer, Rob Johnson and Sarah Greaves published a sobering analysis: society publishers are at a crossroads, facing declining revenues, rising costs, and pressure to consolidate. Their data is hard to argue with. Since 2019, over half of society publishers have seen journal revenues decline in absolute terms. Smaller portfolios and biomedical fields have been hit hardest. The trajectory is real.

This crisis narrative emphasizes what societies lack: scale, technology investment, and resources to compete with well-capitalized commercial players. All true. But it can obscure what societies have that others don’t, and what becomes more valuable, not less, when the information environment gets noisy.

We write this as outsiders of a particular kind: between us, we have spent decades in scholarly publishing, but neither of us currently works inside a society. That distance has limitations. But it also offers a different vantage point.

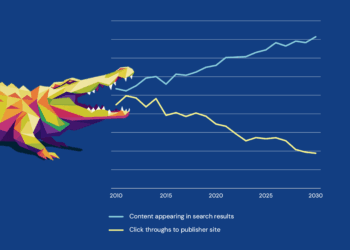

In our previous piece, “When the Front Door Moves”, we described how AI discovery tools are routing researchers away from publisher websites toward assistants that summarize without attribution. Stack Overflow was our cautionary tale: traffic collapsed, engagement followed, and a once-vibrant community hollowed out within months. But Stack Overflow was a platform. It hosted a community, and for a time, a vibrant one, but the relationships were incidental to the product. Developers came for answers, and when answers arrived faster elsewhere, most left without looking back.

Societies are different. Members build relationships with each other, reputations within the community, and professional identities tied to the field. A researcher who has attended the same conference for 15 years, served on committees, reviewed for the journal(s), mentored students through the society’s programs, and built a reputation within that specific community has something at stake that goes beyond engagement metrics. That’s membership. And membership is sticky in ways that platform usage never was.

The Value of Trust in a Noisy World

This distinction matters because the AI disruption story cuts differently depending on what you’re actually offering.

Content is exposed. AI summarizes it, strips attribution, flattens context, and delivers answers without directing anyone to your website. The more efficient these tools become, the less reason anyone has to visit the source. For publishers whose value proposition is primarily “we have the content,” this is an existential problem.

Community is harder to disintermediate. The relationships built through years of participation, the reputation that comes from knowing who reviewed a paper or organized a session or mentored an early-career researcher, the trust that accumulates when people show up repeatedly and put their names behind judgments: none of this survives the journey through an AI intermediary. You cannot summarize a relationship. You cannot aggregate trust into a chatbot response.

And trust becomes more valuable, not less, when the information environment degrades. The more noise enters the system, the more researchers will retreat to sources with human accountability. The question shifts from “what does the evidence say?” to “who is telling me this, and why should I believe them?”

When a researcher needs to know whether a controversial finding has been replicated, they don’t just want an AI summary of the literature. They want to know what the people who actually work in that area think, and whether those people have track records that warrant attention. That’s a question that only a functioning community can answer.

Societies have been building the infrastructure for exactly this kind of trust for decades. They just haven’t always recognized it as their core asset.

What Societies See That Others Don’t

Societies engage researchers across decades, not transactions.

The touchpoints are more frequent (annual meetings, seminars, committee cycles), broader in scope (publications, events, service, professional development, awards), and more informative than download logs or submission portals. A commercial publisher might know that someone submitted three papers last year. A society knows that the same person gave their first conference talk in 2015, joined a working group in 2018, started reviewing in 2020, and is now being discussed as a potential associate editor. That longitudinal view, combined with strong norms around research integrity, creates differentiation that citation metrics cannot capture.

Societies also see participation in ways that surface expertise beyond the published literature. Publishers track submissions and citations. Societies see who presented early-stage work, who reviewed carefully, who mentored newcomers, who organized conference sessions. They may not yet capture this information systematically, but the visibility is there. These signals can identify emerging experts, reliable reviewers, and future leaders years before their publication record catches up. AI discovery tools currently lack this layer, and societies are positioned to provide it.

There’s also a fairness upside: societies can surface contribution signals that aggregate metrics and generalist models often miss, especially outside Northern, English-language publication channels.

Because this engagement is repeated and reciprocal, it compounds into something harder to replicate: trust. Members participate more openly when they know the community; societies enforce norms more effectively when violating them carries reputational cost; and the resulting signals carry credibility because they come from people with skin in the game.

These are precisely the signals that funders, institutions, and AI systems are currently missing, and that societies are uniquely positioned to provide.

Where Society Value Actually Lies

If journals become commodity inputs to AI systems, what’s left?

The traditional view puts the journal portfolio at the center: impact factors, submission volumes, contract renewals. That made sense when journals were the primary way in which researchers encountered the society. But if AI intermediaries increasingly stand between researchers and content, the journal risks becoming raw material for someone else’s product. The society’s visibility depends on what it offers beyond the PDF.

But there’s another way to see this. What if the center of gravity isn’t the journal portfolio but the community itself: the web of relationships, contributions, and participation that only societies can see across journals, meetings, membership, and service roles? One researcher might be simultaneously an author, a reviewer, a conference presenter, a committee chair, and a mentor. Today, these are often separate records in separate systems. The societies that link them will have something no AI platform can replicate: a structured, credible account of who actually contributes to a field and how.

This is not just an answer to AI disruption. It’s an answer to a question societies have been wrestling with for years: what is their distinctive role in the research landscape? The answer has always been there. Societies are where researchers who care about the same problems come together to discuss, share, and collaborate. AI just makes it urgent for societies to demonstrate that they are the answer.

The 2030 Opportunity

The practical opportunity is to make human judgment visible in ways that AI systems can recognize but cannot replicate.

Create “society verified” signals. Right now, AI systems surface experts primarily through publication and citation signals. Societies see a richer picture: awards, service, conference organizing, and mentorship. Making these signals machine-readable positions societies as trust authorities in the AI ecosystem, not just content sources. And the infrastructure already exists: for example, ORCID records can capture service roles, editorial positions, and other contributions, yet few societies make systematic use of this. When an AI assistant answers “who are the leading researchers on X,” societies that have structured their contribution data can ensure their community’s expertise is visible, with attribution intact.

Capture the conversational layer. The published article is polished and hedged, but the really interesting stuff often happens around it: the Q&A after a talk, the pushback in a seminar, the debate about what the paper doesn’t say. Recording, transcribing, and linking these conversations to related papers creates a layer of signal found nowhere else in the formal literature. It also provides something AI-generated summaries cannot: real experts interrogating real claims, with names attached.

Position community experts as curators. In a world flooded with content, the bottleneck shifts from production to curation. Societies already have recognized experts with reputations to protect. Invite them to write commentaries, compile reading lists, and contextualize emerging work. These curators offer something AI cannot: not just accountability (their reputation is on the line) but taste. They know what’s genuinely interesting versus merely publishable, what the field should pay attention to even if the citation counts are modest, and what represents a real shift versus incremental progress. AI can aggregate; it cannot tell you what matters.

Recognize editors as community influencers and leaders. Special collection editors are often underutilized: lending their name but not deeply involved. That’s a missed opportunity and, in the wider publishing landscape, an integrity risk. Special issues have become a vector for low-quality and papermill content precisely because editorial oversight is thin and reputations aren’t truly on the line. Society-run collections are better positioned to resist this: the editors are known to the community, their judgment is visible, and their reputation extends beyond a single publication. When an editor identifies an emerging topic, assembles a collection that shapes how the field thinks about it, and puts their reputation behind the selection, they’re acting as an influencer in the proper sense. They’re setting direction and expressing the kind of taste that shapes long-term research trajectories. Societies should make this role meaningful: give these community editors real curatorial scope, make their contributions visible at society events and in member profiles, and treat this work as part of the scholarly record alongside authorship. Recognition creates both reward and accountability.

Connect content across formats. Link talks to papers bidirectionally: when a member publishes, surface any related conference presentation; when someone watches a talk, show the papers that were discussed. Go further by hosting AMAs with authors and editors, and let members follow topics or contributors across journals, meetings, and seminars. The goal is an integrated experience where content types reinforce each other rather than sitting in silos.
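As a rough illustration of what "bidirectional" means in practice, here is a minimal sketch in Python. The `LinkIndex` class and the identifiers used are hypothetical, invented for this example; a real implementation would sit on top of whatever platform a society already uses.

```python
from collections import defaultdict


class LinkIndex:
    """Hypothetical two-way index between talks and papers."""

    def __init__(self):
        self._papers_for_talk = defaultdict(set)
        self._talks_for_paper = defaultdict(set)

    def link(self, talk_id, paper_id):
        # Record the relationship once, in both directions.
        self._papers_for_talk[talk_id].add(paper_id)
        self._talks_for_paper[paper_id].add(talk_id)

    def papers_for(self, talk_id):
        # What to show someone who has just watched a talk.
        return sorted(self._papers_for_talk.get(talk_id, ()))

    def talks_for(self, paper_id):
        # What to surface when a member publishes or reads a paper.
        return sorted(self._talks_for_paper.get(paper_id, ()))


# Made-up identifiers, for illustration only.
index = LinkIndex()
index.link("talk-2024-017", "paper-A")
index.link("talk-2024-017", "paper-B")
index.link("talk-2023-101", "paper-A")
```

The point of the design is that a link is entered once and can be read from either side, so the talk page and the article page never drift out of sync.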

Why this matters for financial sustainability. Societies that can show who contributes to a field, and how, bring something to publishing partnerships that doesn’t come from scale alone. They can offer libraries differentiated products beyond content access, along with credible data on expertise and contribution that funders can use to support better assessment. They create sponsorship opportunities anchored in high-trust community channels rather than generic impressions. And they make society journals more desirable places to publish, because authors know their work will reach and be recognized by the community that matters to them. The community advantage is not separate from financial sustainability; it is the foundation for it.

Where to Start Now

None of this requires wholesale transformation or building your own AI stack. For most societies, this is a matter of priority and partnership, not massive technology investment. A few concrete moves can build momentum.

Build the data spine. Create a basic layer that links membership, authorship, reviewing, and meeting participation in a single view. Even a rudimentary version reveals patterns that siloed systems hide, and it positions the society to answer questions about its community that no one else can. This data is a strategic asset, so treat it as one: ensure contracts with partners include clear terms on data portability and governance, so the society retains the ability to use and build on what it learns about its community.
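To make "a rudimentary version" concrete, here is a minimal sketch in Python. It assumes each system can export records keyed by a shared person identifier (an ORCID iD would be a natural candidate). The function names, field names, and sample values are all hypothetical placeholders, not a description of any real society's systems.

```python
from collections import defaultdict

# Hypothetical exports from four separate systems. The person identifiers
# and DOIs are made-up placeholders, not real records.
membership = [{"person_id": "person-001", "member_since": 2015}]
authorship = [
    {"person_id": "person-001", "doi": "10.1234/example.1"},
    {"person_id": "person-002", "doi": "10.1234/example.2"},
]
reviewing = [{"person_id": "person-001", "year": 2020}]
meetings = [{"person_id": "person-002", "meeting": "Annual Meeting", "role": "speaker"}]


def build_spine(**sources):
    """Merge per-system records into a single view per person."""
    spine = defaultdict(lambda: {name: [] for name in sources})
    for name, records in sources.items():
        for record in records:
            details = {k: v for k, v in record.items() if k != "person_id"}
            spine[record["person_id"]][name].append(details)
    return dict(spine)


def roles(profile):
    """List the systems in which a person appears at least once."""
    return sorted(name for name, items in profile.items() if items)


spine = build_spine(
    membership=membership,
    authorship=authorship,
    reviewing=reviewing,
    meetings=meetings,
)
```

Even this toy version answers a question no single system can: `roles(spine["person-002"])` shows an author and speaker who is not yet a member, which is exactly the kind of pattern siloed records hide. The hard part in practice is agreeing on the shared identifier, not the merge itself.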

Treat talks as first-class knowledge objects. Pick a flagship meeting and ensure that every presentation has a persistent identifier, structured metadata, and clear permissions. Not “we recorded the sessions,” but talks as knowledge objects that can be discovered, cited, and connected.
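One way to picture a talk as a knowledge object is as a structured record that can be checked for completeness before it is published. The sketch below is illustrative only: the `TalkRecord` class, its fields, and the sample values are assumptions made for this example, not an established metadata schema.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class TalkRecord:
    """Hypothetical minimal metadata for one recorded presentation."""

    identifier: str      # persistent identifier, e.g. a DOI (placeholder below)
    title: str
    speakers: List[str]
    meeting: str
    license: str         # the "clear permissions" part
    related_papers: List[str] = field(default_factory=list)

    def missing_fields(self):
        # Fields that must be non-empty before the talk counts as citable.
        required = ("identifier", "title", "speakers", "meeting", "license")
        return [name for name in required if not getattr(self, name)]


# Made-up sample values, for illustration only.
complete = TalkRecord(
    identifier="10.1234/talk.example",
    title="An example talk",
    speakers=["A. Researcher"],
    meeting="Flagship Meeting",
    license="CC BY 4.0",
)
recorded_only = TalkRecord(
    identifier="",
    title="An example talk",
    speakers=["A. Researcher"],
    meeting="Flagship Meeting",
    license="",
)

# A machine-readable form that other systems could consume.
exported = json.dumps(asdict(complete))
```

Here `recorded_only` is the "we recorded the sessions" case: the video exists, but without an identifier or stated permissions it cannot be reliably discovered, cited, or connected.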

Offer visibility as a publishing benefit. Give authors who publish in society journals a seminar slot, a “behind the paper” feature, or inclusion in curated collections. Make the society journal the place where your work gets seen by the community, not just indexed.

The forces reshaping scholarly publishing are real. AI discovery will continue to erode traffic to journal websites, technology costs will keep rising, and some consolidation is probably inevitable. But the conclusion that societies are destined to lose this game only follows if you accept that the game is about content delivery and platform scale. It isn’t. The game is about trust, and trust is what societies have been quietly accumulating for decades.

The risk is not that AI replaces societies. The risk is that societies fail to recognize the value of what they have and what they are. The task now is making that trust legible to the systems reshaping how researchers discover and evaluate knowledge.

Discussion

8 Thoughts on "Guest Post — Societies 2030: The Community Advantage in an AI-First World"

Thanks for the post, Ben and Steve, and for the call back to our analysis. I think you’re right to draw attention to the opportunity for societies to highlight and leverage the interconnected nature of their communities. The caveat is that for societies with large publishing portfolios the number of authors will be an order of magnitude larger than the number of engaged society members, and the majority of those relationships will be purely transactional. I am sure there is untapped potential to convert some of these authors into reviewers, speakers, mentors and so forth, but does the vision you set out mean accepting a reduction in breadth of engagement in favour of depth? Or can these engaged and more transactional models continue to co-exist?

Thanks Rob, and also for the original analysis that prompted a good deal of our thinking here. You are absolutely right to flag the scale mismatch. For larger societies, the author base will often vastly exceed the engaged membership, and many of those relationships will remain essentially transactional.

I do think the two layers can coexist. The question is less whether societies must choose breadth or depth, and more which layer carries the strategic weight. A broad author base still matters, but the engaged layer, reviewers, speakers, mentors, committee members, is the harder-to-replicate asset, and potentially the thing that makes the transactional layer more attractive as well. Authors often want to publish where their work will be seen, discussed, and valued by the community that matters to them.

So I would frame it less as a trade-off between breadth and depth, and more as a question of whether societies can build a better pathway from one to the other. The publishing operation does not have to shrink, but the community layer arguably needs to become more legible and more central to how the society understands and presents its value.

As you imply, the practical challenge is making that conversion pathway real: identifying potential contributors within the author base, recognising participation beyond publication, and making those signals visible. That is where we suspect there is genuine untapped potential.

Thanks for the thought-provoking post, Ben and Steve. Lots to chew on here.

Your points around building the data spine really resonate. The reality for a lot of society publishers is that data sets are isolated from each other. While I completely agree with the idea of combining these, the reality of doing so is deeply challenging, requiring investment, expertise (which can be lacking) and joined-up thinking between often siloed departments. All too often publishing units aren’t connected to other units, and don’t speak the same language or even share the same approach to value realization.

My (albeit optimistic) hope is that the AI transformation provides the catalyst for change, whether through business model disruption or the discovery of revenue upside.

Certainly societies, and their publishing partners, have a clear role to play in the AI future, even if the pathway there is potentially rocky.

Thank you, Chris. I share your optimism, even if many societies do not yet have the muscle memory for this kind of cross-departmental collaboration.

Part of the reason we framed the piece as Societies 2030 is to acknowledge that this will be a journey. It will require change across data, platforms, teams and incentives, but publishing partners and other vendors can help societies navigate that change more easily.

Enjoyed reading this piece, Ben and Steve. It aligns with a few thoughts I have had about the society space.

Chris mentioned silos; I think that is one of the biggest challenges facing societies. Another is thinking of meetings as a one-time event and not a continuous process where the sharing and learning never ends. There is also a lack of data collection beyond what is published or presented, and personalization is what users want (not one size fits all). Societies need to mimic what has disintermediated them: social media and professional networks.

Thanks Jay, you’ve put your finger on several of the core issues: silos, weak data collection outside of the formal publication process, and the habit of treating meetings as isolated events. And yes, personalization matters here too. Researchers increasingly expect experiences that reflect their interests and role in the community. If societies can build more continuous and personalized ways for members to engage, they may have a stronger hand than they sometimes realize.

One empirical way to quantify the strength of this society advantage would be to compare reviewer agreement rates across society and non-society journals – the overall narrative is that nobody wants to review any more, but one would hope/expect that society journals are the best placed to tap the goodwill in their surrounding community to find willing reviewers.

Hi Tim, thanks for this. Yes, if societies do have a genuine community advantage, one place you might expect to see it is in reviewer acceptance and participation rates, especially over time. That said, I suspect a number of other factors also come into play, including the field, journal tier, article topic, and editorial setup. But as an empirical test of whether “community goodwill” translates into something measurable, reviewer willingness and retention would be a very interesting place to look. I have long suspected that where researchers feel a stronger connection to a journal, they are often more engaged, more responsive, and more willing to help out. More broadly, it points to the same underlying question: can societies convert their community relationships into stronger editorial and participation pipelines than other models can?