Let us cast our minds back to 2003, and to a quote from John Battelle that summed up neatly why Google and a slew of other tech companies down in the Valley would go on to command the astronomical valuations that persist to this day:

The Database of Intentions is simply this: The aggregate results of every search ever entered, every result list ever tendered, and every path taken as a result. It lives in many places, but three or four places in particular hold a massive amount of this data (ie MSN, Google, and Yahoo). This information represents, in aggregate form, a place holder for the intentions of humankind – a massive database of desires, needs, wants, and likes that can be discovered, subpoenaed, archived, tracked, and exploited to all sorts of ends. Such a beast has never before existed in the history of culture, but is almost guaranteed to grow exponentially from this day forward. This artifact can tell us extraordinary things about who we are and what we want as a culture. And it has the potential to be abused in equally extraordinary fashion.

This is one of the most prescient statements I think I have ever read about the world we live in today. Looking back a paltry eleven-and-a-bit years to when those words were written, one has to realize that the Database of Intentions was then only in its infancy.

What John is talking about in the above quote is the idea that Google et al. would know, in an abstract sense, about human desires. For example: N% of a population is interested in Y, Z and A. Over the last XXXX period, those interests have shifted from C, D and E. So the algorithms show ‘them’ more Y and less C, and Google makes money off the advertising.

This was a world that existed after the Patriot Act (in the USA) and RIPA (in the UK). It was also a world where Y, Z and A were ‘just’ statistical clusters of co-occurring words; the machine didn’t really have a clue what those words were actually about. A bunch of dumb algorithms and a bunch of parallel processing. It was a world before the rise of the social graph (people, aka Facebook), the geospatial graph (driven by cheap GPS chips in mobile devices and increasingly ubiquitous wifi), and the knowledge graph (concepts and so on). So let us update that quote with all those things and see what it looks like now.

The Database of Intentions is simply this: it’s a model of you, and of everyone and everything you come into contact with in space and time.

It’s the aggregate of your searches, your results and the paths you took, indexed against your location and the time of day, and further indexed against a semantic understanding of who you are, what you are interested in, and what or whom you interact with.

This information, in aggregate form, IS the intentions of humankind – a massive database of desires, needs, wants, and likes that can be discovered, (not even) subpoenaed, archived, tracked, and exploited to all sorts of ends. Such a beast has never before existed in the history of culture, but is almost guaranteed to grow exponentially from this day forward. This artifact can tell us extraordinary things about who we are and what we want as a culture. And it has the potential to be abused in equally extraordinary fashion.

And here’s the thing… I really want to exploit that database.

And so do you.

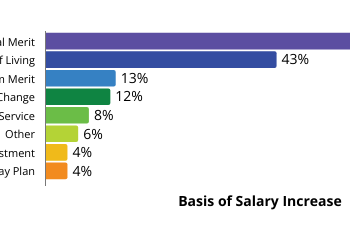

You are a Funding Agency, representing the long arm of the taxpayer, and you really want to do the ROI-of-science thing: demonstrate that you give money to the most impactful work by the most effective scholars. You totally want at that database, because that’s how you are going to figure out just what impact truly is.

You believe #Altmetrics is the future of measurement of the multitudinous elements of scholarly output. You totally want at that database. Actually, you are building that database – harvesting the data from all sorts of places, trying to find the signal in the noise and show that a positive tweet from professor X from the research powerhouse of Y about a scholar is just as useful a measure as an A-list publication score.

You want to expose data from researchers, from the raw material to the curated and quality-controlled outputs; you want to match the who to the what and the how.

You are a University: you want to know who brings in the money from those funding agencies, who’s the rockstar and who needs to clear out their lab. How can you ensure you score big at the next RAE?

You are a Librarian: you’ve paid for a bunch of stuff, so what exactly is it being used for, and by whom, and does that justify the cost?

You are a Researcher… you need to maximize the ‘bang’ from your research; you’ve got to get it in front of the right people for that tenure process. You need to come up with something that demonstrates your ‘impact’. You could really use something that filters out the cr*p, lets you scan the literature more efficiently, lets you keep an eye out for the opposition sneaking in with a result from left field, and tells you whether you’ve registered an ‘impression’ with that big prof in your field.

You are a Publisher: you need more usage, more visits, more eyeballs. Whether you are #OA or #Tollgate, #Freemium or #Subscription, you need to get the stuff to the right people at the right time. You need to enhance serendipity, because that drives the value. You need that 360-degree view of ‘the customer’, so you are building that database as well. You want to push the relevance, and a knowledge graph and a social graph are a wicked combination if you can crack it.

All these things require surveillance. You can’t measure ‘impact’ without it. If you want to measure the ‘impact’ of a person’s work, you have to gather data on where it goes, how far it spreads, the impressions, the penetration and the cascade of actions it triggered, and you have to set those in some sort of cultural model. A really good relevancy model for usage must relate the semantics of the stuff to the interests of the potential readers, and that requires data to be parsed from both. Where do you get the reader interest data from? Why, their clickstream, of course. This is why we are tracked constantly in our browsers: to feed the beast that serves the ads. As a (rather important) sidebar, one might want to ponder why, with all this tracking, the ad-supported news business is in such terrible trouble. Isn’t the data worth infinitely more than a blind shot on a half-page ad in the print newspaper? Shouldn’t we be living in a golden age of journalism powered by surveillance-based advertising?

Of course, surveillance has a bit of an image problem right now. Whether you merely find it creepy or you worry about the state’s capacity to blunder around in the data, the cost-benefit equation is, more often than not, tilted firmly against you.

And it has reached the outer spiral arm of the internet we call home: us, the community of scholarly publishers and associated interests. It emerged rather strongly at the most recent SSP Annual Meeting, where it came up in questions in more than one session. It was called ‘impact’ or ‘analytics’ or ‘metrics’, but at the end of the day it’s surveillance.

And I firmly believe that it’s an important tool, and that if we can do it right (do it transparently, do it respectfully, explain the benefits, allow control to reside with the surveilled, and be honorable and open about our intentions), then maybe we can all benefit here. But hope is not enough.

I think we should have a debate about how this data should be used in a scholarly context. We should look to derive a set of principles about how such data is to be used. Perhaps we need to think about badges and accreditation for ‘good surveillance’ practices. We must be able to explain to our users why the transaction is in the interests of all the stakeholders, and understand and allow for control over the collection and use of such data.

There’s more here (much more). I hope that whether you agree with my views (and they are very much my personal views) or not, you do conclude that discourse in this area is much needed. We are somewhere close to the general internet conditions that prompted John to write those words in a blog post in ’03. Let’s try and think this through for the benefit of all the members of the scholarly community.

Discussion

24 Thoughts on "Surveillance and the Scholarly World: What Shall We Do With The Database of Intentions?"

The word ‘surveillance’ is associated with covert operations, whereas, as you say, the kind of metrics-gathering we are talking about here should be overt: transparent, and with those being measured in control. As the Nature editorial ‘Track and trace’ (March 2014) noted, the ORCID system gives researchers control over the information they submit and allow to be visible. Would that be an example of analytics/metrics done right? What would be an example of analytics/metrics gone so far wrong that you would term it surveillance?

Some of what we are talking about are public events, such as tweets and blog comments. But are downloads and browse paths public events? My grocery store has, or can have, a computer record of everything I buy using my credit card. It could sell that data to advertisers. Is buying wine a public event? Where does privacy begin?

I have to say that I am leery of the idea of “control over the collection and use of such data.” Are we talking about government controls? We are talking about important new ways of understanding human behavior. Do we want the government controlling that? The potential harm from the use of the data would have to be very serious to justify government control.

I recently presented a poster on this very topic at the Digital Humanities Summer Institute. Couldn’t agree more that we need to talk about the ethics and structures of this type of research. teachableplanet.com/searching

Do you have something you can link to? It would be great if you did.

Here’s the website to support the poster. Forgive the bland layout, it was quick and passed a number of key accessibility tests.

Data are not self-interpreting. It seems to me that what one can know from such raw data takes you only so far. The kinds and variety of “intentions” revealed by such data are endless. How does one know one is right in interpreting them?

Is the data tied to each individual who creates it? Many searches are done on public machines. Also, some 100 billion searches are performed each month, or 1,200 billion (1.2 trillion) each year, and that is only on Google (www.internetlivestats.com/google-search-statistics/).

How would one store the data created by each individual? By IP address or by name? Those of us who have tried to maintain even a simple membership list find the task daunting.

Thus I wonder: are we worried about something that applies to each of us, or is this a Roseanne Roseannadanna event?

As a general point, it’s increasingly easy to tie the use of machines to people, behaviourally and otherwise. And if you use a social media account, they’ve basically got you. If you use Google, you are getting personalised results, and so is every other user. Facebook’s Graph Search was a superb example of just how creepy things could get. In fact, Google “creepy facebook graph searches”… I’d rather like us to avoid that!

I’d like to hear why you chose to frame this as “surveillance”… I think that word will lose its important and specific meaning if it is used to name any gathering of data about people and actions. Or is all research on human subjects surveillance, since it gathers data about them?

I think the word “surveillance” is appropriate, perhaps because it deliberately rings alarm bells, as opposed to other potential euphemisms that could be coined by those trying to distract our attention from their practices. Merriam-Webster defines it as follows:

: the act of carefully watching someone or something especially in order to prevent or detect a crime

Certainly what groups like the NSA are doing qualify. And while the Googles of the world aren’t looking to prevent a crime, they are essentially performing the same act to a different end. What word would you propose instead? Is it worth coining a term like “economic surveillance”?

Is there a difference between performing research on human subjects (presumably in a limited manner, with informed consent and to address a particular hypothesis) and carefully tracking and recording all behavior of human subjects often without their consent/awareness and with a purpose of exploiting those data for commercial gain?

Observation might be a better term.

But it’s not just observation, it includes analysis and then action taken. Observation implies a neutral stance, which is not the case here.

This is a great question. It is the ‘in order to’ part of the surveillance definition that troubles me and makes me believe it is not the right word for all of the kinds of data gathering, analysis, and use being grouped under it. Certainly there are types that are surveillance, but I don’t think that logging search terms and behaviors within a publisher system is one of them, especially not if it is in a library catalog and used to recommend books a user might want to see. Figuring out the overall category and sub-categories would be really useful. (Side note: an IRB does not always require informed consent, and not all research methods proceed from hypothesis formulation.)

I think a broad, neutral term is just right, David, because as Lisa points out we are talking about a very broad category of practices, which may be carried out for many different reasons. You seem to have some narrow practice in mind, something Google does or some such, but that is not what we are talking about. As I understand it, we are basically talking about all the available data about everything that everyone in the world does.

To expand on some of the comments (especially from Lisa and Richard): I chose “surveillance” very deliberately, 1) to provoke comment and make explicit what I believe are some underlying aspects of various emerging use cases out there, and 2) because we (the scholarly community at large) won’t get to frame the language if the law of unintended consequences gets there first.

Richard points to a link about tracking. It is a real-world example, right down to the language:

“Identifiers that follow researchers’ work from grant to paper will make funding more effective”

Think that sentence through. The techniques used to gather this information and attach it to people or groups are IDENTICAL to the techniques the spooks use. And they will suffer the same limitations in terms of error rates and biases when the data is assembled into dashboards and reports and then used. False positives, false negatives and straightforward misassignment of works and actions to people, concepts, locations and times WILL happen. It is rather important, then, to think about how somebody on the receiving end of that can obtain recourse.

Harvey has enquired whether this is something that really happens: metadata attached to individuals. Those databases are already out there, whether they use ORCIDs or proprietary identifiers. True, it is small-scale in our world at the moment, but this stuff is firmly within the technical capabilities of a surprisingly large set of organisations within the scholarly sphere; it is not limited to the big fish.

Richard asks if his link is a good example. It is. There’s nothing wrong with the ORCID collection per se. But how is the data going to be used? How is it going to be connected to other data sets? What’s mandatory? How will the data be acted upon, and what rights does the individual have to challenge the conclusions and assumptions derived from it? Should they be made aware when data about them is used in aggregate? Etc., etc.

We are building maps. And just like the maps of the world, they are inevitably the products of our biases and desires; worldviews and aesthetics.

What IDENTICAL spook techniques are you referring to? I thought they mostly used bugs, wiretaps, infrared cameras, clandestine stuff like that. We do not need these to tie grants to articles, which most researchers are happy to do and the US Public Access program requires them to do. You may be hyping a minor issue.