Is the influence of a journal best measured by the number of citations it attracts or by the citations it attracts from other influential journals?

The purpose of this post is to describe, in plain English, two network-based citation metrics: Eigenfactor[1] and SCImago Journal Rank (SJR)[2], compare their differences, and evaluate what they add to our understanding of the scientific literature.

Both Eigenfactor and SJR are based on the number of citations a journal receives from other journals, weighted by the importance of those citing journals, such that citations from important journals like Nature are given more weight than citations from less important titles. Later in this post, I’ll describe exactly how a journal derives its importance from the network.

In contrast, metrics like the Impact Factor do not weight citations: one citation is worth one citation, whatever the source. In this sense, the Eigenfactor and SJR get closer to measuring importance as a social phenomenon, where influential people hold more sway over the course of business, politics, entertainment and the arts. For the Impact Factor, importance is equated with popularity.

Eigenfactor and SJR are both based on calculating something called eigenvector centrality, a mathematical concept that was developed to understand social networks and first applied to measuring journal influence in the mid-seventies. Google’s PageRank is based on the same concept.

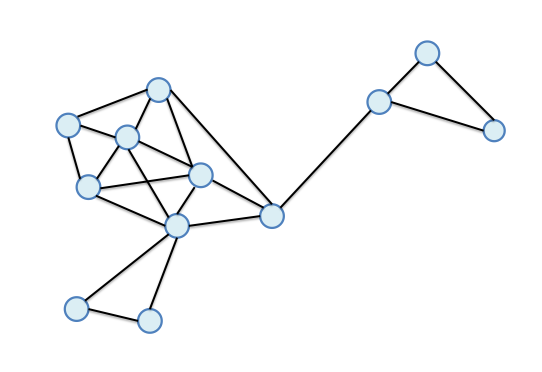

Eigenvector centrality is calculated recursively, such that values are transferred from one journal to another in the network until a steady-state solution (also known as an equilibrium) is reached. Often 100 or so iterations are used before values become stable. Like a hermetically sealed ecosystem, value is neither created nor destroyed, just moved around.
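To make the recursion concrete, here is a minimal sketch of plain power iteration on a made-up three-journal network. The matrix `M` and the function name `power_iteration` are illustrative assumptions, not code from either metric's authors. Each column records how one citing journal's references are split among the journals it cites, so the total value stays the same at every step.

```python
def power_iteration(M, iterations=100):
    """Repeatedly pass each journal's value along its outgoing citations.

    M[i][j] is the share of journal j's references that point to journal i,
    so every column sums to 1 and the total value is conserved at each step.
    """
    n = len(M)
    v = [1.0 / n] * n  # start with equal value in every journal
    for _ in range(iterations):
        v = [sum(M[i][j] * v[j] for j in range(n)) for i in range(n)]
    return v


# Hypothetical network: rows are cited journals, columns are citing journals.
M = [
    [0.0, 0.5, 1.0],  # journal 0 gets half of journal 1's refs and all of journal 2's
    [0.5, 0.0, 0.0],  # journal 1 gets half of journal 0's refs
    [0.5, 0.5, 0.0],  # journal 2 gets the rest
]

scores = power_iteration(M)
print([round(s, 3) for s in scores])  # [0.444, 0.222, 0.333]
print(round(sum(scores), 6))          # 1.0: value is moved around, never created
```

For this toy matrix the steady state works out to 4/9, 2/9 and 1/3, and 100 iterations are far more than enough to reach it.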

There are two metaphors used to describe this process: The first conceives of the system as a fluid network, where water drains from one pond (journal) to the next along citation tributaries. Over time, water starts accumulating in journals of high influence while others begin to drain. The other metaphor conceives of a researcher taking a random walk from one journal to the next by way of citations. Journals visited more frequently by the wandering researcher are considered more influential.
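The random-walk metaphor can be simulated directly. In the sketch below (function name and network are hypothetical), a simulated reader hops from journal to journal by following a randomly chosen reference, and we count the visits. On this made-up three-journal network, the visit shares should settle near 4/9, 2/9 and 1/3, the same values that repeated redistribution of value converges to.

```python
import random


def random_walk_shares(M, steps=100_000, seed=1):
    """Simulate a reader who follows a randomly chosen reference at each step.

    M[i][j] is the probability of moving from journal j to journal i
    (the share of j's references that point to i). Returns the fraction
    of steps spent at each journal.
    """
    rng = random.Random(seed)
    n = len(M)
    visits = [0] * n
    current = 0
    for _ in range(steps):
        weights = [M[i][current] for i in range(n)]
        current = rng.choices(range(n), weights=weights)[0]
        visits[current] += 1
    return [count / steps for count in visits]


# Hypothetical network: rows are cited journals, columns are citing journals.
M = [
    [0.0, 0.5, 1.0],
    [0.5, 0.0, 0.0],
    [0.5, 0.5, 0.0],
]

shares = random_walk_shares(M)
print([round(s, 2) for s in shares])  # close to [0.44, 0.22, 0.33]
```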

However, both of these models break down (mathematically and figuratively) in real life. Using the fluid analogy, some ponds may be disconnected from most of the network of ponds; if there is just one stream feeding this largely disconnected network, water will flow in but will not drain out. Over time, these ponds may swell to immense lakes, whose size is staggeringly disproportionate to their starting values. Using the random walk analogy, a researcher may be trapped wandering among a highly specialized collection of journals that frequently cite each other but rarely cite journals outside of their clique.
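The pond problem is easy to reproduce. In the hypothetical five-journal network below, journals 3 and 4 cite only each other, and journal 2 sends half of its references into that pair. Plain power iteration with no correction (function and data are illustrative) drains nearly all of the value into the pair.

```python
def power_iteration(M, iterations=100):
    """Plain power iteration with no evaporation or teleporting."""
    n = len(M)
    v = [1.0 / n] * n
    for _ in range(iterations):
        v = [sum(M[i][j] * v[j] for j in range(n)) for i in range(n)]
    return v


# Rows are cited journals, columns are citing journals (each column sums to 1).
M = [
    [0.0, 0.5, 0.5, 0.0, 0.0],  # journal 0 is cited by 1 and 2
    [0.5, 0.0, 0.0, 0.0, 0.0],  # journal 1 is cited by 0
    [0.5, 0.5, 0.0, 0.0, 0.0],  # journal 2 is cited by 0 and 1
    [0.0, 0.0, 0.5, 0.0, 1.0],  # journal 3 is cited by 2 and 4
    [0.0, 0.0, 0.0, 1.0, 0.0],  # journal 4 is cited only by 3
]

scores = power_iteration(M)
clique_share = scores[3] + scores[4]
print(round(clique_share, 4))  # nearly all of the value ends up in journals 3 and 4
```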

The eigenvector centrality algorithm can adjust for this problem by “evaporating” some of the water in each iteration and redistributing these values back to the network as rain. Similarly, the random walk analogy uses a “teleport” concept, where the researcher may be transported randomly to another journal in the system; think of Scotty transporting Captain Kirk back to the Enterprise before immediately beaming him down to another planet.
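Adding evaporation (or teleporting) changes only one line. In this sketch, each step keeps a fraction `alpha` of every journal's value flowing along citations and spreads the remaining `1 - alpha` evenly across all journals, like rain. The value 0.85 is an assumption borrowed from common PageRank practice, and the uniform "rain" is a simplification; the published Eigenfactor and SJR algorithms define their own constants and redistribution rules.

```python
def damped_power_iteration(M, alpha=0.85, iterations=100):
    """Power iteration with evaporation/teleporting.

    M[i][j] is the share of journal j's references that point to journal i.
    Each step, alpha of the value follows citations and (1 - alpha) is
    redistributed evenly ("rain"), so the total still sums to 1.
    """
    n = len(M)
    v = [1.0 / n] * n
    for _ in range(iterations):
        v = [alpha * sum(M[i][j] * v[j] for j in range(n)) + (1 - alpha) / n
             for i in range(n)]
    return v


# Hypothetical network in which journals 3 and 4 cite only each other.
M = [
    [0.0, 0.5, 0.5, 0.0, 0.0],
    [0.5, 0.0, 0.0, 0.0, 0.0],
    [0.5, 0.5, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.5, 0.0, 1.0],
    [0.0, 0.0, 0.0, 1.0, 0.0],
]

scores = damped_power_iteration(M)
print([round(s, 3) for s in scores])
print(round(scores[3] + scores[4], 3))  # the pair no longer swallows everything
```

Because every journal receives at least `(1 - alpha) / n` per step, no part of the network can drain completely, and the closed pair can no longer absorb all of the value.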

Before I continue into details and differences, let me summarize thus far: Eigenfactor and SJR are both metrics that rely on computing, through iterative weighting, the influence of a journal based on the entire citation network. They differ from traditional metrics, like the Impact Factor, that simply compute a raw citation score.

In practice, eigenvector centrality is calculated upon an adjacency matrix listing all of the journals in the network and the number of citations that took place between them. Most of the values in this very large table are zero, but some will contain very high values, representing large flows of citations between some journals, for instance, between the NEJM, JAMA, The Lancet, and BMJ.
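Here is a sketch of turning raw citation counts into the kind of matrix the iteration needs. Counts are stored sparsely, as a dictionary keyed by `(citing, cited)` pairs, because most cells are zero. Each column is then divided by the citing journal's total references. The journal labels and counts are invented for illustration. Spreading a journal that cites nothing evenly across all journals is an assumption, not necessarily how either metric handles such journals.

```python
def build_transition(citations, journals):
    """Turn sparse citation counts into a column-stochastic matrix.

    citations maps (citing, cited) -> positive count; only non-zero pairs are
    stored, mirroring how sparse a real journal adjacency matrix is.
    Returns M where M[i][j] is the share of journal j's references citing journal i.
    """
    index = {name: k for k, name in enumerate(journals)}
    n = len(journals)
    out_totals = [0] * n
    for (citing, _), count in citations.items():
        out_totals[index[citing]] += count
    M = [[0.0] * n for _ in range(n)]
    for (citing, cited), count in citations.items():
        j, i = index[citing], index[cited]
        M[i][j] += count / out_totals[j]
    for j in range(n):
        if out_totals[j] == 0:
            # Assumption: a journal that cites nothing spreads its value evenly.
            for i in range(n):
                M[i][j] = 1.0 / n
    return M


# Invented counts for four hypothetical journals; J4 cites nothing.
citations = {
    ("J1", "J2"): 30, ("J1", "J3"): 10,
    ("J2", "J1"): 25,
    ("J3", "J1"): 5, ("J3", "J2"): 15,
}
journals = ["J1", "J2", "J3", "J4"]
M = build_transition(citations, journals)
for row in M:
    print([round(x, 3) for x in row])
```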

The result of the computation (a transfer of weighted values from one journal to the next over one hundred or so iterations) represents the influence of a journal, which is often expressed as a percentage of the total influence in the network. For example, Nature’s 2014 Eigenfactor was 1.50, meaning that this one journal represented 1.5% of the total influence of the entire citation network. In comparison, a smaller, specialized journal, AJP-Renal Physiology, received an Eigenfactor of 0.028. PLOS ONE’s Eigenfactor (1.53), by contrast, was larger than Nature’s as a result of its immense size. Remember that Eigenfactor measures total influence in the citation network, so big often translates to big influence.

When the Eigenfactor is adjusted for the number of papers published in each journal, it is called the Article Influence Score. This is similar to SCImago’s SJR. So, while PLOS ONE had an immense Eigenfactor, its Article Influence Score was just 1.2 (close to average performance), compared to 21.9 for Nature and 1.1 for AJP-Renal Physiology.
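The size adjustment can be written as a short formula. A common description of Article Influence (stated here as an assumption; the Eigenfactor documentation gives the exact definition) divides a journal's Eigenfactor by its share of all articles in the citation window and multiplies by 0.01. With that scaling, the average article scores 1.00. The three journals below are invented.

```python
def article_influence(eigenfactor, articles, total_articles):
    """0.01 * Eigenfactor divided by the journal's share of all articles."""
    article_share = articles / total_articles
    return 0.01 * eigenfactor / article_share


# Invented journals: Eigenfactor scores sum to 100, as percentages do.
eigenfactors = [50.0, 30.0, 20.0]
articles = [1000, 500, 500]
total = sum(articles)

scores = [article_influence(ef, a, total) for ef, a in zip(eigenfactors, articles)]
print([round(s, 2) for s in scores])  # [1.0, 1.2, 0.8]

# Weighting each journal by its number of articles, the average article scores 1.
mean_per_article = sum(s * a for s, a in zip(scores, articles)) / total
print(round(mean_per_article, 6))  # 1.0
```

This is why a score near 1, like PLOS ONE's 1.2, reads as roughly average performance per article.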

In 2015, Thomson Reuters began publishing a Normalized Eigenfactor, which expresses the Eigenfactor as a multiple of the average journal’s score rather than as a percentage. A journal with a value of 2 has twice as much influence as the average journal in the network, whose value would be 1. Nature’s Normalized Eigenfactor was 167, PLOS ONE’s was 171, and AJP-Renal Physiology’s was 3.
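The arithmetic behind these conversions is simple. Raw network scores are first expressed as percentages of the total. The normalized version then divides by the average journal's share, which is 100/N percent for N journals. The post does not give the exact journal count. N = 11,150 is an assumption, chosen because it fits the "just over 11,000" journals mentioned below and reproduces the figures quoted above.

```python
def to_percent(raw_scores):
    """Express each journal's raw score as a percentage of the network total."""
    total = sum(raw_scores)
    return [100 * s / total for s in raw_scores]


def normalized_eigenfactor(ef_percent, n_journals):
    """Multiple of the average journal's share, which is 100 / n_journals percent."""
    return ef_percent / (100 / n_journals)


print(to_percent([2.0, 1.0, 1.0]))  # [50.0, 25.0, 25.0]

N = 11_150  # assumed journal count, not a published figure
for name, ef in [("Nature", 1.50), ("PLOS ONE", 1.53), ("AJP-Renal Physiology", 0.028)]:
    print(name, round(normalized_eigenfactor(ef, N)))  # 167, 171, 3
```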

There are several differences in how Eigenfactor and SJR are calculated, which means they cannot be used interchangeably:

- Size of the network. Eigenfactor is based on the citation network of just over 11,000 journals indexed by Thomson Reuters, whereas the SJR is based on over 21,000 journals indexed in the Scopus database. Different citation networks will produce different eigenvector scores.

- Citation window. Eigenfactor is based on citations made in a given year to papers published in the prior five years, while the SJR uses a three-year window. The creators of Eigenfactor argue that five years of data reduces the volatility of their metric from year to year, while the creators of the SJR argue that a three-year window captures peak citation for most fields and is more sensitive to the changing nature of the literature.

- Self-citation. Eigenfactor eliminates self-citation, while SJR allows self-citation but limits it to no more than one-third of all incoming citations. The creators of Eigenfactor argue that eliminating self-citation disincentivizes bad referencing behavior, while the creators of the SJR argue that self-citation is part of normal citation behavior and wish to capture it.
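The two preprocessing choices in this list, the citation window and self-citation handling, can be sketched as small functions applied before the matrix is built. The function names and toy counts are hypothetical. The capping rule reads "no more than one-third of all incoming citations" literally, as one-third of the journal's total incoming citations with self-citations included. SCImago's documentation should be checked for the exact rule.

```python
def in_window(citing_year, cited_year, window):
    """True if the cited item was published in the `window` years before citing_year."""
    return citing_year - window <= cited_year <= citing_year - 1


def apply_self_citation_policy(counts, policy):
    """Adjust self-citations in a dict mapping (citing, cited) -> count.

    "drop": remove self-citations entirely (the Eigenfactor approach).
    "cap":  keep them, but no more than one-third of the journal's incoming
            citations (SJR-style; this exact capping rule is an assumption).
    """
    if policy not in ("drop", "cap"):
        raise ValueError(f"unknown policy: {policy}")
    result = dict(counts)
    for (citing, cited), self_count in counts.items():
        if citing != cited:
            continue
        if policy == "drop":
            del result[(citing, cited)]
        else:
            incoming = sum(c for (_, target), c in counts.items() if target == cited)
            result[(citing, cited)] = min(self_count, incoming / 3)
    return result


# A 2014 citation to a 2010 paper counts in a five-year window but not a three-year one.
print(in_window(2014, 2010, 5), in_window(2014, 2010, 3))  # True False

counts = {("A", "A"): 60, ("B", "A"): 40, ("A", "B"): 20}
print(apply_self_citation_policy(counts, "drop"))
print(apply_self_citation_policy(counts, "cap"))
```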

There are other small differences, such as the scaling factor, a constant that defines how much “evaporation” or “teleporting” takes place in each iteration. While both groups provide a full description of their algorithms, it is pretty clear that few of us (publishers, editors, authors) are going to replicate their work. Indeed, these protocols assume that you’ve already indexed tens of thousands of journals publishing several million papers listing tens of millions of citations before you even begin to assemble your adjacency matrix. And no, Excel doesn’t have a simple macro for calculating eigenvectors. So while each group is fully transparent in its methods, the sheer enormity and complexity of the task prevents all but the two largest indexers from replicating their results. A journal editor really has no recourse but to accept the numbers provided to him.

If you scan the performance of journals, you’ll notice that journals with the highest Impact Factors also have the highest Article Influence and SJR scores, leading one to wonder whether popularity and influence in science are really distinct constructs. Writing in the Journal of Informetrics, Massimo Franceschet reports that for the biomedical sciences, social sciences, and geosciences, 5-year Impact Factors correlate strongly with Article Influence Scores, but diverge more for physics, materials science, computer science, and engineering. In these fields, journals may perform well on one metric but poorly on the other. In another paper focusing on the SJR, the authors noted some major changes in the ranking of journals and reported that scores tended to concentrate in fewer (prestigious) journals. Considering how the metric is calculated, this should not be surprising.
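One common way to check how closely two metrics agree is a rank correlation such as Spearman's rho, which compares journals' positions rather than their raw values. The sketch below assumes there are no tied values and uses invented scores for five hypothetical journals. It is not necessarily the statistic used in the studies mentioned above.

```python
def ranks(values):
    """Rank positions (1 = highest value); assumes there are no ties."""
    order = sorted(range(len(values)), key=lambda k: values[k], reverse=True)
    result = [0] * len(values)
    for position, k in enumerate(order, start=1):
        result[k] = position
    return result


def spearman_rho(x, y):
    """Spearman's rank correlation for untied data: 1 - 6*sum(d^2) / (n(n^2 - 1))."""
    n = len(x)
    rx, ry = ranks(x), ranks(y)
    d_squared = sum((a - b) ** 2 for a, b in zip(rx, ry))
    return 1 - 6 * d_squared / (n * (n * n - 1))


# Invented scores for five hypothetical journals.
impact_factor = [40.1, 12.3, 5.2, 3.1, 1.4]
influence_same_order = [20.0, 4.0, 2.0, 1.0, 0.5]
influence_two_swapped = [20.0, 1.0, 2.0, 4.0, 0.5]

print(spearman_rho(impact_factor, influence_same_order))   # 1.0
print(spearman_rho(impact_factor, influence_two_swapped))  # 0.6
```

A value near 1 means choosing one metric over the other barely changes a journal's relative position. Lower values signal the kind of divergence reported for physics and engineering.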

In conclusion, network-based citation analysis can help us more closely measure scientific influence. However, the process is complex, not easily replicable, harder to describe and, for most journals, gives us the same result as much simpler methods. Even if not widely adopted for reporting purposes, the Eigenfactor and SJR may be used for detection purposes, such as identifying citation cartels and other forms of citation collusion that are very difficult to detect using traditional counting methods, but may become highly visible using network-based analysis.
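As a rough illustration of the kind of pattern network analysis can surface, the sketch below flags pairs of journals that each send a large share of their references to the other. This is a hypothetical screening heuristic, not part of Eigenfactor or SJR. The 30% threshold and the journal data are invented. A flagged pair merits a closer look, not a verdict.

```python
def reciprocal_pairs(citations, threshold=0.3):
    """Flag journal pairs that each send at least `threshold` of their
    outgoing references to the other (a hypothetical screening rule).

    citations maps (citing, cited) -> count.
    """
    out_totals = {}
    for (citing, _), count in citations.items():
        out_totals[citing] = out_totals.get(citing, 0) + count
    flagged = []
    for (a, b), count in citations.items():
        if a < b and (b, a) in citations:  # visit each unordered pair once
            share_ab = count / out_totals[a]
            share_ba = citations[(b, a)] / out_totals[b]
            if share_ab >= threshold and share_ba >= threshold:
                flagged.append((a, b))
    return sorted(flagged)


# Invented counts: J1 and J2 send about half of their references to each other.
citations = {
    ("J1", "J2"): 50, ("J1", "J3"): 50,
    ("J2", "J1"): 45, ("J2", "J3"): 55,
    ("J3", "J1"): 10, ("J3", "J4"): 90,
    ("J4", "J3"): 5, ("J4", "J1"): 95,
}
print(reciprocal_pairs(citations))  # [('J1', 'J2')]
```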

Notes:

1. Eigenfactor (and Article Influence) are terms trademarked by the University of Washington. Eigenfactors and Article Influence scores are published in the Journal Citation Reports (Thomson Reuters) each June and are posted freely on the Eigenfactor.org website after a six-month embargo. To date, the University of Washington has not received any licensing revenue from Eigenfactor metrics.

2. The SCImago Journal & Country Rank is based on Scopus data (Elsevier) and made freely available from: http://www.scimagojr.com/

Discussion

22 Thoughts on "Network-based Citation Metrics: Eigenfactor vs. SJR"

Thanks Phil, this is an interesting post.

The network based metrics are intellectually interesting, but as you point out, they are effectively black boxes: we have to take it on trust that the calculations have been done correctly and the right number of citations included or excluded, whereas it is reasonably straightforward to estimate an Impact Factor from the Web of Science. These metrics also fail the elevator pitch: I can explain an Impact Factor or other simple metrics in 30 seconds, but the network based metrics take minutes to explain, and even that glosses over the details.

My own quick analysis of the Web of Science metrics shows two groups that are closely correlated by journal rank, Total Citations and Eigenfactor in one and the article weighted metrics such as Impact Factor and Article Influence Score in the other. The ever growing range of citation metrics doesn’t appear to add much extra information but does give journals another way to claim to be top. I also think that these metrics apply less in the social sciences and humanities where the ‘high prestige journals’ often have less difference in citation profile.

The exclusion of self citation seems rather strange. A specialized journal, which many are, may well have most of the articles on its topic. Later articles will certainly cite numerous prior articles, in the normal course of citation. This is a strong measure of the journal’s local importance. So excluding self citation appears to penalize that specialization which serves a specific research community.

Thank you for an excellent explanation of this complex but important subject. One question I’d like to see explored: How well do the three indices (Eigenfactor, SJR, JIF) correlate? That is, how much does choosing one or the other affect a journal’s relative ranking with competing journals?

For the Impact Factor, importance is equated with popularity.

Given that the IF (like all other citation metrics, as far as I’m aware) makes no effort to discriminate between approving and disapproving citations, wouldn’t it be more accurate to say that for the IF, importance is equated with notoriety rather than popularity?

My conjecture is that, in the physical sciences and engineering at least, negative citations are rare enough to be negligible. HSS may be different. It is a good research question, so I wonder if any work has been done on it. There is a lot of research on distinguishing positive and negative tweets, but maybe not citations.

Phil, I like the evaporation metaphor for the flow/voting interpretation of Eigenvector centrality. It’s a nice complement to the teleport metaphor for the random walk interpretation. It’s fun to see each interpretation of the algorithm in action, so you and your readers may enjoy the demo that Martin Rosvall and Daniel Edler put together to illustrate both the random walk interpretation and the flow interpretation. See http://www.mapequation.org/apps/MapDemo.html

To play with the demo, click on “rate view” at the top center of the screen. Then you can click on “random walker” at the top to look at the random walk interpretation, using the “step” or “start” buttons to set the random walker into motion. Then reset and click on “Init Votes” to restart. Click on “Vote” or “Automatic Voting” to view the flow interpretation. In both cases, the bar graph at right shows each process converge to the leading eigenvector of the transition matrix.

By the way, my view is that the most important difference between the Eigenfactor algorithm and the SJR approach is that in the Eigenfactor algorithm, the random walker takes one final step along the citation matrix *from the stationary distribution*, without teleporting. This ensures that no journal receives credit for teleportation (or evaporation and condensation); the only credit comes from being cited directly. We’ve found this step extremely important in assigning appropriate ranks to lower-tier journals, whose ranks are otherwise heavily influenced by the teleport process. In the demo linked above, you can see how this affects the final rankings by pressing the “Eigenfactor” button.

Carl Bergstrom

eigenfactor.org / University of Washington
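The extra step described in the comment above can be sketched as follows. First compute the stationary vector with teleporting, then take one more step along the citation matrix alone, so that a journal nobody cites ends with a score of zero. This is a simplified illustration under stated assumptions (uniform teleporting, alpha = 0.85, no journals without references, an invented network). The published Eigenfactor method differs in details such as how teleporting is weighted.

```python
def stationary_with_teleport(H, alpha=0.85, iterations=100):
    """Damped power iteration; H[i][j] is the share of j's references citing i."""
    n = len(H)
    v = [1.0 / n] * n
    for _ in range(iterations):
        v = [alpha * sum(H[i][j] * v[j] for j in range(n)) + (1 - alpha) / n
             for i in range(n)]
    return v


def eigenfactor_like_scores(H, alpha=0.85):
    """Take one final step along H with no teleporting, then express as percentages."""
    pi = stationary_with_teleport(H, alpha)
    n = len(H)
    final = [sum(H[i][j] * pi[j] for j in range(n)) for i in range(n)]
    total = sum(final)
    return pi, [100 * f / total for f in final]


# Invented network: journal 3 cites journals 0 and 1, but no journal cites it.
H = [
    [0.0, 1.0, 1.0, 0.5],
    [0.5, 0.0, 0.0, 0.5],
    [0.5, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]

pi, scores = eigenfactor_like_scores(H)
print(round(pi[3], 4))  # 0.0375: teleporting alone gives journal 3 some value
print(scores[3])        # 0.0: after the final step, only direct citations count
```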

I doubt that many of our readers are math pros, so my explanation of this method is simply that the more citations you get the more your citation counts. In the original research the weighting was called authority, if I remember correctly.

Here is the original source. Kleinberg deserves a Nobel.

http://www.cs.cornell.edu/home/kleinber/auth.pdf

This math has changed the world.

You are referring to Jon Kleinberg’s HITS algorithm, commonly known as “hubs and authorities”. This algorithm is actually very different from PageRank, Eigenfactor, and other eigenvector centrality algorithms. Rather than rating each node / website / journal along a single axis of credit the way that eigenvector centrality algorithms do, HITS features two credit axes. The first measures how authoritative you are, in the sense of “how good are nodes that link to you?”. The second measures how much of a hub you are, in the sense of “how good are the nodes that you link to?” Remember that this algorithm was developed before Google had taken over the search world, at a time when Yahoo was basically hand curated, and thus high quality hubs were extremely valuable.

Of course we carefully considered HITS as an alternative to eigenvector centrality when developing the Eigenfactor metrics, but we felt that for journal ranking purposes, scoring highly as a hub — i.e., citing lots of papers from Nature and Science — is neither particularly difficult nor particularly informative.

That said, I am a huge admirer of the HITS algorithm for the purpose for which it was designed. It is in my opinion a more clever algorithm than PageRank, and it is indisputably a more novel one (Eigenvector centrality algorithms had already been well studied in other contexts when PageRank was proposed for use on the web). I am unaware of a Nobel Prize in Computer Science, but I totally agree that Jon Kleinberg would be high in the running if there were one. Perhaps we can hope that the Nobel in Physics will not be such a stretch.

Carl Bergstrom

eigenfactor.org / University of Washington
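For comparison with the single score of eigenvector centrality, here is a minimal sketch of the two HITS scores on an invented four-journal network. Note the different convention in this sketch: `A[i][j] = 1` means journal i cites journal j. Each round, a journal's authority score is the sum of the hub scores of the journals citing it, and its hub score is the sum of the authority scores of the journals it cites. Both vectors are rescaled to unit length after every round.

```python
import math


def hits(A, iterations=50):
    """Return (hub, authority) scores; A[i][j] = 1 if journal i cites journal j."""
    n = len(A)
    hubs = [1.0] * n
    auths = [0.0] * n
    for _ in range(iterations):
        auths = [sum(A[i][j] * hubs[i] for i in range(n)) for j in range(n)]
        norm = math.sqrt(sum(a * a for a in auths))
        auths = [a / norm for a in auths]
        hubs = [sum(A[i][j] * auths[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(h * h for h in hubs))
        hubs = [h / norm for h in hubs]
    return hubs, auths


# Invented network: journals 0 and 1 cite 2 and 3; journal 2 cites 3.
A = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 0, 1],
    [0, 0, 0, 0],
]

hubs, auths = hits(A)
print([round(h, 3) for h in hubs])   # journals 0 and 1 are the best hubs
print([round(a, 3) for a in auths])  # journal 3 is the top authority
```

Here journals 0 and 1 score well as hubs simply by citing the right places, which illustrates why hub scores were judged uninformative for ranking journals.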

SJR is posted to Journalmetrics.com upon release. (see: http://www.journalmetrics.com/values.php ) The site is maintained by Elsevier and the file of all journals’, book series’ and regular Proceedings’ metrics is free to download immediately upon release. The file contains all calculable values from 1999 to the current release year and contains IPP and SNIP metrics in addition to SJR.

Both CWTS (the developers of IPP/SNIP) and SCImago are also allowed to post these data to their sites. Both organizations are provided with Scopus article and citation data for their analyses.

Thanks for adding that, Marie. The development of Eigenfactor and SJR are both great intellectual achievements, but just because they were hard to develop doesn’t mean they are hard to implement. In fact, Carl has generously made source code available, so with that plus a little more data from Crossref, perhaps the black box becomes a little more transparent? Looking at the well-documented installation instructions for the Map Equation: http://www.mapequation.org/code.html it would appear fairly straightforward for someone with a minimal amount of programming knowledge to run this themselves (and thus those without can get it done for them easily).

Even without doing that, editors can surely agree that both SJR and Eigenfactor take the negotiable number of citable elements out of the equation, literally, and thus remove the main thing that is currently gamed by those playing the Impact Factor game.

Great post!

I don’t think negotiating the denominator items is the biggest factor in gaming the Impact Factor. I worry much more about self-citation (editorials in the journal citing a long list of papers from the last two years, forcing authors to add citations to the journal) as well as citation cartels and the like.

But for the Eigenfactor, as described here, isn’t the main flaw that it automatically favors big journals over small ones? Hence the need for the various normalization methods used?

On the other hand, self citation might be an important measure of how a journal is serving a specific research community. An impact metric of its own.

David, with any system of metrics you have to use the appropriate measure for the question you are asking. If I want to know whether I can carry a particular lump of iron, I need to know its weight, not its density.

As we explain in our FAQ http://eigenfactor.org/about.php , the Eigenfactor score is a measure of total influence of a journal whereas our Article Influence score is a measure of per-article influence, or what some people might want to call the prestige of the journal. Thus the Eigenfactor score favors big journals in just the same way that reporting total citations favors big journals. It’s a useful measure if you are asking a question such as “Should I purchase a subscription to this journal for my library collection?”. If you are asking a different question, such as “where should I publish my next paper?”, you want a measure that is normalized by journal size, such as our Article Influence score or Thomson-Reuters’s Impact Factor.

Carl Bergstrom

eigenfactor.org / University of Washington

Understood, but when using any metric, it’s valuable to understand its inherent biases. I see researchers using the Impact Factor all the time, with very little understanding of how it is derived, or that one highly cited paper in a journal can greatly skew its score. I see an increasing use of the h-index with very little accompanying understanding that it favors older scientists. One tends to just see numbers and scores thrown around, so the more education we can do to help promote intelligent use of the right measurements with an understanding of their strengths and weaknesses, the better.

The best advice I ever got about metrics use was this warning: “When you have a hammer, everything starts to look like a nail.”

I have been thinking lately about the consequences of there being only one type of measurement in the market for so long (citations) and only one measuring unit used for so long (IF). It results in a kind of whisper-down-the-lane chain of inference. Journal citations were a proxy for journal quality (insert disclaimer here about skewness, and the mathematical problem of using a mean or a median to describe a non-normal population); inferred journal quality was used as a proxy for article quality; inferred article quality was used as a proxy for research/researcher quality. And here we are.

The fundamental problem is that we are working backwards on the problem of assessment and metrics. Measurement has, as one of its basic goals, the objective demonstration of an observed phenomenon. Measurement is what changes anecdote into data.

In the lab, and in my years in publishing, I took the following approach: if an expert is looking at two “things” and he/she can say one of them is different from the other, that expert is “measuring” something in a complex algorithm of weighted parameters. A “good journal” that gets covered by a major index and a “weak journal” that finds its way onto a black list are different. When I was selecting journals, I could tell in minutes – sometimes less; just like, in the lab, I could tell the difference between the cells treated with an inhibitor and the control cells just by looking. The challenge was – what are the measurements that I can use to show anyone else, any non-expert, the difference that I was seeing? You design the rubric of evaluation and measure according to the nature of the parameters that differentiate the populations. Then you test it. Does it show you a true difference between things? Is it consistent between individuals who are scoring those parameters? Can it be replicated?

It’s science.

If we are to have a serious science of measurement, we must stop thinking that everything is a nail and can be hit with the same hammer.

Thanks for a very interesting article. One formal but important point: you say “…number provided to him” when referring to a generic editor. I suggest using “him/her”.

What would a network-based article-level metric (ALM) look like? (I assume the Article Influence Score is a journal-level metric, JLM, only scaled/normalized as a per-article ratio.) It’d seem to sidestep the self-citation problem. It’d also serve existing authors better, though perhaps to the detriment of journal editors as well as aspiring authors choosing where to publish. If desired, an aggregate JLM could be derived from such an ALM, although it’d seem to be different from a directly applied network-based JLM. (Count-based JLM, such as the IF, are commutative with count-based ALM, i.e., summing citations-per-article into citations-per-journal is a linear operation; I’m not sure that property holds for network-based ALM/JLM.)

Just to follow-up, I found “Author-level Eigenfactor metrics” (West et al., 2013; doi:10.1002/asi.22790); so article-level Eigenfactors would indeed make sense, although it’d involve a much larger (and sparser) cross-citation matrix.