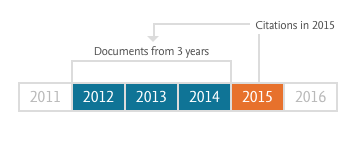

Last Thursday, Elsevier announced CiteScore metrics, a free citation reporting service for the academic community. The primary metric promoted by this service is also aptly named CiteScore and is similar, in many ways, to the Impact Factor.

Both CiteScore and the Impact Factor are journal-level indicators built around a ratio of citations to documents. The specifics of how each indicator is constructed make them different enough that they should not be considered substitutes.

- Observation Window. CiteScore is based on citations made in a given year to documents published in the past three years. The Impact Factor is based on citations to documents published in the past two years.

- Sources. CiteScore is based on citations from about 22,000 sources. The Impact Factor is based on citations from approximately 11,000 selected sources.

- Document Types. CiteScore includes all document types in its denominator. The Impact Factor is limited to papers classified as articles and reviews (also known as “citable items”).

- Updates. CiteScore will be calculated on a monthly basis. The Impact Factor is calculated annually.
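To make the window and denominator differences concrete, here is a minimal Python sketch. The `YearCounts` type, the function names, and all of the numbers are invented for illustration. The sketch also assumes both ratios draw on the same citation counts, which is not true in practice because the two indicators count citations from different sets of sources.

```python
from dataclasses import dataclass


@dataclass
class YearCounts:
    """Hypothetical tallies for one journal and one publication year."""
    citable_items: int       # articles and reviews
    other_items: int         # editorials, news, letters, etc.
    citations_received: int  # citations made in the metric year to this year's documents


def citescore_like(counts_by_year, metric_year):
    """Citations to the prior three years' documents, divided by ALL of those documents."""
    window = [counts_by_year[metric_year - k] for k in (1, 2, 3)]
    citations = sum(c.citations_received for c in window)
    documents = sum(c.citable_items + c.other_items for c in window)
    return citations / documents


def impact_factor_like(counts_by_year, metric_year):
    """Citations to the prior two years' documents, divided by citable items only."""
    window = [counts_by_year[metric_year - k] for k in (1, 2)]
    citations = sum(c.citations_received for c in window)
    documents = sum(c.citable_items for c in window)
    return citations / documents


# Invented example: the same output every year, one-third of it non-research material.
counts = {year: YearCounts(100, 50, 300) for year in (2013, 2014, 2015)}

print(citescore_like(counts, 2016))      # 900 citations / 450 documents
print(impact_factor_like(counts, 2016))  # 600 citations / 200 citable items
```

With these made-up counts the CiteScore-style ratio comes out at 2.0 and the Impact Factor-style ratio at 3.0, even though the journal's citation behavior is identical in both calculations.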

After its launch, it didn’t take long for critics to voice their objections to CiteScore.

Writing for Nature, Richard Van Noorden illustrated that many high Impact Factor journals performed very poorly in CiteScore, the result of including non-research material (news, editorials, letters, etc.) in its denominator. Based on their CiteScore rank, top medical journals, like The New England Journal of Medicine and The Lancet, and general multidisciplinary science journals, like Nature and Science, rank well below mid-tier competitors. This kind of head-scratching ranking creates bad optics for the validity of the CiteScore metric.

Ludo Waltman, at Leiden University, praised CiteScore for being more transparent and consistent about the calculation of CiteScores, but voiced reservations over how treating all documents equally creates a strong bias against publications that serve to provide a forum for news and discussion. Similarly, the researchers at Eigenfactor.org argued that CiteScore tried to solve a problem by creating another one:

[J]ournals that produce large amounts of front matter are probably receiving a bit of an extra boost from the Impact Factor score. But since these items are not cited all that much, the boost is probably not very large in most cases. The CiteScore measure eliminates this boost, but in our opinion goes too far in the other direction by counting all of these front matter pieces as regular articles.
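A rough numerical sketch of that argument follows, in Python. The function name and every count are hypothetical, chosen only to show the direction of each effect: front matter is assumed to be cited rarely, as the quote suggests.

```python
def per_document_ratios(article_count, article_citations, front_count, front_citations):
    """Compare three ways of dividing one journal's citations by its documents."""
    total_citations = article_citations + front_citations
    return {
        # Baseline: research articles only, in both numerator and denominator.
        "articles_only": article_citations / article_count,
        # Impact Factor style: all citations counted, front matter left out of the denominator.
        "impact_factor_style": total_citations / article_count,
        # CiteScore style: all citations counted, every document in the denominator.
        "citescore_style": total_citations / (article_count + front_count),
    }


# Invented journal: 100 articles with 500 citations, 100 front-matter items with 20 citations.
ratios = per_document_ratios(100, 500, 100, 20)
for name, value in ratios.items():
    print(f"{name}: {value:.2f}")
```

In this invented case the Impact Factor-style ratio sits slightly above the articles-only baseline (the small boost), while the CiteScore-style ratio falls to roughly half of it.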

CiteScore subject rankings also exposed some high-profile misfits. For example, the Annual Review of Plant Biology ranked 4th among General Medicine journals. While Scopus tweeted that they were working on fixing some journal classifications, cases like this also create bad optics for their new metric. More user-testing would have caught high-profile irregularities like this before launch.

Other critics worried that getting into the metrics business put Elsevier into a conflict of interest. Departing from idle speculation, Carl Bergstrom and Jevin West demonstrated in a series of scatterplots how Elsevier journals benefited generally from CiteScore over competitors’ journals.

Taken together, it doesn’t appear that the CiteScore indicator can be considered a viable alternative to the Impact Factor.

Until recently, Scopus produced an Impact Factor-like metric, the Impact per Publication (IPP), which was limited to Articles, Reviews and Conference Papers. I asked Wim Meester, Head of Product Management for Content Strategy at Elsevier, why they stopped producing the IPP. Wim responded that calculating IPP was a time-consuming process, but that it will still be calculated and reported independently on Leiden University’s Journal Indicators web service.

Abandoning a more reasonable metric for a quick, dirty, and overtly biased one makes me wonder whether Elsevier’s decision reflects a different marketing strategy for Scopus that has nothing to do with building a better performance indicator.

The Impact Factor metric is reported in Clarivate’s (formerly Thomson Reuters) annual Journal Citation Reports (JCR), a product that is sold to subscribing publishers and institutions. While the calculations that go into generating the JCR each year are enormous and time-consuming, the information contained in the report disseminates almost instantaneously upon publication. Each June, within hours of its release, publishers and editors extract the numbers they need. Impact Factors get refreshed on journal web pages and shared widely with non-subscribers. Some web hosts even use JCR data as clickbait to sell ads.

In contrast, Elsevier has adopted a different business model: Make the metrics free but charge for access to the underlying dataset. To me, CiteScore is developed with publishers and editors in mind, not librarians and bibliometricians. Each journal has its own score card, which is updated monthly — yes, monthly. No more waiting until mid-June to get a performance metric on how your journal performed last year. While an annual report may be sufficient for a librarian making yearly journal collection decisions, it is woefully insufficient for an editor who wants regular feedback on how his/her title is performing.

The CiteScore metric is controversial because of its overt biases against journals that publish a lot of front-matter. Nevertheless, for most academic journals, CiteScore will provide rankings that are similar to the Impact Factor. The free service provides raw citation and document counts, along with two field-normalized metrics, the SNIP and SJR. As the underlying Scopus dataset is available only by subscription, marketing will come from editors at institutions without current access.

In the past, products like Scopus were marketed with librarians and administrators in mind. The launch of CiteScore may be an attempt to market directly to editors.

Discussion

18 Thoughts on "CiteScore–Flawed But Still A Game Changer"

Thanks for the thoughtful post, Phil. You are correct that the CiteScore Tracker is a response to demand from editors, but I want to point out something that I think most people miss when thinking about why the Scopus team is doing this.

The overall aim of the set of metrics is to reinforce the message that one metric alone can’t do everything. All metrics are flawed, but they’re flawed in different ways and together they allow you to get a better sense of how they reflect the underlying phenomenon measured. I initially wondered why in the world would Scopus come out with a competitor to the impact factor, when everyone has been talking for years now about the problems with the IF and pushing for less use of it in assessment? Well, if you’ve been hanging around altmetrics folks like I have for the past few years, you’ll know that IF-based assessment is stubbornly resistant to change. You often hear things like “It’s a flawed metric, but it’s the best we have.” With the introduction of CiteScore, that statement is no longer true.

At this point, after DORA, after the multi-year NISO Alternative Metrics Initiative, everyone who has the ability or desire to move away from using the IF will have already done so. This is good and Elsevier does not support the use of IF or any journal-based metric in article or individual assessment. You can get article-level metrics at Scopus.

However, whether out of consideration or just tradition and inertia, there are still some people who misuse IF and they now have a better, more transparent, more frequently updated, & just generally fairer option to misuse! Of course, every time they visit http://journalmetrics.scopus.com, they see “Be sure to use qualitative as well as the below quantitative inputs when presenting your research impact, and always use more than one metric for the quantitative part.” My hope is that in addition to being better at all the things IF tries to do, CiteScore also helps further reduce the misuse of IF among the most resistant to change.

I appreciate your comment, William. Scopus was publishing a similar metric (Impact per Publication or IPP) that was just like CiteScore, but included the equivalent of “citable items” in its denominator. The fact that Scopus abandoned this metric tells me that they ran into the same problem as Clarivate (formerly Thomson Reuters) in defining what makes a document citable or not. As I wrote earlier this year, there is a lot of ambiguity over document classification and that means that journals are not all treated equitably. I suspect that Scopus did not wish to remain in that contested space of deciding how documents were classified, especially since it may be perceived as favoring Elsevier journals over competitors’ journals.

I would like to provide some additional background on our decision that with the introduction of CiteScore, IPP will no longer be displayed in Scopus. But please note that IPP will continue to be available from CWTS via http://www.journalindicators.com/indicators

The reason is that each of the metrics for serial titles in the Scopus basket of metrics measures a different type of performance. Although slightly different, CiteScore and IPP both measure citations per document and so we decided to retain only CiteScore so that we could provide:

Increased transparency:

• CiteScore is calculated from the same version of Scopus.com that our users see

• IPP is calculated from a version of Scopus.com that is customized by CWTS

Regular updates:

• CiteScore is calculated by Scopus, and can be generated quickly and regularly

• IPP is calculated by CWTS by a more time-consuming process, so regular updates are not possible

Independence from document type classification:

• CiteScore is based on all document-types

• IPP uses only articles, reviews and conference papers

Scopus has included all document types in CiteScore metrics because this is the simplest and most transparent approach, one that also acknowledges every item’s potential to cite and be cited.

Hi Wim,

Had a question about journals that use continuous publication models and your new metric. I know there are journals that publish one “issue” per year, basically publishing papers as soon as they are ready to go, then closing that “issue” at year’s end. From what I understand, Scopus does not get that content until the issue closes.

So what happens to a journal like this when your metric is updated monthly?

This could go further. Elsevier could develop a metrics portal that would enable users to tailor the metrics they use to their jobs, interests, and tastes. Each individual can be entitled to her own way of measuring and evaluating scholarly and scientific literature. This would be both completely transparent and utterly incomprehensible. Yet everyone would be able to congratulate themselves for their high scores, and scientifically justified metrics.

Clarivate’s adherence to a limited number of old-fashioned metrics will seem increasingly stodgy and obsolescent.

I guess what puzzles me is just what CiteScore is intended to measure. For all its failings, there’s a conceptual logic to the IF — research articles that get more citations have a bigger impact in the field, so journals that have more of those articles have a bigger impact. But including all document types seems to muddy that beyond coherence. What does the CiteScore actually measure? What is it attempting to tell me about a particular journal as it relates to other journals in the same field?

Hi Scott, CiteScore is calculated similarly to the IF and also tells you which journals are having a bigger impact on the field. The big difference is that it is updated more frequently, is derived from Scopus (which is a more comprehensive citation database), and it’s free.

Including all articles may seem strange at first, but it’s really the only fair thing to do. This makes the metric more transparent & fair by removing the ability to manipulate the score by manipulating what is considered a citable item or not.

Where you place the line between “free” and “fee” is going to show where you place the line between “interesting” and “valuable.”

I would argue that the CiteScore metric is new not because it is a free metric on Scopus data (SNIP and SJR are also free metrics on Scopus data), but because it is a metrics PRODUCT built onto Scopus itself. The “free” part is the CiteScore number and rankings; the “fee” part is the articles that comprise the 2016 value (only 2016 seems to be linked to article data…?).

Both Elsevier and Clarivate clearly understand that the value proposition of metrics is not the number/ranking itself, but the data that give the number itself authority. For Elsevier, the value they delineate is the articles and their links in the Scopus database; for Clarivate, it is the JCR cite-citing matrix – the full display of journal-to-journal citation detailed year-by-year for 10 years, and summed for 11 and older years. This matrix is the underpinning of the Eigenfactor metrics – and a similar matrix (of Scopus data) underlies SNIP and SJR.

This touches a more fundamental difference between the two metrics, and a basic divergence between approaches to defining a “journal metric.”

CiteScore is a “sum-of-articles” metric. It defines a journal as a collection of the published works and citations that are linked successfully to those works. While CiteScore is a ratio, it is a specific kind of ratio: a mathematical average of the number of citations to any content item published in the journal. The value proposition of linked articles shows the specific item-by-item drivers of that year’s metric. These data are singular, and time-limited. An article can appear in one-and-only one year’s numerator. Once Article Z (in year 2016) cites Journal A (to years 2013-2015), that relationship is non-repeatable, non-expandable. Article Z had no effect on the 2015 CiteScore value, and will have no effect on the 2017 value. A CiteScore-like metric can be calculated for any set of articles – by journal, by country, by subject, by author.

JCR – and thus JIF – is a journal-entity, or journal-level metric. It defines a journal as a named and singular entity. The numerator is the sum of citations – as indexed by Web of Science – that unambiguously acknowledge the journal and a year – whether or not Clarivate has sufficient data to link the citation to a specific item. The denominator has been explained in several places – including Phil’s post, noted and linked above. It’s an (imperfect) estimator of “size.” The JIF is a ratio of citations, scaled by that size – but it is not a mathematical average. The value proposition of the journal matrix shows a relationship that is time-limited in its specifics, but indicates a relationship among journals that – mostly – continue publishing past the JCR year. Journal Z (in year 2016) cites Journal Z (any year), and that suggests a topical relationship, or at least an author population that likely will continue. JIF is only defined for journal-entities – but an “author impact factor” is also conceivable.

Both metrics are affected by citations to “front matter” or “non scholarly content.” JIF is affected more; CiteScore is affected less.

Both metrics are affected by the type and distribution of content that is published. CiteScore is affected more; JIF is affected less.

This doesn’t make one right, or transparent. It doesn’t make the other wrong, or obscure.

It’s just arithmetic, and a business decision about what’s valuable.

Two questions come to mind:

1) If CiteScore eliminates the judgment call on what “counts” as an article by including everything, is this a case of sacrificing accuracy in favor of transparency/ease in calculation? Is that a fair trade-off?

2) If the CiteScore for a journal is going to change every month, how does this affect a candidate on the job market? What if their initial application looked really strong, then after a month or two of interviews, their journals have dropped off? Will job seekers “play the market” and carefully time the release of their CVs to an opportune month when the journal has performed well?

Also, as an aside, I recently learned that DORA is a registered trademark of the American Society for Cell Biology.

The most ludicrous citation metric is for one of the top COMMUNICATION journals: Journal of Broadcasting and Electronic Media, which is placed in the category Electrical and Electronic Engineering! In its 60 years, JOBEM has never published an article in such a category.

Here at the coalface – I am an academic, editing a journal with $0 budget, and with up to 10 PhD students and graduates wanting to publish and advance their careers – the CiteScore is a positive development. I know the inclusion of front matter is raising alarms, but for the social sciences I can’t think that this is a real problem at all for any of the journals. We have tried to get into Web of Science as a ‘do it yourself’ journal (J of Political Ecology) for years without success. But CiteScore reveals there are many journals with lower scores than us that are also in the WoS. Why? Because the WoS looks at citations in fewer and allegedly more ‘prestige’ outlets. Scopus is a much longer list of acceptable journals and we find ourselves doing remarkably well this month, much better than many commercial journals in the social science fields closest to us. This validates over a decade of hard work – many more citations than articles published. For the students, they have a good and enlarged list of places to publish, including many independent and OA journals. Now we have to convince hiring committees that a journal with a score of 2 or so on CiteScore, even if the journal is not in the WoS, is still a great place to publish (in social sciences, so 2 is pretty good). And for the moment at least, unlike most Elsevier stuff, we are not charged to look at the site.

I work with several Social Sciences journals, and they all publish editorials, some do book reviews, the occasional meeting report, news article, etc. These are all a pain to put together, but are seen as being useful to the research community. If these article types are going to actively penalize the journal’s ranking, it is likely that the community will lose this content and any value it represents.