The theme of this year’s Peer Review Week is transparency in peer review. Many peer review experts will be gathering in Chicago in September for the Peer Review Congress (PRC), an international event that is held every four years. So we will be kicking off this year’s Peer Review Week celebrations with a panel session immediately after the Congress closes, at 5.30pm on September 12. Under the Microscope: Transparency in Peer Review, which I’m delighted to be chairing, will be open for all PRC attendees to join in person, as well as being live-streamed and recorded so others can also participate (register here for free).

To whet your appetites and encourage you to join us there or follow the proceedings online, we invited the four speakers to share their initial thoughts on what transparent peer review means to them and why it’s important. Irene Hames (independent peer review and publication ethics expert), Elizabeth Moylan (BioMed Central), Andrew Preston (Publons), and Carly Strasser (Gordon & Betty Moore Foundation) bring an interesting range of perspectives to the discussion, as you can see from their answers to this question. While they all agree on the importance of peer review, there’s divergence around what we mean by transparency in peer review and, critically, how to achieve it. For example, is there an agreed definition of what peer review actually is? Is it really the case that peer review has to be open in order for researchers to get credit for it? And how open should reviews for rejected papers be?

As moderator, I’m remaining neutral on the topic (at least for now!) but I look forward to your comments on this post and warmly invite you to submit additional questions for the panel either here in the comments or on Twitter, using the hashtag #AskPRW – and, of course, to join the discussion on September 12.

Irene Hames

To me, transparency in peer review means making clear to everyone the how, what and why behind editorial decision-making. How are the peer-review and editorial processes organized at a journal/organization? Too often details aren’t given. What information was used in decision-making? Why was the decision to publish made? Currently, making the reviewer reports, editorial correspondence and author responses available with articles isn’t done by the majority of journals, so it’s mostly impossible to know what information forms the basis for decisions or to see the quality of the reviews on which these decisions are based. When I’ve reviewed manuscripts and then seen what the other reviewers have written I’ve been surprised, and disheartened, to see how brief and superficial their comments have sometimes been.

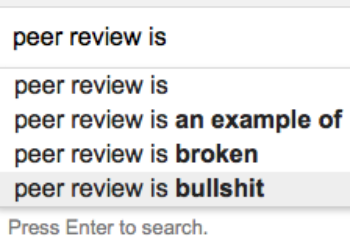

Trust has always been at the center of scholarly publication, but with the problem cases we’re seeing reported – Retraction Watch reports that more than 500 papers have been retracted because of fake peer review alone – confidence in peer review has taken a real knock. Opening up the process and enabling readers to see the content and quality of peer reviews will do a lot to help restore confidence.

Why can’t making reviewer reports and author responses available with articles become the norm?

Not only will this make things more transparent, it would be a great and simple way to address another big current problem – ‘predatory’ journals. Having peer-review reports alongside articles would go a long way to helping researchers distinguish between reputable journals and those that are predatory, questionable or carrying out inadequate peer review.

Elizabeth Moylan

To some, transparency is synonymous with ‘open.’ At BMC, open peer review began on the medical journals in the BMC series in 1999, and open peer review is now commonly accepted across the industry as an important aspect of open science. Open peer review facilitates accountability and recognition, and may help in training early career researchers about the peer review process. Over 70 of our journals across various disciplines operate fully open peer review where the content of the invited reviewer’s report and name are shared alongside the published article (as others do e.g., BMJ, F1000Research).

For me, the term ‘transparent peer review’ has the precise meaning that the content of a reviewer report is posted alongside a final published article but no information about the reviewer identity is provided. Sharing the report content goes some way to making the peer review process more transparent and various journals operate this system, e.g., EMBO and Nature Communications. Others also practice another form of transparency, sharing the reviewer names but not revealing report content (e.g., Frontiers and Nature).

Andrew Preston

Peer review has historically been performed in silos and behind closed doors. We have little insight into who is doing the review or what issues are discussed during the review process. It can sometimes even be hard to know for sure that review is actually happening (this is the predatory publishing problem)!

This results in all sorts of problems, which – as Irene says – can undermine our ability to trust published research. It is also incredibly inefficient. To give an example, researchers who aren’t recognized for their time are less likely to prioritize review. This is compounded because editors have no insight into reviewer workload, meaning they can often overload reviewers with multiple review requests. The net result is a system where it often takes 10 or more invitations to get a manuscript reviewed by two reviewers.

To me, transparency is about making sure that we are able to reveal the information necessary to bring a level of trust and efficiency to the peer review process. It doesn’t necessarily require open review (although that would be nice), but it does mean coordinating across the publisher divide in order to give the community an understanding of who is shouldering the workload, what they have on their plates right now, and exposing information about the expertise of reviewers and the quality of the review process. As an industry we should be able to do this in a way that results in better, faster review and vastly increases our trust in published research.

Carly Strasser

Science is trending towards more openness. Open science practices have been gaining momentum for the last few years, especially with the advent of new tools like Jupyter Notebooks, increased uptake of open source software, and new publishing models that promote open access to all aspects of research. The idea of transparent peer review is increasingly discussed as a way of pushing science further into the open. Instead of reviews being cloaked in secrecy and seen only by editors and authors, open peer review allows the reader to see how the science unfolded. It would allow junior scientists to better understand the process of peer review, would result in more useful and thoughtful reviews (since the reviewer knows her/his review will be read by many), and can spark conversations and collaborations that might not have otherwise occurred. It would also permit credit for reviewing, which is currently a service performed without incentives or rewards.

With many thanks to Shane Canning of F1000 for his help in organizing the panelists’ contributions to this post.

Discussion

26 Thoughts on "What Does Transparent Peer Review Mean and Why is it Important?"

As you note in your introduction, like every other aspect of scholarly communications these days, here we’re seeing the same sorts of variation in terminology. What do we call peer review where neither the reviews nor the reviewers’ identities are made public? What do we call it when the reviews are published with the paper, but anonymously? What do we call it when the reviewers are named but the reviews are not released? What do we call it when both the reviews and the reviewers’ names are made available? What do we then call peer review that takes place in public and online? Which of these is “open peer review”?

And the question of rejected articles is an important one. If, as some of the speakers above note, the reviews must be made public in order for peer reviewer credit to be granted, how do we handle rejections? Should a journal publish information on papers it did not accept? If you only get credit for papers that are accepted, does this then put pressure on reviewers to accept more papers in order to get payback for their efforts? Clearly we need to separate out the concepts of credit for peer review from the public availability of those reviews.

I agree with you that there needs to be a consistent terminology for describing what is meant by transparent or open review. It gets very confusing.

It is probably not feasible or appropriate to identify the reviewers or publish the reviews of rejected papers. Yes, that results in some loss of transparency but I think it would create more problems than it solves. As someone who has endured their share of rejections, I wouldn’t want the reviews published. The same goes for being named as a reviewer for manuscripts that are rejected. Scientific fields are relatively small communities and the author of a paper for which you wrote a critical review can easily end up being a reviewer for your next grant proposal.

There are better ways to ensure reviewers get the credit they deserve. Many journals publish a list of reviewers once a year. When I was an editor I would occasionally get requests by reviewers for a letter documenting the reviews they had done for the journal. Sending out a letter to each reviewer each year thanking them and documenting the number of reviews they completed would be far more useful to reviewers or at least those who are university faculty. That is something a faculty member can put in their review packet for their yearly evaluation or when they go up for promotion/tenure documenting their service to their profession.

As I said above, I think it’s worth separating out the issues of credit for peer review, and the question of the value of making peer reviews (and peer reviewer identity) publicly available. Both have their merits, but they are separable, and not dependent upon one another.

ORCID has some interesting ways of offering peer review credit without revealing the actual review (accepted or rejected). The idea is to have reviewers create an ORCID iD and include that in their sign-up to do the review. Then their ORCID page receives notification that this reviewer did a review for journal X on date Y. Simple enough, no need to get into the specifics of the paper or the review itself, and really all that’s needed for peer review credit. I wrote about this a few years back here:

https://scholarlykitchen.sspnet.org/2015/06/17/the-problems-with-credit-for-peer-review/

This is a very good starting point, David. There have been some efforts recently to understand and classify different levels of openness in the manuscript peer review process. As an example, have a look at this one:

https://f1000research.com/articles/6-588/v1

There is great variation in terminology – Tony Ross-Hellauer has looked at the different definitions of OPR and found 22 main ones, with seven core traits https://f1000research.com/articles/6-588/v1 – so it’s a good idea currently to define what one means when talking about OPR. Perhaps producing a concise taxonomy suitable for all disciplines and easily understood by all researchers could be an output of Peer Review Week?

In my contribution in the post I was only referring to transparency of reviews in the published literature. There is no question that reviewers of manuscripts that are rejected should get credit for reviewing – those papers are sometimes harder to review and take longer than the ones that are accepted – but the reviews don’t need to be published for that to happen. Editorial oversight of all reviews is important and crucial in making sure review quality isn’t being sacrificed in the pursuit of quantity.

I wonder if completely open peer review and rejections would impact the sheer volume of papers submitted to journals that follow this practice. If an author knows reviews will be open, as well as the paper itself, will more care be exercised to submit a better quality product? Any data on this, by chance?

I am confused about what the end goal is here. I am under the assumption that peer review is designed to improve the manuscripts. Sometimes the improvements lead to a publication. Sometimes the suggestions/requests are too difficult to rectify and the manuscript is withdrawn and a great deal of further work is done.

In the former case, the review is, ideally, a conversation among reviewers, editors, and authors. I can’t imagine a reason to publicly disclose the working versions prior to the final, polished product. In the latter case there is no public disclosure, which protects author, reviewer and editor from unwarranted scrutiny. The ideal peer review process again encourages the conversations so that authors fully understand the reasoning and have a route to appeal. The best organizations engender trust in their communities.

Even when appeals fail, authors can submit their work elsewhere. When they do, it is their ethical duty to disclose prior submission. They can (and perhaps should) choose to share reviewer comments with the new publication. But again, I don’t see where public disclosure would be a benefit.

There is the issue of credit to reviewers. Again this is a matter of ethics and trust. Editors scrutinize the review quality and should rate the reviews. I know our publication software has the reporting mechanism and our EICs are training editors to use this function. And there are mechanisms (Publons for example) for accumulating these ratings for use by the reviewer to validate activity for hiring, promotion and grant applications. I imagine mandatory reporting of review content would have a chilling effect on reviewers.

I suspect I am missing some key element of the argument for and the benefits of greater openness in the review process.

Re: “I can’t imagine a reason to publicly disclose the working versions prior to the final, polished product.”

You must never have had occasion to look at arXiv (arxiv.org). There are many cases readily findable within arXiv where authors have submitted multiple versions of the same general paper, with later versions edited to take into account comments received from other researchers who read and provided feedback to the author on earlier versions. It’s peer review without being called that. And I had at least one physics publisher representative tell me that many papers that appeared in arXiv prior to formal submission to one of their journals received fewer substantive comments from reviewers, presumably (according to the rep) because of the informal peer review that had already happened on one or more versions of the paper that had been posted in arXiv.

The definition question is interesting. I don’t actually believe there can or should be just one universally agreed definition of open peer review, any more than there is one universally agreed definition of, for example, open access or open science. Different communities (and individuals) have differing requirements and priorities and we need a range of peer review options – open and closed – to meet their needs. The key thing is that all forms of peer review should be transparent (i.e., in terms of the process), whether or not they’re also open in any form.

The problem comes though, when we try to talk to one another about these different options for peer review, access and science. If we’re not speaking a common language, and I think I’m saying one thing and you think it means something else entirely, then we run into trouble.

I agree. That’s the argument made in the Mellon white paper by Fitzpatrick & Santo. I’m skeptical of efforts to create rigid definitions, though for many that introduces some unease with a “new” concept such as this.

Totally agree that we need clarity David! Maybe a good outcome of Peer Review Week could be a set of proposed labels/definitions for community consideration? Maybe also a good question for the panel discussion…

To Andrew’s point about editors needing to know the workload of their reviewers – that sounds like a direction that Publons can move into with OPRSs to better track and identify them within the OPRS (ScholarOne, Editorial Manager). Those systems already do a good job showing activity within that journal, and since I think many publishers would be reticent to share reviewer activity from their journals, a third party like Publons may be able to present a workable solution that’s also accessible within the OPRS.

What about transparency of the methods/framework for peer review? That in and of itself is important. I would argue that is more important than the identities of the reviewers.

Actually revealing the identities of the reviewers publicly along with the contents of the review raises a few questions/issues.

What are the criteria used to identify the reviewer as a “peer” for a particular paper? As an author, more than once I have received open reviews but was never able to find publications by them in a closely related area. In those cases, the reviewer was another academic but not well acquainted with the research (as evidenced by the lack of quality in the review itself.)

Another question is that we (academics) do not receive “credit” for being a reviewer (workload or scholarship.) I would argue that most of us serve as reviewers when asked because we care, want to make a contribution, or want to learn. Having our name attached to reviews would be more lines to add up on a CV, but that would not necessarily have a favorable impact on quality because we all would be beating each other over the head to try to become reviewers.

Having something publicly posted with our name attached (whether positive or negative) carries some implications. This is especially important if (as someone seemed to suggest) information about rejected papers is also made available. That sort of thing would practically eliminate the ability of authors to re-submit a paper to a different journal. In the publish or perish model, that would create quite a churn when it caused more faculty to perish.

I will begin with a complaint that I have often made about TSK: with a vast majority of the postings here one would never know that books are also part of the scholarly communication process. Does the Peer Review Congress pay any attention at all to peer review as it applies to books?

That process is quite different in many ways from the process as it applies to journals. For one thing, the staff editor at every publishing house plays a major role in the peer-review process, choosing the reviewers, interpreting their reports to authors, making decisions about whether and when to offer a contract. The process is further complicated, at university presses at least, by the role played by faculty editorial boards. The dynamics between staff editors and these editorial boards have no counterpart in the world of journals. (Journal editorial boards are purely advisory and have no decision-making powers.) And then there is the factor of sales potential. No journal article is turned down because it won’t sell enough copies. That is not ever a consideration because individual articles do not have sales (well, at least initially, anyway). So the decisions made about books involve many more factors than those that go into deciding about the acceptance or rejection of journal articles. I am sorry if the Congress will be completely ignoring books.

“Journal editorial boards are purely advisory and have no decision-making powers.”

Ah, Sandy, another terminology/definition issue! Journal editorial boards come in all shapes and sizes and vary in what they do. At some journals the role is just advisory, but at others the responsibilities range from reviewing papers to making editorial decisions. Active, engaged and committed boards are one of the main defining features of successful journals. Of course, there are boards where the members do nothing but add their names, so transparency of editorial board role/s would be good.

Just to clarify, you’re saying that at some journals individual editorial board members can decide an article should be accepted or rejected without the journal’s editor being involved? That would seem extraordinary to me. If the journal editor always has to make the final decision, then my point stands, with the qualification that some editorial board members get actively involved in the review process.

Hi Sandy,

There are a lot of different ways that journals work. Some do indeed funnel everything through one Editor in Chief who makes all accept/reject decisions. Many have “deputy” or “associate” editors for particular subject areas who are well-established and trusted to make acceptance decisions. Other journals send things out to a “handling editor” who makes the decision. For example, PLOS ONE did not have an Editor in Chief until fairly recently, and decisions on manuscripts are made by the academic expert chosen to handle the paper:

http://journals.plos.org/plosone/s/editorial-and-peer-review-process#loc-editorial-process

Hi Sandy, the Peer Review Congress is fairly specific about its focus on scientific publishing (so primarily journal publishing), however, Peer Review Week is decidedly not just about journal publishing. So look out for posts on Scholarly Kitchen and elsewhere on many types of peer review, including books.

I considered it a matter of simple courtesy to give an author a clear explanation of the reasoning behind my EIC decision. The author got all the (anonymous) reviews and was free to disclose them and their own drafts as they saw fit.

As for reviewers, in thanking them for their work, I always explained my decision, especially if we didn’t accept all their good advice. Jonathan Foreman makes a good point that modern manuscript handling systems give an EIC lots of information about reviewer performance. Whenever a reviewer asked for a recommendation, I was quickly able to review all their past reviews and provide a detailed summary and evaluation. I noted to them, “If you did good work for us, you will like my recommendation.”

Transparency in peer review implies that the whole world can read the review of R. Grant Steen of a manuscript of Elizabeth Moylan at http://bmjopen.bmj.com/content/bmjopen/6/11/e012047.reviewer-comments.pdf

Transparency in peer review also implies that the whole world can read my review of a preprint of Elizabeth Moylan at https://forbetterscience.com/2017/03/29/cope-the-publishers-trojan-horse-calls-to-abolish-retractions/#comment-4922

Transparency implies that this comment will be posted.

Disclaimer: I am the author of http://bmjopen.bmj.com/content/6/11/e012047.responses#commentary-on-a-study-about-retraction-notices-in-journals-of-biomed-central (submitted version of the comment has been posted as comment alongside https://www.researchgate.net/publication/310778980 ).

I’d like to be contrarian here and simply state that I don’t want more transparency than is already offered by *some* journals. I certainly would never like to see referee reports published with my (or anyone else’s) papers.

As a reader, that’s just more sausage making than I can stomach and I write this as someone who in his daily life has to consume a lot of said sausages. As an author, I think that I would not particularly enjoy having some embarrassing mistake that was subsequently fixed published along with the paper itself. Journals might want to consider the possibility that authors will simply thank the reviewers and take their updated paper elsewhere just to avoid this (this is something I can easily see myself doing).

But do we really need to post the reviews? The problem with peer review, as stated in the original post, largely boils down to the old “Quis custodiet ipsos custodes?”. Why can’t the answer just be (again): “The reviewers.”? As simple as that.

Several journals that I have reviewed for communicate the journal decision back to me, the reviewer, when the decision has been taken and attach the decision letter and all reviews. I normally go through these (yes really!) to see things like whether other referees shared my views, if I missed something or other and so on. Mostly self-evaluation, but as a bonus, I also get a decent idea of the journals’ editorial processes and whether they seem trustworthy.

I would suggest that if journals were transparent to the reviewers in this way, then the journal editorial quality data could simply be extracted from them. A job for Publons perhaps?

Alice, thank you for this discussion. Perhaps we need to consider another element in peer review alongside that of ‘transparency’: ‘blinding’. Far too few journals blind authorship when sending out reviews to reviewers (who are themselves most often appropriately blinded).

Distributing the manuscripts to reviewers with authors identified (or even potentially identifying information about the authors included in the distributed manuscript) raises questions regarding the (im)partiality of the reviewers. While the number of science publications today is enormous, it still remains the case that the circles of those publishing in one particular area are often small. Reviewers are put in the difficult situation of knowingly commenting on colleagues or ‘opinion leaders’. Many reviewers may feel that their own ability to publish or even their livelihoods may be affected by an all too honest review.

Journal editors and (perhaps more so) journal publishers feel themselves pressured when ‘opinion leaders’ have their manuscripts called into question by reviews that buck the trend. The ‘conflict of interest’ argument is no excuse for not blinding authors when manuscripts are sent to reviewers.

Double-blinded peer review is a necessary complement to transparency if we want to promote honesty and integrity in peer review.

As more disciplines introduce and embrace preprint servers, it is becoming increasingly difficult to maintain author anonymity in double-blind reviewing. The post ‘Academic publishing death match: Double blind review vs. preprints’ gives a good account of the issues http://steamtraen.blogspot.co.uk/2017/01/academic-publishing-death-match-double.html

I absolutely agree. It is usually very easy to guess the author, not only from preprints but also from the material cited in the introduction and from the materials section (“The methodology is based on Anonymous et al. In short …”). Knowledge of the author is also important for checking conflicts of interest. For all these reasons, I strongly oppose double-blinded peer review and I find nothing transparent in it. In my opinion, the best transparency achievable is a fully open model similar to that of F1000 Research or some BMC journals.

Double-blind review is almost never used in the peer-review process for monographs, however.