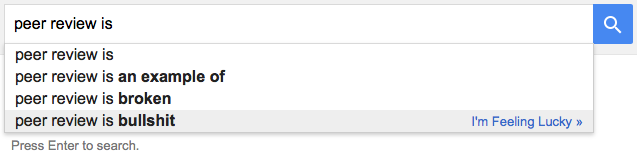

Like a lot of topics in academic publishing, peer review attracts strong opinions, denunciations, and prescriptions for change. Many of these diatribes are based on little more than personal anecdotes. Unfortunately, a dearth of evidence does not stop these opinions from dominating our discussions about scientific publishing. Just Google “peer review is” and you’ll see.

Recently, a group of French researchers took it upon themselves to test a widely voiced opinion that the peer review system is unsustainable — that the explosion of scientific papers has overwhelmed the community of scientists willing to review them.

Their paper, “The Global Burden of Journal Peer Review in the Biomedical Literature: Strong Imbalance in the Collective Enterprise”, was published November 10, 2016 in PLOS ONE.

Rather than focusing on the experiences of individual journals or surveying scientists for their opinions, the researchers attempted to model the system of peer review in the biomedical sciences to see whether there was a sufficient supply of reviewers to meet peer review demand.

Building such a model, however, required a number of assumptions, and not all of them appear, at face value, to be accurate. For example, the researchers assumed that 25% of manuscripts were desk-rejected (too low?), that 90% of peer-reviewed submissions go through a second round of peer review (too high?), and that 5% of papers are submitted just once, 10% are submitted twice, and 85% are submitted three times (too optimistic?).
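To see how assumptions like these compound, here is a rough back-of-the-envelope sketch in Python. It is not the PLOS ONE authors' model: it simply takes the three figures quoted above, treats every submission attempt independently, and adds a hypothetical assumption of two reviewers per round, which does not come from the paper.

```python
# Back-of-the-envelope sketch, NOT the PLOS ONE authors' model.
# Assumptions:
#   - DESK_REJECT_RATE, SECOND_ROUND_RATE and SUBMISSION_COUNTS are the
#     figures quoted in this post;
#   - REVIEWERS_PER_ROUND = 2 is a hypothetical value chosen for illustration;
#   - every submission attempt is treated as independent of the others.

DESK_REJECT_RATE = 0.25      # share of submissions rejected without review
SECOND_ROUND_RATE = 0.90     # share of reviewed submissions that get a 2nd round
REVIEWERS_PER_ROUND = 2      # hypothetical, not from the paper

# Probability that a manuscript is submitted n times in total.
SUBMISSION_COUNTS = {1: 0.05, 2: 0.10, 3: 0.85}


def reviews_per_submission(desk_reject, second_round, reviewers):
    """Expected number of review reports generated by one submission attempt."""
    reviewed = 1.0 - desk_reject          # chance the submission goes out for review
    rounds = 1.0 + second_round           # expected rounds, given it is reviewed
    return reviewed * rounds * reviewers


def expected_submissions(counts):
    """Expected number of times a manuscript is submitted."""
    return sum(n * p for n, p in counts.items())


def reviews_per_manuscript():
    """Expected review reports per manuscript across all its submissions."""
    return expected_submissions(SUBMISSION_COUNTS) * reviews_per_submission(
        DESK_REJECT_RATE, SECOND_ROUND_RATE, REVIEWERS_PER_ROUND
    )


if __name__ == "__main__":
    print(f"submissions per manuscript: {expected_submissions(SUBMISSION_COUNTS):.2f}")
    print(f"reviews per submission:     "
          f"{reviews_per_submission(DESK_REJECT_RATE, SECOND_ROUND_RATE, REVIEWERS_PER_ROUND):.3f}")
    print(f"reviews per manuscript:     {reviews_per_manuscript():.2f}")
```

Under these inputs a manuscript is submitted 2.8 times on average and each submission generates about 2.85 review reports, or roughly eight reports per manuscript. Because every input enters the total multiplicatively, a modest error in any one of them moves the bottom line noticeably, which is exactly why sensitivity analyses matter for a model like this.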

Nevertheless, the researchers were not married to these assumptions and performed 25 sensitivity analyses, varying these and other assumptions. In all but the most stringent scenarios, there was a sufficient supply of peer review to meet demand. They write:

From 1990 to 2015, the demand for reviews and reviewers was always lower than the supply […] In fact, the supply [of reviewers] exceeded the demand by 249%, 234%, 64% and 15%, depending on the scenario. The peer-review system in its current state seems to absorb the peer-review demand and be sustainable in terms of volume.

Logically, these results should make complete sense: a growing population of authors should theoretically translate into a growing population of reviewers. In practice, this does not always happen.

In 2013, Elsevier reported that some countries, like the United States, were doing proportionally more reviews than other countries, like China. This imbalance also operates at the micro level: some scientists simply do much more reviewing than others. The authors of the PLOS ONE study refer to these scientists as “peer review heroes” and warn that this group “may be overworked, with risk of downgraded [sic] peer review standards.”

While this paper adds more empirical evidence to suggest that peer review is not suffering a sustainability crisis, it perpetuates an unsupported belief that quality may be at stake. Further, it treats peer review as a burden, a necessary chore that provides little benefit to the reviewer, rather than as part of the scholarly communication process. The title of their paper, “The Global Burden of Journal Peer Review in the Biomedical Literature: Strong Imbalance in the Collective Enterprise,” implies a sense of great unfairness and inequality in the publication process, a view adopted in this Vox article.

Peer review is largely based on a voluntary labor market and, in such markets, work is rarely distributed evenly among its members. Some volunteers contribute more because they have more time, more aptitude, or take more pleasure in contributing. Peer review should be no different.

I also question the unstated assumption that an insufficient supply of competent reviewers means that the peer review system is broken. In a voluntary system, reviewers get to be selective in what they choose to review. While a researcher may be willing to review a relevant, well-written paper presenting novel results, it may be much harder to find someone willing to review a poorly composed paper presenting negative results. Under such conditions, it may be more efficient for an editor to leave the volunteer market and depend upon a commercial peer review alternative, like Rubriq, or to give up entirely.

Scientists find the time to review good papers, especially when they are relevant to their own work and sent to them by someone they respect. For these papers, an editor can demand reviews within days (sometimes within hours). At the other end of the spectrum, an editor may spend months finding a volunteer willing to review a paper or, in desperation, assign themselves to the role of reviewer. The selectivity of editors and the willingness of volunteer reviewers should be considered not flaws of the system but features, speeding up the publication of some manuscripts while delaying others. While authors may not consider such preferential treatment fair, it may ultimately be beneficial to science.

Discussion

8 Thoughts on "Are Journals Lacking for Reviewers?"

Just a small correction about the number of times papers were resubmitted. The percentages mentioned in this post were given only as an example inside the article to better explain the equations. The data we actually used come from an Elsevier survey (15% are submitted once, 47% twice, 23% three times, etc.) and are in the accompanying Excel file.

Just a quick comment on the Google search you performed: “Google’s suggestions may contain things you’ve searched for before, if you make use of Google’s web history feature.” See here: http://searchengineland.com/how-google-instant-autocomplete-suggestions-work-62592

Hence, not everyone gets the same suggestions as you. In fact, I get “peer review is essential to the scientific process because”. So, using Google is problematic. That being said I totally agree with you that peer review nowadays is not without problems. (Per Carlbring, EIC of: http://www.CognBehavTher.com )

I either make sure I’m signed out of Google or use private browsing mode when trying to get a sense of what Google’s default search results are for any particular query (actually, I try to avoid using Google wherever I can in favor of search engines that don’t track your behavior). When I do that search, I get peer review is 1) “an example of”, 2) “an example of quizlet”, and 3) “bullshit”. Quizlet, if you’re wondering, appears to be a VC-backed education company.

It also seems a good time to post a link to this recent article on Google and how those search suggestions are so readily being gamed:

https://www.theguardian.com/technology/2016/dec/04/google-democracy-truth-internet-search-facebook

Great post. Thanks, Phil. Finding qualified reviewers can be a challenge. But this challenge is compounded when editorial offices make no effort to expand their reviewer pool or fail to keep detailed, up-to-date records of each reviewer and their respective areas of expertise. Several journals are experimenting with apprenticeship programs to prepare young scholars for the job of reviewing. Others are conducting audits of their reviewer pool and adding more detail to reviewer records. These steps give editorial offices more choice of reviewers, reduce the number of requests to review, and ensure higher-quality reviews. Peer review IS sustainable, but only if journals make an effort to realize efficiencies.

Absolutely! This paper only models the number of manuscripts, reviewers, and their time. There are many factors that reduce the pool of available reviewers, such as:

1) Being able to identify relevant reviewers

2) Being able to contact these reviewers

3) Getting a response from these reviewers within a fixed window of time

4) Competing with other editors who are contacting the same reviewer pool

5) Competing with other professional obligations of the reviewer

When a reviewer declines a review, or states that s/he is “too busy,” this should be interpreted as “I’m too busy to review THIS manuscript” or “reviewing THIS manuscript is not important enough to bump other tasks lower in my priority list.” When editors or publishers complain of a “crisis in peer review,” they are essentially blaming others for some of their own internal problems.

The peer review burden looks more unbalanced than it really is because a substantial fraction of the apparent review shirkers are contributing to peer review as journal editors. These people tend to get a lot of review requests and decline most of them, largely because they’re involved with the review process of so many other articles already.

Yes, identifying the right reviewer for a research article is a real challenge nowadays. It is all the more so because numerous journals have appeared in recent years and the topics they cover have become increasingly varied.

Furthermore, journal editors often forget to acknowledge reviewers’ contributions in their publications. Reviewers should also receive free subscriptions to the journal. This could be a motivating factor for new researchers to become reviewers. Thanks, Prof. (Dr.) Niloy Sarkar