This post, which was co-authored by Alice Meadows and Karin Wulf, is the first in a series celebrating this year’s Peer Review Week, which they have also co-edited.

The theme of this year’s Peer Review Week is “transparency.” Among the many planned global activities will be blog posts, both here on The Scholarly Kitchen and elsewhere, webinars and other events, and of course sessions at the Peer Review Congress on aspects of transparency, as well as a special Peer Review Week panel, Under the Microscope: Transparency in Peer Review.

To start things off on the Kitchen, we wanted to go big and be transparent about the values and general principles of peer review. The foundation of modern scholarly practice, across disciplines and rooted in the Enlightenment, is the process of testing assertions, followed by critical exchange among experts, and then more testing. But what is asserted, how it’s asserted, and who constitutes the community of expertise have always been up for grabs. Distortions of evidence, actual conspiracies and conspiracy theories, and the attractiveness of some theories over others have historically been rooted in factors including new media forms. In the nineteenth century it was cheap print, in the twentieth century television, and in the twenty-first century it is digital media that makes evaluating information and its deployment so challenging.

In the mainstream news, here at The Scholarly Kitchen, and on other blogs, there have been lots of hot (and some not so hot) takes in recent months on the challenge of information profusion, manipulated material, and distorted narratives. When we start to consider what constitutes reliable information and how we know what we know, the spectrum is vast: it runs from image and video manipulation to social media bots to widely differential news emphases to retracted journal publications. Yet if we return to the basic impetus for Enlightenment inquiry, we might find that it is not certainty we seek (or even want), but confidence in the methods and process of discernment, both of which are deliberately lacking in fake news.

Peer review is a mechanism for critical discernment. It’s a vital element of how knowledge, in all its forms, is created through evidence and argument. The framework of alternative facts, (in)famously defined by Kellyanne Conway as “additional facts and alternative information,” suggests that knowledge is a matter of opinion. Alternative facts, however, cannot withstand scrutiny, whereas knowledge actually thrives on it. Peer review, at its best, is one of the most rigorous forms of scrutiny available to us, and is thus key to the production of knowledge.

Of course, neither the process nor the methods of peer review are perfect. But what is arguably perfect is the intent of peer review. It exists specifically to try to ensure that, before being accepted, a paper (or book, conference proposal, grant application, etc.) has been thoroughly reviewed, typically by at least two peers who are experts in the field. Peer reviewers are explicitly responsible for evaluating the merit of the work they’ve been assigned, according to the reviewing organization’s criteria. Per the Council of Science Editors’ guidance, modestly adapted here to include all scholarly disciplines, this includes:

- Providing written, unbiased feedback in a timely manner on the scholarly merits and the value of the work, together with the documented basis for the reviewer’s assertions

- Indicating whether the writing is clear, concise, and relevant and rating the work’s composition, accuracy, originality, and significance for the journal’s readers

- Maintaining the confidentiality of the review process: not sharing, discussing with third parties, or disclosing information from the reviewed work

- Providing a thoughtful, fair, constructive, and informative critique of the submitted work, which may include supplementary material provided to the journal by the author

- Determining the merit, originality, and scope of the work; indicating ways to improve it; and recommending acceptance or rejection using whatever rating scale the editor or other selection group deems most useful

- Noting any ethical concerns, such as any violation of accepted norms of ethical treatment of animal or human subjects or substantial similarity between the reviewed manuscript and any work concurrently submitted elsewhere, which may be known to the reviewer

Peer review is vulnerable to cultural and other biases, as is any human endeavor, and we take that seriously. But its purpose of subjecting research to scrutiny, identifying possible errors or concerns, and providing this feedback to the authors in such a way that they can use it to materially improve their work, reflects core values. This process may be undertaken privately, as in the case of blinded peer review, or, to a greater or lesser degree, openly, for example through publication of the review report itself. In some cases, especially in scientific disciplines, authors may also be required to provide access to the underlying data and/or proof of replicability.

Irrespective of the form of review, providing transparent (i.e., clear, accurate, and easy-to-find) information on their peer review process should be a no-brainer for any journal or organization. This could range from something as simple as a complete description of how they do peer review to, for example, banning confidential remarks to the editor in favor of a collaborative and consensual discussion between reviewers that ensures the author understands the rationale behind the decision (see, for example, eLife). There is certainly some evidence to suggest that a more transparent process is both wanted and needed by researchers. For example, 82% of respondents to PRE’s 2016 survey want to know if an article has been screened for plagiarism, 81% want to know the number of reviewers, and 74% want information about the peer review method. The same survey showed very strong support for more transparency around publisher policies: 97% agreed (84% strongly agreed) that peer review policies should be freely available; 93% agreed (71% strongly) that journals should provide a description of their peer review process; and 95% agreed (83% strongly) that publication ethics policies should be freely available.

While common-sense steps can provide transparency in peer review, and clarity in other scholarly contexts such as the research process (including sources and data), it is just as important to be transparent about the values inherent in peer review. As an iterative process, peer review relies on a mutual dedication to knowledge on the part of reviewer and author, and on the communal intellectual labor, including that of editors, that makes knowledge creation possible. In other words, peer review is emblematic of broader values and collaborative practices associated not only with scholarship specifically but also with the production and circulation of knowledge more generally. Good professional journalism, for example, relies on a set of principles that emphasizes the use of sound evidence and fact-checking. What peer review and similar practices threaten is opinion-based assertions that purport to be facts, as well as ideological inflexibility.

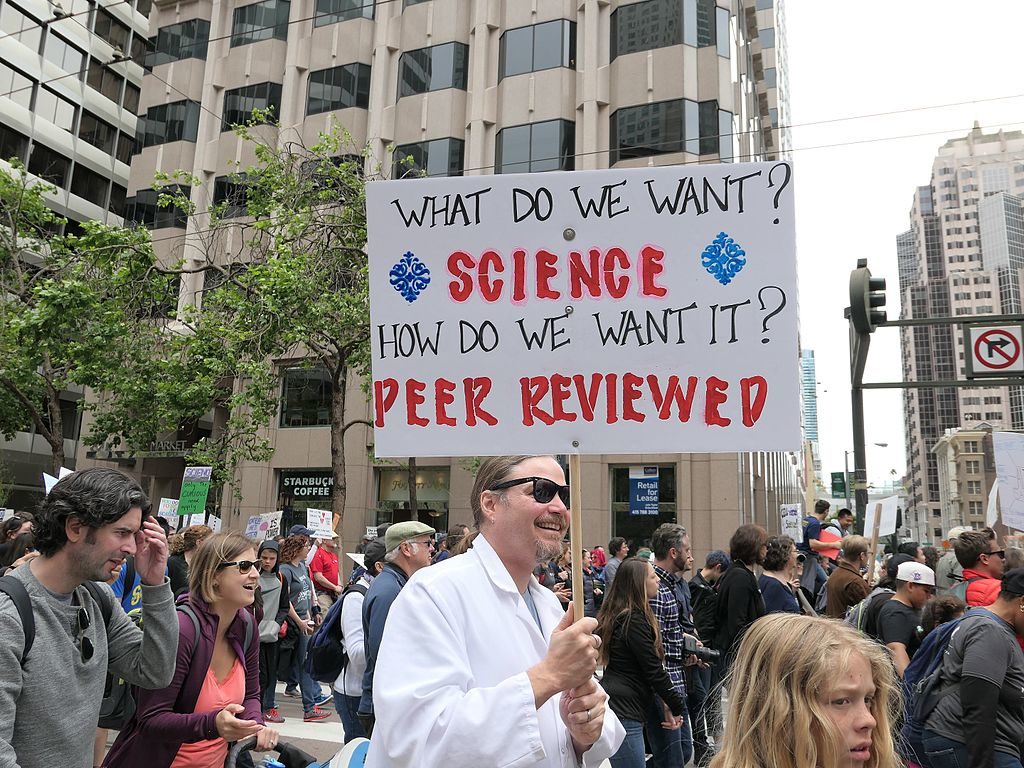

As we’ve argued here, peer review is big. It touches us all, not just as authors or editors or publishers, but as readers and consumers of peer-reviewed scholarship in its immediate and derivative forms. When the March for Science featured signs proclaiming the value of peer review, it might have seemed lighthearted to some. But “What do we want? Science! How do we want it? Peer Reviewed!” is in fact an assertion of core values embodied in a practice essential to our ongoing striving for knowledge.

Other posts this week on the Kitchen for Peer Review Week will consider the challenges of reviewing digital scholarship, self-citation, and the past, present, and future of peer review, and we will end the week with a report from the Peer Review Congress (taking place September 10-12). We hope you’ll read and engage with these important issues throughout the week.

Discussion

5 Thoughts on "Peer Review in a World of “Alternative Facts”"

SLAP-DASH EDITORS?

Yes, “providing this feedback to the authors in such a way that they can use it to materially improve their work, reflects core values.” Perhaps it is the burden of editorial work, but too often I have heard from authors whose work I have reviewed (always politely) that they either never received the review or received only a doctored editorial summary (and I do not routinely check with authors). When one expends considerable effort in preparing a judicious and helpful review, one at the very least expects it to be communicated to the author! So let us have an explicit statement from editors, when soliciting a review, that it will be fully communicated to the author (unless the language is grossly intemperate).

Fact-checking, while obviously important, does not seem to me to be the core of what peer review is supposed to do. All of the facts in an article or book can be correct, yet the work as a whole deemed unimportant. Methodology, use of evidence properly to support an argument (i.e., basic logic), originality and scope of the interpretation offered, etc., all seem to weigh heavily in peer review.

But there is also a more fundamental question about what a fact really is. Readers of Pierre Duhem and W.V.O. Quine will know that the line between theory (interpretation) and observation (fact) is not fixed, and that where one makes changes to bring them into alignment is variable.

The term “alternative facts” continues to get undeserved derision despite being respected jargon taught in quality law schools like the one Kellyanne Conway graduated from. Now the term is needlessly useless for political reasons.

I thought a post noting that “a more transparent process is both wanted and needed by researchers” might explore transparent peer review processes more. While there are fully open peer review processes, such as those used by PeerJ, eLife, and F1000, I expect many authors and reviewers might not embrace the idea of their reviews living online forever, and might avoid strong criticisms. Yet a fully transparent peer review process that maintains anonymity for reviewers is an attractive alternative that opens up the substance with less risk of personalizing the process.

As used at EMBO, in this approach the original version, anonymous reviews with author responses, and decision letters are consolidated into a supplemental online file called “Process.” Authors can opt out, but the default is to publish the process file, and opt-outs appear to be infrequent. Having transparent, anonymized peer review records would probably be most useful to prospective authors shopping for a home for their work, who wish to assure themselves that their work will receive proper peer review before entrusting it to a journal.

To me, this model of transparent peer review has much promise. It is also simple: authors routinely compile the review responses and their revisions anyway, so it shouldn’t be a lot of extra work for authors or editorial staff.

Pulverer, B. 2010. Transparency showcases strength of peer review. Nature. 468(7320): 29-31. http://dx.doi.org/10.1038/468029a

Transparency allows one to look at what reviewers have said, and as a peer reviewer I have found it useful to see what other reviewers of the same work have said. Should there be consensus among reviewers? Not necessarily, because each reviewer is chosen for their expertise in substance or method, which introduces multiple perspectives or paradigms into the process. Consensus among blind reviewers is worth noting, however, as an indicator of convergence on the clarity with which authors have communicated and on the value or usability of the work.

Before the peer review process starts, the editor’s office or publisher’s team should assess the veracity and authenticity of the work as thoroughly as possible, and there is software to help with this, for example for detecting plagiarism. More software could be developed to check source material in key references, for example. The peer reviewer, who is not specifically a “fact checker,” as has been previously mentioned, looks more carefully at the coherence of facts and the significance of the knowledge generated. Peer reviewers are given criteria or questions to answer regarding appropriateness for the given journal, ethical considerations of the work, quality of writing, and accuracy of content. Those questions/criteria should be published and made available to authors. This makes transparent the way the work will be critiqued. Included among the questions used by reviewers should be an epistemological one, such as “How strong are the evidence and arguments supporting the major conclusions or assertions in the article?”

Qualitative research presents unique epistemological challenges, since many consider its findings to be more subjectively affected by researcher interpretation. As a reviewer, I would prefer to see a frank discussion by authors of how the knowledge generated should be weighed, instead of a statement that member checking of participants was done, for example, or a simple list of the types of rigor criteria used, such as confirmability. More is usually needed on *how* the knowledge generated was seen as confirmed. Transferability, rather than generalizability, is the goal for qualitative knowledge development. There needs to be a clear statement, more than a sentence, regarding the contexts and conditions under which the knowledge is transferable. This supports the usability of the work, and hence its scientific value. Directions to prospective authors should make this an expectation.

Peer review often fits into an iterative process involving revisions and re-review. A secure electronic system could potentially turn this into a dialogue between authors and reviewers. Authors often do not understand what reviewers “want” or mean in their reviews, and there is sometimes disagreement on points where changes are expected. And truly this exchange is a collaboration that may improve the work. Often it is a matter of the reviewer pointing to bodies of relevant literature that authors are not including, which could add substantially to the work. Not to burden reviewers, but a limited opportunity for exchange and clarification could be built into publisher websites.

Alternative facts may be an accepted legal term, but Conway was not using it in the legal sense. Peer reviewers do need to question “facts” given the context and purposes for which the knowledge is to be used, but they are less well positioned to verify the facts than to evaluate interpretations of them. Authors should provide alternative explanations of their findings, allowing these to be weighed against the chosen interpretation.

Finally, reviewers must be vigilant about the logical basis for the arguments provided in the manuscript. There need not be a citation for every point, nor does that ensure veracity. But the findings and conclusions must make sense and be comprehensive.

Journal editors should consider some way of assembling their group of peer reviewers, perhaps in an online forum, to help new reviewers learn the process from more experienced reviewers. Problems could be posed, and responses given, in an anonymous blog.