Last year at this time we asked the Chefs: What is the future of peer review?

In anticipation of the third annual Peer Review Week, we’ve asked the Chefs: Should peer review change?

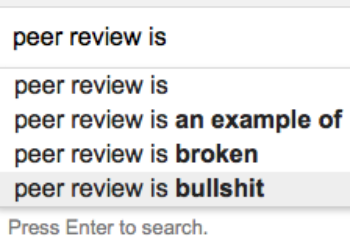

Kent Anderson: I don’t think peer review is one thing. It is very different in every case it’s implemented, and is always changing. The caricature of peer review in our industry is both sad and funny — it’s as if everyone knows what it is, and we have many people speaking about it as if it is one unitary process that everyone has standardized. It’s almost as if, because we can measure inputs and outputs, some of us have inferred that everything that goes on in between is the same. This belief typically comes from people who have never really been close to multiple peer review processes. When you look more closely, you realize that nothing happens the same way almost ever, and certainly not usually between journals in different fields. In fact, I have never seen a peer review process that is the same for any two journals, and it’s even hard to find a process that is the same for any two papers, given the differences in reviewers, editors, content, conflicts, timeframes, and so forth. There is often a messy bit that counting steps and mapping flows won’t capture, as well. So, given that there is no single thing called “peer review” that anyone can describe with anything approaching verisimilitude to reality, yes, it should and does and will always change.

Should it be single-blind or double-blind? Should you have two or three reviewers? Should you have disclosures that follow the paper or are held by the editors? Should reviewer names be shared, published, or both? Should databases be included? Should there be a formal reviewer rewards program? Should reviewers get CE credits? Do you use statistical reviewers? Do you use technical reviewers? Do you have ethics review?

I think some new options are becoming available, including manuscript annotation and automated statistical review. Whether and how these are used, at what stage in the process, by whom, and to what end will all be subject to discipline, editorial, and journal standards and practices.

I think one great change would be broader support and respect for the peer review process, which means giving it time to be done well, however a journal has chosen to implement it. There is such a demand for speedy publication that reviewers are pressed to turn things around quickly and to inflate grades in the current environment. Cascading portfolios also contribute to the “what the hell, I’ll accept it because it’s just going to go to Journal B here anyhow” attitudes. As long as the business of publishing is about speed and efficiency and not focused on value and finer filtering, peer review will be viewed as a commodity process. The portrayal of it as a singular process contributes to this perception. Unless we respect the bespoke nature of interacting with new research findings, peer review will become more and more like the Lucille Ball skit involving chocolates on the conveyor belt. And that’s a vision of peer review we should work to avoid.

Joe Esposito: Questions like this always make me grumpy, as they imply a situation that simply does not exist in the real world. There is no Commissar of Scholarly Communications; there is no big “system” of how scholarly publishing is conducted. So to ask if peer review should change is the wrong question. The better question is: If you don’t like some aspect of peer review (or anything else), what are you doing to change it?

Change mostly happens from the bottom up. Top-down change is rare, and even more rarely is it effective. Change happens when one person forces things to happen, occasionally (but in a minority of times) in a partnership with 2-3 other people. The “community” is not the source of change; the community is the target of the change agents. We have to stop pretending that consensus takes place before the fact, when in fact consensus is acquiescence in the face of established fact.

Alison Mudditt: Yes. While peer review provides a valuable quality sieve for the overwhelming volume of manuscripts submitted for publication, in its current form it has become a costly and distorted barrier to publication. In the short term, I see three key challenges:

- Recognition: We need better mechanisms to recognize and reward those who invest time and effort in constructive reviews. This likely requires greater transparency in review, although there is lively debate about how to do this in a way that avoids potential pitfalls (such as the introduction of unconscious biases for reviewers who have been positive about your papers).

- Portability: We need portable peer review to reduce the collective burden on the community. Again, implementation models are difficult to agree on because individual journals have such different requirements. One solution might be to develop a common core for reviews to cover the rigor of experimental design, execution, analysis and reporting to make at least part of a review more reusable from one journal to the next.

- Scope: With increasing multi-disciplinarity and big data, it is becoming more and more difficult to peer review and it may no longer be realistic to consider that peer reviewers can review everything.

These challenges, and particularly the second two, support the case for greater decoupling of publication and evaluation. In future, perhaps we need to define what peer reviewers look at prior to publication and what experts in different aspects of the paper need to look at post publication. Arguably, this need will be further driven as preprints continue to mushroom. This is an important new service for working scientists, but one that requires us to rethink how much and what type of evaluation serves the community well at different stages of the communication cycle.

Rick Anderson: On a fundamental level, my answer is no — I think peer review is a fundamentally sound notion and, when it’s done the way it’s supposed to be, I think it works quite well. The problems I see with peer review today strike me as mostly problems of implementation, not problems with the structure or philosophy of peer review. There are debates about whether peer review should be closed or open, but I don’t see why they have to be one or the other; different journals can carry out peer review in whatever ways make sense to them. Sometimes peer reviewers are slow, or unresponsive, or irresponsible, but that no more represents a critique of the system than a poorly-built house represents a critique of lumber. So while I don’t have any particular opposition to the idea of changing peer review, I would need to know what problem is being solved by any particular change before deciding whether I could support it. The idea of reform for the sake of reform leaves me cold.

Alice Meadows: The short answer to this question is yes. Just as the research process should and does continue to evolve, so should peer review evolve — as a key element of that process — to meet the changing needs of the research community and of society more broadly. And of course it is already changing. Peer review as we know it today is relatively recent — until the 1950s it was conducted primarily by journal editors rather than by independent “peers” as it is now. In recent years, after decades of reliance on single or double blind review, we have seen the rise of open peer review in some disciplines. And the use of artificial intelligence in peer review could be the next big thing.

The main issue from my perspective is that peer review must continue to meet the needs of the research community and, since that community is diverse, there can’t be a one size fits all solution. So I expect (and hope!) that there will continue to be many ‘flavors’ of peer review. However, the function of peer review is the same across all research communities, namely to evaluate and improve research by harnessing the community’s expertise, in whatever shape or form that may take in future. So while the process of peer review should and will change, as it always has done, the overall goal should and will stay the same.

Michael Clarke: My views on whether peer review should change aside, that particular horse has already left the barn. Peer review is presently in a state of fecund experimentation and roiling debate, much of which will be on display at the Eighth International Congress on Peer Review and Scientific Publication, to be held in Chicago next week. Variations on the traditional peer review process are bountiful. There are journals that practice double-blind peer review and others that practice open peer review and just about every variation in between these two poles. Some journals, such as eLife, work towards a consensus decision among peer reviewers. F1000 practices post-publication peer review where articles are published and then reviewers invited to comment on them. Cell Press has recently been publishing articles that are still under review via an initiative they call “Cell Sneak Peeks.” Recently there have been initiatives to not only review papers in advance of publication, but to have organizations other than the publisher manage this review process (“extra-journal” peer review). These have included Rubriq, Axios Review, and Peerage of Science. Of these, only Peerage of Science remains active, which says something about the willingness of organizations to take risks and fail in this area. Another notable venture is Publons, a product designed to give reviewers more credit for the reviews they write. Beyond all this there are a growing number of editorial cascades and transfer systems, allowing papers to flow more easily between journals and even preprint archives. And to surface some of this variation there is PRE, from AAAS, which is a product designed to make the peer review process more transparent by showing, at the article level, details about the review process. There is a great deal more happening beyond what I have touched on here – this is just a sampling of the dynamic world of peer review in 2017.

Angela Cochran: Before I jump in to whether peer review should change, I should say that I think peer review is important and valued by the vast majority of researchers—both authors and readers. Is it perfect? Nope, but it’s actually not bad. Should it change? Yeah, I think we could make it better.

I would like for the process to be easier for reviewers and editors. They should be able to read a paper on a mobile device, pop out to linked sources, and easily provide comments no matter where they are located. We are still working in a system that largely depends on downloading PDFs and logging in to clunky systems to perform a review. We need to insist on making this easier.

Peer review fraud (mostly involving fake reviewer emails supplied by authors or service providers) needs to be addressed in a comprehensive way. So many papers have been retracted that a more sophisticated way to detect this needs to be implemented. Many editors like author-suggested reviewers, as they may surface new people who have not yet been “tested” or tried.

Speaking of the reviewer pool, journals and the editorial boards should make an effort to diversify the pool of reviewers. Most evidence shows that peer reviewers are still mostly male researchers from the US and Europe. Yet the growth in submissions comes from other parts of the world. Care must be given to diversifying the voice of the reviewer pool and by extension the editorial boards.

Lastly, I personally believe that more transparency in the process is a good thing, but I do not advocate for forcing this onto communities that are not yet ready to adopt this method.

Judy Luther: Yes. While our current model of peer review is considered an indicator of a quality journal, the time required for the typical review process remains a bottleneck for many journals. Advocates vouch that it is worth the wait and critics claim that it slows the progress of science. Although we have been able to shorten production cycles, we are still challenged in trying to compress the human element of peer review.

Even though we do not lack for innovative options such as post publication peer review, paying reviewers, recognizing reviewers, no single solution has emerged. Part of the appeal of mega-journals and preprint servers, in addition to being open to anyone to read, is rapid publication. These databases of articles screen for scientific validity and remove the burden most journals face of also screening for relevance.

Peer review as we know it today became widely established following the boom in funding research after WWII. The journal Nature adopted formal peer review in 1967 and the Lancet in 1976. During this print era, peer review not only served to provide the author a private critique, it also served to limit the volume of content that would be mailed. Our digital environment does not have these same cost constraints for distribution, and timeliness in a global research environment is increasingly important to funders and therefore to researchers. With all the pressures on the sustainability of the current peer review system, can we afford not to change?

Ann Michael: In my opinion there is no process so perfect it cannot be improved. There are also varying objectives for peer review, which may be fundamental to difficulties in teasing out what is the right level and criteria for peer review for a specialty area, a journal, or a submission type. But out of all of the entries above, the sentence that stands out the most to me is Alison Mudditt’s comment on scope.

Regardless of how one feels about all the options and experiments that Michael Clarke deftly enumerated, volume and scope continue to increase. How can peer review keep up and continue to add value to scientific and scholarly discovery as well as the global community at large? As I said in last year’s peer review post, I do think we’re going to have to get creative.

So, what do you think? Should peer review change?

Discussion

15 Thoughts on "Ask The Chefs: Should Peer Review Change?"

Once again, discussion proceeds as though journal publication is the only kind of publication that involves peer review. What about reviewing monographs? Are the procedures of peer review the same for books and articles? Should they be the same? Do proposals for change apply equally to book and journal reviews?

There is a lot of talk about rewarding reviewers. How about actually making peer review a mandatory procedure by, for example, requiring every candidate for tenure and promotion to carry out a certain number of reviews each year? Why should the system depend just on volunteer labor?

To play devil’s advocate, I’ll channel many of my more cynical biomed researcher colleagues, who continue to tell me that all tenure and promotion decisions are based around the single question, “how much money did you bring in?” How much grant money did you bring in, how many paying patients did you see, how many paying students did you teach, what did you publish (as your papers are likely to help bring in grant money)?

If the institution’s main concerns are around professors being a source of capital, then are they likely to require an activity that does not bring in money and that takes time away from money-making activities?

Colleagues may be interested in a system of peer review not mentioned here. Here the editor established a panel of some 600 volunteer peer reviewers. Members of the panel were sent at regular intervals abstracts of the new submissions. Members of the panel then chose whether or not they wished to review one (or more). For an evaluation of this system see Hartley, J. et al. (2016). Peer choice – does reviewer self selection work? Learned Publishing, 29, 1, 27-29 DOI: 10.1002/leap.1010

An outgoing student of mine has made the brave decision to explore the “failures” of peer review in their current dissertation. Among common complaints and calls for change is that peer review is subjective, results are poorly verified, competing interests remain undeclared or that reviewers and editors succumb to inherent bias. I am not convinced that being compelled towards greater visibility or openness of peer review (and certainly not speed) changes any of these things. Similarly I’m not convinced by what could sometimes be seen as argumentum ad populum regarding open peer review (or no peer review as many a young researcher has been known to yell at my frustrated editors during annual conferences from about 2011 onwards). Changing implementation is certainly the question, as pointed out by some of the chefs, not a changing of the precepts of peer review. What concerns me is maintaining on the ground a baseline for quality and accountability, having adequate processes to facilitate this, and willingness to respond appropriately where things go awry. By necessity this will vary by publication, editor and audience (throw in publisher to boot). Scope might be considered the ever increasingly difficult challenge for publishers if we consider volume (exacerbated by the multidisciplinary) but for researchers and other readers who don’t necessarily care about the publisher’s challenge of volume and simply want to be able to trust a baseline of academic rigour, depth of peer review remains a constant. So we have to look at the pressure points and ask what are we trying to change? Peer review isn’t some singular act that sits solely with the publisher and ends prior to publication. It never was.

Re: Alison’s point about scope (and even, really, portability), would you see value in a taxonomic system for peer reviewers? Something like CRediT, but which would identify and classify the qualifications for reviewers, which could then be built into the process itself? For instance, Researcher X could have a “specialist” tag, while Researcher Y has a “generalist” tag, and finally Researcher Z has a “statistical” tag. Specific steps in an OPRS can then be built out for each user tag, if applicable, and reviews can be more easily portable once classified.

One of the most time-consuming activities for our Managing Editors is the constant pruning and editing of the reviewer database. These very quickly become outdated as researchers move to new positions and their research leads them into new fields.

Hmm, maybe there’s a role for ORCID here.

I think that it’s a great idea and it will be very useful for the editors. I also agree that it is a very time-consuming process if done manually. But we have the technology to automate this process.

I agree, David, but a submission system that searches a publication database, with the support of ORCID for disambiguation, to keep a reviewer’s expertise up to date and then searchable–or better yet, an automatic matching algorithm that provides a list of people that the editor can choose from, would be magic. I can’t for the life of me figure out why this does not exist.

I think that’s an interesting idea, and one with potential especially as the “object” of review evolves with the growth of preprints. Post-publication review has not really taken off successfully but arguably, a preprint is far more appropriate for this kind of community review, as arXiv has demonstrated for many years. I could imagine a much more focused set of targeted reviews at this point of the process in the future.

What I seldom see mentioned in discussions of peer-review is perhaps the most important component of the process: editing. The editors who identify and recruit qualified reviewers; who adjudicate and synthesize the reviewers’ opinions of the manuscript; who convey to authors the perceived strengths and deficiencies of the manuscript, who decide whether the manuscript is worthy of pages in the journal and readers’ precious time; they are the most important piece of the peer-review process. Who chooses those editors? What are their qualifications? How well do they serve science? Those questions (and more) need to be addressed in any discussion of peer-review (but seldom are).

And the answers to those questions are different for journals and books because for journals the editors are academics while for books the editors are publishers’ staff editors!

Some brief comments and observations:

1. I find it hard to believe that Joe Esposito is ever grumpy.

2. I’m glad SOMEONE mentioned PRE, an idea so brilliant, groundbreaking, and ahead of its time that few in our industry have the courage to adopt it.

3. All kidding aside, there are many great points and comments here. I tend to agree with Rick Anderson the most. The collective “we” keep beating up peer review as the problem, but the real question is “Should the academic reward system change?” Nearly every single problem related to peer review and scholarly publishing shares the same root cause: researchers under pressure to not just publish, but to publish in “important” journals. I don’t think this is a revelation to most in the industry. Academic publishers serve the law of supply and demand (not the Rick Perry version) just like any other business or service provider. As long as there is a demand to publish in peer reviewed journals, especially those with a high impact factor, that’s what publishers will supply. If the academic community changes their demands then, and only then, will publishers adjust the services provided. Yes, there have been and will continue to be new products and services which are “add ons” to the business, but at its core the primary function won’t change until it has to.

There has been a significant increase in the number of academic and scientific published articles over the past years, and the non-availability of reviewers in such circumstances is becoming a serious problem. Journal editors are well aware of the level of difficulty faced while searching for suitable reviewers. The idea is that the editors can save a lot of time if the reviewers are in touch with the individual editors for reviewing the manuscripts.

The total estimated time that the editors spend in finding the reviewers every year is about 20 million hours worldwide.

Reviewing manuscripts is an onerous and time-consuming task. The reviewers should receive adequate compensation for carrying out this task. However, the journal has to remain as an independent entity and only external peer reviewers should carry out reviewing tasks. The perfect solution to differentiate the profiles of both the entities is to create a third-party database, where authors and editors can submit the manuscripts for peer-review and the independent reviewers can be compensated for doing the work.

Peereviewers.com has taken the responsibility to tackle the issues of finding appropriate peer-reviewers in the current academic publishing scenario.

Peer review works when conducted appropriately, and when it involves blind review. There is no excuse whatsoever to allow reviewers to know the authors’ identities. This can clearly produce biased responding on the part of the reviewers. I also think it’s incumbent on editors to not override the decisions of assoc. editors when the reviewers’ recommendations are unanimous or near-unanimous. I’ve been an AE in multiple situations where the reviewers and I clearly recommended acceptance or rejection and were overruled by the editor; bogus…

Indeed, it can be argued that by relaxing peer-review procedures, such as by applying post-publication open review procedures, Open Access journals can speed up the review process and increase the accessibility of knowledge, such as medical case information, as the example of the Cureus Journal of Medical Science demonstrates (http://openscience.com/despite-reservations-open-access-to-case-data-can-dramatically-improve-the-accessibility-of-medical-knowledge/). Though reservations about open peer review formats (http://openscience.com/the-opportunities-and-risks-of-open-peer-review-and-its-adoption-by-journal-publishers/) are understandable, sufficient case study information and research results (http://openscience.com/tentative-research-results-on-open-peer-review-feasibility/) seem to exist about their advantages and drawbacks, to allow for their qualified application, especially in specialized academic or practice fields.