I wrote a post for The Scholarly Kitchen late last year about the business model for open data. The section that generated the most controversy was this:

…investing in data sharing seems to make the journal less, and not more, attractive to authors. For example, the shift in submissions from PLOS ONE to Scientific Reports coincides with the former’s adoption of a more stringent data policy. As long as authors see strong data policies as just another obstacle to publishing their work, journals will struggle to commit editorial resources to enforcing those policies.

The PLOS ONE story is just a single data point, and many other factors must have been involved – for example, the Impact Factor of their chief rival, Scientific Reports, jumped in mid-2014 from 2.9 to 5.0.

So, setting the PLOS ONE example aside, is there any better data out there on how the adoption of a data sharing policy affects journal submissions? Do authors find these policies so off-putting they take their articles elsewhere, or do they not seem to care?

To get a decent answer to this question, we need to set some constraints on the data we collect. First, data sharing policies vary widely in their wording and intent (see e.g., Springer Nature’s list), and we would expect that milder policies would have less of an effect on submissions. Ideally, we’d focus on a range of journals all adopting the same policy, so that differences in response cannot stem from the wording of the policy.

Luckily, twelve journals across ecology and evolutionary biology adopted the Joint Data Archiving Policy (JDAP) between 2011 and 2014. The policy starts:

[Journal] requires, as a condition for publication, that data supporting the results in the paper should be archived in an appropriate public archive

The policy wording does admittedly vary slightly among the journals, but they do all mandate data sharing as a condition of publication.

The expected effects of a data policy would be i) an immediate fall (or rise) in submissions when the policy was brought in, and ii) a steady decline (or rise) in submissions in the following four or more years. I therefore contacted the Chief and Managing Editors at these journals to ask for their submission data for the four years before and four years after the JDAP was brought in. Specifically, I requested counts of only new submissions (i.e., not resubmissions or revisions) for standard research articles, which should eliminate fluctuations arising from changing editorial policies around acceptance rates and article types.

In no particular order, we* have data from the Journal of Ecology, the Journal of Applied Ecology, Functional Ecology, the Journal of Animal Ecology, Methods in Ecology & Evolution, Molecular Ecology, the Journal of Evolutionary Biology, the Journal of Heredity, Evolution, Biotropica, and the American Naturalist. Molecular Biology and Evolution includes the relevant data in its annual editorials.

Standardizing the year each journal adopted the JDAP as year 0 and plotting submissions per year looks like this (to maintain confidentiality the individual journals are not identified):

The first thing to notice is that submissions generally increased: in only 3 of the 12 journals (journals G, I, and L) are there fewer submissions in the year the JDAP was adopted (year 0) than in year -1. In addition, 8 of the 12 journals have more submissions in year 1 than in year -1. There is therefore no suggestion that adopting a strict data policy has an immediate negative effect on submissions. Moreover, the journals that are growing well before year 0 generally continue to grow afterward (journals B, C, D, E, F, and K). Only H and J appear to be growing before the JDAP came in and shrinking thereafter.

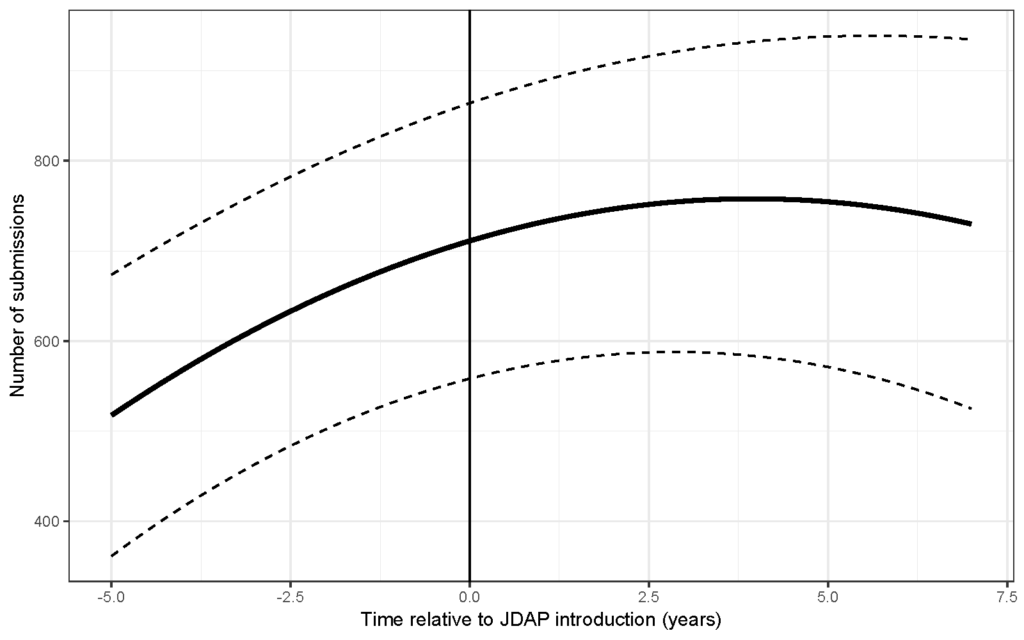
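The year alignment and the simple before-and-after comparisons described above can be sketched in a few lines of Python. This is only an illustration: the journal labels, adoption years, and submission counts are invented, and `align_to_adoption` is a hypothetical helper, not something taken from the actual analysis.

```python
# Hypothetical data: journal -> (adoption year, {calendar year: new submissions}).
# The labels, years, and counts are invented for illustration only.
journals = {
    "X": (2011, {2009: 800, 2010: 850, 2011: 900, 2012: 940}),
    "Y": (2012, {2010: 500, 2011: 520, 2012: 510, 2013: 530}),
    "Z": (2013, {2011: 300, 2012: 320, 2013: 310, 2014: 315}),
}


def align_to_adoption(adoption_year, counts):
    """Re-index calendar years so that the adoption year becomes year 0."""
    return {year - adoption_year: n for year, n in counts.items()}


aligned = {
    name: align_to_adoption(adoption_year, counts)
    for name, (adoption_year, counts) in journals.items()
}

# Journals with fewer submissions in year 0 than in year -1.
fell_in_year_0 = sorted(j for j, s in aligned.items() if s[0] < s[-1])

# Journals with more submissions in year 1 than in year -1.
rose_by_year_1 = sorted(j for j, s in aligned.items() if s[1] > s[-1])

print("Fell in year 0:", fell_in_year_0)
print("Rose by year 1:", rose_by_year_1)
```

Aligning on the adoption year rather than the calendar year is what lets journals that adopted the policy in different years be compared on the same axis.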

We can also do a more formal analysis using a linear mixed effects model for submissions against time relative to the introduction of JDAP, allowing for a curvilinear relationship by including a time² term. We find that the time² term is highly significant, and shows that growth in submissions for the ‘average journal’ in this dataset slows 2-3 years after the JDAP comes in. The graph below shows the predicted trend in submissions for this ‘average journal’ and the corresponding 95% confidence intervals.
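To make the role of the time² term concrete, here is a minimal sketch of a quadratic trend fitted across several journals at once. It is a deliberately simpler stand-in and not the mixed effects model used for the analysis: each journal gets its own fixed intercept (removed by centering within journal) instead of a random effect, and the slopes come from ordinary least squares. The function name `fit_quadratic_within` and all of the numbers are assumptions made up for illustration.

```python
def fit_quadratic_within(data):
    """Fit y = a_j + b1*t + b2*t^2 with a separate intercept a_j per journal.

    `data` maps journal -> list of (t, y) pairs, where t is the year relative
    to policy adoption. The per-journal intercepts are removed by centering
    t, t^2 and y within each journal; b1 and b2 are then obtained by solving
    the 2x2 least-squares normal equations.
    """
    s11 = s12 = s22 = s1y = s2y = 0.0
    for rows in data.values():
        n = len(rows)
        t_mean = sum(t for t, _ in rows) / n
        q_mean = sum(t * t for t, _ in rows) / n
        y_mean = sum(y for _, y in rows) / n
        for t, y in rows:
            dt, dq, dy = t - t_mean, t * t - q_mean, y - y_mean
            s11 += dt * dt
            s12 += dt * dq
            s22 += dq * dq
            s1y += dt * dy
            s2y += dq * dy
    det = s11 * s22 - s12 * s12
    b1 = (s1y * s22 - s2y * s12) / det
    b2 = (s2y * s11 - s1y * s12) / det
    return b1, b2


# Invented, noise-free data: three journals with different baselines but the
# same underlying curve, y = baseline + 40*t - 5*t^2, for years -4 to 4.
baselines = {"X": 400, "Y": 700, "Z": 1000}
data = {
    name: [(t, base + 40 * t - 5 * t * t) for t in range(-4, 5)]
    for name, base in baselines.items()
}

b1, b2 = fit_quadratic_within(data)
print(f"linear term: {b1:.2f}, quadratic term: {b2:.2f}")

# A negative quadratic term means growth slows over time; the fitted curve
# stops rising at t = -b1 / (2 * b2).
print(f"fitted curve peaks at year {-b1 / (2 * b2):.1f}")
```

A negative coefficient on time² is what "growth slows" means in a model of this kind. A proper mixed model would also estimate how much journals differ from one another and would supply the confidence intervals, which this sketch does not attempt.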

Did the adoption of the JDAP cause the slowing of submissions growth? This is impossible to test without submissions data from similar journals that did not bring in a data policy. However, we can identify another potential cause: the rapid growth of ecology and evolution articles published first in PLOS ONE (to 2014) and then in Scientific Reports (2014 onwards).

Together, these journals put out 18,541 evolutionary biology and ecology articles between the start of 2011 and the end of 2015. Although many of these were probably reviewed and rejected by at least one of the 12 JDAP journals (and are thus included in the submissions data above), a significant fraction were likely sent directly to PLOS ONE or Scientific Reports instead.

More broadly, journal submissions are affected by a wide range of factors that are not considered here – Impact Factors being the main one – and we just don’t have the data at hand for a comprehensive analysis. However, we can be confident that adopting a strict data sharing policy had no consistently negative effect, and that journals that were growing continued to grow once the policy came in. The longstanding fear that journal data policies hurt submissions should now be laid to rest.

*I’d like to thank Arianne Albert, Chuck Fox, Mohamed Noor, Wolf Blanckenhorn, Trish Morse, Dan Bolnick, Anjanette Baker, and Emilio Bruna for help with putting this post together.

Discussion

9 Thoughts on "Does Adopting a Strict Data Sharing Policy Affect Submissions?"

Tim, thanks for bringing data and analysis to the table for discussion. There are a lot of factors that influence submission–data archiving policy is just one of them. While you illustrate that 8 of 12 journals that adopted the JDAP policy increased submissions in year 1, there is no control group of journals to compare these changes. Submission rates have been increasing for many journals–the result of new papers from China, India, South Korea and other countries investing heavily in R&D. Before we give credit (or blame) to data archiving policies, ruling out other exogenous effects is required. A control set of journals that did not adopt data archiving policies would help put your trends in context. Alternatively, exploring the effect of different strength policies–from weak voluntary sharing policies to strict archiving policies–would help determine if, and how much, data policies matter to submitting authors.

Hi Phil – thanks for these comments. This is one of those cases where one can either collect a small and relatively controlled dataset (where everyone is bringing in a very similar policy) or a large, messy dataset with a lot of confounding variables.

These are more-or-less all the JDAP journals, and since the goal was to bring the policy in at all the major journals simultaneously there are no natural comparator journals in evolution. There are probably 4-5 in ecology we could use, but given the variability between journals we’d ideally have 15+.

Perhaps with a research grant and cooperation from a number of publishers we could collect a more definitive dataset. I do, however, stand by my conclusion that there’s no indication from these data that bringing in the JDAP had a strong effect on submissions.

While I like the conclusion, it is very difficult to sustain it without comparison journals–even your more cautious closing conclusion. The main issue is that there could be a negative impact of the data sharing policy that is masked by massive growth in the total number of papers being submitted and reviewed at journals. As a cartoon example, if the total number of eco-evo papers in the review pipeline increased 10-fold during this period, then the steady or slightly increasing submission rates at these journals could indicate a sharp decline in their share of submissions because of the data policy. As you suggest, it would be really productive to see similar curves for some near-neighbor journals covering the same disciplinary topics.

Hi Brian, thanks for this comment. As I said to Phil, there are not many obvious comparator journals out there for evolution, and only 5-6 for ecology (the ESA titles and Ecology Letters). The latter journals might tell us something, but, given the wide variability between journals, I doubt we’d have a strong signal of anything. Demonstrating the absence of an effect is inherently difficult, and all you can do is put an upper bound on its strength for the sample you’ve managed to collect.

I don’t agree with you. Your conclusion cannot be applied to the medical field because of restrictive policies (or perhaps laws) in some countries or institutes. How many countries will accept these open data policies in healthcare in the future? I believe there will be strict policies on publishing these data. Otherwise, open data policies could expose countries’ genetic diversity, which could be a nightmare for governments.

It’s interesting to also think about this in terms of field. Ecology is an area of study where there is clear and ample evidence that datasets are reusable and helpful. Further, the culture of the field seems to be one where this is well established and something of the norm. Other fields are less advanced in terms of transparency and openness with their data, so the conclusions here may not be universal for all fields, at least at the present time.

Tim: You give but two options 1. Increase and 2. Decrease. How about 3? No impact! If the adoption of the policy was by 12, how many total journals in the discipline and were their submission rates affected? I think 3 is a very viable option to be considered prior to a conclusion.

Thanks Tim, an interesting example that, at a broader level, shows the continued transition to an open science framework, where making the underlying research data available will be key. It will also be interesting to see more broadly which disciplines are taking the lead here, which subjects and journals have the highest number of open datasets, etc., of course with the usual caveat that some subjects have more restrictions, such as patient data privacy rules.

Thanks so much for this thought-provoking analysis of a question that is at the forefront of many of our minds! I do think this provides grounds for some cautious optimism about journal data sharing policies. It is hard, if not impossible, to provide a like-for-like comparison, and in addition to the other factors that have already been mentioned in the post and in the comments (e.g. rising global submissions, IF, other outlets) I wanted to tease apart a couple more. First, the JDAP mandates data sharing up to a point: exceptions can be granted, and I’m wondering how many submissions came in where the author provided a statement declining to share and the editor agreed (though I’m going on anecdote, this could potentially be significant). I also note that within these journals there are subfields where data sharing is already commonplace, e.g. molecular biology, where data such as genomic data already have well-known ‘homes’ and data deposition is a fairly established part of the research workflow. I won’t hazard guesses as to which chart represents which journal, but I would expect different uptake between Molecular Ecology and American Naturalist, for example. Finally, though data deposition mandates are clearly specified upon submission of the article, I wonder if any data can also be gathered about articles withdrawn before review, e.g. where the author provides a statement declining to share data, the editor rejects it, and the author then withdraws the paper. That would suggest that mandatory data deposition was a turnoff and led (after a step) to decreased submission numbers.