Publishing is an information technology business. If we look back at the history of our industry, a central theme is the application of technology to increase the speed at which scholarly information is disseminated. The operative word here is application. A desire and willingness to invest in technology is not enough; it has to be applied in thoughtful ways that solve specific problems that customers and end-users have.

Publishers struggle to apply technology, even when they can see the benefit. As both a publisher and technology vendor, I’ve found that even when technology is a really good idea, with a clear business case and minimal or no development needed, the bandwidth to integrate it into an organization can be frustratingly elusive. It’s not for want of willingness to invest; many publishers spend an awful lot of money on platforms of various sorts. Instead, the difficulty lies in creating the processes around the technology that are needed to make the best use of it.

The problem isn’t technology, it’s process.

My second job in scholarly publishing was running an editorial department. The company was quite young at the time and a big part of my job was to make sure the department was as efficient as it could be. One of the first things I did was to figure out exactly how we were handling manuscripts. I had to understand and visualize the flow of documents through the system. So I did what most people would do; I asked everybody what they did and drew a flow chart.

I didn’t realize it at the time, but I was employing value stream mapping, a technique from lean management principles that was made most famous in manufacturing by Toyota. The basic principle is that in any process, you can only go as fast as the slowest workstation, so if you want to go faster, you need to find the bottleneck and figure out how to leverage it better.
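To make the bottleneck principle concrete, here is a minimal sketch in Python. It assumes a simple serial workflow in which each stage has a fixed weekly capacity; the stage names and numbers are hypothetical and purely illustrative, not figures from any real editorial department.

```python
from dataclasses import dataclass


@dataclass
class Workstation:
    name: str
    capacity_per_week: float  # manuscripts handled per week (hypothetical figure)


def find_bottleneck(stages):
    """Return the stage with the lowest capacity; it caps the whole line."""
    return min(stages, key=lambda s: s.capacity_per_week)


def line_throughput(stages):
    """In a serial process, throughput equals the capacity of the slowest stage."""
    return find_bottleneck(stages).capacity_per_week


# Hypothetical editorial workflow; names and numbers are illustrative only.
workflow = [
    Workstation("submission check", 120),
    Workstation("peer review", 40),
    Workstation("copyediting", 60),
    Workstation("typesetting", 90),
]

bottleneck = find_bottleneck(workflow)
print(f"Bottleneck: {bottleneck.name} ({bottleneck.capacity_per_week}/week)")
```

Under these assumptions, doubling the capacity of any stage other than the bottleneck leaves `line_throughput` unchanged, which is why mapping the whole flow comes before investing in any single step.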

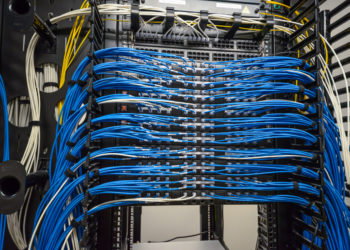

Publishing obviously contains workflows as manuscripts move through submission, editorial, production, and dissemination activities. What’s less well understood, not only in publishing and libraries but in many sectors, is that IT functions are also production lines. IT production lines are harder to see: the workstations aren’t laid out one after the other as they are in a car factory, and there isn’t necessarily a document that you can trace through the system as it gets transformed. But a process is definitely there.

Lack of visibility of the workflow harms technology delivery. It’s too easy to see technical operations as a number of functional units that each perform a task, like vendor management or server provisioning.

In reality, whenever a company wants to do something new, whether it’s a small business change or a new product line entirely, there is a process. These processes start with the original idea and move through a series of product or project management phases and solution design. Sometimes development is involved and sometimes vendors are used, but ultimately a solution is deployed, usually involving IT operations staff.

Challenges occur when the work that’s being done at one stage in the process is either not sufficiently complete and accurate, or not communicated in a way that the next person or group in the value chain can understand. In some cases, individuals may not even know who the next person in the value stream actually is, because no one has ever mapped it. Even worse situations can occur when necessary workstations are missing entirely. In those cases, everybody wonders why projects repeatedly grind to a halt. Problems can go unnoticed or be passed on unintentionally at one point in the process, only to cause much bigger issues downstream.

So what can be done about this?

In 2009, at the O’Reilly Velocity conference, the head of operations and head of engineering at a growing photo sharing site gave a joint presentation called “10+ Deploys Per Day: Dev and Ops Cooperation at Flickr”. From that presentation, the term “DevOps” was spawned. In reality, it was the application of Lean methodology to the software development value stream all the way through to delivery. It was a breakthrough in technical operations, and almost 70% of small to medium-sized businesses in the US now apply it to some degree.

While DevOps is a huge step in the right direction, it’s limited in that it assumes that the process begins once the business decides what it wants to do with IT. This can leave organizations in a difficult position where traditional, waterfall-style project management techniques are being employed right up to the point where a hand-off happens to IT. This can lead to product managers (upstream of IT) struggling to understand what developers or vendors need to know to be successful, and IT teams looking at an ever-growing to-do list and somehow having the de facto responsibility of prioritizing, despite not being best placed to make prioritization decisions. In turn, this leads to management and organizational frustration while everybody wonders what all those IT people are doing all day.

The solution lies in understanding the value stream all the way from ideation to delivery and managing the work through an end-to-end governance mechanism. There are multiple ways to achieve this. For some smaller organizations that are focused on new and innovative products, Agile project management and techniques like flash builds immerse product managers in the build process, where they act as a direct proxy for customers and guide development at every stage. For larger organizations, portfolio management offers a way to help business stakeholders better interface with IT, build shared understandings of business goals, and guide prioritization.

These approaches sometimes involve a shift in mindset that doesn’t come easily and a willingness to expand people’s comfort zones. Having said that, as technology becomes ever more important to publishers, and the pace of change continues to accelerate, publishers have to find ways of better integrating technology operations into their overall business strategy.

Discussion

11 Thoughts on "Why Is It So Hard to Solve Problems with Technology?"

I certainly agree with your assessment that publishing must find better ways of integrating technology. In my consulting business I often find that a publisher is spending significant resources on platforms but has not developed an automated way of feeding the platform’s access control system. It is not unusual to find publishers still utilizing a batch process that is time consuming and usually out of date. Another example is keeping the fulfillment system up to date. Publishers will often buy a new system to handle fulfillment but have no strategy for keeping the system up to date. Every system that controls any publishing activity has to be kept up to date with new releases. New features, bug corrections, and general improvements are in the new releases. Failure to keep releases up to date is a common condition that I have experienced far too often.

Thanks Dan. I think you’re right. Your example of a lack of automation in an access control system is a good example of a process that hasn’t been made visible enough, with perhaps a lack of documentation or analysis of the end-to-end process. The temptation is to spin up another project to deal with what looks like the immediate problem but that can sometimes make it worse by creating more complexity and overhead.

Some people use workflow sprints in these sorts of situations, which help the team develop a shared understanding of what’s actually happening and create more efficient workflows. With a much clearer understanding of the end-to-end, it’s possible to find the easiest piece of automation that will have the greatest benefit.

Keeping complex systems up to date is tough. People hold off because updates can sometimes break functionality, especially if there are heritage systems involved. There are ways of making sure that backend systems can be changed and updated safely, but that’s a whole other kettle of fish.

The technology of communication is important – if only email threads are used to keep track of changes in processes, inevitably some critical decision will not be retained in a usable way. One key thing to tie the production and developer teams together is a robust ticketing system that permanently retains such discussions and decisions as occur in the interactions of these groups with different mindsets.

Good post. I’ll add two more thoughts.

1. In addition to the nature of development work being invisible, it can also be irreducibly complicated. The difference between what is easy and what is impossible is not always apparent, and this can lead to frustration. What can help here is supporting good translations between technology and business.

2. Publishing organisations often have unconnected systems with a significant number of human-powered integrations. This often leads to metadata inconsistencies and can make it difficult to reason about the topology of the system.

Thanks Ian. Those are both excellent observations.

Your point about complexity is well taken. This is particularly true of heritage systems that are sometimes heavily customized and/or tightly integrated into other systems. The architecture can be so complicated it’s hard for anybody to understand the consequences of any given change. There are ways to pay that technical debt down over time, but doing so can sometimes be a hard sell.

Need more be said:

The most unfortunate obstacles to technological progress are information users, i.e. the readers, writers, and their close advisors. PDF, for instance, has been obsolete for decades. E-formats correctly fill whatever screen you are using, adjusting type size and page orientation while offering an effective “find” function. They free your finger-in-the-back-of-the-book as hyperlinks take you from text to note and back with a click or two. You can annotate your copy (without guilt), search for a word or phrase, and pull up a dictionary definition with ease. And if reference markers, such as folios, are desired, HTML allows bookmarks and hyperlinks.

Yet learned journals, government reports, etc. insist on basing their digital renditions on the codex format, which is more obsolete than PDF. I suspect the transition from scrolls to bound books more than 500 years ago was easier.

Hi Phil and thank you for this! I’ve also dabbled on both sides of publishing and technology, and another “P” that I’ve come across in Process is for People.

People shift focus during the time it takes to discuss, frame, test, evaluate, and decide on what problems to solve with what technology, all while the technology, needs, and problems evolve. Depending on the complexities of the targeted problem, it requires a mix of skill, persistence, and serendipity to get to the bottom of which problem is worth solving, how it should be solved, with just the right stakeholders before any of them leave, at just the right time while it’s still worth solving in the proposed fashion, for the right budget, before stakeholder views and strategies change, and in competition with a moving landscape wherein emerging solutions and needs influence how we think about technology to solve problems.

I agree with your process focus, and propose to build on your answer for “Why Is It So Hard to Solve Problems with Technology?” Because we the people are involved, and we can be a pretty dynamic x-factor when it comes to agreeing on problems and technology. Some decisions aren’t as logical as they’re circumstantial.

Thanks Neil,

You’re right that these problems, like all organisational and operational problems, are rooted in human nature.

The problem of shifting priorities is a big part of the challenge and often stems from competing perceptions of what is the most important thing right now for the organization. That’s why governance around prioritization that involves all stakeholders is so important.

Thanks Phil, good post. I’ve often said the biggest barrier to major change is not what the technology can do, but the organizational and industry-wide culture needed to create lasting change. I would also point out that, in my broad observations, outside the usual big players, the pure born OA publishers like eLife, Hindawi, PLOS and Frontiers are doing some of the most innovative things in publishing together with authors. I suspect this is down to the culture within those organizations and the vision of leadership there. Of course, it’s easier to change culture from the top down than from the bottom up, and perhaps these relatively newer OA publishers don’t have as many legacy systems. My two cents’ worth, good food for thought.

Hi Adrian,

It’s certainly easier for new companies with greenfield products to implement good product management from the outset than it is for mature companies with heritage systems and culture to transform. It’s not impossible, though. Companies as diverse as Amazon, Sky, Domino’s Pizza, and Nintendo (they started out making playing cards) have all implemented digital strategies in which they focused just as much on how to do things as what to do.

Technology also moves fast, which means that we have to be prepared to evolve the ways we use technology just as quickly. Adding to that, the approaches that worked when a company had a dozen employees may or may not scale to 50 or 100 people.

PLOS is a good example of a company that was founded less than 20 years ago and recently had to take the bold and necessary step of writing down a large project, which may be part of a rethink of how they deliver new technology value. I’m looking forward to seeing how PLOS continue to take that forward.

I also agree that what eLife and Hindawi are doing, especially around their use of open source, is really interesting.