Author’s note: To mark the end of Open Access Week 2021, we offer this reprint of an essay I originally wrote in 2008 and which was published first, in abridged form, in the Scientist and later in The Journal of Electronic Publishing; it later appeared on The Scholarly Kitchen. It has not been updated. I actually got paid by the Scientist to write this, making me a professional author, though a poor one. I remain an unpaid contributor to the Scholarly Kitchen, the more fool I.

Were I to update this piece, I would, of course, make a number of changes. First, obviously, I would update the examples. Second, I would change the title, which reflects the meme at the time to label everything as “2.0,” which now sounds silly. A more accurate title today would be “Placing OA publishing into the context of the overall publishing ecosystem.” Having said this, I note that much of what the essay commented on has indeed come to pass. People talk of an “infodemic,” but fail to see that OA publishing can, in some instances, be the lethal virus. But as a pragmatist — I don’t seek to save the world; I seek to profit from it — and the question I would put to readers is how best to navigate the emerging ecosystem for the benefit, economically expressed, of the organizations for which they are responsible. It is important to bear in mind that only what is sustainable will survive. The rest is silence.

Open Access 2.0: Access to Scholarly Publications Moves to a New Phase

What information consumes is rather obvious: it consumes the attention of its recipients. Hence, a wealth of information creates a poverty of attention and a need to allocate that attention efficiently among the overabundance of information sources that might consume it. —Herbert Simon

What publishing does well — traditional publishing, that is, where you pay for what you read, whether in print or online — is command attention. This is not a trivial matter in a world that seemingly generates more and more information effortlessly, but still has the poor reader stuck with something close to the Biblical lifespan of three score and ten and a clock that stubbornly insists that a day is 24 hours and no more. Attention is the scarce commodity; any service that makes those 24 hours more productive is welcome. A service that winnows through the huge outpouring of information and says (with authority), Pay attention to this; pay less attention to that; and as for that other thing, ignore it entirely—such a service is well worth paying for. The name of that service is publishing.

A dollar spent for publications is a measure of how a reader allocates his or her attention. That dollar could have been spent otherwise — on a different publication, on a Starbucks coffee, on a ticket to a ball game—and it matters little whether that dollar is managed by the reader or by someone, perhaps a librarian, acting on the reader’s behalf. Publishers orient their enterprises to get at that dollar, and they do this by tossing out most of what comes into their purview. Although it may sound paradoxical to assert that charging a fee is an act of mercy, publishing, in its enormous respect for readers’ time and attention, is reader-friendly. It is also author-hostile, at least for those authors who do not make the grade. It is no wonder that Goethe mused that “Publishers are all cohorts of the devil; there must be a special hell for them somewhere.” He was an author.

Few people outside the publishing industry share this benign view of publishers. A common view, widely held by advocates of open access, is that since the barriers to producing information have been flattened in the age of the Internet (no paper to purchase, no printing presses to rent, no books and journals to ship from here to there and often back again), formerly scarce information is now easy to produce, easy to disseminate, and thus should be free. It is true that it takes little to put something on the Web these days: an Internet connection and a repository somewhere. But to find the time to review all those easily posted items is another matter. Indeed, the lower the barriers to production, the greater the need for filtering: publishing becomes more and more important precisely at the time when many are wondering if it is essential at all. The distinction between traditional publishing and open access can thus be stated neatly: publishing is a service for readers, open access a service for authors. They both work best when the beneficiaries of the services pay for the benefits they receive.

It would be imprudent, however, for publishers to sit back and glory in this brave new world, which, as the amount of information continues to explode, every day makes their work more and more valuable to readers. The authors whose work does not find its way onto the publishers’ lists, or those whose publishing efforts place them at a disadvantage because their work appears in a little-subscribed-to journal, are not likely to drop their manuscripts into a drawer. More likely they will publish the work themselves, sans editors and copy-editors, marketing programs, sales incentives, and professional production values. They will post their work to a Web site, an institutional repository, or some other service that provides Web dissemination; perhaps they will simply write up their work on a blog. The author who cannot find a publisher will become a self-publisher; some will become so disheartened by the formal publishing process that they won’t seek formal publication at all. All they need is a hard drive in the cloud.

It is hardly news that a great number of people oppose the publisher’s gatekeeping function, and many advocates of open access assert that gatekeepers are a thing of the past. Indeed, many continue to argue one side or the other of a binary choice: All research publishing should be open access or, Only traditional publishing can maintain peer review and editorial integrity. But increasingly we are seeing various hybrid models emerge and new, often complex, business arrangements as the debate over open access to research literature appears to be moving on to a new phase. Partly this is an outcome of the inability of many open-access ventures to come up with economically sustainable models [1], partly this is a product of shrewd thinking on the part of traditional publishers, whether in the for-profit or not-for-profit spheres, who are identifying new ways to hold onto revenues and, in some instances, even to augment them. We are entering a pluralistic phase, where open access and traditional publishing coexist, though they increasingly are finding their own distinctive places in the research universe and are less likely to compete head on.

If you want to understand what is going on in scholarly communications today, a good place to start is with Walt Disney. After completing the hugely successful Disneyland, Disney famously resented all the clever businesspeople who profited from his venture by opening up hotels, gift shops, and restaurants around the perimeter of the theme park. In response, Disney went into real estate, buying up much of the Orlando, Florida area, now home to Disneyworld. The hotels and restaurants sit on the property of the Disney company, paying a toll for the privilege.

Traditional publishers take the view that they invested in the creation of scholarly literature and thus should, like Disney, be able to extract a toll for each instance of any work that is derived from their original investment. This now takes many forms. Increasingly common are so-called “author’s choice” programs (for example, Springer’s Open Choice and Oxford University Press’s Oxford Open) in which an author is permitted to pay a fee to make his or her articles completely open access. A variant of this is the policy of the American Chemical Society, which now, for the first time, permits authors to place copies of their work in open-access institutional repositories, but at the cost of $1,000 per article for ACS members, more for non-members. I was surprised while working on a project last autumn for a client, a new search-engine company, that at least one publisher expected to be paid for the right to index articles—not the right to display articles, but simply the right to index them. I imagine Walt Disney saying, “Damn! I wish I had thought of that!”

Perhaps the most intriguing recent development in this regard is the announcement by Reed Elsevier that it would develop an advertising-supported online portal for oncologists. Reed Elsevier will make its articles on oncology available on this portal at the time of first publication. Subscriptions to the underlying journals (paper and electronic) would continue to be sold to academic libraries, but for users of the portal the content would be free of charge. It is not yet clear how “open” this open-access initiative will be, as users must register and be qualified before gaining access (a version of the controlled-circulation model common to trade magazine publishing), but at a minimum Reed Elsevier is opening up the doors a little, even if just to build a small guest house on the property. Thus we now have the same content being monetized through subscriptions to libraries and through the development, packaging, and sale of audiences to advertisers in an open-access or almost-open-access form. Each market segment thus attracts its own business model.

This attempt by traditional publishers to work with or around open-access publishing, and in some cases to co-opt it, is not the only set of choices in making scholarly articles available today. There are emerging developments in “pure” open-access publishing as well, but before we review them, let’s take a look at what open access can and cannot do.

Advocates of open access assert that open access will increase the dissemination of research materials, which is the first aim of many researchers, as only a small number receive direct financial compensation for their publishing activity; their only “pay” is recognition by their peers. It is unlikely that open access could increase dissemination significantly, however. This is because most researchers are already affiliated with academic, governmental, or corporate institutions that have access to most of the distinguished literature in the field. Open access, in other words, only adds to dissemination at the perimeter of the research community, not at its core [2]. To say “most” of the literature, of course, is not the same thing as to say “all.” Some researchers, for example, are independent or employed by impecunious institutions or reside in developing nations; and some articles appear in publications whose circulation is far from robust. Thus, though there may be some exceptional situations, especially in the short term, the increased dissemination brought about by open access is, literally, marginal. From the point of view of traditional publishers (echoing Voltaire), open-access advocates make the perfect the enemy of the good.

Open access is not likely to significantly increase dissemination for another important reason as well, and that is because attention, not scholarly content, is the scarce commodity. You can build it, but they may not come. It is one thing to write an article and upload it to a Web server somewhere in the Internet cloud, where it will be indexed by Google and its ilk; it is fully another thing for someone to find that article out of the millions (and growing) on the Internet by happening upon just the right combination of keywords to type into a search bar [3]. Open access advocates would do well to consider what put those keywords into a researcher’s mind in the first place; very often the answer is the sum of all the marketing efforts of a traditional publisher, including the association with a journal’s highly regarded brand. This does not mean that open access is useless or adds no value when it comes to dissemination; what it does mean is that open access is most meaningful within a small community whose members know each other and formally and informally exchange the literal terms of discourse.

There are exceptions to this, however. An entirely new journal, for example, is likely to get a larger readership in an open-access format unless an established publisher gets behind it in the traditional, proprietary way and makes a major marketing push. But without that push, the one-click pass-along capability of the Internet, building on the growing social networking sites, can be highly effective in special circumstances. What is important to bear in mind is that exceptional situations are by definition exceptional.

What the authors of research material seek from their publishers is the audience—and, transitively, the prestige and certification that derives from that audience—that publishing companies, for-profit and not-for-profit alike, are set up to deliver. But what of the work for which there is little or no audience? What if there is simply an insufficient market to support the traditional publishing model? This is the ideal province of open-access publishing: providing services to authors whose work is so highly specialized as to make it impossible to command the attention of a wide (at least in academic terms) readership. Work can be specialized for any number of reasons. The author may be working in a tiny field; the work in question may effectively be addenda to previously and formally published work; or the author is probing a new area, where a community of fellow researchers has not yet emerged. Terming some information “highly specialized” doesn’t mean that it is not important or of poor quality; it simply means that the material is of interest to a very small number of readers. Nor would we ever assert the opposite, that something is of high quality just because it is enormously popular.

It is useful to think of the domain of open-access publishing as existing at the very tiny center of scholarly communications, the innermost spiral of the shell of a nautilus, where a particular researcher wishes to communicate with a handful of intimates and researchers working in precisely the same area. Many of the trappings of formal publishing are of little interest to this group. Peer review? But these are the peers; they can make their own judgments. Copy-editing? I doubt it, as this inner group already knows one another and can fill in the blanks and make mental corrections for errors in a hastily drafted document. Nor does this group require the sales and marketing of a large publisher, as the group is in regular communication anyway, without the mediation of a sales force or acquisitions librarian.

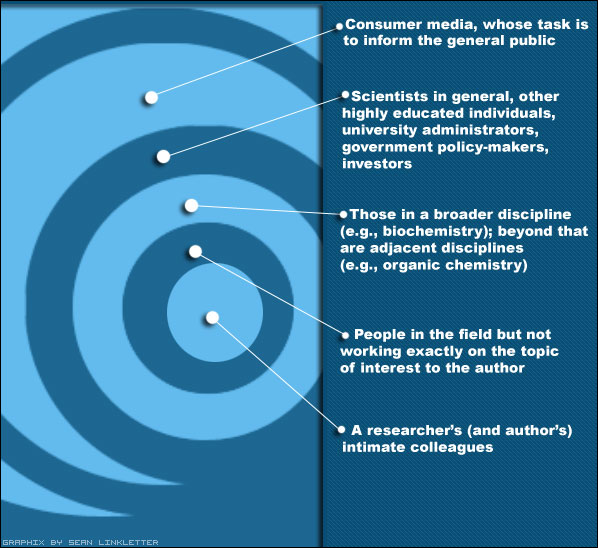

As one moves beyond the inner group of researchers, however, other readers may be interested in the work, but they may need some guidance in evaluating the material. For these readers, formal publication validates a work and asserts that it is worth paying attention to. So we can imagine all of scholarly communications as a nautilus’s spiral: the inner spiral is the researcher’s (and author’s) intimate colleagues; the next spiral is for people in the field but not working exactly on the topic of interest to the author; one more spiral and we have the broader discipline (e.g., biochemistry); beyond that are adjacent disciplines (e.g., organic chemistry); until we move to scientists in general, highly educated laypersons, university administrators, government policymakers, investors, and ultimately to the outer spirals, where we have consumer media, whose task it is to inform the general public.

Something may be lost in the translation as research data moves outward from the core research colleagues to the disciplines beyond that. (I fear that the outermost spiral may be represented by Fox News, which would report new scientific evidence in support of Bishop Usher’s date for the creation of the world in 4004 B.C.E.) Without the “translators,” however, which are comprised of the editorial review systems of traditional publishing, the loss would be greater, as many readers would not be able to determine the relative value of different publications. “Word-of-mouth” marketing is the best of all, of course, but the question is how you come into contact with that “mouth” and whether you trust it. At each step away from the center, the role of the publisher grows and the merits of open access diminish. Researchers not familiar with the author will seek a way to evaluate his or her work, and a publisher’s brand is a form of insurance. Formal publishing, in other words, assists an author not in speaking with a tiny group of peers but to a broader audience beyond them.

Whatever the virtues of traditional publishing, authors may choose to work in an open-access environment for any number of reasons. For one, they simply may want to share information with fellow researchers, and posting an article on the Internet is a relatively easy way to do that, especially when they supplement that posting with personal e-mail or other communications to tell people that the article exists and comments are welcome. Some authors may choose open access as a condition of a funding grant. For other authors an open-access copy may be a way to provide a backup to the original on the author’s personal workstation. Or let’s imagine that an article has been rejected by one or more publishers: the author still may want to get the material “out there,” and an open-access version fills the bill. We should not forget authors who may be frustrated by the process and scheduling of traditional publishers [4]. Of course, there is also a growing number of authors who have philosophical reservations about working with large organizations, especially those in the for-profit sector, and deep and growing suspicions about the whole concept of intellectual property. The fact is that a reason to publish in an open-access format need not be a very strong one, as the barriers to such publication are very low indeed [5]. It takes little: an Internet connection, a Web server somewhere in the cloud, and an address for others to find the material. Thus one way to think of open-access publishing is simply as an emergent property of the current state of Web infrastructure.

One of the reasons that many open-access ventures have had a hard time financially is that they have been built on the mistaken assumption that they are replacing traditional publishing and thus have to re-create all of the services that traditional publishers now provide. Thus, BioMed Central has set up a series of open-access journals, replete with editors, review boards, and a peer review system. This is also true of many of the publications of the not-for-profit Public Library of Science, whose outstanding editorial program carries a high overhead [6]. Obviously, a high cost structure demands sizeable revenue streams to offset it. Where does that money come from? It comes from funding agencies, sponsoring institutions, and the authors themselves. If, on the other hand, one views the province of open-access publishing as the small, specialized communities of researchers whose aim is simply to share information with people working in the same narrowly focused area, all this overhead can be tossed out. What’s needed is good software, not a stable of editors.

At its most basic level, an open-access service need not be much more than a hard drive in the cloud, a place where content can be stored and others can access it. But a highly automated service can include far more features, and many existing services already do. We can imagine a generic service where an author uploads a document; the document is stored in the cloud, but only the author can access it for changes or removal. The author has an account with the service; logging onto it, the author may choose to send e-mail to selected individuals about the document and grant access to it. It is the author, not a publisher, who controls access. This access can be extended to an academic department or to the members of a professional society; access can be granted to any authenticated directory of users. At some point the author may remove all access restrictions, fully opening access. We may debate whether any of these steps, including the final one, constitutes “publication,” but it is indisputable that access can be augmented and that the marginal cost of doing so approaches zero.

Such an open-access service, seeing itself in competition with other services, will likely add to its offerings. The service that begins by supplying simple storage evolves into an access system that lets the author control authentication, and may eventually become what we mostly see in the institutional repository arena now, a means to display open-access content without user restrictions. The next step is to set up alert services; these already exist for Medline and Google Scholar and some university repositories (“Send me a link for all new papers posted by Mary Jones”); search capability for entire collections is an obvious follow-on. Then comes the capability to place comments on the papers, and the opportunity for the author to respond. (At this stage we begin to see the social networking capabilities of Web 2.0 technology begin to tiptoe into the realm of peer review, though it is “post-publication” or “post-posting peer review.”) Perhaps one copy of the document is preserved as an uneditable original, where it is displayed side by side with another copy on a wiki platform, providing the means to update or correct the paper. Documents may also be rendered into special file types to facilitate new machine processes (e.g., text mining); they will likely trigger automated searches (“Find other documents like this one”); and they will automatically be linked into other information services such as a library’s long-term preservation program and be assigned specialized metadata, such as an algorithmically generated Library of Congress classification. Whatever computers can do, will be done; this is inevitable: the only question is the order of the appearance of features and the timeline for implementation [7].

From a cost point of view, there is a considerable advantage of a highly automated service over many of the staff-heavy open-access services that are currently vying for researchers’ attention. A robust software platform requires a modest initial investment [8], but once that platform is in place, the incremental cost of operating the service can be very small. Traditional publishing, on the other hand, has high ongoing costs for staff, marketing, etc., but traditional publishing is set up to deliver a different kind of service, one that refines editorial material and creates a market. The ongoing costs for an open-access service can be small because of the shared assumptions of the community members resident at the innermost spiral of the nautilus shell, which diminish the need for authoritative editorial supervision. Open-access organizations thus are best suited to serve a new market or a small one (or more likely, a large collection of very small ones), not the established markets of traditional publishers, and they can do this at modest expense.

Unfortunately, some open-access initiatives, even where they do not attempt to replicate the editorial review system of traditional publishing, have not grasped the fundamental point that the aim of automation is to get people out of the picture. I recall one conversation I had with a university librarian who is in charge of her institution’s open-access repository. She proudly told me how she had worked with an author, assisting him in placing his work into the service, and asserted that such services would in time supplant traditional publishing. She failed to do the math. There are 24,000 peer-reviewed journals, a number that continues to grow; the total number of articles published is in the hundreds of thousands, perhaps millions; and this doesn’t even begin to estimate the explosion of new material when the barriers of editorial review—and rejection—are removed from the mix. A “high touch” approach would be hugely expensive, taking a very big chunk out of GDP; in comparison the high prices of commercial STM publishers may seem like a real bargain. The cost of “free” is simply too expensive unless we strip away almost all the administrative costs. This is why libraries are very poor places to establish open-access services: libraries provide outstanding high-touch service and are culturally out of synch with the need for literally impersonal technical services. A successful open-access organization has to be operated with the ruthlessness of a Henry Ford, not the warm, helpful manner of a librarian.

Let’s return to the nautilus spiral model. At the center we have researchers, who write material that is intended to be read by other researchers working on similar problems; at the outermost ring we have consumer media and other publications where there is a very big gap in the expertise of authors and readers. The center is the province of open-access publishing, where peers talk to peers (often literally using peer-to-peer computer networks), and at the perimeter is the world of consumer and other non-expert media, where the publication process adds a great deal of editorial value [9]. Between the two extremes of innermost and outermost spirals is a range of hybrid options, with publishers (a la Disney) trying to reach inward, monetizing more and more material, and with newly evolving software pressing outward from the center, attempting to diminish the requirements of editorial oversight through Web 2.0 tools and emerging machine processes (e.g., data mining). With every day the software gets better and the traditional publishers get craftier. This is the essential conflict now emerging in the business of scholarly communications.

Regardless of what point on the spiral you look at, there has to be a sustainable economic model. For documents at the open-access center, the market is essentially the authors themselves or the institutions that sponsor them; the service lives in the “author-pays” or “producer-pays” world. Formal documents, documents for which it is believed that there is a traditional market, live in the “user-pays” realm. There is absolutely nothing wrong in identifying a market for authors who wish to post material as distinct from readers who wish to review it. What is necessary is to know who your customer is, and the customer is the individual or the individual’s proxy that pays for the service. There is a paradox here: for open-access activity to be economically sustainable, the customer to be satisfied is the author, not the readers who receive the content at no cost to themselves.

We can compare an open-access service to the campus print shop of yesteryear. Let’s say the department of philosophy is having a conference and wants to print copies of all the papers that are to be presented. The collection of 50 papers is brought to the print shop (or to a commercial vendor near the campus), which promptly prints the collection as one big volume and invoices the philosophy department. This is a form of author-pays publishing, where the department pays on behalf of the authors. If the papers are not to be printed, then the department needs to contract with someone who could mount the papers on a Web server—that is, a print shop in the cloud. Whether the materials ultimately appear in print or on a Web server or both, the business model for services is basically the same irrespective of the specific kind of publishing service (that is, warehousing, printing, Web hosting, or anything else). Institutional repositories or cloud repositories, in this formulation, are something like latter-day print shops, providing essential services to their customers, who then pay for them.

Services businesses that spring up (whether for-profit or not-for-profit) to provide open-access venues for authors are going to have to grapple with the harsh reality that to make something free for the reader, it cannot be free for the author. Many of the current open-access services have not yet figured out their economics. Academic libraries, for example, in providing open-access repository services for faculty, often fail to charge either the individual researcher or the researcher’s department for the service, unlike the print shop in our example above. Instead, the service is provided free of charge by the library, though obviously the cost becomes part of the library’s (growing) overhead. Such services fall into the trap of thinking they can operate without capital. But capital is needed to invest in the kind of software that open-access ventures will surely require. In the absence of an established publisher with a robust marketing program, software will be the primary vehicle to achieve the author’s goal of dissemination and recognition.

Other open-access services simply do not have scalable models. They properly charge authors a fee, but the fee is incurred only after the peer review process is complete and the paper is accepted for publication. Thus the greater the number of submissions (a measure of the perceived quality of a journal), the more money these services are likely to lose in supporting peer review, unless they keep increasing the number of articles they publish, thereby lowering the average quality and contributing even further to information overload.

For an open-access service to get its financial house in order, it is going to have to engage in some of the blocking-and-tackling business analysis of any other enterprise. It will have to estimate capital requirements for building a service, and the ongoing cost of maintaining the platform and adding additional features. It will have to design the system such that administrative costs, including customer service, approximate zero. It will have to establish a marketing budget to attract paying customers (that is, authors) to the service. It will have to keep a paranoid eye on the competition and the potential competition. When it comes to pricing, there will have to be built into each sale sufficient margin to offset some part of the fixed overhead and the amortized development costs: a service with fixed and amortized annual costs of $1 million cannot break even with a gross profit of $1 per customer unless it expects to have more than one million customers. When open-access services move from the good and generous intentions of some of the movement’s founders to that of hardheaded business analysis, developing a service for an identifiable market segment (that is, authors at the center of the nautilus spiral), they will begin to grow in force and stature in scholarly communications.

How large is a “modest” budget to build a robust open-access service, one that is truly competitive in the marketplace?

A start-up engineering and product-development team of around 12 is a common formulation. This team would take 6-12 months to build a service and data center, with the aims of the research community in mind. Turning the service into a product would include many of the things we now associate with Web 2.0 businesses: highly interactive sites, with the capability of allowing postings, comments, alerts, etc.—a Facebook for the research community. A back-of-the-envelope estimate is that the average salary is $100,000, yielding an annual payroll of $1.2 million. Double that figure to include the cost of rent, hardware, bandwidth, etc. So you could bring the service to market in about one year for perhaps $2.5 million.

While the service would be as highly automated as possible, it would still be necessary to attract users to it, which requires marketing. Most of the current generation of cloud-computing Web start-ups experience a doubling of staff soon after launch. That brings staffing to 24, payroll to $2.4 million, and a fully loaded second-year expense structure of around $5 million. The first $2.4 million can be regarded as one-time or “sunk” costs; the $5 million a year constitutes ongoing overhead (fixed costs). There are no appreciable variable costs in this kind of Web-based business.

If authors would pay $50 to deposit articles, 100,000 articles in a year would bring the service to cash-flow breakeven. To put this into perspective, the arXiv service, funded by Cornell University, receives around 50,000 articles a year (though at no cost to the authors). Of course, the service would have to compete with other services, including the many that have cropped up in university libraries. To compete, the new enterprise must offer better services. And here the new organization has an advantage over the current open-access repositories in that it is market-based and is thus set up to service its customers. For the new service the customers are authors, whose every whim will be satisfied with new features, until the cost of depositing articles appears to be negligible. Yes, there is a paradox here: although open access is free to readers, its real beneficiaries are the authors, who use the service to communicate with peers. BioMed Central and Public Library of Science get this right, but their high-cost editorial model would be difficult to replicate across the entire range of research publications.

The revenue streams for the new organization go beyond posting fees, however. For example, professional societies may wish to have their own brand on an open-access repository. Perhaps AAAS, for example, fearing that Nature’s new “Precedings” product will undermine its flagship publication, will license the software service; this is known in the industry as a “white-label” deal. Other organizations (e.g., the research units of corporations) may want to use the software but balk at making its proprietary research public and thus may opt for a license for a gated community. Over time, we will see the evolution of premium services in which other computer processes (e.g., data mining) are made available for an additional fee.

The fundamental tension in scholarly communications today is between the innermost spiral of the nautilus, where peers (narrowly defined) communicate directly with peers, and the outer spirals, which have historically been well served by traditional means. Advocates of open-access publishing sit at the center and attempt to take their model beyond the peers. I suspect that this will be difficult to do, but a highly automated service funded by authors’ posting fees would indeed put pressure on some outer spirals. At the outer spirals sit the traditional publishers, who are attempting (with increasing success) to extend their reach into the inner spirals, preempting and co-opting open-access initiatives wherever they can. What remains unknown is at just what middle ring the two models will meet.

(Author’s Note: Many of the ideas in this essay originated in a project I worked on for the University of California Press. I would like to thank the Press Director, Lynne Withey, for permission to develop these ideas into an article. An earlier, abbreviated version of the essay appeared in The Scientist (November 2007). The drawing of the spiral (nautilus publishing model) was created by 16-year-old Sean Linkletter, to whom I owe a pizza.)

Notes

- The number of open access projects continues to grow, making it difficult and perhaps unwise to generalize about them. Many of the higher-profile projects appear to be having trouble with their business models, but some smaller and narrowly focused efforts seem to be doing nicely, relying on a number of forms of support, from volunteer labor to grants to university subsidies to author fees. What we have not yet seen, however, is transformative open access publishing, where the system of scholarly communications moves to a new (open access) model.

- There is a great deal of literature that takes the opposite view; see, for example,http://opcit.eprints.org/oacitation-biblio.html. For another perspective, see the forthcoming Philip M. Davis et al, “Open access publishing, article downloads, and citations: randomised controlled trial,” British Medical Journal (2008, https://doi.org/10.1136/bmj.a568). The authors note that research requires infrastructure and that the elite research institutions are for this reason where most research is conducted and that any increase in readership for open access publications comes from outside the core author community.

- Several readers of drafts of this essay have noted that there are other forms of discovery than keyword-based Internet search. This is true, and the number of such methods is growing. It appears, however, that the total share of discovery through search engines, and Google in particular, is growing faster than the number of means of discovery, with Google beginning to evolve into a one-stop place for research, despite the severe limitations of Google’s search technology, especially for the academic community. A puzzling aspect of this situation is the support for open access among many members of the academic library world, since open access materials discoverable through Google essentially serve to marginalize libraries’ role.

- Berkeley Electronic Press, to which I have served as a consultant, was founded by two academics who were frustrated by the practices of established publishers. BEPress has an interesting hybrid publishing model, which includes both open- and toll-access components.

- One could argue that posting something on the Internet is not necessarily the same thing as publishing it. I reviewed this distinction in “The Devil You Don’t Know: The Unexpected Future of Open Access Publishing,” First Monday 9, no. 8 (August 2004), http://firstmonday.org.

- PLOS’s program is evolving in an interesting and, I submit, inevitable way. With the launch of PLOS ONE, a new service, PLOS changed the rules somewhat, lowering its author fees, but also substantially reducing the amount of editorial review for each submitted paper. Thus, the percentage of accepted papers has risen, the overhead allocation per paper published is dropping, and the service may be well on its way to profitability. One can envision a time when PLOS ONE generates a sufficient surplus to support the other services, with their higher editorial costs. It is interesting to speculate whether PLOS will eventually take the next step: stop reviewing papers prior to publication, but provide robust software tools to encourage comments on already published documents—post-publication peer review. Such a service, as envisioned here, would be able to reduce author fees considerably and become, as it were, a mass repository for the research community. In order to evolve in this direction, PLoS will need to develop a sufficiently flexible software platform.

- I developed some of these ideas further in “The Processed Book” (First Monday, March 2003, http://www.uic.edu/htbin/cgiwrap/bin/ojs/index.php/fm/article/view/1038/959). Also see the Processed Book Project at http://prosaix.com/pbos.

- Everything is relative. In an early draft of this paper, I called the amount of capital to build this service “small.” One reader insisted that the sum (discussed toward the end of the paper) is in fact “large.” So here I am calling it “modest.” To put this into perspective, if this service were successful, the amortized development costs would come to a few cents per paper.

- Publications that are created with an advertising-based business model in mind represent a special case, which I don’t want to examine here. The essence of advertising is to develop and package an audience, which is then “sold” to advertisers; the customer is the advertiser, not the author or reader.

Discussion

1 Thought on "Revisiting: A 2008 Look at Open Access"

This is the publisher’s crystal clear view. Other aspects of “open”, which are especially beneficial for scientists, especially from lower-income countries, are completely bypassed by the author:

– there are countries where institutions do not “have access to most of the distinguished literature in the field”

– transparency and integrity of research

– or economic efficiency resulting from data reproducibility

and others. Iam just saying there are also other aspects which contribute to the current inclination towards openness, open access is just a part of it.