Editor’s Note: Today’s post is by Jay Flynn. Jay is the Executive Vice President and General Manager, Research at Wiley.

Research publishers are in the business of sharing information and solutions to the biggest problems that the global community faces – energy, food security, water safety, vaccines, and many more. With public trust in science low, the integrity of research publishing has never been more important. Unfortunately, that trust is increasingly strained by academic fraud, the most prolific source of which is paper mills.

What is a Paper Mill?

In recent years, publishers have seen an increase in research integrity issues stemming from systematic manipulation of the publishing process. Paper mills are at the heart of this. The Committee on Publication Ethics (COPE), a scholarly publishing industry organization, describes paper mills as “profit oriented, unofficial and potentially illegal organizations that produce and sell fraudulent manuscripts that seem to resemble genuine research.” Paper mills circumvent journal security in two ways: by manipulating the identities of participants in the publishing process, and by fabricating content that gets published.

Journal security is thus critical for trustworthy research communication. Without it, paper mills and other schemes will continue to fill journals with fabricated content, and damage society’s trust in peer review and journal publications. The scale of the problem will only increase as technology, like generative AI, becomes more widely adopted.

The Effect of Paper Mills on Hindawi

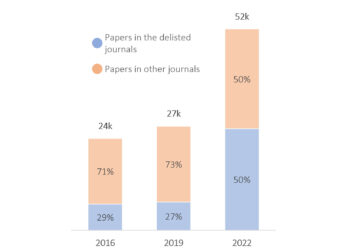

Clarivate recently delisted 50+ journals across the industry for not meeting its criteria. Among these were 19 Hindawi (owned by Wiley) journals. Additionally, retractions across the industry are on the rise, as Retraction Watch reports.

In Hindawi’s case, this is a direct result of sophisticated paper mill activity. The extent to which our processes and systems were breached required an end-to-end review of every step in the peer review and publishing process. One takeaway became immediately and abundantly clear: the environment in which we operate has changed, and the research community – publishers, researchers, and the companies that provide services to them – needs to change with it.

How Hindawi Addressed the Problem

In September 2022, Wiley identified paper mill activity operating at scale and immediately alerted the industry. Specifically, we found fraudulent outside editors who had subverted our processes and workflows, leading to a proliferation of bad content. This scheme hit Hindawi’s Special Issues program hard.

At Wiley, we take full responsibility for the quality of the content we publish across our portfolio. We have since reworked the Special Issues publishing process to close these loopholes and protect the scholarly record. Specifically, we paused publication of Special Issues, alerted other publishers and third-party providers to the presence of bad actors in their systems, added rigorous new checks throughout our publishing workflows, issued an initial 511 retractions, and introduced additional AI-based screening tools. We are currently in the process of retracting an estimated 1,200 additional compromised papers, and we are designing a new retraction process that will help us, and potentially others, handle this new era of mass retractions quickly and fairly.

While we have taken many concrete actions for both the short and the long term, we know there is much more to do. The reality is that the methods bad actors use are increasingly sophisticated. Fraud migrates, and shutting down or securing one journal will simply encourage paper mills to seek another target. This is why publishers must work together and devote substantial resources to ensuring the integrity of our journals and the content we publish.

Next Steps for Publishers

Publishers play an essential role in delivering journal security. We believe that we and our publishing peers must take these critical actions to protect the scholarly record:

- Increase our investment in both expertise and technology to support the early identification of unethical publishing behavior.

- Pursue effective and legal ways to share data about the bad actors who otherwise simply move from publisher to publisher, and expunge them from the tools, databases, and resources they exploit.

- Change the ways we work, including creating and embracing new systems, processes, and methods to eliminate fraud throughout the system.

Next Steps for the Industry

Publishers cannot – and should not – address this issue alone. All stakeholders in the industry (including research integrity officers, funders, academic institutions, learned societies, and, crucially, third-party providers of data and tools) must collaborate to address behaviors that undermine research integrity.

A whole-of-system response includes working together to fix the bad incentives at work in the research evaluation system and the metrics on which it relies. In short, beyond doubling down on uncovering and disciplining academic misconduct, we all have a crucial role to play in modernizing the system itself.

Moving Forward Together

We urgently need a collaborative, forward-looking and thoughtful approach to journal security to stop bad actors from further abusing the industry’s systems, journals, and the communities we serve. We’re committed to addressing the challenge presented by paper mills and academic fraud head on, and we invite our publishing peers, and the many organizations that work alongside us, to join us in this endeavor. I see this as a turning point in our industry. We need to make the right decisions, together. Our future depends on it.

Discussion

26 Thoughts on "Guest Post — Addressing Paper Mills and a Way Forward for Journal Security"

Jay, one loophole to plug is a step in the post-acceptance production workflow that allows the corresponding author to add or delete authors. I can’t think of any valid reason to allow this after acceptance. Once a paper is accepted, the editor never sees it again. This vulnerability would seem to facilitate one angle of paper milling: marketing authorship slots on soon-to-be-published scientific papers.

Keep up the good fight!

Thanks Chris – you raise an excellent point here. Authorship-for-sale isn’t something I’ve gotten into in this post, but yes, it’s definitely an issue, and we’ve seen evidence of this sort of fraud in private social media groups and on e-commerce sites scattered around the web. I’d be interested in what others think about the pros and cons of “locking” authorship once a paper has been accepted.

We’re very close to closing this down at IOP Publishing, and considering denying it at any stage after submission too to be honest. The proportion of requests we get far outweighs what we’d expect. We vet all requests thoroughly and deny quite a few, but it’s a very time-consuming task and as volume increases, I’m not sure we can sustain the level of scrutiny required – and of course someone motivated enough would just lie to us anyway. It would upset the handful of authors with genuine requests – but it may be a cost worth bearing if it prevents us publishing fraudulent work.

“We’re very close to closing this down at IOP Publishing, and considering denying it at any stage after submission too to be honest.”

Closing it at submission would be a complete no-go for submission to any journal that implemented it.

It is very common in my field, cancer and cell biology, to be asked by reviewers to include new data for the revision of the manuscript that requires the involvement, and therefore authorship, of people who were not on the submitted manuscript.

Closing it at the Accepted stage is fine though – indeed it should be mandatory.

Dear Mr Flynn,

To what degree do you believe that creating volume-based incentives to publish contributes to the problem you (and others) have observed? In our rush to embrace “open science” we seem to have lost some of the gatekeeper function that journals used to serve.

That’s a good point. A disadvantage of OA is that it creates incentives for volume, not necessarily quality. And poor-quality content feeds chum to science’s critics.

Hi Seth – I’d be hard-pressed to quantify this.

In my experience it’s generally true that as the business model shifts to Open Access (especially gold, hybrid, and diamond) there are economic pressures for individual journals, learned societies and publishers of all varieties to grow their published article volume. This isn’t a popular viewpoint in many circles, so that’s all I’ll say about this topic now at the risk of distracting from the paper mill discussion.

I guess where I net out on the other question is that it’s pretty clear that the “legacy” approach to scholarly publishing (chasing impact metrics; high manuscript rejection rates; a strong bias towards novel research vs. work that extends and builds upon others’ projects) has left a lot of scholars on the outside looking in.

One question that we could all ask is whether “gatekeeper” needs to be a synonym for the legacy notion of “high-quality” here. There needs to be a home in the scholarly record for case reports, clinical notes, field work, and sound science of all varieties. And journals that meet those needs, and the particular needs of early career researchers, do extremely valuable work.

In any case, the vulnerabilities that I (and others!) point out around identity manipulation, content fabrication, and authorship-for-sale may be slightly more acute in higher-volume journals or those with lower rejection rates. But from what I’ve seen, these issues are prevalent in every tier of the journal hierarchy. It’s a system-wide problem that all of us are going to need to address.

Thank you for making a plan to address this challenging subject. You might also find a way for Wiley and other publishers to work more collaboratively with independent parties who have worked hard to understand and expose these paper mills. Some, like Drs. Elisabeth Bik and Alexander Magazinov, do this work using their real names, others working on research integrity matters do so anonymously or pseudonymously, like Claire Francis and myself. Much of the time we report our concerns on PubPeer, to which publishers can subscribe.

Hi Cheshire – thanks for the work you do to uncover paper mills and other research integrity issues. As I mentioned above, I very much believe that this effort will require a global and multivariate approach on the part of a whole host of stakeholders. I should have mentioned independent research integrity experts, who play an important role here – that was a real omission. And yes, we are open to approaches that allow us to work more collaboratively together. Serious question: how do we best approach a collaboration and still respect the desire of some RI experts to remain anonymous?

Thank you for your kind words.

Off of the top of my head:

Actually, I think collaboration could be pretty easy to accomplish. PubPeer offers journals (and publishers?) the ability to subscribe to a “dashboard” that I believe would accomplish much of this. As you know, PubPeer posts are moderated, so this should effectively screen out concerns that do not seem credible to the moderators, or include information that is not publicly verifiable, etc. This would save a lot of time and effort for those of us who want to notify a journal/editor about a concern – right now we have to manually locate email addresses for editors or other journal contacts (which is not always easy). As you know, PubPeer permits anonymous posting, so those who choose to remain anonymous can do so. Via the dashboard, I believe the journal can post comments on PubPeer in response. https://pubpeer.com/journal-dashboard

Another alternative would be to create a private Slack channel that invited research integrity people could use to collaborate. This too would permit users to maintain anonymity. I don’t know enough about a journal’s or publisher’s workflow to know if this would be too cumbersome. Some of our loose-knit group of research integrity people already use this method to work with each other.

Lastly, just having a journal email address that is used for research integrity matters would be helpful. Very few journals and publishers do this now, but a few do. This last option is the least collaborative, but has the benefit of permitting a person reporting a concern (like me) to include contacts at a journal, responsible institution, and/or funding agencies on a single email message. The other two options above don’t solve that particular issue.

I think you should solicit feedback from people like Dr. Bik, who probably have thought more deeply than me about these things, but thank you for asking for my input.

Dear Mr Flynn, dozens of articles I have reported to Wiley/Hindawi during the last three years are still awaiting retraction. Maybe a first step to make things better would be to retract them?

Secondly, Wiley/Hindawi should cut its portfolio of journals in half. Many titles are appalling and do not add a cent to the progress of knowledge. What do you think?

First off, Wiley is entirely accountable for the quality of the journals we publish. When we became aware of the bad actors in the Hindawi Special Issues program, we shut the program down and began a review that looked at a) changes to the operating model, b) vulnerabilities to paper mills in the program itself and the gaps we need to close, and c) a process for issuing retractions at scale. So far, we have retracted more than 500 papers and announced that we will be retracting 1,200 more. Retractions are serious, and even the most egregious cases of research fraud need to be fully investigated following COPE guidelines. Nonetheless, we are committed to cleaning up our side of the ledger and will continue to do so transparently.

Could you expand on what you mean by “subverted our processes and workflows”? What were the loopholes? What specifically was being done (so others know what to watch for)?

Hi Karen – thanks for your question. Generally speaking, I think it’s fair to say that participation in the scholarly communications network has been based on trust between parties (authors, readers, reviewers). As a result, the digital landscape in scholcomm was not “hardened” against the kind of identity-based attacks deployed by the paper mills. These attacks are usually enhanced by fabricated profiles in (academic and professional) social networks and, crucially, in industry-standard databases that host author profiles and are searched for potential reviewer matches.

In Hindawi’s case, the Special Issue “Guest Editor” role was infiltrated by people posing as legitimate scholars. These bad actors presented themselves to Hindawi with manufactured credentials and then exploited the longstanding practice in journal publishing that allows Guest Editors a degree of independence in commissioning articles and recruiting authors. Legitimate Guest Editors draw on their professional and personal contacts to acquire submissions that a title might not normally receive. Illegitimate Guest Editors can use those same “decision rights” to hijack a title until they are detected.

I should point out some additional details here that apply to the whole industry: 1) Hindawi used over 70 automated manuscript checks in its editorial process and was still vulnerable to these types of attacks. 2) Special issues and proceedings are particularly vulnerable to this kind of manipulation, but any journal, regardless of editorial model, is susceptible to these types of fraud. 3) We are aware of instances where people have hijacked other legitimate researchers’ credentials without authorization, making the fraud even more difficult to detect.

Over the next few months, Wiley will be running a series of meetings to share what we’ve learned with our publishing industry colleagues, where we can get into more granular detail. I’ll share those details in the comments here once we have them.

As someone who has contributed to the exposure of scientific paper mill products (my favorite is the one with tabular data on ovarian cancer in males), I would like to point out that there is also industrial production of review articles that are plagiarized (including those that use repeated translation to generate a text that escapes detection) and that cite mainly paper mill products. It has proven nearly impossible to get them retracted.

“One takeaway became immediately and abundantly clear: the environment in which we operate has changed, and the research community – publishers, researchers, and the companies that provide services to them – needs to change with it.”

There is a solution: Teixeira da Silva, J.A. (2022) Does the culture of science publishing need to change from the status quo principle of “trust me”? Nowotwory Journal of Oncology 7(2): 137-138. https://journals.viamedica.pl/nowotwory_journal_of_oncology/article/view/86446 DOI: 10.5603/NJO.a2022.0001

Is this the same researcher profiled here: https://retractionwatch.com/2015/09/24/biologist-banned-by-second-publisher/ ?

“Biologist banned by second publisher.

Plant researcher Jaime A. Teixeira da Silva has been banned from submitting papers to any journals published by Taylor & Francis. The reason: “continuing challenges” to their procedures and the use of “inflammatory language.”

This is the second time Teixeira da Silva has been banned by a publisher — last year Elsevier journal Scientia Horticulturae told him that they refused to review his papers following “personal attacks and threats.”

If this is the same person, these older concerns certainly don’t invalidate the views expressed in this more recent publication. I would note, however, that approximately 50% of the citations in this paper are to the author’s own publications.

This post, and the response to some of the comments, does illustrate two fundamental problems in how Wiley has been thinking about paper mills.

For a start, as I’ve argued in a preprint (https://psyarxiv.com/6mbgv) there has been an underlying assumption that bad actors have managed to get their work past honest editors and peer reviewers. It’s clear that insufficient scrutiny by the publishers has let in dishonest editors who can then subvert the entire process by taking over special issues and filling them with junk. Unfortunately, the COPE investigation guidelines are simply inadequate to this situation.

Second, as Cheshire pointed out, sleuths have been at first perplexed and then angered by the publisher’s failure to make use of PubPeer, which provides them with free scrutiny of articles in their journals. The response by Jay suggests that the publisher won’t act if commenters are anonymous. This is a serious failure and misunderstands what PubPeer is. The comments there mostly describe factual issues that can readily be checked by reading the article. It does not matter whether an anonymous or identified person informs you that an article has manipulated figures, is totally unrelated to the topic of a special issue, or is pure gobbledegook – you can check that by reading the article. The inference is often drawn that the publisher is just not interested in putting things right because the explosion in special issues is so lucrative for them, but, as we have seen, their reputation is trashed by publishing garbage, and in the long run that is not a sensible strategy. So I think that, in their own commercial interests, they need to take out a subscription to PubPeer and start monitoring what is said there.

On the one hand researchers (or “researchers”) desperate for papers and citations. On the other hand publishers who get paid for publishing and whose overwhelming cost is editorial quality assurance. What could possibly go wrong? The only real solution is a revolution in the evaluation of researchers to focus purely on quality and ignore quantity. A place a million miles from where we stand now.

In the meantime, there are a couple of simple things publishers can and should do.

1) Know who your authors, editors, and reviewers are. Require them all at least to validate their identity with an institutional email. Even better, require an institution-validated ORCID (the validation is required because otherwise ORCID profiles can be faked). At PubPeer we have required institutional email addresses (for authors and signed accounts) from the beginning, so there is really no excuse for publishers.

2) Require full data sharing at publication. This is soon going to be a general requirement in the US anyway. It’s relatively straightforward to share data if you have it, but much, much harder if you don’t.

Thank you to those promoting PubPeer Dashboards. For the avoidance of any doubt, these are a paying service. As pointed out by Cheshire, they provide a channel for editors to learn from some of the truly expert users whose contributions create the value of the site.

Yes, this is the nub of the Hindawi issue: essentially, Wiley are shutting the stable door after the horse has bolted. Hindawi combines a for-profit publisher with open access, and that is potentially a formula for paper mills and everything else that has gone wrong with the rise of predatory publishing. On top of that, Wiley, with its good name in publishing, has unfortunately provided a degree of cover here, and it is hard to believe they were not aware of this when they took on Hindawi, which had a pretty chequered history before it was purchased.

I have found in reviewing for other open access legacy publishers that the standards I would apply to a subscription-only journal in peer reviewing a submitted manuscript are just not adhered to with for-profit open access where the default option is to publish, almost regardless of reviewer comment. This would be even more the case where a suspect title came under the wing of a respected legacy publisher.

There are several ways that Wiley, if it were serious about this, could address the issue. For a start, it could publish peer review reports alongside the final published manuscripts. Then we could all judge how searching they were or were not. Ultimately, it comes down not just to the failure to curb an excessive profit goal but probably also to the failure to change the culture at Hindawi. It is inconceivable that Wiley were not aware of the risks they were running with multiple special issues and opaque peer review and author attribution systems. A culture change is required, along with much greater transparency on basic metrics and processes. Otherwise we run the danger of a respectable legacy publisher providing cover for a highly suspect, if profitable, side hustle.

Thanks for the clarification, Boris. Though I believe the cost of the PubPeer Dashboard would be covered by a handful of APCs from a Hindawi journal, and so should not be a deterrent to Wiley.

Does anyone know if these retracted papers live on forever in repositories and preprint servers? Fraud will exist so long as the author’s incentives for publication vastly outweigh the costs. Wiley did the hard, right thing by retracting and going public. Paper mills will adapt to avoid current detection methods, ultimately be detected, and readapt. At least we have processes in place where respectable publishers maintain the versions of record. As we move from human to machine consumption, these issues become more important. Large language models are often not trained to know the difference between vetted science and junk science.

Flagging of retracted papers has improved immensely on PubMed, and some reference managers, like Zotero, display highly visible warnings when a saved record has been retracted. On the other hand, the number of people who search only Google Scholar and use no reference manager is worryingly high.

Wiley has completely failed as a serious publisher.

My experience since early 2020 is that Wiley is not really interested in fighting research fraud. We warned them repeatedly about paper mills in many of their journals, but their response was very slow, and only under intense pressure did they start to retract some of the papers. One example is the journal Biofactors, where we identified many obviously fabricated papers. Their first conclusion was that there was no problem, and the case was closed. Then, after more pressure, they retracted.

Wiley now publishes an extremely large volume of articles, most of which are of no scientific value and only contribute to misinformation and confusion.

Public funding is spent on distribution of misinformation and we need much more quality control in scientific publishing.

Yes, I have always held them in high regard, particularly in the area of Statistics. But I must say that regard has been tarnished by their poorly-managed association with Hindawi.

Hello everyone,

Thanks for all the details here, but it seems no one has discussed what will happen to the honest authors who worked really hard on their papers and got them accepted by one of these journals.

We submitted a manuscript to a high-impact journal, and after a long wait and a lot of effort to improve the paper during the review process, it was accepted but has not yet been published. Then this happened: the journal lost its ranking due to a paper mill issue and was closed by Hindawi.

Would someone please explain what could happen now? What should the authors do?

Thank you!