Discovery patterns and practices are changing steadily, as workflows adjust to new services and work around a variety of barriers. The best data about discovery practices are held by content providers, who are able to analyze the variety of sources that researchers use to reach their platforms. But while many content providers analyze their own traffic sources, they tend not to share these data with one another or publicly, making it impossible for them to know whether their patterns are typical or particular. And while libraries may know that the use of one or another discovery service they provide is growing or shrinking, they do not have access to the black box of web and academic search engines like Google and Google Scholar, making their view especially blindered. Given the limited availability of data about actual discovery patterns, all but the most sophisticated are forced to turn to the next best thing, which is survey findings.

I run Ithaka S+R’s US Faculty Survey, and our companion partnership with Jisc and RLUK, the UK Survey of Academics, both of which touch on discovery issues and both of which will release findings from their latest cycles this spring. I know the great value of survey research and also the cost of undertaking broad sector-wide surveys with rigor, and I am always frustrated to be unable to explore a single topic such as discovery with the depth I might wish in a single survey.

It was therefore with great interest that I learned of the publication this month of Tracy Gardner’s and Simon Inger’s How Readers Discover Content in Scholarly Publications. This is one of the broadest surveys ever conducted on discovery. It is international in scope, goes beyond the academic sector to include corporate, government, and medical users, and draws on some 40,000 responses.

Before looking at the findings, I must say a few words about method. The population surveyed is derived from the contact lists of a wide group of publishers. It was drawn from lists of authors, reviewers, and society members, as well as users who registered online for alerts, for mobile device pairing, and for other purposes. This type of convenience sample approach is, by definition, not representative of any single population, and yet nevertheless the researchers normalized the responses to match the demographics of the respondents in the previous cycle of this survey. In addition, the size of the sample is not indicated, presumably because several open calls for participation were used in addition to targeted invitations, so while the response rate is estimated at between 1-3% it is impossible to establish conclusively. I am sympathetic to the tradeoffs that must sometimes be made in order to conduct research in a timely fashion and in the available budget, but each of these is a serious methodological shortcoming. To their credit, the researchers were transparent about the project limitations (and very helpful in discussing aspects of the project with me upon my inquiry). But in interpreting the findings and considering potential business responses, scholarly information professionals should practice caution.

There are findings from this survey that make good sense and deserve attention on their own merits. Perhaps the most significant of these, which is a theme explored across the report, is that discovery patterns and practices vary across different sectors such as academic, corporate, and medical, different countries and levels of national income, and different fields and disciplines. A content provider with a global footprint, or with a list that cuts across fields, therefore has a much more challenging task before it than just optimizing for a single set of needs, and some publishers are aggressively developing their channels for discoverability as a result. A library cannot just follow the “best practices” of another institution whose user base may be rather different, and some libraries that have the resources to do so are developing services designed with their own researcher population in mind. Examining which sets of needs are of greatest importance for your own user communities, and how best to serve those needs, is essential for any information services organization.

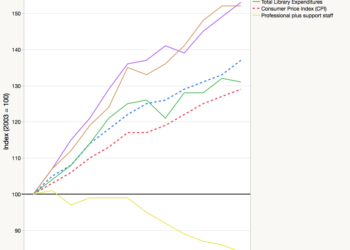

At the same time, one of the most apparently important findings is simply not, in my view, credible. The report finds a significant overall increase in the publisher website as a starting point for searches for journal articles on a specific subject (figure 4). Within the academic sector, it finds that the importance of the publisher website as a starting point has grown substantially in every field (figure 10). These findings are contradicted internally within the report, as well as being mismatched with the reality being experienced by many publishers. Within the report, there is a finding of steadily declining satisfaction with an array of publisher platform features, including “Searching” (figure 49). And among content providers, I am aware of many that have experienced an overall trend of fewer and fewer searches on-platform, with a growing share of traffic sourced from third parties such as web search. I fear that with this particular set of findings, the convenience sample, drawn from those with an existing close connection with content providers, is skewing the results.

Search is important, of course, but it is by no means the only way that researchers discover scholarly content. The report finds that search accounts for approximately 40-45% of discovery of the last journal article the respondent accessed, a figure that varies only slightly by sector (figure 30). While the report emphasizes that “search is dominant,” for me, the headline finding here is that the other means of discovery specified — everything from personal recommendations and social media to alerts and citations — collectively add up to drive more traffic than search. This will vary quite a bit by content provider, but it emphasizes the importance of not just seeing Google Scholar as one’s discoverability strategy.

The report distinguishes firmly between discovery and delivery, even though there are notorious challenges in establishing this distinction in online workflows. We have also seen in other research the challenge in establishing for academics the distinction between free resources and those that are licensed by the university library on their behalf and made available to them at no personal cost. So, we should be cautious in responding to the report’s finding that, in high income countries, academics source less than 40% of their journal articles from paid/licensed sources and the remainder from a variety of free/open sources (figure 38). Certainly, the share of access provided elsewhere may well be growing, but the idea that institutional repositories on their own account for fully one quarter of article access is just not believable in light of the small amount of journal content and usage in many repositories.

The researchers asked respondents about “your favourite online journals,” but I wonder if this is still the right formulation. In light of changes in the discovery environment, fewer scholars are keeping up with current scholarship in their field by browsing current issues or receiving alerts regarding new journal issues. Scientists such as chemists have reported that they face a “deluge” of relevant articles, are struggling to maintain current awareness in their field, and are therefore looking for improved current awareness mechanisms, not keeping up with a selection of favorite journals. Indeed, one of the report’s most important, consistent, and credible findings is the dropoff in importance of new issue or topic alerts (figures 26, 27, 34, 35, 49).

In addition to journals, the researchers examined the most important starting point when searching for scholarly books. While they did not break the findings out just for humanists, which would have been intriguing from a university press perspective, their findings for academia overall show some meaningful differences as compared with journals. In academia, library web pages, discovery tools, and search engines barely edged out general web search engines for first position, with online bookshops such as Google Books and Amazon Kindle (not Amazon writ large) in third position (figure 31).

Ultimately, it is impressive that Gardner and Inger have taken on such a large-scale study, which adds some additional context to our understanding of discovery, especially in its diversity. In a future cycle of this project, it is my hope that some of the methodological shortcomings can be addressed so that the study can provide stronger guidance for service models and business practices.

Discussion

23 Thoughts on "How Readers Discover Content in Scholarly Publications"

Mentioned in passing here is the comment from one scientist that they are “deluged.” Almost 40 years ago, there was a sidebar in a physics journal that said that if researchers were to track all the publications in their specialty area and not do their own research, at the end of the year they would be 10 years behind. The problem, of course, due in part to the publish-or-perish pressure, is that key items of value are often buried in what, to the researcher, could be called persiflage (of value, but to whom?).

Roger points out that the study went beyond the “scholarly” community, which is of interest here. Many of those researchers now have increasingly sophisticated “extraction” engines which are based on “narrative,” in that they use more than keywords: they can provide “profiles” or context and can extract the relevant text for further refinement. But while AI engines can write narrative from information extracted from large databases, they are not yet able to create metadata or narratives based on the extracted narrative.

At this point in time, for uncollated narrative across publications, scholarly and otherwise, all the techniques noted in this article, such as peer networks and citation indices, are important. But the bottom line here is that researchers, and even their human assistants, are unable to match the growing capability of well-trained deep learning algorithms, which in addition to extracting and collating are also quite capable of stripping out duplicates or items that substantially “duplicate” others.

Which brings up the interesting points:

a) The vestigial form of even scientific articles not only makes it difficult to sort through them for relevance but also increases the articles in length and quantity, especially when the work can be linked to the historic and relevant in the literature.

b) The recent articles about early citations and preprints coming out of the biosciences to parallel physics, especially by senior researchers, hark back to the “futures” where researchers shared with each other directly, eventually resulting in the first “transactions” or journals to extend access. The rise of AI could see a rebirth of global sharing, now AI-supported.

c) Extending “b”, it has been noted that “young” researchers are looking for “vetting” and journals provide that path. If such validation is the principal rationale, then that is a cumbersome and very expensive vehicle, and researchers need to seriously consider that the entire enterprise of formal academic publication needs disruption.

It is not how do readers discover but rather how do professionals in a field who are interested in a topic discover. That is entirely different from “readers,” which implies everyone who reads. When I was a busy guy (I am now retired and busy trying to be busy) and needed information in order to carry out my work, I had a method to accomplish that task. I would think folks who are actively engaged in research do the same. One may miss something, but that miss is seldom catastrophic.

Of course, if one is involved in the world of chemistry one can use CAS. I have often wondered why that kind of research tool is not available across the world of science.

All of the major publishers and perhaps most of the smaller publishers collect and analyze usage data on a continuing basis. There is a wealth of information captured by the publishers. It is too bad that this data could not be shared, but I know why it is not community property. Publishers know where their traffic is originating, referred from, what institutions are using down to the journal and even article level. Individual libraries can obtain their usage data from publishers. Google and its various country servers deliver usage so that a publisher can tell how much usage is from Japan vs. China vs Canada and I believe that for most publishers Google is still a dominant traffic source. I guess I am saying all of this to say there is a mountain of information out there and these surveys do their best to pick out some information. Right or wrong, the community remains quiet and lets the survey stand even though many of the findings are incorrect. Glad you pointed out some of the weaknesses.

We appreciate the time Scholarly Kitchen has given to the critique of our report. Given that it is largely negative, we would like to respond to a number of the comments made in the analysis. It seems that in his critique, Schonfeld picks up on the methodological shortcomings acknowledged in the survey and then uses them as the evidence with which to refute anything which is counter to his understanding of discovery and delivery. We would like to have seen a more balanced analysis of the report than this and we are disappointed in the one-sidedness of this critique.

Schonfeld, and subsequently Tonkery in his comment, have, in our opinion, a false view of the quality and accuracy of usage data and analytics held by publishers. In our experience of working with publishers we see three common causes of these inaccuracies:

A) IP access with optional proxy: Consider the example of a university in Australia with a remote campus in South Africa. If the publisher uses its access control system to drive its analytics, it will place a user from South Africa in Australia instead. If it simply uses the IP address of the client, then the analytics will place the user in South Africa, or potentially in the country where a proxy is remotely operated, often the USA or UK (and soon to be Holland for some EZproxy installations). So depending on the sophistication of the analytics setup, our reader in South Africa could be recorded as being from South Africa, Australia or Holland! For many publishers this renders their analytics inaccurate and misleading.

B) IP Error: We know that through accumulated procedural error, and in some cases malice, IP address databases held by many publishers are deeply inaccurate. Often users notice that a publisher web site places them in more than one location, i.e., when they arrive at a publisher website the website will list two, often completely unconnected institutions, that they are part of. If the user goes on to download an article, then the publisher’s COUNTER statistics often increment a download for each account, invalidating the publisher’s own data, and the data it supplies to libraries.

C) Referrer Data: In terms of referrer data we know that publisher web sites only see the last referrer, not necessarily where the reader starts their journey. Google Scholar, A&Is and a whole raft of other services are re-intermediated by library technology. The referrer becomes the library but it is not where the user started their journey.
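Point C can be illustrated with a minimal Python sketch. It is not drawn from the report or from any publisher's actual analytics: the journeys, the service names, and the helpers `last_referrer` and `starting_point` are all hypothetical, and the sketch assumes each journey is an ordered list of services ending at the publisher site.

```python
from collections import Counter

# Hypothetical reader journeys (illustrative data only). Each list is the
# ordered sequence of services a reader passed through, ending at the
# publisher site.
journeys = [
    ["Google Scholar", "library link resolver", "publisher site"],
    ["A&I database", "library link resolver", "publisher site"],
    ["Google Scholar", "publisher site"],
    ["library discovery service", "publisher site"],
]


def last_referrer(journey):
    """The hop immediately before the publisher site: what the web logs record."""
    return journey[-2]


def starting_point(journey):
    """Where the reader actually began: not visible in the publisher's logs."""
    return journey[0]


# Tally both views of the same four journeys.
seen_by_publisher = Counter(last_referrer(j) for j in journeys)
actual_starts = Counter(starting_point(j) for j in journeys)

print("Last referrer (publisher's view):", dict(seen_by_publisher))
print("Starting point (reader's view):  ", dict(actual_starts))
```

In this toy data the library link resolver is the most common last referrer even though no reader started there, which is the kind of gap between referrer logs and starting points described above.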

There is a proposed NISO project to address some of these issues: http://www.niso.org/news/pr/view?item_key=c2ab810f6ee30db6113af9bd6638748d5caf94f7

Surely Schonfeld doesn’t believe, in the face of this evidence, that publisher web logs contain all the answers? Tonkery went on to state in his comment: “Publishers know where their traffic is originating, referred from, what institutions are using down to the journal and even article level. Individual libraries can obtain their usage data from publishers. Google and its various country servers deliver usage so that a publisher can tell how much usage is from Japan vs. China vs Canada and I believe that for most publishers Google is still a dominant traffic source.”

This is an overly simplistic and inaccurate assessment of what can be recorded by libraries and publishers – this is explained in the introduction of the report.

The survey does report some surprising results. The rise in the importance of publisher web sites for search is surprising to us, but not impossible to rationalise, and certainly not incompatible with findings elsewhere in the report about how much publisher web site features are liked. We discussed this with Schonfeld by email prior to his critique. The growth in importance could simply be put down to better visibility and more sophisticated marketing from the publisher. After all, publishers spend a lot of money promoting themselves and their own websites, and some of this marketing must have an effect. People may simply be more aware of the publisher website than before. Respondents could feel that other services have become less reliable or affordable. If users do not know about Google Scholar (as many in lower income countries and sectors outside academia do not appear to), they may have noticed Google displaying fewer scholarly results since its change of indexing policy for subscribed content several years ago and so have sought out alternative resources. For people with less knowledge of or access to alternative resources, the publisher website may well be the next best port of call.

When we started to dig further into the results in order to understand this finding in more detail, one of the first sets of response data we looked at was Wiley’s. Our initial thought was the rise in publisher web site popularity was somehow a Wiley effect, that Wiley’s engaged clients really like the Wiley web site. But then we saw the effect repeated across many other publishers who took part in the mailing. Perhaps most significantly we saw the same increase in the data from the CUP mailing, showing a clear change from the data from CUP’s involvement in the survey in 2012. So we very much think this is a real effect.

On the second point about a supposed contradiction in findings, once again it can be rationalized. Someone can feel a service is important but not actually like the way it provides that service – for example, a respondent could have said that they felt the publisher website was “somewhat important” when they were looking for articles on a certain subject but felt that the search functionality itself wasn’t particularly useful. After all, there are other ways to use a publisher website than to hit the “search” button.

Schonfeld then dismisses the survey’s findings on delivery, where we see that readers perceive that over half of their downloads are from free incarnations of content, and as a result completely ignores one of the main findings of the report. We reported that PubMedCentral seems to be a significant provider of content for people in the medical sector: “people in the medical sector say they are accessing journal articles from a free subject resource 25% of the time. This is significantly higher than all other sectors in high income countries”. We would like to note that the categories listed in this particular question were: the publisher website, journal website, full text aggregation or journal collection; a free subject repository; an institutional repository; Researchgate, Mendeley, or other scientific networking site; a copy emailed by the author. So the question we asked was not as simplistic as free resource vs paid resource. However, whether this figure is completely accurate or not is perhaps a somewhat moot point. One of the key messages is that if readers perceive that the majority of their needs are met through free resources then this has to have an impact on how much they are willing to support library budgets and subscriptions in the future! To ignore it could be deeply perilous for publishers.

Apologies for such a lengthy posting. We know a lot of people read this blog so thought it important that publishers are armed with more up-to-date thinking than what was being offered. The reason for running this research in the first place was because the research that is out there does not address the whole picture of discovery and we work with many publishers who have asked us for our help in understanding the landscape and maximising their content distribution strategy. The introduction of the report does explain the reasoning behind the research.

We recognise that our survey does, in places, challenge the status quo, and does not quite meet with some people’s personal or professional agendas, but it is probably more useful to be open to challenges rather than pretending they don’t exist.

David, was that question for us or Roger? In the email notification I received it looked like your question was to Roger but it’s not clear from what has been posted here. Thanks.

Regarding the use of the publisher’s website for topical searches, I think this finding may well be credible. To begin with there are a variety of very different things that people are doing when they access the literature. I have yet to see them cataloged. Different strategies are appropriate for different goals and the mix may change over time. Then add to this that publishers have added a lot of search functionality, especially the more-like-this feature, which I sometimes use extensively.

Then too, note that one of the specific functions of journals, and collections of journals, is to aggregate articles on a specific topic. If I am interested in funny walks it makes sense that I would start by exploring (not just searching) the Journal of Funny Walks. My research indicates that researchers are nomadic when it comes to topics, so they are often exploring new realms. Starting with the lead journal is a way to do this.

I think we need to distinguish search from exploration and exploration from the other, often very different, uses of search. The basic point may be that discovery is far too broad a concept.

We have seen absolutely no increase in the use of “on-platform” or “on-journal” search across our publishing platform (use remains fairly steady but very low). I have been told similar things from other publishers and from platform providers. If this had suddenly become a major route to discovery, one would think that we would actually see an increase in the number of such searches happening, but we haven’t.

What are you including in searches? As I explained, exploration and using the search function are very different. In my exploratory work I never use the search box but I spend a lot of time on a publisher’s site. For example, how are your more-like-this stats trending?

I am referring to their statement that accompanies figure 4, “All search resources that are under publisher control – publisher website, journal alerts, journal homepage and society webpage – have made gains”

Several of these have seen general declines across the industry, at least in my experience and those reported by others. I do not have data at hand on recommendation usage, but all use of the search engine internal to our journals/platform has been steadily low, no significant increase.

You have not addressed my question. What does “use of the search engine” include, as far as complex search behavior is concerned? The survey seems quite broad in this regard.

Moreover, surprising results are often the most important in science, including the science of scientific communication. It strikes me that you and Roger may be being unduly dismissive, rather than inquisitive. I am no fan of surveys but I dislike anecdotal claims even less. You and those you talk to may be early adopters, in which case you will miss trends driven by mid to late adoption.

Use of the search engine means, literally, use of the search engine. How many searches are performed on our journals sites/platform using the search engine.

The question is what fraction of site search behavior involves using the search engine? I suspect it is relatively small, so we are simply talking past one another. The survey is not about use of the search engine.

I am making a simple statement. If “all” search resources were seeing significant gains, we’d see it in our usage logs. We do not.

Now you have changed the metric, from search engine activity to usage logs. Neither is a measure of what this survey is about.

Sigh, indeed. I guess you are speaking a special language. Where I come from (DOE OSTI) usage and the search engine are very different. Usage means things like page views, downloads, etc., which have nothing to do with the search engine. The search engine is activated when people do searches, of which there are various sorts, and only then. If you have not implemented this distinction between usage and search you might try it.

But again, the survey issue addresses neither per se.

Sorry I misread your usage logs note to include all usage. Please disregard my previous comment.

No, their statement does not mean that your search engine usage logs need show an increase. As I keep saying, there are many ways of searching a publisher website that do not include using the search engine. Their survey is not specific to using the search engine, so there is no such implication. How could it be since users do not know when they are or are not doing that?