Everyone (of my generation, anyway) knows the story of the Van Halen M&M Rider: this was a provision in Van Halen’s touring contract that required each venue to provide the band a large bowl of M&M candies with all the brown ones removed. One reason everyone knows the story is that it so clearly exemplifies what was wrong with rock ’n’ roll in the late 1970s: arrogant rock stars had become used to getting whatever they wanted in whatever amounts they wanted, their most absurd whims catered to by a support system of promoters and managers who were willing to do whatever it took in order to get their cut of the obscenely huge pie. The story was perfect, and it was all too easy to imagine the members of Van Halen, swacked on whiskey and cocaine, howling with laughter as they made their manager add increasingly-ridiculous items to the band’s contracts.

In other words, the standard explanation for Van Halen’s M&M rider — that it was a classic expression of bloated rock privilege — is a hypothesis with a great deal of face validity: it simply makes good intuitive sense, and is therefore easy to accept as true.

Like many hypotheses with a great deal of face validity, however, it turns out to be wrong. Not just imprecise or lacking in nuance, but simply wrong. The reason that the members of Van Halen put the M&M rider into their contract had nothing to do with exploiting their privilege or with an irrational aversion to a particular color of M&M. It had to do with the band’s onstage safety. As it turns out, other provisions of the band’s contract required the venue to meet certain safety standards and provide certain detailed preparations in terms of stage equipment; without these preparations, the nature of the band’s show was such that there would have been significantly increased danger to life and limb. The M&M rider was buried in the contract in such a way that it would easily be missed if the venue’s staff failed to read the document carefully. If the band arrived at a venue and found that there was a bowl of M&M’s in the dressing room with all the brown ones removed, they could feel confident that the entire contract had been read carefully and its provisions followed scrupulously — much more confident than they would have been if they had simply asked the crew “You followed the precise rigging instructions in 12.5.3a, right?” and been told “Yes, we did.”

What does this have to do with scholarly communication? Everything. In scholarly communication (as in just about every other sphere of intellectual life), we are regularly presented with propositions that are easy to accept because they make obvious sense. Sometimes these are accompanied by rigorous data; too often they are supported by sloppy data or anecdotes. Sometimes they aren’t supported at all, but are simply presented as self-evidently true because their face validity is so strong.

In scholarly communication, we are regularly presented with propositions that are easy to accept because they make obvious sense.

A classic example is the citation advantage of open access (OA) publishing. It seems intuitively obvious that making a journal article freely available to all would increase both its readership and (therefore) the number of citations to it, relative to articles that aren’t free. By this reasoning, authors who want not only broad readership but also academic prestige should urgently desire their articles to be as freely available as possible. But the actual data demonstrating the citation impact of OA is mixed at best, and the reality and significance of any OA citation advantage remains fiercely contested (for example, here, here, here, here, here, here, here, and here).

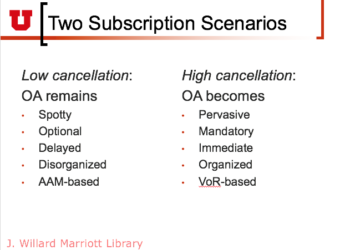

Another example is the impact of Green OA on library subscriptions. It makes obvious sense that as more and more subscription content becomes available for free in OA repositories, subscription cancellations would rise. This is a hypothesis with obvious face validity, and yet despite the steady growth of Green OA over the past couple of decades, there is not yet any data to indicate that library subscriptions are being significantly affected. In my most recent posting in the Kitchen, I proposed that the reason we haven’t seen significant cancellations is that Green OA has not yet been successful enough to provide a feasible alternative to subscription access; others have argued that there is little reason to believe that Green OA will ever harm subscriptions no matter how widespread it becomes. There probably won’t be sufficient data either to prove or to disprove the hypothesis definitively for some time.

Another example of a scholarly communication hypothesis with strong face validity is the proposition that if funders make OA deposit mandatory, there will be a high level of compliance among authors whose work is supported by those funders. Do the available data bear out this hypothesis? Eh, sort of. The most recent analysis of compliance with the Wellcome Trust’s OA requirement found 61% of funded articles in full compliance — not exactly a barnburning rate. In 2012, Richard Poynder determined that the compliance with the National Institutes of Health’s OA mandate was a slightly more impressive (but still not stellar) 75%. As far as I can tell, compliance data are not available from the Gates Foundation or the Ford Foundation, both of which are major private funders of research in the United States and are of course under no obligation to provide such figures publicly. (If anyone has access to compliance data for these or other funder mandates, please provide them in the comments.)

The danger of a false but valid-looking hypothesis increases with the importance of the decisions it informs.

What these three examples suggest is that the face validity of any hypothesis is a poor guide to its actual validity. Some hypotheses with high face validity (like the OA citation advantage) start to buckle under rigorous examination; some (like the impact of Green OA on library subscriptions) may turn out to be valid and may not, but there’s no way to know for certain based on currently-available evidence; for others (like the impact of funder and institutional mandates on authors’ rates of article and data deposit) the supporting data is somewhat mixed. This suggests that deep caution is called for when one encounters a hypothesis that sounds really good — and even more caution is indicated if the hypothesis happens to flatter one’s own biases and preferences.

The current political landscape in the U.S. and Europe has many of us feeling an increasing level of concern about whether important decisions are being made by individuals, by government agencies, and by political leaders in the face of solid and reliable evidence or based simply on what sounds good. Face validity is seductive, which makes it dangerous — and the danger increases with the import of the decision, and with the degree to which the decision-maker is truly relying upon face validity rather than on actual data, carefully gathered and rigorously analyzed.

Discussion

35 Thoughts on "The Danger of Face Validity"

Evidence-based policy and evidence-based medicine spring to mind. As opposed to what, one might ask. Oh brave new world, etc.

Great post, and the Van Halen/M&Ms story is one of my favorites. I think a key aspect to why some assumptions gain such traction isn’t that they appear valid or “make obvious sense.” Rather, I think some ideas gain traction because they’re emotionally gratifying, the same way it was emotionally gratifying to think that a rock star’s demands about colorful candies were vain and silly and self-indulgent, while in fact that requirement was canny, smart, and insightful.

The idea that free content could actually gain more citations is emotionally satisfying — it would make people happy if it were true, and lead to other emotionally satisfying observations. When it turned out not to be the case, the reaction wasn’t, “Well, those are the facts.” Rather, the reactions have been more about emotional dissatisfaction, which manifests itself in making another run at the question until an emotionally satisfying answer is achieved.

We live in a media age that caters to emotional gratification. As you note, “what sounds good” isn’t enough. Insisting on solutions that make us feel good isn’t going to work, either.

I agree with this, but I would like to add that I could also believe the opposite. That is, as well as having a tendency to believe satisfying news at face value, we may also be inclined to believe horrible news, if they are aligned with our prejudices. It is the nuanced news that many seem to have an aversion to.

PEER REVIEW While I take your point about OA publishing, the principle also applies to research itself. Follow the conventional wisdom (usually quite obvious) and get grants, grants, grants! To have original ideas and attempt to act upon them can be akin to professional suicide, especially for those just entering a field (See Peer Review).

It only goes to show that if it walks like a duck and quacks like a duck it may be a muppet!

Re. Van Halen’s candy shenanigans: why not have an engineer check & verify that the rigging is up to par instead of counting on M&Ms as a reliable indicator of venue safety? Seems like that system could have been easily gamed once the promoters caught on — just remove brown M&Ms and you’re all good.

Re. OA citation advantage: the matter has not yet been rigorously i.e. experimentally examined; it’s merely been observed in an uncontrolled environment. With proper controls there is indeed a resounding OA citation advantage.

With proper controls there is indeed a resounding OA citation advantage.

Really? Can you provide citations? Because the randomized, blinded, controlled trials linked above all show no citation advantage. I’ve only seen the advantage shown in observational studies, not in an actual experiment, but if you have a collection of actual trials, I’d love to see it.

David, there is a single article using a randomized controlled trial approach up there, it is Phil’s article, and it was so poorly designed that it doesn’t prove anything. Apart from an article that examines JSTOR (not OA) and see a positive effect on citation using a panel method, most of the others are just attacking the citation advantage hypothesis by saying there is no robust data to support the claim but propose no data of their own to refute the hypothesis. What is often being proposed in these pamphlets is the way more damaging hypothesis for the publishing industry (again unproven and not supported by robust data) that is there is an OACI, it is due to a selection bias. In essence, if it was true, this unproven hypothesis suggests there is little point in subscribing to journals as the more than 50% of articles freely downloadable online tend to have a selection bias. If this is the case, why subscribe to journals? To access the lesser quality articles that were not selected for online access? If this is the case indeed (which I personally doubt but I have no data to to refute as it is largely a conjecture), then Rick should examine the alternative hypothesis that libraries will stop subscribing to journals as they contain articles of lower quality (the adversely biased, non-selected one). So libraries may not stop their subscription because of the quantity of OA, but the positive selective bias – save library patrons time who will not have to read the poorer papers, and save money by not subscribing to journals just to access the poorer quality papers. As the unproven hypothesis of the selection bias is mostly supported by the publishing industry, most of the observers will fail to understand why there is so much negative energy being spent on such a self-destructive hypothesis.

More rationally, libraries are going to switch to OA in large part because of necessity: most libraries’ budget is not increasing as fast as subscription prices. Also, the system is changing, in addition to a lot of green, there is a lot of gold out there – between the gold journals, the hybrids, and the delayed gold access. Gold is increasingly providing a source of potent source of academic knowledge, though because of the youth of many journals, there is a frequently a citation disadvantage (using the same million-level articles test size and the same methods we use in our measurement of citedness which control for articles’ age and fields; and by the way for which I agree with critiques could use even more controls, if only we had the time or financial resources to do it). A careful protocol would likely show that gold is progressively increasing its acceptability, and citation impact but again, this is just a hypothesis and I haven’t taken the time to carefully measure this.

Eric, can you tell us what’s wrong with the design of Phil’s study?

Rick, I’ll get back to you on this. I read Phil article twice, once shorty after it came out, and once more when David Crotty attacked my “observational” study on the SK. I would prefer to call this type of study of “epidemiological” as David has unilaterally decided that theoretical conjectures were preferable to careful observations, which is one of the foundations in the scientific method. In spite of what David proposes without any epistemological justification, experiments are not the only valid methods in science and flawed experimental designs are not valid scientific proofs. This is especially the case when there is only one such study based on a comparatively small experiment, limited in time observation window, measurements taken in a partial population of among a widely more encompassing observation set.

As I mentioned, I’ll read it again tonight and will come back to you with more detailed caveats that Phil should have mentioned. Again, I agree that my own studies could have more controls. But to say that Phil’s was a robust study just because the title was fancy and the protocol equally fancy in some respect, is missing the point. Minimally, he should have studied the green variable with much greater care as his protocol essentially concentrated on a gold-journal experiment, and used only a one-year window for the measurement of citations, that is, if my memory serves me well.

Please don’t attempt to speak for me. I did not at any point “unilaterally decide” that theoretical conjectures were preferable to observations. I did (unilaterally, I suppose, for I am but one person) state that experimentally testing a hypothesis provides evidence toward causation, whereas observational studies provide evidence of correlation. If you would like epistemological justification, the explanation is fairly simple — in the observational studies, there are too many confounding factors that can’t be eliminated (e.g., do papers from better funded labs or better known labs get more citations than those from labs that are less well-funded or well-known, and how do these factors correlate with OA uptake?). Because you can’t retroactively eliminate these confounding factors, at best your conclusions must be tempered — we see a correlation, but we can’t be sure of the root cause. An experimental approach allows one to set up conditions where those confounding factors are either eliminated or controlled for, with the one remaining variable being the test subject, allowing one to see if it is indeed causative.

Let’s also note that there are lots of observational studies that supply the exact opposite conclusion of the one you promote:

http://www.sciencedirect.com/science/article/pii/S0300571216300185

http://www.mitpressjournals.org/doi/10.1162/REST_a_00437#.WMq5aRjMygw

As but two examples, why are these studies wrong and yours correct?

Further, criticizing the Davis study because it did not study a different subject (Green OA) does not invalidate the conclusions on the subject it did study.

Just looking at the abstract, conflation of ‘free access’ with ‘open access’ should be an immediate red flag. Furthermore, incomplete/insufficient dataset implies a fundamental misunderstanding of OA c.a. — and the way to properly measure it — on a conceptual level.

But in order to evaluate the article you need to look at more than just the abstract. The sample the authors actually took for their study appears to me to consist entirely of OA articles. Does it look different to you?

(T)o say that Phil’s was a robust study just because the title was fancy and the protocol equally fancy in some respect, is missing the point.

I don’t think anyone is saying that Phil’s study was robust because it has a fancy title and a fancy protocol. The assertion on the table is that Phil’s study was robust because it controlled for intervening variables. I’m surprised that you can’t say immediately what you found wrong with it, since you asserted very quickly and confidently here that his study “is so poorly designed that it doesn’t prove anything.” But I’ll be happy to read whatever support you can offer for that assertion whenever you feel ready to offer it.

I did, but in retrospect figured its main flaws are conveniently noted in the abstract so no point doing it again really. It goes scuba diving and concludes birds do not exist essentially. Or at least that’s how it’s generally been interpreted in these parts.

So the flaw in the study is that it didn’t study the thing you wanted it to study? It seems to me the study asks a specific question and does a decent job of setting up experimental conditions to answer that question. You can certainly argue that other questions are valid to ask, but that does not make this particular study invalid, nor does it invalidate the carefully stated conclusion drawn.

Sorry for all the typos.

Firstly, it is important to state that this paper doesn’t examine the citedness of green self-archived papers. It is a bizarre experimental setup where the majority of the articles are from delayed open access journals, which for the time of the experiment (1 year), the treatment group is turned into something akin to hybrid OA articles, before more than 90% of the articles become OA for the measurement period. This is not what would call an ideal experimental environment to start with. A substantially more robust analysis of the impact of hybrid OA articles has been realized in 2014:

Mueller-Langer F & Watt R (2014) The Hybrid Open Access Citation Advantage: How Many More Cites is a $3,000 Fee Buying You? Max Planck Institute for Innovation & Competition Research Paper No. 14-02. Available at SSRN: http://ssrn.com/abstract=2391692 or http://dx.doi.org/10.2139/ssrn.2391692

Interestingly, that study corroborates the results of Davis’ study so despite its limitations Davis’ paper should raise the same kind of concerns as those mentioned by Mueller-Langer and Watt about the value of hybrid APCs.

Now, in greater details, in Davis’ paper, the citations were measured over three years – but the controlled experiment only lasted one year “for pragmatic reasons”. The pragmatic reason is that most journals selected were delayed open access journals (all after one year, and one journal provided free access after 6 month). A properly controlled experiment would have avoided this pragmatic effort instead of accepting to build a study mostly on delayed open access journals which may not be representative of the general population of journals. In Davis’ study, 81.5% of the articles in the treatment group were published in delayed open access journals, and 90.6% of the articles in the control group came from delayed free access journals. If the general population of journals behaved like those in that “controlled” study, about 90% of the total population of papers would be free after one year which is clearly very far from even the most optimistic measure of OA availability. Hence, the randomized experiment did not start with a very robust way of assuring that the test environment was representative.

The paper mentions that “Authors and editors were not alerted as to which articles received the open access treatment. For some journals, treatment articles were indicated on the journal websites by an open lock icon.” For a proper blind experimental protocol, this sentence should have read “Authors and editors were unaware that a study was being conducted. Treatment articles were always undistinguishable from the control group”. This is weak experimental protocol as it is easy for authors and editors to know which articles are openly accessible or not and to alter the experiment. A properly controlled experiment cannot simply wish that actors who have the means, and an interest in altering the course of an experiment will be honest and won’t willfully affect the results, should they want to. In a placebo procedure, patients have a substantially more difficult barrier to determining if she was administered a placebo or not. If there is an open lock icon, isn’t it a clear signal that the article is in the open group which nullify the statement “Authors and editors were not alerted as to which articles received the open access treatment”. But conversely, if the treatment group doesn’t have a sign to signal that the paper is open, then it is more likely that users won’t spontaneously open this article to download it. Why would users try all articles in the hope that some of the them would be mistakenly free in an another fee-access paper. As one can see, it is extremely difficult to control this type of experiment in an absolute robust manner, and in this respect the article doesn’t control for the effect of having an open lock icon or not: if there is an open lock icon, you expose the experiment to tampering, if you don’t, then you limit the signal the paper is open and potentially reduce uptake.

Davis wrote that “To obtain an estimate of the extent and effects of self-archiving, we wrote a Perl script to search for PDF copies of articles anywhere on the Internet (ignoring the publisher’s website) 1 yr after publication”. That method was highly imperfect. What is the recall and what is the precision of that PERL script? There are probably half a million sites harboring freely available versions of papers. What method did that script use to harvest these data from the myriads of sites potentially containing green OA? The author mentions: “Articles that were self-archived showed a positive effect on citations (11%), although this estimate was not significant (ME 1.11; 95% CI, 0.92–1.33; P = 0.266). Just 65 articles (2%) in our data set were self-archived, however, limiting the statistical power of our test.” Over a four-year period (experiment year + 3 years of measurement), way more than 2% percent of papers surely became green OA, it should have been between 8% and 20% (400% to 1000% more) if we trust measures taking at that time by Harnad and Björk and their co-workers. Importantly, most of the literature that has mentioned an open access citation advantage studied green OA but that controlled experiment failed to do justice to that most important part of the study and in the end concentrated on a protocol useful to study hybrid OA. So there was an effect in the direction observed by others for self-archived OA, but the puny sample size of the experiment and inadequate efforts expanded in measuring green OA limited its usefulness.

“As we were not interested in estimating citation effects for each particular journal, but to control for the variation in journal effects generally, journals were considered random effects in the regression models”. These were not randomly selected journals. They were all available on HighWire Press platform and more than 90% of the experiment group were open access anyway after one year (delayed open access). This is hardly a random selection of journals and the controlled experiment had to be limited to one year instead of four if a more random selection of journals had taken place. So this is a randomized selection of articles from a non-random journal set.

A last thing, yes we all agree that variables such as article length has an effect on citation. Importantly, there are thousands of variables such as that one which are potentially acting as confounding variables. The question that needs to be answered is what such variables are likely to be non-randomly distributed between two groups of observations or experimental groups. In the study we have performed in the past to test whether there was a difference in citedness, we have normalized data for year of publication, article type, and research specialties. We may have missed the number of author as, everything being equal, the more authors on a paper, the more likely that the paper will be self-archived. If this enough to account for the difference in citedness we observed, I doubt it but I have an open mind and would gladly accept the result if it was shown in a robust study.

We know that the number of authors plays a role in increasing the citedness of papers hence there is likely a bias here, and as such this variable should be controlled. I doubt that the number of pages is different in OA and non-OA papers, but controlling for this is trivial so it should be taken on board. Other than that, David paper didn’t control for other variables we don’t take into account so that wasn’t the all out control paper which the title made it sound like.

Still, one could always come with more or less frivolous ideas and jam everything. For example, one could always loudly that OA papers are published by older people and these are more likely to be highly cited. Difficult to control, Davis didn’t do it either. One could claim that some labs are better than others and maybe these have a greater propensity to have their papers in OA, and hence would be more likely to have more citations. Davis didn’t control for that either, quite difficult to do in fact with large sample size but feasible in the small types of study Davis undertakes. Where I want to go with this is that it’s easy to discredit studies on the amount of control that went into them or not. Was Davis studies flawed because he failed to control for age and laboratory prestige, perhaps and if it is so then the OACA deniers should drop their last weapon and simply say like climate-change deniers that we don’t know anything. I don’t buy that however, repeated measurements with sample sizes in the thousands, hundreds of thousand, and million of papers with reasonable controls repeatedly point to a citation advantage. We don’t know yet whether citedness derives from openness or from a form of selection bias (I would think both are at play), either way it is good for the supporters of openness as they either get increased impact of science due to open access or increased quality of the freely available papers compared to the remaining ones that are acquired through subscriptions.

Either way, a proper experiment is the only way to legitimately and conclusively settle that question. Until then it’s just your hunch against mine really, isn’t it.

It doesn’t study what it purports to study; my wishes have nothing to do with that. It may ask and answer a specific question, but not the general one — whether or not OA c.a. is a thing at all — remains open still.

Your comment raises many questions:

Phil’s article, and it was so poorly designed that it doesn’t prove anything.

This is an unsupported, inadequate critique. Specifically, what are the flaws in the experiment’s design, and how do they potentially invalidate the conclusions reached?

Again I ask, where is the experimental evidence supporting a citation advantage. Every study that purports to show such an advantage is an observational study that at best shows a correlation, not a causation. The correlation between OA and increased citations is just as valid as the correlation between ice cream sales and murder (http://www.tylervigen.com/spurious-correlations).

…If this is the case, why subscribe to journals? To access the lesser quality articles that were not selected for online access?…

This entire argument is based on flawed ideas. First, it requires citation to be the only valid indication of quality research. Many fields have very different citation behaviors, and article types like those seen for clinical practice or engineering often see very low citation rates but high readership. Are these then automatically “low quality” articles?

Second, you assume that librarians care about citations in making their subscription decisions. This is a misunderstanding of how and why journals are purchased. Librarians are charged with meeting the needs of the researchers on campus, not with selecting only journals they think are important or good. Purchasing decisions are based on campus demand and usage, not on perceptions of quality based on citations.

More rationally, libraries are going to switch to OA in large part because of necessity: most libraries’ budget is not increasing as fast as subscription prices.

And this is another flawed argument. As the California Digital Library showed, a move to OA means increased costs for productive research institutions (http://icis.ucdavis.edu/?page_id=713). Given that the US president just proposed 20% cuts to the NIH, DOE and 10% cuts to the NSF budgets, where is all this extra money for OA going to come from?

I also object to the sales job being done for OA by promising authors they can get more citations by paying money. I find this ethically questionable, telling them they can buy prestige and career advancement. It exemplifies the worst flaws of a “rich get richer” system.

A careful protocol would likely show that gold is progressively increasing its acceptability, and citation impact but again, this is just a hypothesis and I haven’t taken the time to carefully measure this.

So your arguments are based on feelings and guesses, rather than controlled experiments? Fair enough.

>Phil’s article, and it was so poorly designed that it doesn’t prove anything.

>This is an unsupported, inadequate critique.

David, you are right, I didn’t support my claim, I will tonight after re-examining Phil’s article a third time. The critique is adequate as this article is interesting, but certainly doesn’t trash all those in here:

> Again I ask, where is the experimental evidence supporting a citation advantage.

Good strategy, you deny that any science that doesn’t use the experimental method is trash so you’re left with one study to support your pamphlets. You’re on your own to trash 2000 years of scientific progress based on a plurality of non-experimental methods (if only experimental methods were valid, as a case in point, OUP would publish far fewer scientific articles the it does). I’ll stop here on that argument as it is not even more arguing about. The onus to trash all other methods is on you. Here are several studies examining this issue for those who are willing to read papers instead of passing an a priori judgment based on a private view, restrictive view of scientific methods:

>Every study that purports to show such an advantage is an observational study that at best shows a correlation, not a causation.

You are conflating two things. Citation advantage, and explanation for this. The first question is is there a citation advantage? There is ample evidence of this and even if you’re throwing names at these methods, there are simply too many of them to continue to rationally be an OACA denier. Minimally, if you were fair game and not trashing 80% of science you would propose controls we should add to measurement protocols. As I mention, at Science-Metrix, when we measure citation of OA and non-OA papers, we control for fields and year of publication. What else should be controlled for, what is the evidence it is important or minimally, what is your hypothesis suggesting a phenomenon needs to be accounted for in the measurement. It’s not enough to propose a long list of unsubstantiated controls just for the sake of stalling the debate. Those who measure instead of just talking are not going to measure the effect of astrological signs on citedness so we need a rigorous debate here based on solid ideas, not stalling tactics. The second aspect is what is the explanation for the greater citation observed (provided you are not a OACA denier). In the OA camp, they argue it is due to openness – more people see the papers, hence more people cite them – quite intuitive, simple, and elegant – a truly nice, parsimonious hypothesis. Mostly in the publisher’s camp, the explanatory hypothesis is that of the “selection bias” whereby better articles would be more likely to be self-archived (green) hence increasing the number of citations – plausible also.

On the first point, I’m not an OACA denier and the numbers I’ve seen time and again that tens and tens of measurement nearly always point to a greater level of citation of green+established paywalled journals. With gold it seems there is a slight citation disadvantage, probably due to young age of the journals. More research is needed to establish if this is case (citation disadvantage), and why. With hybrids, we would expect a larger citation count but a German study has failed to show significant differences. OK, I’ll buy we need more data with more carefully controlled measures to cut this once and for all. Yet, I suppose that even when 90% of the scientists will be content with the measurements, you’ll still deny that based on the single experiment by Phil based on Gold OA journals (which is off topic as most of the literature speaks about green and Phil’s experiment is extremely weak on this, or you will deny this as well).

Where we have way less research is on the explanatory factor(s). I think the more people, more citation hypothesis is elegant and makes sense but still I agree with you and we can’t presently say this is the explanatory variable beyond doubt. The alternative better quality of the self-selected articles hypothesis is also likely to play a role, we need to find a robust protocol to examine how much of the advantage it explains. I don’t care which one, or if both wins, the important is to stop throwing names and design robust measurement protocols to explain the observed greater citedness of OA articles.

>Second, you assume that librarians care about citations in making their subscription decisions.

Well I would certainly think so: the Journal Citation Report is the most important work of bibliometrics ever, it has reshaped science, and acquisition patterns in library. The JCR and the Impact Factor are both based on citations. So yes, citations are greatly influential, but they certainly don’t explain everything, and I never argued that.

Anyhow, this wasn’t my point. My point was following the logic of self-selection hypothesis. This hypothesis claims that OA papers are better quality, this is the base of the self-selection argument, are you denying this as well? This argument doesn’t require more citation. If the argument that better articles are self-selected for OA, then conversely, logically, non-selected non-OA that are strictly kept behind paywalls are of lower quality. Again, I’m not certain this unproven hypothesis explains a large part of the citation advantage but it is certainly worth testing.

So David, it would be nice if you contributed to the debate with data. At the moment, you are accusing everyone of not presenting robust data and empirical evidence, where is yours? Apart from Phil’s study, where is your evidence? Your whole attacks on the work of others is based on denying that large parts of science are not valid a priori, and the only valid method has one study to back it up. If that study is shown to be inadequate, you will be left with nothing but flames.

Again, please don’t speak for me. What I say here, and I have repeatedly said, is that under some conditions, one can certainly claim a correlation between OA and increased levels of citation. One cannot claim a direct, causal relationship, that OA results in higher citation levels, without evidence directly showing this. Seems pretty simple to me.

Observational studies are great, and important. But with any study, observational, experimental, whatever, one must take great care not to overstate one’s conclusions. I would love to see more experiments, as you suggest, though I think that if one posits an eventual shift to OA, then the point is moot. If all articles are OA (Green, Gold or whatever), then they’re all on equal footing any potential advantage disappears.

Where we have way less research is on the explanatory factor(s). I think the more people, more citation hypothesis is elegant and makes sense but still I agree with you and we can’t presently say this is the explanatory variable beyond doubt. The alternative better quality of the self-selected articles hypothesis is also likely to play a role, we need to find a robust protocol to examine how much of the advantage it explains. I don’t care which one, or if both wins, the important is to stop throwing names and design robust measurement protocols to explain the observed greater citedness of OA articles.

Here we agree. But I would add that it is irresponsible to make the sorts of statements one regularly sees, that OA confers a citation advantage. State what is known accurately, and I have no argument whatsoever. Correlation is not causation, and this must be made clear.

Anyhow, this wasn’t my point. My point was following the logic of self-selection hypothesis. This hypothesis claims that OA papers are better quality, this is the base of the self-selection argument, are you denying this as well?

I think it argues this, and more — are the articles higher quality or just from better funded labs? Are articles from better funded labs of higher quality? What is the relationship between funding and citation? Again, my point is there are too many confounding factors in an observational study in order to make firm conclusions about causation.

So David, it would be nice if you contributed to the debate with data. At the moment, you are accusing everyone of not presenting robust data and empirical evidence, where is yours? Apart from Phil’s study, where is your evidence? Your whole attacks on the work of others is based on denying that large parts of science are not valid a priori, and the only valid method has one study to back it up. If that study is shown to be inadequate, you will be left with nothing but flames.

It would be nice if I was paid to be a researcher. Sadly, I am not, unless you’re offering me a position (not sure you can afford me). But one need not perform experiments in order to read and understand the experiments of others, nor is it a requirement in order to comment on them. If the Davis study is magically shown to be invalid, then we will simply have a more open question. For now, there is evidence of correlation, and the only experimental evidence points against causation. Eliminate the latter, and the question is not answered, and one still can’t make spurious claims about causation.

You are conflating two things. Citation advantage, and explanation for this.

David will respond to the rest of your comment, I’m sure, but I feel the need to clarify this right away: the situation is not that OA definitely confers a documented citation advantage, and now we need to figure out exactly why it does so. The issue here is whether the citation advantage demonstrated by these studies actually arises from the articles being OA, or from some other variable (such as selection bias). The failure to control for other variables is exactly what limits the validity of observational studies.

There aren’t any because, as noted, there hasn’t been a proper experiment yet. Have no doubt about it, though: the theory itself is rock solid; it’s just that the studies undertaken so far have largely been looking into the wrong data.

Randomized, blinded, and controlled ultimately means nothing if you don’t apply it to proper data, though it may appear methodologically flawless on the outside.

Still waiting to hear a coherent explanation of the fatal flaws in the Davis study. Even if that were true though, the best one can claim is a correlation, which does not prove causation. If the theory was indeed “rock solid”, then why is it so hard to do an experiment to prove it?

Think of it as a Higgs bOAson for finding which a suitable LHCA has yet to be built. It’s not that hard in itself, just time consuming and likely expensive.

A more coherent explanation is on its way but no ETA yet. No rush though; the OA c.a. sure won’t disappear.

Face validity, emotional gratification, … yet another way to think of this tendency is in terms of the stories we’re telling ourselves. Stories are very powerful, and nearly everyone thinks of themselves as participating in a larger historical narrative. There’s a powerful tendency to accept the ideas that fit into our story, amplify those that push it along, ignore those that don’t fit into it, and suppress those that contradict it. But is history a story?

Great post! I have a question concerning what you write about the impact of green OA on journal subscriptions. I realize that by asking such a question, I am to an extent confirming your main point, but it is an honest question. I do not know that answer.

I have seen the claim before, that Green OA has not led to a reduction in journal subscription. However, what I wonder is how this data is normalized. It is also being said that the number of article submissions world wide has skyrocketed. If there is not a commensurate increase in journal subscriptions, that could indeed be interpreted as a negative effect, regardless of what the causes might be.

Those who argue that Green OA does not affect journal subscriptions typically point not towards data in support of that position, but rather towards a lack of data against it — in other words, the typical formulation is “there is no evidence that policies promoting OA to articles will negatively affect subscriptions to journals”. Since this isn’t a positive hypothesis, there’s no data to normalize.

Beautiful idea beautifully crafted. I concur. However, I doubt whether it would matter to me so much if Green OA reduces library subscriptions. What would really matter is that more people are having access and reading the content. Library subscriptions may not necessarily be due to demand by readers but a retention of old practices which will definitely take a long time to be influenced by Green OA. Furthermore, how does the face validity in closed access publishing compare or cancel face validity in OA? The focus of the interesting piece on the incapacities of the face validity to OA only appears to be an unjustifiable bias. Face validity is a problem whether in closed or OA publishing. The classing of journals as high quality and low quality, IF, etc are in a sense, face validity judgements.

Furthermore, how does the face validity in closed access publishing compare or cancel face validity in OA? The focus of the interesting piece on the incapacities of the face validity to OA only appears to be an unjustifiable bias. Face validity is a problem whether in closed or OA publishing.

Face validity is a concept that applies to propositions and hypotheses, not to systems. Both closed and OA publishing pose problems and offer benefits, obviously, but the concept of face validity doesn’t really apply to either type of publishing.