In a few weeks, Clarivate Analytics will release its 2016 Journal Citation Report (JCR), which will disclose the Impact Factors of more than 10,000 academic journals.

With each release, the JCR also suspends titles for citation practices that distort their Impact Factor score and rank. Last year, 18 titles were suspended from the JCR, 16 for high levels of self-citation, the other two for “citation stacking,” a behavior that is more informally referred to as a citation cartel. In prior years, the JCR suspended many more titles. In 2012, a total of 65 titles were suspended. In 2011, it was 50 titles.

The Impact Factor is a lagging performance indicator — a measure of last year’s citation count to papers published in the preceding two years. If you’ve identified that citation distortion has already taken place in your journals, as a publisher, there is little you can do but wait for your day of reckoning and hope that your journal escapes suspension.
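For readers who want the arithmetic spelled out, here is a minimal sketch of the standard two-year Impact Factor calculation. The function name and the example counts are hypothetical and chosen only for illustration; they do not describe any real journal.

```python
def impact_factor(citations_to_prior_two_years: int,
                  citable_items_prior_two_years: int) -> float:
    """Two-year Impact Factor for a given JCR year.

    Numerator: citations made in the JCR year to items the journal
    published in the two preceding years.
    Denominator: citable items the journal published in those two years.
    """
    if citable_items_prior_two_years <= 0:
        raise ValueError("citable item count must be positive")
    return citations_to_prior_two_years / citable_items_prior_two_years


# Hypothetical journal: 600 citations in the JCR year to 200 citable items
# published in the two preceding years.
print(f"{impact_factor(600, 200):.3f}")  # 3.000
```

Because both inputs are fixed once the citing year has closed, nothing a publisher does afterward changes the result, which is what makes the indicator lagging.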

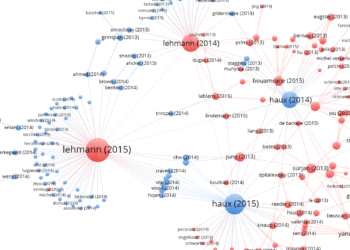

Last year, Retraction Watch covered the investigation of a soil scientist, Artemi Cerdà, who allegedly coerced authors to cite his own papers and journal, Land Degradation & Development (LDD), for which he served as Editor-in-Chief before being forced to resign.

The collective effect of his coercion resulted in a massive increase of LDD’s Impact Factor, from 3.089 in 2014 to 8.145 in 2015, according to the JCR. Some of this rise can be attributed to self-citation, which accounted for one-third (33%) of its score. (Before Cerdà assumed the role of Editor-in-Chief in 2013, self-citation accounted for just 1% of LDD’s Impact Factor.) The other contributing cause of LDD’s score was citation stacking.

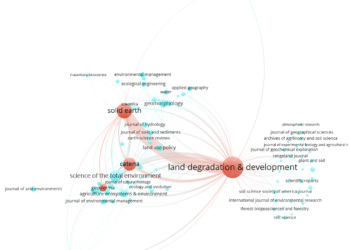

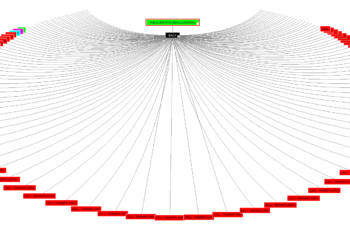

Using JCR’s cited-by tables, I was able to plot the flow of citations among four journals with which Cerdà was involved. This type of graph is called — somewhat befittingly — an alluvial plot, named after the sediment patterns made by moving water.

Among these four journals, we find a high flow of citations made in 2015 to other papers published within the same journal (top panel). This pattern of self-citation is not uncommon among specialist journals, as related papers are often published within the same journal. What IS surprising, however, is when this flow of citations is focused on papers published in the prior two years (bottom panel), the window from which the Impact Factor is calculated.

Self-citation and stacking were responsible for doubling Land Degradation & Development’s 2015 Impact Factor

In 2015, nearly half (46%) of self-citations in LDD were focused on the prior two years of publication, compared to just 4% of LDD citations made to other journals. You can see this flow of green citations in the bottom panel of the above figure.

Even more extreme was the flow of Impact Factor-directed citations from Solid Earth to LDD. In 2015, the journal sent 350 citations to LDD, 68% of which were focused on the prior two years. In sharp contrast, Solid Earth only sent a total of 58 citations to itself.

So, why didn’t the Journal Citation Report suppress LDD and Solid Earth in 2015? To consider a journal for citation stacking, the JCR measures:

- Donor journal citations as a percent of recipient’s total journal citations.

- Donor journal citations as a percent of citations counted toward recipient’s Impact Factor.

- The number of donor journal citations counted toward recipient’s Impact Factor.

- Proportion of citation exchange between the donor and recipient journal.

According to an email response from Stephen Hubbard, Content Team Lead for the JCR at Clarivate Analytics, Solid Earth and LDD met the first three criteria but failed the fourth.
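The four measures listed above can be sketched roughly as follows. This is an illustration only: the field names, the example counts, and in particular the definition of "proportion of citation exchange" (taken here as the donor-to-recipient share of all citations passing between the two journals) are my assumptions. The JCR does not publish exact formulas or thresholds, so none are encoded here.

```python
from dataclasses import dataclass


@dataclass
class CitationFlow:
    """Citation counts between a donor and a recipient journal for one JCR year.

    All field names are hypothetical, chosen for this sketch.
    """
    donor_to_recipient_total: int      # all citations from donor to recipient
    donor_to_recipient_if_window: int  # those aimed at the prior two years
    recipient_total_citations: int     # all citations the recipient received
    recipient_if_numerator: int        # citations counted toward recipient's IF
    recipient_to_donor_total: int      # citations flowing back to the donor


def stacking_measures(flow: CitationFlow) -> dict:
    """Compute the four measures; no pass/fail thresholds are applied."""
    exchange_total = flow.donor_to_recipient_total + flow.recipient_to_donor_total
    return {
        "donor_share_of_total": flow.donor_to_recipient_total / flow.recipient_total_citations,
        "donor_share_of_if_numerator": flow.donor_to_recipient_if_window / flow.recipient_if_numerator,
        "donor_if_window_citations": flow.donor_to_recipient_if_window,
        # Assumed definition: how one-sided the exchange between the two titles is.
        "exchange_proportion": flow.donor_to_recipient_total / exchange_total,
    }


# Made-up counts for two hypothetical journals, not LDD or Solid Earth.
example = CitationFlow(
    donor_to_recipient_total=400,
    donor_to_recipient_if_window=300,
    recipient_total_citations=4000,
    recipient_if_numerator=1000,
    recipient_to_donor_total=100,
)
for name, value in stacking_measures(example).items():
    print(name, value)
```

Keeping the four numbers separate, rather than folding them into one score, mirrors the way the criteria are described: a pair of journals can clear three of them and still fall short on the fourth.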

While I appreciate that suppression decisions are based on multiple criteria, the combined effect of self-citation and stacking was significant. Without them, LDD’s 2015 Impact Factor would have been just half (3.982) of the score it received (8.145).
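The size of that effect follows directly from the two scores quoted above. A small sketch, using only those two figures (the function and key names are hypothetical):

```python
def manipulation_effect(reported_if: float, adjusted_if: float) -> dict:
    """Compare a reported Impact Factor with the score that remains once
    self-citations and stacked citations are removed."""
    retained = adjusted_if / reported_if
    return {
        "retained_share": retained,          # part of the score that survives
        "attributable_share": 1 - retained,  # part owed to the removed citations
        "inflation_factor": reported_if / adjusted_if,
    }


# LDD's 2015 figures as quoted in the text.
effect = manipulation_effect(reported_if=8.145, adjusted_if=3.982)
print(f"retained: {effect['retained_share']:.1%}")          # about 49%
print(f"attributable: {effect['attributable_share']:.1%}")  # about 51%
print(f"inflation: {effect['inflation_factor']:.2f}x")      # about 2.05x
```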

Based on raw calculations, if LDD is included in the 2016 JCR, it will receive another Impact Factor score above 8, and self-citation will account for almost half (49%) of its score. I also count a total of 207 Impact Factor-directed citations from Solid Earth to LDD, which will account for 19% of its score. We should also remember that the investigation by the European Geosciences Union reported citation coercion in other journals as well.


I work with editorial and publication boards who routinely ask me about strategies to increase their Impact Factor scores. While questions of self-citation and stacking often come out obliquely, some editors get incensed when they believe that their competitors are getting away with it, often flagrantly. Citation manipulation is therefore justified as a way of leveling the playing field. Personally, I find this line of thinking pernicious as it normalizes unethical behavior. Even coercion can be acceptable just as long as you don’t commit too much of it.

To me, the inclusion of LDD and Solid Earth in the 2015 JCR is troubling for two reasons. First, it sends a strong message that citation manipulation is acceptable behavior just as long as it doesn’t reach a critical level, a level that is set absurdly high. Second, in the case of LDD and Solid Earth, there should be no question of the cause and purpose of the manipulation: We have a smoking gun. We have resignations. It never gets clearer than this.

This leaves us in a quandary over what should happen next. Should LDD and Solid Earth be suppressed in the forthcoming 2016 JCR? If so, is it fair that the journals continue to bear responsibility for the actions of a past editor? If not, has the damage to the reputation of the editor and the offending journals been sufficient punishment? And, most importantly, have Clarivate’s criteria for suppression changed the way we think about citation manipulation?

Discussion

15 Thoughts on "How Much Citation Manipulation Is Acceptable?"

If self-citation accounts for 49% of LDD’s 2016 Impact Factor score, that actually might not be such a remarkable proportion.

In 2010, for example, 4.2% of all science journals in the JCR had greater than 50% self-cites in their Impact Factor numerator. The self-citation rate is higher in the social sciences. (These numbers were supplied to me for a Nature story that ran here: https://www.nature.com/news/researchers-feel-pressure-to-cite-superfluous-papers-1.9968 ).

So – and please correct me if I’ve misunderstood this – that would suggest that LDD is not even in the top 4% of journals by self-citation proportion.

Of course the additional effect of the citation stacking is a critical detail too.

Thanks for the comment and the data, Richard. The problem is that the JCR measures two citation manipulation behaviors (self-citation and stacking) separately, and neither one reaches its arbitrary cut-off for suppression. Put them together, however, and LDD’s Impact Factor more than doubles.

More importantly, in most cases of suppression, JCR can only work with the numbers, percents and proportions. In the case of LDD, there should be no question of the cause and purpose of the manipulation. We have a smoking gun. We have resignations. It never gets clearer than this.

By ignoring the smoking gun and relying entirely on their separate-bin accounting process, JCR sends a strong message that citation manipulation is acceptable just as long as you don’t do too much of it and don’t do too much of one kind of it. I think this is the wrong message for science and the wrong kind of message coming from a metrics company.

Beyond the rights and wrongs of suppression in this case, I can understand why Clarivate avoids making ‘editorial’ judgments with self-citations and stacking. Trying to make a distinction between ethical and non-ethical self-citations or between victims and perpetrators of citation stacking is very problematic when it moves beyond the raw data. Even in very clear-cut cases, any move away from that position means that all future decisions would carry an assumption of editorial judgement on their part.

I see your point; however, the JCR makes editorial judgements all the time. The 4 criteria for suppression listed above are purposefully called “guidelines” to give the JCR some flexibility in decision-making. The JCR can adjust the citable item count in the denominator of a journal’s Impact Factor if they disagree with Web of Science classification. And the Web of Science makes editorial decisions on document classification, occasionally even refusing to index some papers because of their effect on citation manipulation. If there were no room for editorial judgement, we wouldn’t have to wait six months for relatively simple metrics.

Phil, I vaguely recall that in the past JCR had a self-citation percentage “threshold” that would trigger their taking a closer look at the journal. Did I imagine this? If not, do we know if/when this practice was eliminated or changed?

Hi Adam,

You are correct, and the criteria for suppressing for self-citation are listed on page 3 of this document http://wokinfo.com/media/pdf/jcr-suppression.pdf :

Data considered:

• Total citations (TC)

• Journal Impact Factor (JIF)

• Rank in category

• % of journal self-citations in Journal Impact Factor numerator

• Proportional increase in Journal Impact Factor with/without journal self-citations

• Effect of journal self-citations on rank in category by Journal Impact Factor

Unless I’m missing it, these guidelines (from 2014) don’t offer much in the way of specifics. I wish someone from Clarivate would chime in.

FWIW, my response to the question posed is that ANY manipulation is too much. If an editor values IF they should publish good stuff and help promote it to get “eyeballs” on it.

I seem to recall that the lack of specifics, at least publicly, was deliberate: once you set a citation limit (even if that is possible across multiple disciplines), you risk it being treated as a target.

Firm evidence of editorial coercion/conspiracy should be the *only* criterion for title suppression decisions. The numbers/percentages/ratios method of identifying offenders is bound to end up with false positives sooner or later.

Measurements that have become a goal are no longer measurements! Perhaps with the passing of Eugene Garfield (February 2017), the time is right to start a process to stop using (rankings of) Journal Impact Factors and other citation parameters. Some journals have even voluntarily stopped using the JIF: see E. Callaway, Nature, 535 (2016), pages 210-211, for details.

Jan, I keep wondering why no one deals with the issue you and others have raised. Until this is effectively addressed, the concern about the equivalence of artificial padding and the numbers to several decimal places seems like avoidance because of inherent momentum and faint hope, a case of “willful blindness”.

Most journals with high impact factors follow the same practice. Nature, for example, is the most abusive journal in terms of self-citation: take any issue of Nature and try to count the number of papers or internal citations; you will be surprised how many there are. In each issue, Nature staff tend to cite or comment on previous papers through short sections such as “Comment,” “News & Views,” and “Letters,” which results in a high number of citations. This is why the impact factor of Nature is high.

This is a nice piece of work (especially the design of the figures). The topic is hot and many of us are waiting to see how Clarivate will react.

We also mentioned the LDD case in a just released paper on the coercive citations:

http://www.mdpi.com/2304-6775/5/2/15

This reminded me of a situation several years ago with ‘Gems & Gemology’, which is the primary journal in the field and would obviously have a high ‘self-citation’ rate. It was suspended until its uniqueness was pointed out by one of our researchers, who is a frequent contributor. It does seem odd that the limited number of ‘suspensions’ don’t seem to be more carefully reviewed.