By their very nature, citation cartels are difficult to detect. Unlike self-citation, which can be spotted when there are high levels of references to other papers published in the same journal, cartels work by influencing incoming citations from other journals.

In 2012, I reported on the first case of a citation cartel involving four biomedical journals. Later that year, Thomson Reuters suspended three of the four titles from receiving an Impact Factor. In 2014, it suspended six business journals for similar behavior.

This year, Thomson Reuters suspended Applied Clinical Informatics (ACI) for its role in distorting the citation performance of Methods of Information in Medicine (MIM). Both journals are published by Schattauer Publishers in Germany. According to the notice, 39% of 2015 citations to MIM came from ACI. More importantly, 86% of these citations were directed to papers published in the previous two years (2013 and 2014), the years that count toward MIM's 2015 Impact Factor.
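To make the two percentages in the notice concrete, here is a minimal Python sketch of how they could be computed from a list of citation records, together with a simplified two-year Impact Factor. The `Citation` record, the function names, and all counts are hypothetical, and the sketch assumes every citation in the list was made during the Impact Factor year. It is not Thomson Reuters' actual procedure, which also involves deciding which items count as citable.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Citation:
    """One citation received by the target journal during the JIF year (hypothetical record)."""
    citing_journal: str  # journal that published the citing paper
    cited_year: int      # publication year of the cited paper in the target journal


def stacking_shares(citations, donor, jif_year):
    """Return (share of all citations that come from `donor`,
    share of the donor's citations that point into the two-year JIF window)."""
    if not citations:
        return 0.0, 0.0
    from_donor = [c for c in citations if c.citing_journal == donor]
    donor_share = len(from_donor) / len(citations)
    if not from_donor:
        return donor_share, 0.0
    window = {jif_year - 1, jif_year - 2}
    in_window = sum(1 for c in from_donor if c.cited_year in window)
    return donor_share, in_window / len(from_donor)


def two_year_jif(jif_year, citations, citable_items_by_year):
    """Simplified two-year JIF: citations to items from the two prior years,
    divided by the citable items published in those two years."""
    window = (jif_year - 1, jif_year - 2)
    numerator = sum(1 for c in citations if c.cited_year in window)
    denominator = sum(citable_items_by_year[y] for y in window)
    return numerator / denominator


# Hypothetical toy data: ten citations the target journal received in 2015.
toy = [Citation("ACI", y) for y in (2014, 2014, 2013, 2010)] + [
    Citation("Other", y) for y in (2014, 2013, 2012, 2011, 2009, 2008)
]
items = {2013: 30, 2014: 20}  # hypothetical citable-item counts

print(stacking_shares(toy, "ACI", 2015))  # (0.4, 0.75)
print(two_year_jif(2015, toy, items))     # 0.1
without_donor = [c for c in toy if c.citing_journal != "ACI"]
print(two_year_jif(2015, without_donor, items))  # 0.04
```

In this made-up example, removing the donor journal's citations drops the target's Impact Factor from 0.1 to 0.04, which is the kind of distortion a citation-stacking screen is meant to flag.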

Thomson Reuters purposefully avoids the term “citation cartel,” which implies a willful attempt to game the system, and instead uses the more ambiguous term “citation stacking” to describe the pattern itself. Ultimately, we can never know the intent of the authors who created the citation pattern, only that it can distort the ranking of a journal within its field. It is this distortion that Thomson Reuters wants to prevent.

Schattauer Publishers appealed the suspension, offering to exclude the offending papers from their Impact Factor calculation as a concession. Their appeal was denied. Offering some consolation to its readers, the publisher made all 2015 ACI papers freely available. It has also offered all ACI authors one free open access publication in 2016.

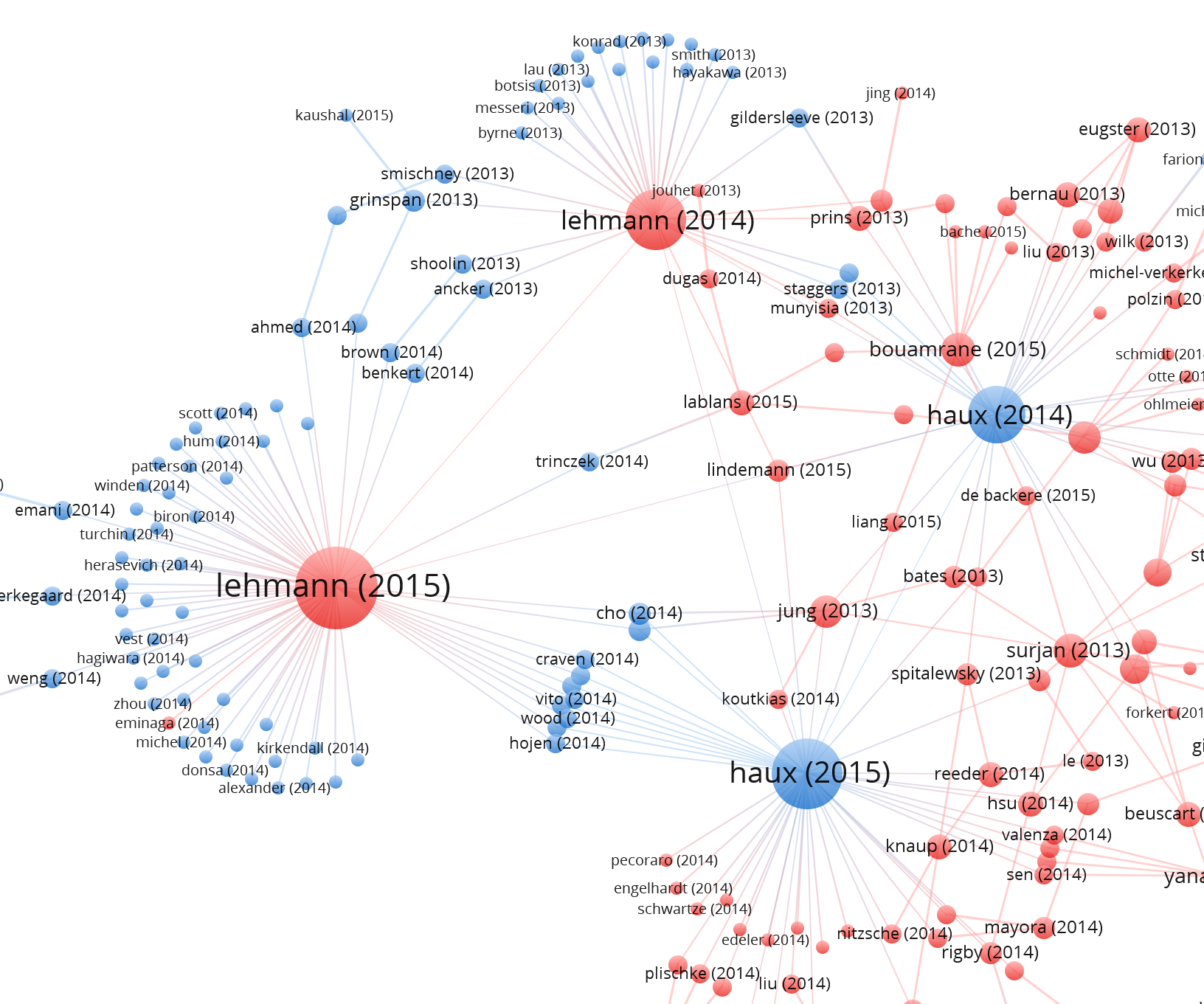

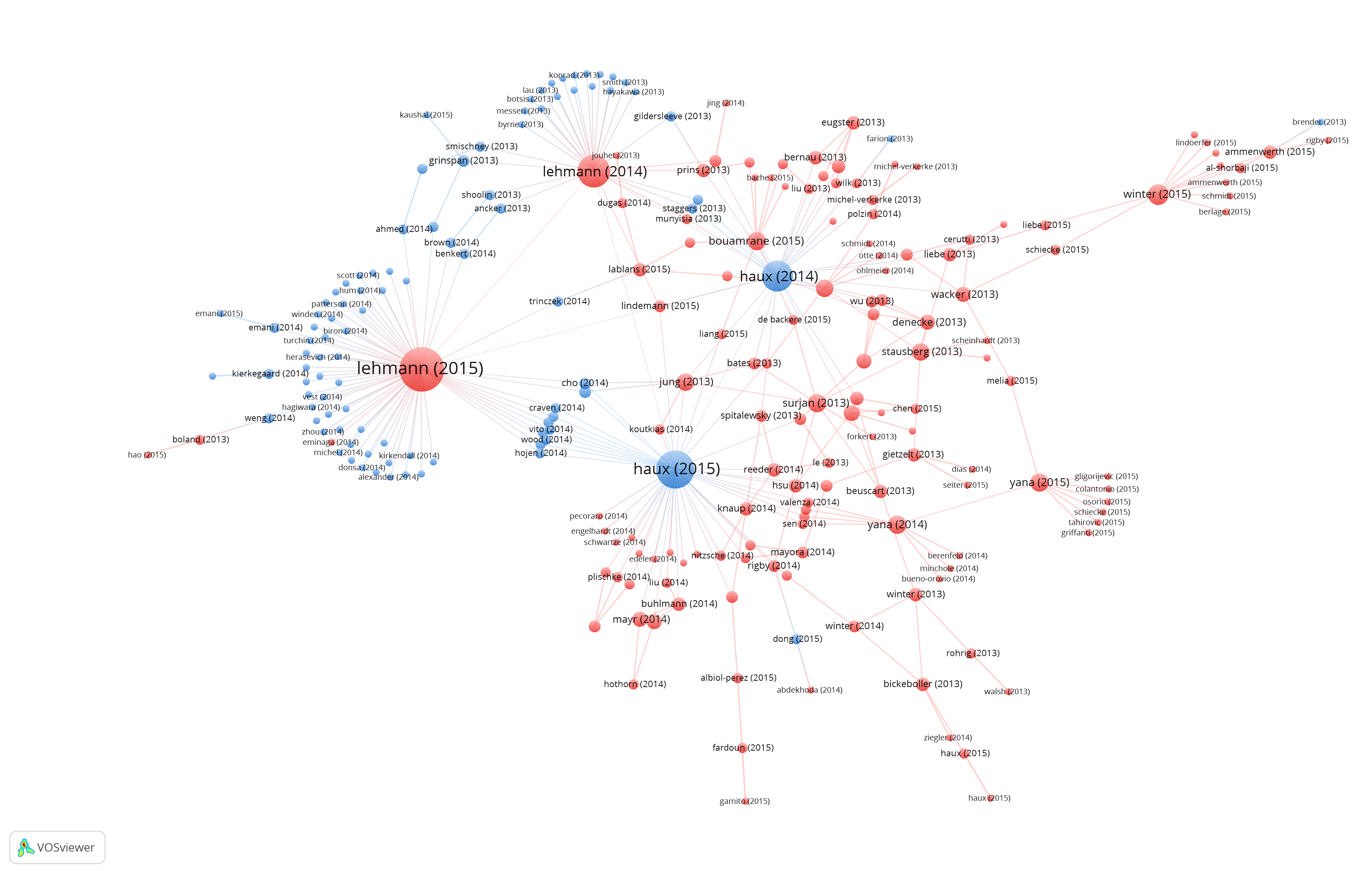

To better understand the citation pattern that led to ACI's suspension, I used VOS Viewer to create a visualization of the citation network of papers published in ACI (blue) and MIM (red) from 2013 through 2015. Each paper is labeled with its first author and year of publication and is linked to the papers it cites.

From the graph, four papers appear to strongly influence the flow of citations in this network: two MIM papers by Lehmann (red) and two ACI papers by Haux (blue). Each of these papers cites a large number of papers published in the other journal within the previous two years. Does this alone imply an intent to inflate the journals' Impact Factors? We need more information.

Both Lehmann and Haux sit on the editorial boards of both journals. Lehmann is the Editor-in-Chief of ACI and also sits on the editorial board of MIM. Haux is the Senior Consulting Editor of MIM and also sits on the ACI editorial board. This shows a close relationship between the two editors, but it is still not enough to establish intent. For that, we need to look at the four offending papers:

- The 2014 Lehmann paper (coauthored by Haux) includes the following methods statement in its abstract: “Retrospective, prolective observational study on recent publications of ACI and MIM. All publications of the years 2012 and 2013 from these journals were indexed and analysed.”

- Similarly, the 2014 Haux paper (coauthored by Lehmann) includes this methods statement: “Retrospective, prolective observational study on recent publications of ACI and MIM. All publications of the years 2012 and 2013 were indexed and analyzed.”

- The 2015 Lehmann paper states: “We conducted a retrospective observational study and reviewed all articles published in ACI during the calendar year 2014 (Volume 5)…”, and lastly,

- The 2015 Haux paper states: “We conducted a retrospective, observational study reviewing MIM articles published during 2014 (N=61) and analyzing reference lists of ACI articles from 2014 (N=70).”

What these four papers by ACI and MIM editors have in common is that they analyze papers published in their own journals within the time frame that affects the calculation of their Impact Factors. Again, this alone does not imply an intent to game the Impact Factor. Indeed, the publisher explained that citation stacking was “an unintentional consequence of efforts to analyze the effects of bridging between theory and practice.”

I can’t dispute what the editors and publisher say their intent was. However, what is uniformly odd about these papers is that they cite their dataset as if each data point (paper) required its own reference.

Why is this odd? If I conducted a brief analysis and summary of all papers published in a journal, would I need to cite each paper individually, or merely state in the methods section that my dataset consists of all 70 research papers published in Journal A in years X and Y? ACI and MIM are relatively small journals, but if this approach were used to analyze papers published in, say, PNAS, the reference section would exceed 8,000 citations. Similarly, a meta-analysis of publications in PLOS ONE would require citing nearly 60,000 papers. Clearly, there is something about the context of paper-as-datapoint that distinguishes it from paper-as-reference.

One could play devil’s advocate and assume that citing one’s data points, even when they are papers, is normal referencing behavior in the field of medical informatics. Unfortunately, we’ve seen this pattern before. In 2012, I took the editor of another medical informatics journal to task for a similar self-referencing study. The editor conceded by removing all data points from his reference list, acknowledging in a correction statement that this was a “minor error.” Citing papers as data points, as Lehmann and Haux did, is not standard citation practice. The editors should have known this.

If the editors did not intend to influence their journals’ citation performance, other options were open to them at the time of writing:

- They could have simply described their dataset without citing each paper.

- If citing each paper was important to the context of their paper, they could have worked from a group of papers published outside the Impact Factor window. Or,

- They could have listed the papers in a footnote or appendix, or provided simple online links instead of formal references.

Suspension from receiving a Journal Impact Factor can be a serious blow to a journal’s ability to attract future manuscripts. The editors apologized in an editorial published soon after ACI’s suspension, stating that in the future they will either refrain from publishing these kinds of papers or put the references in an appendix.

Thanks to Ludo Waltman for his assistance with VOS Viewer.

Discussion

15 Thoughts on "Visualizing Citation Cartels"

Self-citation is a curious notion too. The same notice that listed this citation-stacking pair caught my attention because it delisted a journal in my field for excessive self-citation. This made me wonder: where is the line between excessive self-citation that suggests mischief in the editorial office and authors’ normal affinity for citing other work in their niche journal?

The delisting of Springer’s Environmental Earth Sciences (formerly Environmental Geology) caught my eye because close colleagues of mine have published in it, and it has long been one of the many mid-tier niche journals that publish respectable but not highly selective work. Its last JIF was 1.7, according to the journal.

I looked at its record in Scopus, and there are indeed anomalies. In the early 2000s, as Environmental Geology, it published about 300 articles a year, but output took off from 297 articles in 2010 (its first year as Environ Earth Sci) to 1137 in 2015. Total citations rose by a factor of 10 over that period. Self-citation rates were indeed high: from 2011 through 2015, they were 11, 29, 29, 40, and 35% per year, respectively. For comparison, the rates at two other journals in the field (JAWRA and Hydrology and Earth System Sciences) appeared to be under 10%. I glanced through the most highly cited article published in Environ Earth Sci in 2013, on landslide susceptibility in Korea, which has 53 citations in Scopus. Many of the citing articles were indeed self-citations within the same journal, but they all seemed relevant to the topic. Anomalies alone may not indicate editorial misbehavior.

Because TR calculates the two-year JIF as an arithmetic mean rather than a median, a single highly cited article can move a low JIF value quite a bit. Maybe this is a gotcha, and there is indeed a heavy-handed editor behind the scenes twisting authors’ arms to boost citations, but maybe the journal is simply influential within its niche, and authors read and cite others in that niche. Of the 25 most recently published articles, only two were from authors in Western Europe or North America; Chinese authors were strongly represented. Certainly editors can misbehave, but if TR is delisting solely on the basis of statistical anomalies without digging deeper, there is a risk of penalizing a community of authors who selectively read and publish in a niche journal.
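The point about means versus medians can be illustrated with a short Python sketch. It treats the two-year JIF as a plain per-article mean, which is a simplification (the actual figure divides all citations to the journal's two prior years by the number of citable items), and the citation counts are invented for illustration.

```python
from statistics import mean, median

# Hypothetical per-article citation counts for ten articles in a small
# journal's two-year window (illustrative numbers, not real data).
typical = [0, 0, 1, 1, 1, 2, 2, 3, 3, 4]

# Same journal, but one article becomes a heavily cited outlier.
with_outlier = typical[:-1] + [43]

for label, counts in (("typical", typical), ("with outlier", with_outlier)):
    print(f"{label}: mean={mean(counts):.1f}, median={median(counts):.1f}")
# typical: mean=1.7, median=1.5
# with outlier: mean=5.6, median=1.5
```

A single outlier more than triples the mean while leaving the median unchanged, which is why a mean-based metric for a small journal can jump on the strength of one paper.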

Phil, this is quite interesting (and a very nice use of VOS Viewer). Am I reading the network diagrams correctly in thinking that, with the partial exception of the Haux 2015 paper, these four review articles cite only work published in the other journal?

Good point about citations versus data points. If we did this in some of our papers that analyze more than 30 million articles, a hard copy would be a 500-foot-tall stack of paper.

Hi Carl. The 2014 papers cover both ACI and MIM, while the 2015 papers focus on the reciprocal journal’s papers.

I’ve noticed editors publishing more of these summary-of-what-we-recently-published papers. In some cases, they are no more than laundry lists by topic, or top-cited, or highly-downloaded. While the editors will claim that they are providing a reader service, the Web of Science has ceased to index these kinds of papers in a number of journals that are repeat offenders. Coincidentally, the editor stops publishing them too!

There is an important distinction to make among some of the uses of scholarly articles.

1. Scholarly citation: an in-line reference to a specific work (or small set of related works) used as intellectual support for, a contrast to, or context for the current work. These should be, and are, counted in metrics.

2. Bibliography: a deliberately broad collection of works meant to show the full range of literature on the subject. These works do not provide specific context and are not contextualized. They are not generally counted in citation metrics.

3. Articles used as the object of research, such as in bibliometric or bibliographic studies. These generally shouldn’t be included in citation metrics, as they are research materials.

4. Awareness: “related materials,” “other articles of interest,” “Top Cited,” “New topics.” These are, in some sense, advertisements, and are also not generally counted in citation metrics.

The problem with articles that “study” the content of a single journal is that they combine type 1 and type 3 and end up being type 4. While the arithmetic is correct, it results in dubious publishing and problematic metrics.

When I wrote an article for Nature about a Brazilian citation cartel, which was spotted by Thomson Reuters in 2013, I tried to visualize the cartel in a different way.

The chart (at http://www.nature.com/news/brazilian-citation-scheme-outed-1.13604#/citations) shows how in 2011, four Brazilian journals published seven review papers with copious references to research published in each other’s journals in 2009-10, thus raising each journal’s 2011 impact factor.

“In 2012, I took the editor of another medical informatics journal to task for a similar self-referencing study. The editor conceded by removing all data points from his reference list, …”

Phil, back then we were working already on a correction statement before you published your blog post, and you know it. There may also be a difference between publishing a (what turned out to be) seminal paper about altmetrics (cited >400 times on Google Scholar) that self-cites 67 papers from a top journal in the field (impact factor 4.5) attracting over 7000 citations a year in total (thus a negligible effect on the impact factor), and covertly (that is, through a cartel) self-citing dozens of articles from a journal (ACI) that in 2013 attracted a mere 32 citations from all sources (“impact” factor of 0.386), thus almost doubling the impact factor through a single self-citing article, which also seems to have a contrived objective. As an aside, MIM and ACI engaged in this practice over a period of at least two years and still don’t see any wrongdoing, while we published a correction statement within days of publication, removing all self-references.

“Phil, back then we were working already on a correction statement before you published your blog post, and you know it.”

Gunther, I’m sorry that it troubles you so much that I keep referring to your Tweets Predicts Citations paper, but your Trumpian assertion is not backed up by the facts. You can refer back to our email conversation of January 4th, 2012 to get your details correct.

Phil: In fact (and perhaps unbeknownst to you), we received a phone call from a reviewer a couple of days before your blog post on the issue of not citing any manuscripts as data points and an erratum was in the works. All this was during the holiday season – the editorial was published on Dec 16, 2011 and the corrigendum moving the references to an appendix was published Jan 4th, 2012. I advised you on Jan 4th by email that we will be publishing an erratum, yet you forged ahead with publishing your blog post on the same day.

I am indeed bothered by your repeating your swipe at us. You should at least acknowledge that the citation cartel case above is on a completely different level and scale, not least because we reacted immediately and proactively, and also because even leaving the references in the paper would have had no effect on our impact factor ranking, which is not the case for the two sparsely cited journals mentioned above. Perhaps, instead of taking a swipe at an independent open access journal whenever you can, you should take a closer look at the world of citation cartels in professional societies and subscription-based journals. Your analysis above is just the tip of the iceberg. Three toll-access journals in the medical informatics discipline were delisted by Thomson Reuters from the impact factor rankings this year. I can give you other examples of editorials in other medical informatics journals along the lines of “here is what we published in the last 2 years [1-127]”. Our tweetations paper (https://www.jmir.org/2011/4/e123/) is not in that category at all, in either form or substance. Instead, it made an early and significant contribution to the field of altmetrics, as evidenced by over 400 Google Scholar citations to that editorial to date.

Gunther, please find a screenshot of the email you sent me on January 4th at 1:57 AM, in which you thank me for pointing out the additional references in your paper and state “you are the first to note this.” I’m not sure why this detail is such a sticking point for you. You made an error and corrected your error. If giving me credit for detecting that error is so difficult for you, then so be it.

Whether or not your addition of extraneous references to your paper was intentional, the success of your journal and the small effect the references would have had are not relevant. Putting your thumb on a scale to add 2 grams is the same as putting your thumb on the scale to add 200 grams. Being a small Open Access publisher is also not an excuse for poor editorial behavior.

I am not making any “excuses for poor editorial behavior” because no such poor behavior existed. We published a correction days after the original paper was published. When I said you were the “first to note this,” I was referring to the extra references, but an erratum was already in the works to move the “data points” to an appendix rather than citing them in the paper, which was your point above. We published the correction on the same day you published your blog post (which you published even though you knew an erratum was going to be published). Your 2g vs 200g analogy doesn’t make sense, as every journal has journal self-citations. Our journal self-citation rate is comparable to or lower than that of other journals. Thomson Reuters knows that and only takes action if the scale is tipped and unfairly favors a journal with excessive self-citations, which is what happened in the above “citation cartel” case. It is simply incorrect to cite our correction as “similar” for the reasons I list above.

Here is another paper (an editorial, in fact) published in Cortex (2012) analysing the Cortex papers published in 2010 and 2011:

Foley, J. A., & Valkonen, L. (2012). Are higher cited papers accepted faster for publication? Cortex, 48(6), 647–653. http://doi.org/10.1016/j.cortex.2012.03.018

The 117 papers under study are systematically cited in the “References” section.

If this paper was included in the JIF computation (as citable material), then it contributed +1 to the JIF of Cortex in 2012, right?

PS: I guess the authors could have given the complete reference for each paper in a table in an appendix, so that the Cortex references wouldn’t show up in the “References” section.
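The “+1” arithmetic can be checked with a small Python sketch. It assumes that all 117 references in the 2012 editorial count toward the numerator of Cortex's 2012 JIF and that the denominator (citable items from 2010 and 2011) stays fixed. Under that assumption, each added citation raises the JIF by 1 divided by the number of citable items, so the increase is exactly 1 only if that number is also 117. The function name and the alternative count of 150 are hypothetical.

```python
from fractions import Fraction


def jif_increase(added_citations: int, citable_items: int) -> Fraction:
    """Increase in a two-year JIF from citations added to its numerator.

    The denominator (citable items from the two prior years) is unchanged,
    so each added citation raises the JIF by 1 / citable_items.
    """
    return Fraction(added_citations, citable_items)


CITED_IN_EDITORIAL = 117  # papers cited in the Cortex editorial, per the comment

# Assumption: the 117 cited papers are exactly the citable items for 2010-2011.
print(jif_increase(CITED_IN_EDITORIAL, 117))         # 1
# Hypothetical alternative: 150 citable items in the window.
print(float(jif_increase(CITED_IN_EDITORIAL, 150)))  # 0.78
```

Using `Fraction` keeps the ratio exact, so the first case prints a clean 1 rather than a floating-point approximation.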