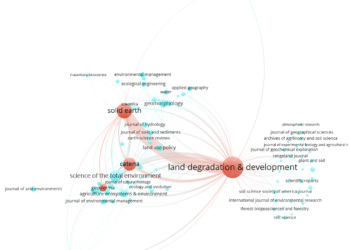

The case of Land Degradation & Development and Solid Earth, two journals recently involved in self-citation and citation cartel practices, underscored a fundamental principle of the Journal Citation Reports (JCR): citation manipulation is acceptable practice as long as it doesn’t reach a critical threshold. So, what is that threshold?

The JCR uses several guidelines to make suppression decisions but is not explicit about thresholds that would automatically suppress a journal.

Using public suppression information provided by the JCR, along with prior-year data captured by the Internet Archive, I was able to reverse-engineer the JCR’s thresholds for suppression. I should note that the JCR began publishing these critical data points in 2013.

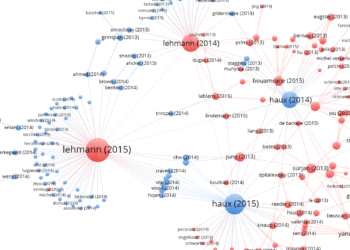

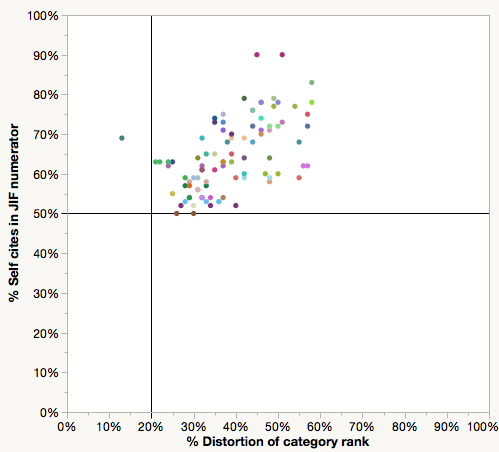

In the last three JCR editions, journals suppressed for self-citation had at least 50% of their Impact Factor-directed citations coming from the journal itself (horizontal line, Figure 1). The percent distortion of category rank (that is, how much self-citation changed the journal’s ranking within its subject category) starts at 20% (vertical line), with one exception: Foundations of Science (Springer) had a self-citation rate of 69% in 2013, which distorted its rank by only 13%.

In 2015, Land Degradation & Development’s self-citation rate was 33%, well below the 50% threshold. Result: no suppression.
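The two conditions read off Figure 1 can be expressed as a simple screen. This is a minimal sketch, assuming the 50% and 20% cut-offs inferred from the chart and that both inputs are supplied as fractions; the constant and function names are hypothetical, and the JCR publishes no such rule. Running it on the two cases above shows both what it captures and where it breaks down:

```python
# Hypothetical cut-offs inferred from the last three JCR editions (Figure 1);
# they are NOT published JCR criteria.
SELF_CITE_SHARE_MIN = 0.50   # share of JIF-directed citations that are self-citations
RANK_DISTORTION_MIN = 0.20   # percent distortion of category rank, as a fraction


def exceeds_self_citation_thresholds(self_cite_share: float, rank_distortion: float) -> bool:
    """Return True if a journal sits at or beyond both inferred thresholds."""
    return self_cite_share >= SELF_CITE_SHARE_MIN and rank_distortion >= RANK_DISTORTION_MIN


# Land Degradation & Development, 2015: a 33% self-citation share fails the
# first condition however large its rank distortion is (1.0 used as a worst case).
ldd_2015 = exceeds_self_citation_thresholds(0.33, 1.0)

# Foundations of Science, 2013: suppressed by the JCR, yet its 13% rank
# distortion falls below the inferred 20% line, so this screen misses it.
fos_2013 = exceeds_self_citation_thresholds(0.69, 0.13)

print(ldd_2015, fos_2013)  # False False
```

The Foundations of Science result is the caveat: a rule reconstructed from past decisions reproduces most of them, but not every one.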

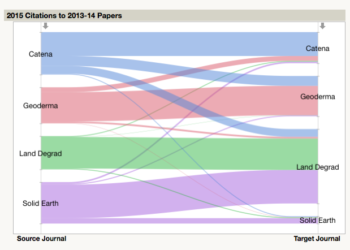

While fewer in number, journals suppressed for citation stacking (aka citation cartels) reveal two minimum thresholds: at least 80% of the citations from another journal must be directed at papers published in the previous two years (vertical line, Figure 2), and these citations must account for at least 15% (horizontal line) of the total citations used to calculate the target journal’s Impact Factor.

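In 2015, citations from Solid Earth accounted for 26% of the citations used to calculate Land Degradation & Development’s Impact Factor, above the 15% horizontal threshold; however, only 68% of Solid Earth’s citations to Land Degradation & Development were directed at papers from the previous two years, short of the 80% vertical threshold. Result: no suppression.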
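The stacking test can be sketched the same way, with both ratios computed from raw citation counts. This assumes my reading of the two axes: "concentration" is the share of the donor journal’s citations to the target that fall in the two JIF years, and "contribution" is the share of the target’s JIF numerator supplied by the donor. The names and the raw counts in the second example are hypothetical, not JCR data:

```python
# Hypothetical cut-offs inferred from Figure 2; NOT published JCR criteria.
CONCENTRATION_MIN = 0.80   # donor's citations to the target aimed at the two JIF years
CONTRIBUTION_MIN = 0.15    # share of the target's JIF numerator supplied by the donor


def stacking_ratios(donor_to_target_jif_years: int,
                    donor_to_target_all_years: int,
                    target_jif_citations: int) -> tuple[float, float]:
    """Compute (concentration, contribution) from raw citation counts."""
    concentration = donor_to_target_jif_years / donor_to_target_all_years
    contribution = donor_to_target_jif_years / target_jif_citations
    return concentration, contribution


def exceeds_stacking_thresholds(concentration: float, contribution: float) -> bool:
    """Both inferred conditions must hold for a stacking flag."""
    return concentration >= CONCENTRATION_MIN and contribution >= CONTRIBUTION_MIN


# Solid Earth -> Land Degradation & Development, 2015, using the percentages above.
print(exceeds_stacking_thresholds(0.68, 0.26))  # False: concentration is below 80%

# Made-up counts: 85 of 100 donor citations hit the JIF years, out of 400 JIF citations.
print(exceeds_stacking_thresholds(*stacking_ratios(85, 100, 400)))  # True
```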

Although the combined effects of self-citation and citation stacking more than doubled Land Degradation & Development’s 2015 Impact Factor, neither practice was sufficient on its own to trigger suppression. Based on my calculations for 2016, it is unlikely that either journal will be suppressed in the upcoming 2016 JCR.
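The "more than doubled" figure can be checked with a rough calculation. This assumes that the 33% self-citation share and the 26% Solid Earth share are both fractions of the same set of JIF-directed citations (they cannot overlap, since a citation from Solid Earth is not a self-citation) and that removing citations leaves the count of citable items unchanged. With $C$ the JIF-directed citations, $N$ the citable items, $s$ the self-citation share and $k$ the Solid Earth share (symbols introduced here for illustration):

$$
\frac{\mathrm{JIF}}{\mathrm{JIF}_{\text{adj}}}
  = \frac{C/N}{(1 - s - k)\,C/N}
  = \frac{1}{1 - 0.33 - 0.26}
  = \frac{1}{0.41}
  \approx 2.44
$$

On these assumptions, the published Impact Factor was roughly 2.4 times what it would have been without either practice.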


Reverse-engineering JCR decisions confirmed what I concluded in my last post: threshold levels for suppression are set extremely high, and JCR editors are unwilling to consider malfeasance, even when it is staring them in the face. If this is just a numbers game, shouldn’t the JCR simply make its criteria for suppression explicit and fully transparent? Why should it provide “guidelines” instead of figures? Would we all be better off with rules rather than rules-of-thumb?

Scopus, a literature index that generates journal-level metrics, has explicit performance benchmarks for journals. For example, journals are flagged when their self-citation rates are two or more times higher than those of peer journals in their field. Wim Meester, Head of Content Strategy for Scopus, explained by email that flagged journals are then referred to content selection and advisory boards, which determine whether they should remain in the Scopus index. As of May 2017, 303 titles had been discontinued from Scopus, 42 of them for not meeting metrics guidelines.
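The Scopus benchmark is easy to state as code, with one important gap: the post does not say how the peer baseline is computed. This sketch assumes it is the median self-citation rate of peer journals in the field; the function name and the peer rates are hypothetical. Note that the flag only routes a journal to the advisory boards; it does not decide anything by itself:

```python
from statistics import median

# Assumption: "peer journals within their field" is summarised by the median
# self-citation rate of those peers; Scopus' actual baseline is not stated in the post.
FLAG_MULTIPLE = 2.0


def scopus_style_flag(journal_rate: float, peer_rates: list[float]) -> bool:
    """Flag a journal whose self-citation rate is at least twice its peers' baseline."""
    baseline = median(peer_rates)
    return journal_rate >= FLAG_MULTIPLE * baseline


# Hypothetical field whose peers self-cite at 8-15% (median 11%, so the flag line is 22%).
peers = [0.08, 0.10, 0.12, 0.15]
print(scopus_style_flag(0.30, peers))  # True
print(scopus_style_flag(0.20, peers))  # False
```

Unlike the JCR thresholds above, this benchmark is relative: the same self-citation rate can be flagged in one field and pass in another.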

The Scopus team did discuss whether to discontinue indexing Land Degradation & Development and Solid Earth. Their decision to continue indexing, however, was made entirely in-house and did not involve an external advisory board.

Meester explained that discontinuing a journal’s indexing stops “the forward flow” of citation data. Discontinued journals do not show up in Scopus’ journal metrics or in other services that use Scopus data (CWTS Journal Indicators and SJR). In contrast, journals suppressed from the JCR continue to be indexed; they are simply barred from receiving an Impact Factor and other related metrics until they are reevaluated, often the following year.

Ludo Waltman, a researcher at the Centre for Science and Technology Studies (CWTS) of Leiden University, which hosts CWTS Journal Indicators, argues that title suppression decisions should be made by scientific communities, not by indexers. As he explained by email:

The rules for suppressing titles will always be arbitrary and debatable. As producers of journal metrics, we are simply not in the position to develop such rules, because we have insufficient background knowledge of what might be going on in specific scientific communities. I think we should therefore provide metrics for all journals in the database that we work with, even suspicious journals, but I also think we should provide additional statistics that can be used to get an impression of possible gaming of the metrics.

In sum, we are left with two models for curating citation data: the JCR model, where in-house editors determine which titles are evaluated, and the Scopus model, where editorial decisions are made by external groups of academics who advise on content selection. Neither model is perfect. Is one preferable to the other?

More importantly, now that we know how suppression decisions are made, should metrics companies suppress titles at all, or simply make the underlying data more transparent?

Discussion

7 Thoughts on "Reverse Engineering JCR’s Self-Citation and Citation Stacking Thresholds"

Phil lays out the data and the processes used by both organizations very clearly. To Phil’s point, more transparency around the data and the number of self-citations is very important. Let the reader make the determination on the quality of the article. Create an asterisk category for journals that self-cite. Self-citing should be neither a benefit nor a penalty. It should just be another data point for the reader of the article to know.

Both your posts have been very helpful. I too have been in editorial board meetings where the editors are frustrated that other journals have higher self-citation rates. In fact, when I show them the Impact Factors without self-citations, the numbers almost always flatten out. If the threshold is high, then I do believe it encourages bad behavior in an “everyone else is doing it” kind of way.

As far as policing this, that’s a tough one. It would be nice if Clarivate could demand an audit of journals suspected of citation stacking or excessive self-citation. Of course, they have no means of enforcing that. Journals are not necessarily customers of Clarivate, and Clarivate can index journals of its choosing. I suppose Clarivate could put a journal in a time-out. Think of it as an Expression of Concern: give the journal a few months to produce certain documentation in the form of an audit. Clarivate could then evaluate it and decide whether the journal is suppressed or released from concern.

Yes, just making the underlying data available is a much better option than suppression (especially if the process behind it is black-boxed).

In that sense, JCR’s model is superior to Scopus’. Threshold levels are transparent enough even if not explicitly stated (as you demonstrate in the post), and journals get suppressed only from the JCR, while all of their citation data still get captured in WoS during the suppression period.

Transparency was not sufficient.

Data detailing the contribution of journal self-citation to the JIF were published in the very first JCR in the 1970s, and in every JCR since. The specific contribution of the citing sources was fundamental to understanding and using any of the data in the JCR. Journal-to-journal citations to each of the past 10 years were/are displayed in both the Cited journal data (citations received, and the source of all the key performance metrics) and the Citing journal data (citations given). Any subscriber has full visibility into the role each – and every – other journal played in developing the JIF for that journal in that year.

Later releases of the JCR included not just data tables but graphical displays of journal self-citation and its contribution to overall citations and to JIF citations.

This was further supplemented by calculating and displaying journal self-citation percentages overall and journal self-citations in JIF. The JIF without the contribution of journal self-citations was also displayed.

Finally, the JIF without journal self-citations became a stand-alone metric in the JCR such that journals could be ranked by JIF with or without the contribution of journal self-citations.
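The derived figures described here (self-citation share of JIF citations, and the JIF without self-citations) follow directly from the journal-to-journal counts in the Cited journal data. A minimal sketch, assuming the standard two-year JIF (citations in year Y to items published in Y-1 and Y-2, divided by the citable items from those two years); the journal names and counts are hypothetical:

```python
def jif_with_and_without_self(citations_by_source: dict[str, int],
                              target: str,
                              citable_items: int) -> tuple[float, float, float]:
    """Return (JIF, JIF without self-citations, self-citation share of JIF citations).

    citations_by_source maps each citing journal to the citations it gave in
    year Y to the target's items from Y-1 and Y-2.
    """
    total = sum(citations_by_source.values())
    self_cites = citations_by_source.get(target, 0)
    jif = total / citable_items
    jif_no_self = (total - self_cites) / citable_items
    self_share = self_cites / total
    return jif, jif_no_self, self_share


# Hypothetical target "Journal A" with 100 citable items in the two JIF years.
cites = {"Journal A": 120, "Journal B": 30, "Journal C": 50}
jif, jif_ns, share = jif_with_and_without_self(cites, "Journal A", 100)
print(jif, jif_ns, share)  # 2.0 0.8 0.6
```

The same per-source breakdown is what makes a single dominant citing journal visible, as in the citation-stacking case described next.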

Transparency did not prevent the manipulation of journal self-citations.

The publisher who first reported the phenomenon of “citation stacking” used the JCR customer interface to explore a suspicious rise in a JIF, noted the anomalous contribution of a single journal concentrated in the JIF years, and went on to identify a specific article in WoS that accounted for this “stack” of citations. The publisher alerted the JCR team. Phil reproduced that same trace in a blog post on the topic: https://scholarlykitchen.sspnet.org/2012/04/10/emergence-of-a-citation-cartel/

Transparency allowed discovery, but it didn’t prevent the behavior. The following year, a cluster of journals was suppressed for “stacking”: http://www.nature.com/news/brazilian-citation-scheme-outed-1.13604

For the first few years that JCR took action against citation manipulation, journal suppression was based on the prior year’s published JCR data. This allowed the specific data underlying the suppression to remain visible to support the decision to remove the JCR listing for a title. Any inquiry or dispute over a suppression could point directly to the data from the prior year – still on display in the JCR.

Suppressing in the subsequent year was not entirely satisfactory, because it allowed that one distorted JIF to be published. Analysis was moved upstream so that it could respond to the current year’s data, and this led to additional details being published to support the suppression. These are the data you used to create the graphs in the post, which, honestly, are pretty cool.

Transparency was insufficient, since few people examined the underlying data, even when it was called out graphically, and even when the targeted metric was displayed without journal self-citations as a general practice. Responding to the citation stacking suppressions, you wrote: “To me, the suspension of journals from the JCR represents a serious consequence to editors wishing to manipulate an important rating and a warning to others that such behavior is clearly unacceptable.” (https://scholarlykitchen.sspnet.org/2012/06/29/citation-cartel-journals-denied-2011-impact-factor/ )

The graphs in your post are very clever, but they do not include the overwhelming body of journals arranged along those two axes. That more inclusive graph would show two important things: 1) that the smattering of dots in the top-left quadrant are rare outliers; and 2) that there are many journals edging up to the “thresholds”, and their data are displayed in the JCR for users to consider in a rational assessment of journal performance. However, no matter where you put a threshold, there will be one journal just above and one journal just below.

These near-miss journals are a more challenging problem: how to draw attention to that group, encourage use of the additional, corroborative data available, and support an additional level of accountability and consequence to deter the behavior. A lot of drivers slow down when they see one car pulled over for speeding.
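One way to surface the near-miss group is to list journals that fall just under a threshold rather than only those over it. A minimal sketch, assuming the inferred 50% self-citation line from the post and an arbitrary 10-point margin; the margin, function name and journal data are all hypothetical, not JCR parameters:

```python
# Hypothetical screen for "near-miss" journals just below the inferred 50%
# self-citation line; the margin is an illustrative choice, not a JCR parameter.
SELF_CITE_SHARE_MIN = 0.50


def near_misses(self_cite_shares: dict[str, float], margin: float = 0.10) -> list[str]:
    """Journals whose share is below the threshold but within `margin` of it."""
    return sorted(
        name for name, share in self_cite_shares.items()
        if SELF_CITE_SHARE_MIN - margin <= share < SELF_CITE_SHARE_MIN
    )


shares = {"Journal P": 0.52, "Journal Q": 0.47, "Journal R": 0.41, "Journal S": 0.12}
print(near_misses(shares))  # ['Journal Q', 'Journal R']
```

Journal P is excluded because it is already over the line; the screen only moves the boundary problem, since a journal at 39% is now the one "just below".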

Whether the public exposure of LADD should have trumped any/all other “criteria” is certainly debatable. Admission is “proof” of intent, but not all intent can be proved, which makes a criterion of “intent” hard to enforce. Numeric criteria allow uniform application: all journals that exceed the thresholds will be suppressed, and no journal that does not exceed them will be.

JCR suppression analysis should be independent of whether there was intention to manipulate and depend only on whether the ranking of titles in a subject area is distorted.

You asked in a prior post, “How much citation manipulation is acceptable?” The answer, of course, is “None.” The problem then turns to thresholds of detection, and thresholds of certainty that can be objectively applied to all journals.

Hi Phil

Have you seen the new Impact Factors for LDD, Catena, Solid Earth and Geoderma? These journals were involved in the citation cartel of Professor Cerdá, Breivik, etc. … All of these journals increased their IFs. ALL, especially LDD.

Well, I have a paper published in LDD in 2016, and I thought that this year it would be deleted from the JCR. I suppose it will be deleted next year…