A recent study, released as a preprint, offers something of a muddled bag of methodological choices and compromises, but presents several surprising data points: voluntary publisher efforts may be providing broader access to the literature than Gold or Green open access (OA), and claims of an open access citation advantage show some confounding shifts.

“The State of OA: A large-scale analysis of the prevalence and impact of Open Access articles” is posted as a preprint, and like all preprints, it has not yet gone through peer review and must be viewed with some level of skepticism. It suffers from a variety of issues which will hopefully be addressed via a rigorous peer review process should the article be submitted for formal publication.

As written, the paper veers too far into advocacy. One of the key jobs of a journal editor is to insist that authors stick to the data, rather than voicing opinions and letting their political and social views, or even their theoretical suppositions, color the factual reporting of research results. Here the authors do things like make suggestions to libraries about how they should allocate future budgets, which would be better suited to an article labeled as an “editorial”.

The preprint contains scant information on their statistical methods. It’s unclear whether the authors controlled for article properties like age, paper type, and journal type, etc. Some of the biggest problems come from the authors’ characterizations of papers, and in particular the definition of “open access.” A significant portion of the introduction of the paper discusses the many variations and confusions around the term “open access.” Here they create a novel definition for OA, which does not follow that of the more commonly cited Budapest Open Access Initiative (BOAI), instead declaring everything that can be read for free, regardless of reuse terms, to be OA.

But not everything that can be read for free — papers that can be found on academic social networks like Mendeley and ResearchGate are excluded from the study because of concerns over ethics (many of the posted papers violate copyright) and over reliability, as availability of papers on these networks may be fleeting. This seems an odd distinction, given that many of the papers they do include in the study could be similarly characterized (Do all repositories check all deposits for copyright compliance? Availability of articles made free on publisher sites for promotional purposes may be similarly fleeting.) I suspect the real reason for leaving out scholarly collaboration networks is a more practical one — due to their proprietary and closed nature, the authors didn’t have a good means of indexing their content.

Further, where many articles fall into multiple categories, the authors here assign each to a single category. Many journals provide free access to the articles they deposit in PubMed Central for example. In this study, such a paper would only count as the version in the journal, and all other copies in repositories are ignored. A paper in a Gold OA journal may be deposited in hundreds of repositories, but those are not counted in this analysis. This makes it difficult to attribute any effects to any particular factor, because you don’t know which version is responsible (or even whether there are indeed multiple versions playing a role). A better way to do the analysis would have been using the availability for each designation as an indicator variable. In that way you can measure the effect of each designation on the performance of the paper.
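To make the difference concrete, here is a minimal sketch in Python. The paper records, the flag names, and the precedence order (publisher-hosted versions winning over repository copies) are all assumptions invented for illustration, not the authors' data or code. It contrasts assigning each paper to one exclusive category with recording availability as a separate indicator for each designation.

```python
from collections import Counter

# Hypothetical availability records; flags and values are invented for illustration.
PAPERS = [
    {"id": "p1", "gold": True,  "hybrid": False, "bronze": False, "green": True},
    {"id": "p2", "gold": False, "hybrid": True,  "bronze": False, "green": True},
    {"id": "p3", "gold": False, "hybrid": False, "bronze": True,  "green": False},
    {"id": "p4", "gold": False, "hybrid": False, "bronze": False, "green": True},
    {"id": "p5", "gold": False, "hybrid": False, "bronze": False, "green": False},
]

# Assumed precedence: a publisher-hosted version hides any repository copy.
PRECEDENCE = ["gold", "hybrid", "bronze", "green"]


def exclusive_category(paper):
    """Return the first matching label in precedence order, else 'closed'."""
    for label in PRECEDENCE:
        if paper[label]:
            return label
    return "closed"


def indicator_counts(papers):
    """Count each designation independently, so one paper can count several times."""
    return {label: sum(1 for p in papers if p[label]) for label in PRECEDENCE}


exclusive = Counter(exclusive_category(p) for p in PAPERS)
indicators = indicator_counts(PAPERS)

print("exclusive:", dict(exclusive))
print("indicators:", indicators)
```

In this toy data, three papers have a repository copy, but only one lands in the exclusive "green" bucket. With indicators, each designation could instead enter a citation model as its own variable.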

The authors invent a new flavor of OA, “Bronze,” which they describe as, “articles made free-to-read on the publisher website, without an explicit open license.” I’m not sure this helps do much other than add yet another confusing term to the pile. For years we have referred to freely available copies without getting into any particular license requirements as “public access”, and the US government, in their policy statement, uses this term to avoid such confusion.

With all those caveats in mind, there are two data points that caught my eye. We know that in recent years, many publishers have de-emphasized archive sales in favor of focusing on current subscriptions and Gold OA. Many mission-driven publishers have also increased their efforts to make more papers freely available, driving access as broadly as possible, hopefully without harming their subscription base. And so older papers are frequently made publicly accessible.

The extent of these efforts becomes evident in the data collected.

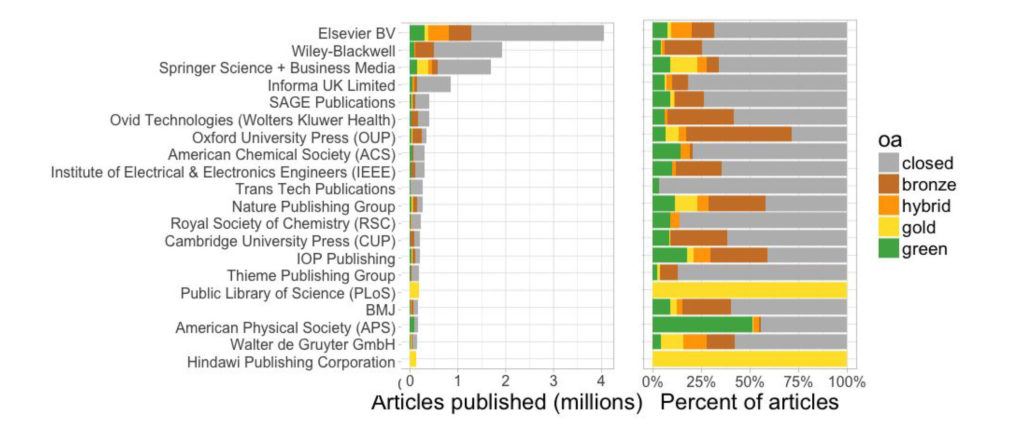

Bronze OA, or rather papers voluntarily made publicly accessible by publishers, outnumbers every other category of OA:

> Bronze is the most common OA subtype in all the samples, which is particularly interesting given that few studies have highlighted its role.

Several publishers, the study points out, are notable in that more than 50% of the articles they publish are freely available. These include the Nature Publishing Group (inexplicably separated out from Springer Nature), IOP Publishing, the American Physical Society, and Oxford University Press (full disclosure, my employer).

This has largely gone unnoticed, or at least uncommented upon in policy and advocacy circles. Publishers are responding to research community and funder desires, and are voluntarily opening up access to enormous swathes of the literature. This is all being done at no cost to the research community, and perhaps deserves greater attention.

The other interesting point seen from the data is about the alleged Open Access Citation Advantage (OACA). At this time, the argument is really moot. Given the existence of Sci-Hub, every single article published is freely available. There is no longer a corpus of articles that one can claim is strictly behind a paywall. This essentially abolishes the control group and removes most of the relevance from the question being asked, as everything is freely available.

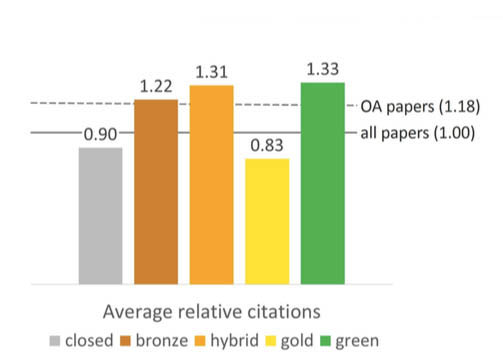

That said, again, this study presents unexpected results. Of the OA classifications used in this study, citation performance ranked (highest to lowest) as follows: Green, Hybrid, Bronze, Closed Access, and last Gold, which surprisingly offers a citation disadvantage. Far too much can be read into these results, and new confounding factors muddy the picture even further. Here the refusal to count articles that fall into more than one category makes it impossible to attribute results to any particular factor. Many Hybrid, Bronze and Gold articles are also Green articles, yet these are excluded from the Green measurement offered.

Green OA articles (those found deposited in repositories) presumably favor authors with funding and subsequent deposit requirements, as well as authors who have or intend to remain in research over the long term. Much deposit in repositories is driven by mandates, and those not building a long term career in research are less likely to respond to pressure to follow those mandates. Authors may also be more willing to deposit their stronger papers in public repositories than their lesser works. Whether these factors are playing any role in the papers’ citation performance cannot be ruled out.

Hybrid journals have been argued to offer higher quality publications than fully-OA journals by some measures, and this study suggests they are a more effective means of driving future research (at least as measured by citations), this despite efforts to de-fund the use of hybrid journals or penalize authors for choosing this path. Bronze performance is not surprising, in that many journals make highlighted articles freely available, freeing up access to carefully selected papers for marketing purposes. Again, since both of these categories include Green OA papers found in repositories, the causation behind their citation performance remains unknowable.

But what to make of Gold OA coming in last, even behind articles that remain accessible only to subscribers? Clearly the notion that one can simply buy citations by paying an OA fee can now be dismissed as untrue. The authors suggest that the Gold category is becoming watered down by low quality publications:

> Interestingly, Gold articles are actually cited less, likely due to an increase in the number of smaller, less prestigious OA journals (Archambault et al., 2013), as well as continued growth of so-called ‘mega journals’ such as PLOS ONE (PLOS, n.d.).

So while I’m hesitant to put much weight behind the paper’s conclusions, there are a few data points noted that warrant further investigation. Too much goalpost shifting has gone on in this paper, whether due to experimental convenience or a desire to reach certain conclusions, to rely solidly on what’s being offered here.

But if these data points hold up, particularly the state of voluntary efforts by publishers to broaden access to the literature, it may be time for a new narrative to emerge, one that talks about the collective efforts of all parties rather than pointing fingers at enemies or villains.

Discussion

10 Thoughts on "Study Suggests Publisher Public Access Outpacing Open Access; Gold OA Decreases Citation Performance"

Here we have a paper that reeks of bias, is not peer-reviewed, has major methodological issues, ignores simple factors that could explain away an invented buzzword (“Bronze OA”), and makes basic errors in categorization. Yet, Nature News covered it in uncritical terms a while ago (https://www.nature.com/news/half-of-papers-searched-for-online-are-free-to-read-1.22418).

Because this was released how it was, now we have to battle against what must certainly be weak or wrong information. Perhaps our level of skepticism for preprint papers should be higher.

Hi Kent,

Thanks for letting us know you see major problems with the paper. Since, as you point out, the piece is not yet formally published, this is a great chance for us to fix these issues before the die is fully cast, as it were. If you can give us some more specific pointers, we can perhaps address some of your concerns in our revisions, and I’m sure our future readers will thank you!

I think this preprint would hold up as a methods paper: Here, we present a tool for identifying freely available papers in a limited number of forums (publishers’ websites and library repositories) PERIOD. The problem is that they attempt to confirm a theory (OA-citation advantage) that has as little relevancy (or sense) today as it did 16 years ago. Coupled with a complete lack of a theoretical foundation and statistical rigor, this paper just confuses the issues even more.

I should note that the overall citation effect they present (18%) is at least a full factor lower than the numbers being pushed by OA advocates a decade ago. If the OA-citation effect really exists, it is small, likely overstated due to confounding effects, and, in reality, has little practical effect.

Thanks for your helpful comments on our paper, David. We’ll address these in revisions we’re currently working on for peer-reviewers, and the final published paper will be better for it (it will appear in PeerJ).

Although this isn’t the right venue for a complete response, I’d also love to address a few of your comments here as well.

> The preprint contains scant information on their statistical methods. It’s unclear whether the authors controlled for article properties like age, paper type, and journal type, etc.

We asked two questions: 1) What % of the lit is OA, and 2) What’s the citation impact of OA. The first is merely descriptive and of course requires no “controls” as such, although we do describe the distribution by age and publisher, and as reported in the text we analyze only papers classified as “journal-article” by Crossref.

The second does require controls as you describe, and we discuss these in the text on pp 10-11. To summarize: We restrict the documents to WoS-defined “citable items,” and then control for discipline and article age.

Clearly we did a poor job making this clear in the paper, since you didn’t see it! That’s useful feedback, and we’ll work to make it more evident in this next revision. Part of the problem is that the paper is so long…

> Some of the biggest problems come from the authors’ characterizations of papers, and in particular the definition of “open access.”

I really sympathize with you here. However, I think the problem is out of our hands to fix. The more one reads the OA literature, the more one realizes the frustrating diversity, instability, and vagueness of definitions. Consequently, it’s simply *impossible* to use any taxonomy that doesn’t provoke argument. If I use the definitions you prefer, then I’m disagreeing with definitions used elsewhere in the literature (Laakso and Björk, for instance, whose OA papers are hugely influential).

This is hugely frustrating to me, because personally I don’t care about the definitions at all…I just wish the literature would settle on something, because otherwise discussion of terms ends up hijacking the discussion. Anyway, we’ve done our best to support our taxonomy with extensive references, and I think beyond that we’ll have to just admit it can’t please everyone.

> I suspect the real reason for leaving out scholarly collaboration networks is a more practical one — due to their proprietary and closed nature, the authors didn’t have a good means of indexing their content.

There’s some truth to this :). But beyond that, we do think the issue of ethics is a meaningful one. After all, I doubt you would call Sci-Hub papers “OA” or “public access,” yeah? Research suggests that 50% of RG papers are posted in violation of copyright. For us, that puts it out of scope of this study, along with Sci-Hub. IRs typically (though not always!) invest in compliance such that violation is much lower. But we’re sympathetic to other ways to split the domain, and eager to see more research on RG in either case.

> For years we have referred to freely available copies without getting into any particular license requirements as “public access”, and the US government, in their policy statement, uses this term to avoid such confusion.

That’s a good criticism. You have a great point that “public access,” while not super common in the research literature, is quite well-established in practice, which is a huge plus. I guess the downside is prosaic…it breaks the symmetry of the categories! “gold OA, hybrid OA, green OA,…public access OA?” We’ll think about it.

> the paper veers too far into advocacy….the authors do things like make suggestions to libraries about how they should allocate future budgets

I agree that it’s important we avoid any advocacy! It will only hinder the impact of the paper. Could you give some more instances? We do report implications for libraries, but that’s less advocacy to me, and more just “Discussion section.” One does want to mention implications. If you can point out the other advocacy areas, we will correct those, though. I’ve written many advocacy papers, but that’s not the goal of this one.

> A better way to do the analysis would have been using the availability for each designation as an indicator variable.

It’s a fair point, and indeed some studies have taken this approach. We do include in the appendix a graph showing green OA *disregarding* whether or not it’s open elsewhere, which is close to what you’re looking for. But in the main analysis, we prefer exclusive categories because 1) it’s more consistent with usage of the term “Green OA” in practice and, relatedly, 2) it’s more consistent with a reader’s experience. For most readers, the publisher version is the *real* copy. If I find it on the publisher site, I’m done looking…if it’s available on something called an “institutional repository,” whatever that is, that’s irrelevant for me. So that’s how we do our taxonomy.

Ok, I will wrap it up there, and apologies for the long reply. You raise good issues, and again, I appreciate your taking the time to share your feedback. Oh, and I hope that your readers didn’t miss the main takeaway of the paper–that OA is experiencing steady growth, and that users of OA discovery extensions like OA Button or Unpaywall (which I helped make) are currently reading around HALF the literature for free, legally. I thought that was a really interesting finding.

Thanks to David for drawing my attention to this, and to Jason for a helpful and good natured response, and for emphasising the overall takeaway which I agree is eye opening even while it accords with my own experiences!

Jason – You and your colleagues seek to answer two important questions in your paper. Fantastic! As for revision suggestions, I agree with David Crotty, Mark Patterson, and Stuart Taylor about the problematic classifications. This is somewhat confounded by the focus on where a paper is published or accessed and what, if any, bearing this has on answering your main question “what % of papers are OA”? For example, would the paper’s OA status be any different if accessed on PubMed Central rather than the publisher’s site? Can “what % of papers are OA” be answered with a simpler set of classifications using descriptive terms and phrases rather than promulgating the confusion caused by the conflicting meanings of terms like green, gold, and bronze (yes, that’s public access/gratis) – e.g. simplifying it down to freely accessible and reusable, freely accessible but not reusable, not freely accessible or reusable?

Upon seeing the graph in this post, the first thought I had was, “I’ve seen this before…” and then I remembered where. For those who attended Clarivate Analytics’ update on September 20, you’ll remember that they cited this study quite heavily in their webinar. I do not recall them clarifying that it was a preprint–does anyone else? I’m a bit put off by that.

Jason, I think there is also a problem with the counting of citations. It seems the method used ignores factors that might influence the results, particularly the conclusion that for publishers, being or becoming a gold open access journal is bad business in terms of citations, and that for authors, publishing in gold journals will imply less impact: (a) language of articles – about 10% of WoS 2015 OA golden articles are not in English; (b) seniority of journals – 22% of WoS 2015 OA journal articles are from ESCI, and about 20% of SCIE or SSCI OA golden journals were not in JCR in 2011; and (c) it is well known that citations received by journal articles are distributed approximately according to a Pareto 80-20 rule. So, unless properly identified, ailed individual articles cannot be compared with all journal articles, which occurs for example when opened articles are more relevant than closed ones. Another point is that the “large-scale” analysis restricts publishing to the traditional publishers and ignores solutions like SciELO, which has been operational for 2 decades already.

Additionally, as I argue in my blog post (http://openscience.com/recent-pre-print-findings-cast-doubt-and-spark-discussion-on-the-citation-performance-of-open-access-journals/), the relative citation under-performance of Gold Open Access articles may be related to the economic performance of the respective journals and publishers, as well as possible budgeting constraints, since not all Open Access journals charge article processing charges and PLoS, as one of only two primarily Gold Open Access publishers in the sample of Piwowar et al. (2017), has seen its number of articles, revenues and very possibly citation ratings go down in recent years.

One thing to consider is that citation is a slow process. If one assumes a continuing growth in Gold OA uptake, then how much of that population of papers represents recent articles, versus the other categories, which may lean toward older articles that have had a long time to collect citations? It’s unclear from this study whether they controlled for age of article.
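A toy Python sketch shows how article age alone could produce such a gap. Every number below is invented for illustration and none comes from the study. In this made-up data, Gold and Closed papers published in the same year collect identical citations, yet the pooled averages differ because the Gold sample skews recent.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical (category, publication_year, citations) records, invented for illustration.
RECORDS = [
    ("gold", 2015, 2), ("gold", 2015, 2), ("gold", 2015, 2), ("gold", 2010, 10),
    ("closed", 2015, 2), ("closed", 2010, 10), ("closed", 2010, 10), ("closed", 2010, 10),
]


def pooled_means(records):
    """Mean citations per category, ignoring publication year."""
    by_category = defaultdict(list)
    for category, _year, citations in records:
        by_category[category].append(citations)
    return {category: mean(values) for category, values in by_category.items()}


def means_by_year(records):
    """Mean citations per (category, year) cell, i.e. stratified by article age."""
    by_cell = defaultdict(list)
    for category, year, citations in records:
        by_cell[(category, year)].append(citations)
    return {cell: mean(values) for cell, values in by_cell.items()}


pooled = pooled_means(RECORDS)
stratified = means_by_year(RECORDS)

print("pooled:", pooled)
print("by year:", stratified)
```

Here the pooled comparison suggests Gold papers are cited half as often as Closed ones, while the year-by-year comparison shows no difference at all. Whether anything like this is at work in the real data depends on how the age control was actually implemented.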