In the past, journal growth was mostly horizontal. Publishers were always on the lookout for the next new discipline, sub-discipline, a cross-disciplinary approach based on new methods. If you acted quickly, lined up your editors and launched before your competition, you were likely to secure your place as a successful journal. Act late and you found yourself in a crowded field starving for submissions.

Journal growth seems more vertical these days. Instead of building horizontally through specialization, many publishers have been focusing on their cascade — a term used to describe a portfolio of related journals ordered vertically by measured (and perceived) importance.

This realignment of thinking, from a horizontal to a vertical approach, is likely the result of technological integration of the publication process. In the paper-based model, transferring a manuscript to another journal meant lots of photocopying, bundling, and mailing. It was far easier to send the author a rejection letter.

Electronic management of the manuscript submission and review process has been around for several decades; however, it was initially designed and built around the traditional silo model of one-journal-one-process. Moving a manuscript from one journal to another often meant resubmission.

Today, transfer is often built into the submission process. If you didn’t get into Journal A, editors can recommend transfer to another title within the publisher’s portfolio and, sometimes, to a title at another publisher. Many submission systems require authors to select from a list of secondary and tertiary titles when uploading their manuscript, and when manuscripts transfer, reviews and editorial decisions can go with them.

Solving the transfer problem has created a widespread perception that rejecting a manuscript — especially after considerable time and resources have been devoted to its review — is downright wasteful. If it’s publishable, why not publish it?

This rhetorical question makes the matter sound simpler than it is. Often a publisher and its editorial board support the idea of publishing more papers in theory, only they want these papers to go somewhere else, lest they dilute the exclusivity of the journal and its brand. Editors are also hesitant to be associated with a “journal of rejects.” While there are excellent arguments for increasing transparency in the peer review and publication process, the case for keeping rejection and transfer information private and confidential provides a pointed counter-argument.

Before working on the myriad details involved in starting any new journal, a publisher considering a cascade journal built initially from its source of rejected papers should consider its manuscript flow. If current submissions cannot support a new journal, then it should reconsider the prospect before devoting itself (its staff and its money) to a new title. Consider the following hypothetical scenario:

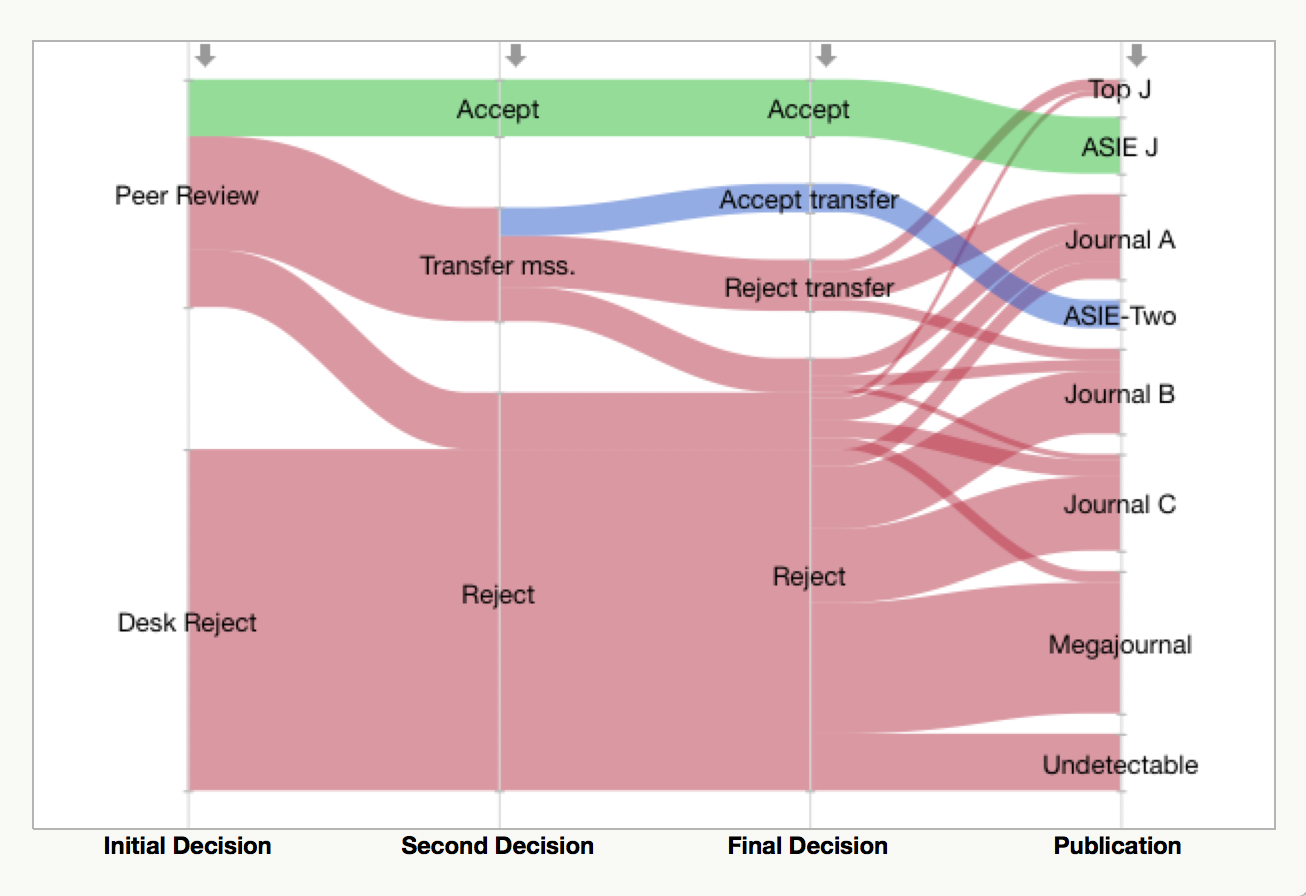

The American Society for Irrational Exuberance (there is none, but there should be!) is the publisher of The ASIE Journal. ASIE J receives 1000 original manuscript submissions each year, 40% (400) of which go through the peer review process, while 60% (600) are desk-rejected. One-quarter (100) of peer reviewed papers are accepted and published in ASIE J (green flow), for an overall acceptance rate of 10%. Of the remaining 300 peer reviewed papers, the best 200 are transferred to the society’s new journal, ASIE-Two, which employs much less stringent acceptance criteria. The editor of ASIE-Two recommends publication for half (100) of these manuscripts; however, just one-half of the corresponding authors accept the editor’s offer to publish in ASIE-Two, while the other half revise their manuscripts and submit elsewhere. We are left with just 50 published papers (blue flow).
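For readers who want to check the arithmetic, the funnel in this hypothetical scenario can be sketched in a few lines of Python. This is only an illustration: the function and field names (`asie_flow`, `CascadeFlow`) are made up for this example, and the rates are the ones stated in the scenario, not real journal data.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass
class CascadeFlow:
    """Manuscript counts at each stage of the hypothetical ASIE funnel."""
    submissions: int
    desk_rejected: int
    peer_reviewed: int
    accepted_parent: int      # published in ASIE J (green flow)
    transferred: int          # sent on to ASIE-Two
    offered_cascade: int      # ASIE-Two editor recommends publication
    published_cascade: int    # authors accept the offer (blue flow)


def asie_flow(submissions: int = 1000) -> CascadeFlow:
    """Apply the scenario's stage rates; exact fractions avoid float drift."""
    peer_reviewed = round(submissions * Fraction(40, 100))
    desk_rejected = submissions - peer_reviewed
    accepted_parent = round(peer_reviewed * Fraction(1, 4))
    rejected_after_review = peer_reviewed - accepted_parent
    # "The best 200" of the 300 reviewed-then-rejected papers, i.e. two-thirds.
    transferred = round(rejected_after_review * Fraction(2, 3))
    offered_cascade = round(transferred * Fraction(1, 2))
    published_cascade = round(offered_cascade * Fraction(1, 2))
    return CascadeFlow(
        submissions, desk_rejected, peer_reviewed, accepted_parent,
        transferred, offered_cascade, published_cascade,
    )


flow = asie_flow()
print(flow.accepted_parent, flow.published_cascade)  # 100 50
```

Each stage looks generous on its own, yet the stages multiply: only 5% of the original submissions end up in ASIE-Two.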

Peer review is not a perfect process and editors are not always able to detect the most important papers. A few of the manuscripts that were transferred to ASIE-Two, but rejected, were published in the top journal in their field (Top J). Other transferred-then-rejected papers were published in disciplinary journals (A, B, C) or found their way into megajournals. Similarly, a few desk-rejects (red flow) find their way into good disciplinary journals, yet the vast majority were ultimately published in lower ranked titles, megajournals, or could not be found (undetectable).

If you considered the number of manuscripts coming in to ASIE J each year (1000), and its selectivity (10%), you might initially believe that this journal could support a second journal, but it can’t. Initial manuscript flow has to be much greater. Until ASIE-Two can start attracting its own submission flow, it will need to rely on its parent journal for support. However, ASIE J cannot afford to be less picky, or it will risk its standing among its competitors. And if ASIE J is too picky, it risks angering its society membership and author base.
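To get a rough sense of how much greater, one can invert the funnel: multiply the stage rates from the scenario to get an overall yield, then divide a target output by it. The sketch below assumes the same hypothetical rates hold at any volume, and the target of 100 papers a year is purely an illustrative number, not a real indexing threshold. The names `STAGE_RATES`, `cascade_yield`, and `submissions_needed` are invented for this example.

```python
import math
from fractions import Fraction

# Stage rates taken from the hypothetical ASIE scenario (assumed constant).
STAGE_RATES = [
    Fraction(2, 5),  # sent out for peer review (400 of 1000)
    Fraction(3, 4),  # reviewed but not accepted by ASIE J (300 of 400)
    Fraction(2, 3),  # transferred to ASIE-Two (200 of 300)
    Fraction(1, 2),  # ASIE-Two editor recommends publication (100 of 200)
    Fraction(1, 2),  # authors accept the offer (50 of 100)
]


def cascade_yield(rates=STAGE_RATES) -> Fraction:
    """Share of parent-journal submissions that end up in the cascade journal."""
    total = Fraction(1)
    for rate in rates:
        total *= rate
    return total


def submissions_needed(target_papers: int, rates=STAGE_RATES) -> int:
    """Parent-journal submissions required to yield `target_papers` per year."""
    return math.ceil(target_papers / cascade_yield(rates))


print(cascade_yield())          # 1/20
print(submissions_needed(100))  # 2000
```

Under these assumptions, doubling the cascade journal's output means doubling the parent journal's submissions, which is not something a publisher can simply decide to do.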

“We’ll start small and grow over time,” the pragmatic editor of ASIE-Two might humbly offer. But, without sufficient papers, the journal will likely remain unindexed and not receive any recognized performance ratings. This will make it difficult to attract its own submissions. Convincing authors to have their manuscript transferred to a new journal with no standing and an uncertain future may be harder than the above flow diagram suggests. Manuscript transfer is a wonderful invention, but it doesn’t solve a fundamental flow problem.

Anecdotally, I’ve heard from smaller society publishers that they are suffering from declining submissions from titles located upstream. Rejections from Lancet, JAMA, and Cell are flowing down their own journal cascades. Even disciplinary publishers have established cascade journals intended to keep manuscript flow from their competitors. In this environment, it is tempting to conclude that you need your own cascade. Nevertheless, many smaller publishers are not in a position to start these kinds of journals. They simply don’t have the flow.

Note: The above diagram (called a parallel plot, flow diagram, or alluvial plot) was generated using JMP (SAS). Data tracking the fate of rejected manuscripts can be found in products such as HighWire’s Rejected-Article Tracker.

Discussion

9 Thoughts on "Not Every Publisher Can Support A Cascade Journal"

Good article Phil. We have a real need to soak up demand for publishing in our journals with new journals – we have significant flow. It is interesting balancing mission and business in this context. My view is that one should publish more, and do this through launching new journals, or splitting existing journals, recognizing that one needs to maintain the highest quality (in our case – not for everyone) article output. One also faces the conundrum that there is clearly a demand to publish more, and no increase in budgets available to pay for more content from customers, and in some fields no thirst for authors to pay APCs.

The problem I see here is that the focus has moved away from publishing quality archival material to the business model and how best to succeed financially in a competitive environment.

One publisher publishing multiple journals in a given field with greatly varying review standards is not the answer; it is the problem. If a given paper does not meet the highest applicable standard for publication in its field, it should not be published. The world doesn’t need more mediocre science with questionable data, sloppy analysis, and poor writing.

The root of this, as I see it, is the trend toward viewing scholarly journals as a resource for authors rather than as a resource for readers. The requirements some universities have set, where a student must be a published author in a journal with a specified minimum impact factor to secure their degree, have led to an influx of sub-standard papers written only as a graduation requirement. Often these papers merely reproduce work done years earlier on the assumption that what was published once is by definition worthy of publication. And when scholarly journals publish those papers in service to the next generation, they end up flooding the archives with material that does not stand up over time and which, paradoxically, ends up lowering their impact factors, making them ineligible to serve the very purpose that they are trying to serve by publishing those papers.

Competing journals from competing publishers with varying standards is one thing. Having one publisher put out “The Journal of Quality Chemistry”, “The Journal of Mediocre Chemistry”, and “The Journal of Sub-Standard Chemistry” to avoid rejecting papers is something else entirely. We should avoid the cascade approach altogether and hold each paper submitted to the highest standards applicable.

Paul, you make a good point about the quality issues. That said, there are “cascading” practices that are a lot more nuanced than quality. I have 35 journals in my program and papers move between journals a whole lot. An author can submit to journal A, which covers a broad topic like Structural Engineering. This journal gets over 1,000 submissions and accepts 15%. Some of those rejected papers receive transfer decisions to journals like architectural engineering or construction journals. The topic is not out of scope for the structural journal, but that journal may only accept the top 5% of construction-related papers submitted to it. This is in order to maintain the multidisciplinary nature of the general journal. Having some of the rejected construction papers go to one of our construction journals does not make the construction journal a lesser quality. The same level of peer review under the same standards is applied to every journal.

Any publisher with a medium to large number of journals will have some transfer or cascading that happens. I don’t think that is a problem, I think it shows that there is a dedicated user base and loyalty to that family of journals. I do agree with you that holding on to poor papers for the sake of boosting numbers is a game best avoided.

Your assessment of “the trend toward viewing scholarly journals as a resource for authors rather than as a resource for readers” resonated with me. The need to balance a publication as a scientific service to the community and publishing as a business model should be self-evident. Journals that can’t at least support their own costs after a period of initial investment won’t survive. But the shift away from the published article as a resource for readers is an intriguing idea about why article cascades and expanded journal portfolios have occurred and continue to occur. However, I don’t think that poorly executed science should be published at all: It is incumbent on the editors or reviewers to convey the fatal flaws to the authors so that those studies don’t just cascade (wasting everyone’s time in the process) into the Journal of Poorly Executed [Discipline of Choice]. The “incremental” science that often makes up rejected submissions is critical to move from ground-breaking conceptual science (often published in a high-profile, top-tier journal) to believable science with an understanding of the conditions under which the ground-breaking concept holds true.

Paul, you make a strong and cogent argument. Indeed, in a rational world where there are few journals, quality should rule. However, in a competitive world which demands top- and bottom-line performance, it seems that quality is suffering. But then again, one person’s gold is another’s dross.

It seems to me that cascading is a double-edged sword. One must have an established journal in the stable that needs papers to meet its publishing schedule or to grow. If that secondary journal becomes reliant on the cast-offs from the primary journal, and authorship in it is looked upon as being less than sterling, then authors will not agree to being in the secondary journal. Worse still, the secondary journal may lose all its authorship because it becomes known as a dumping ground!

This is a very interesting article. It’s very easy to be blinded by top-line numbers and over-estimate what would come through the pipeline in reality.

It feels like there is a compromise to be had with setting up new journals and using a cascade as a stepping stone, i.e., combining a cascade model with a proactive commissioning approach. For example, it can be difficult to attract submissions of original research for a new launch, a lack of which makes indexing more difficult further down the line – this is much easier using a cascade approach.

One has to think about the establishment of a new journal as a long-term project. Back in the subscription days, the rule of thumb was that a successful journal started running in the black within 5 to 7 years, and had paid off all costs in 7 to 10 years. Things are a little different with open access because you can start earning revenue earlier in the process, rather than having to wait and build a readership before you can sell subscriptions.

But if you’re thinking about the long-term (rather than trying to satisfy the short attention spans of VC investors), 50 cascaded articles published in year 1 doesn’t sound that bad to me. One would need to supplement those articles with a strong commissioning program, particularly calling in leaders of the society to supplement the growth of their own group’s journal.

“Other transferred-then-rejected papers were published in disciplinary journals (A, B, C) or found their way into megajournals.”

My question: what is a disciplinary journal?