COPE Case #18-03, “Editors and reviewers requiring authors to cite their own work” reads like a political thriller:

Working alone late one night, a staffer stumbles upon a decision letter in which a handling editor instructs an author to cite some of his papers. Intrigued, the staffer digs deeper and finds a pattern of systematic abuse that involves a gang of crony reviewers willing to do the handling editor’s bidding and evidence of strong-arming authors who put up any resistance. The staffer brings the ream of evidence to the Editor-in-Chief, who goes to the editorial board. Asked to explain himself, the handling editor resigns in haughty indignation. Case closed. Or is it?

All COPE cases are public; however, the texts are carefully edited to preserve anonymity. COPE is an industry advisory group, not a court of law. The purpose of publicizing cases is to educate, not adjudicate. We can only hope that the summary of actions provides a clear path for future staffers and editors dealing with similar cases of misconduct. Still, it makes me wonder just how common editorial misconduct is and whether the vast majority of cases, as with similar abuses of power, go unreported.

A 2012 survey of social sciences authors, published in the journal Science, reported that one in five respondents said they were coerced by journal editors to add more citations to papers published in the editors’ journal. Not surprisingly, lower-ranked faculty were more likely to acquiesce to this type of coercion. A follow-up study in PLOS ONE confirmed that the practice of requesting additional citations to the journal was prevalent across disciplines, although much more frequent in marketing, information systems, finance, and management than it was in math, physics, political science, and chemistry. In these studies, the researchers limited coercive citation to the journal itself, assuming that its purpose was to inflate the journal’s Impact Factor. But what if its purpose was also to inflate citations to the editor himself or to a cartel of other participating journals?

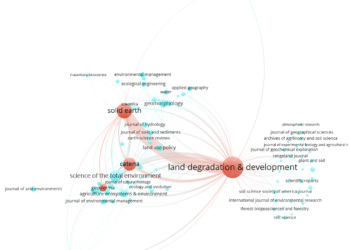

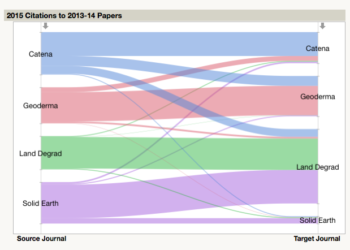

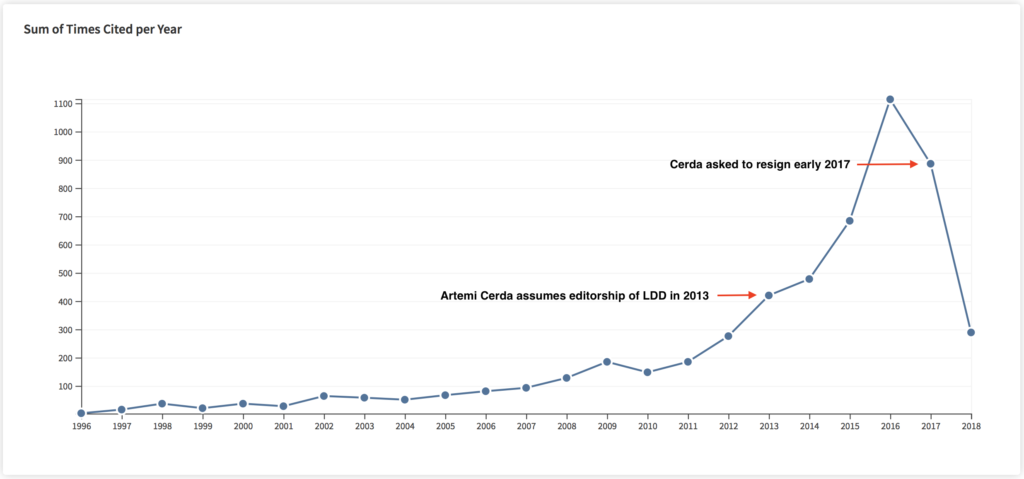

Last year, the journal Land Degradation & Development (LDD) underwent public scrutiny when its editor, Artemi Cerdà, was accused of coercing authors to cite LDD and his own papers. Within a few short years, the journal’s Impact Factor rose from 2.058 in 2013 (the year Cerdà took charge) to 9.787 in 2016, or from rank #12 among soil science journals to #1. Oddly, Clarivate celebrated LDD as one of the “world’s most influential journals of 2017.” Cerdà was forced to resign in early 2017.

Stratospheric increases in individual citation counts don’t necessarily mean that misconduct has been committed. Some authors are fortunate enough to publish a fundamentally important paper that quickly becomes highly cited. While individual papers can lead to exponential growth in author citations, single papers have little (if any) effect on an author’s h-index, a measure of an author’s entire portfolio of published papers. Thus, a better indicator of coercion might be an author whose h-index suddenly shifts from linear growth to exponential growth.
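To make the distinction concrete, here is a minimal sketch of the standard h-index calculation (the largest h such that h papers each have at least h citations). The citation counts are invented for illustration and do not describe any real author.

```python
def h_index(citations):
    """Return the largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


# Hypothetical citation counts for one author's ten papers.
portfolio = [25, 18, 12, 9, 7, 6, 4, 3, 1, 0]

# Same portfolio, but the top paper becomes a runaway hit.
with_hit = [2500] + portfolio[1:]

# Same portfolio, but every paper picks up five extra citations.
spread = [count + 5 for count in portfolio]

print(sum(portfolio), h_index(portfolio))  # 85 6
print(sum(with_hit), h_index(with_hit))    # 2560 6
print(sum(spread), h_index(spread))        # 135 8
```

In this toy example, the runaway paper multiplies total citations thirtyfold without moving the h-index, while a modest number of citations spread across the whole portfolio lifts it from 6 to 8.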

Computationally, looking for rapid rises in citation performance is not difficult: the financial market has many algorithms that look, in real time, for odd patterns that trigger buying or selling decisions. Some of these transactions are investigated by the US Securities and Exchange Commission when made by individuals with cosy relationships with the company. Given that the citation market is so much smaller and less complicated than the financial market, searching for unusual patterns in the citation literature should be much easier. Metrics and analytics are becoming big business in publishing, offering tools for evaluating authors, journals, and institutions. I’m somewhat surprised that no one has stepped into this arena offering tools for the purposes of forensics.
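As a rough illustration of how simple such a screen could be, the sketch below flags years in which an annual series (an h-index, say) grows much faster than its own recent history. The function name, the `window` and `factor` parameters, and the sample series are all assumptions made for this example; this is a crude heuristic, not any algorithm actually used in financial surveillance.

```python
def flag_acceleration(values, window=3, factor=2.0):
    """Return indices into `values` where the yearly increase exceeds
    `factor` times the mean yearly increase over the preceding `window` years."""
    increases = [later - earlier for earlier, later in zip(values, values[1:])]
    flagged = []
    for i in range(window, len(increases)):
        baseline = sum(increases[i - window:i]) / window
        # Skip flat or shrinking baselines to avoid meaningless ratios.
        if baseline > 0 and increases[i] > factor * baseline:
            flagged.append(i + 1)  # increases[i] ends at values[i + 1]
    return flagged


# Hypothetical year-by-year h-index values.
steady = [2, 4, 6, 8, 10, 12, 14]   # linear growth
sudden = [2, 4, 6, 8, 10, 16, 28]   # growth takes off in the last two years

print(flag_acceleration(steady))  # []
print(flag_acceleration(sudden))  # [5, 6]
```

A flag from a screen like this says only that the series is unusual; it cannot say why.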

I have to admit that I’m a little uneasy with developing such citation pattern detection algorithms, as doing so assumes that there is something unseemly to be found in the data. An unusual growth pattern is simply an unusual growth pattern. Nevertheless, if citation coercion is much more widespread than reported because of a fundamental power imbalance within academic publishing, providing more transparency may help staffers and victims of citation coercion speak out.

Discussion

8 Thoughts on "How Much Editorial Misconduct Goes Unreported?"

A related area is book reviews. A prickly reviewer may get belligerent in his commentary because his work (or something written by one of his cronies) is not cited in the bibliography. I’ve seen this happen, though not (yet) to me. As ever, this may be worse in humanities than in STM and other areas.

Another way of detecting this might be to compare reference lists in the final and first submitted version of a paper, and flag the decision for additional review if papers by an editor or reviewers have been added. It seems like it would be possible to automate this through publication management software?

Wouldn’t help if the people in charge are in on the racket, of course, but in cases like this one it could help the editorial board spot rogue editors.
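The comparison suggested above could be sketched roughly as follows. This is an illustration under stated assumptions, not a feature of any existing publication management system: references are assumed to be available as identifier strings (the DOIs shown are placeholders), and `authors_by_ref` is a hypothetical lookup from each reference to its author names.

```python
def added_references(first_refs, final_refs):
    """Return references present in the final version but not the first."""
    return sorted(set(final_refs) - set(first_refs))


def flag_interested_additions(first_refs, final_refs, authors_by_ref, interested_parties):
    """Map each added reference to the editors or reviewers who authored it."""
    parties = set(interested_parties)
    flagged = {}
    for ref in added_references(first_refs, final_refs):
        overlap = set(authors_by_ref.get(ref, ())) & parties
        if overlap:
            flagged[ref] = sorted(overlap)
    return flagged


# Placeholder identifiers and names, invented for illustration.
first = ["10.0000/a", "10.0000/b"]
final = ["10.0000/a", "10.0000/b", "10.0000/c", "10.0000/d"]
authors_by_ref = {
    "10.0000/c": ["Handling Editor", "Some Coauthor"],
    "10.0000/d": ["Unrelated Author"],
}

print(flag_interested_additions(first, final, authors_by_ref, ["Handling Editor", "Reviewer Two"]))
# {'10.0000/c': ['Handling Editor']}
```

A flagged addition would only prompt a second look at the decision letter; a reviewer may have suggested a genuinely relevant paper.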

For several of the editors I’ve worked with, one thing they always look for in submissions is citations to the journal to which the article was submitted. The idea is that it is a sign that the authors have selected an appropriate journal for their work: if the new work doesn’t cite anything the journal has ever published, then is it the right place for that work to be published? Sometimes yes, it’s novel and groundbreaking for the field, but often it’s a sign that they’ve chosen the wrong place for their article.

Of course, this is very different from demanding that additional citations be added…

I’ve heard the excuse that editors look for citations to their journal to see if the manuscript “fits” many times. It doesn’t hold water for me. Reading and thinking about the abstract would be much faster, and more interesting, than counting your way through the references. From that abstract the editor should be able to decide whether the subject matter is something they want to consider.

In theory perhaps, but often you’re dealing with a very broad journal, and an editor who is an expert in one particular aspect of a wide field that the journal covers. When a paper comes in that isn’t in their direct area of expertise, they still need to decide whether to send it along to an associate editor for potential review, and one way they can get a positive sense that the subject matter is in scope for the journal (no matter how “interesting” it may seem) is seeing that the new work extends previous work that was published in the journal. It’s not foolproof, nor the only method the editor should be using, but it can provide a valuable signal.

Unfortunately it is a signal that is malleable. Graduate students are taught to cite articles from the journal to which they are submitting. Coercion without coercion.

Maybe, but why send your paper to a journal that has never ever published anything relevant to your work? One of the key goals for most authors is to make sure their work reaches the right audience, and this can be a useful signal for both an author and an editor. Which is not to say that it’s infallible, or that there aren’t times when one wants groundbreaking work to reach a new audience.

“never ever published anything relevant to your work” is hyperbole and doesn’t reflect my original comment. No one cites everything that could be considered relevant because citation lists would be endless. But scholars should cite the work that inspired them, guided them, and pointed the way to their contribution. What I would like to see is editors start to add their own citations after the authors’ reference lists, sort of like a suggested additional reading list that would likely focus on articles in their own journal (although that wouldn’t be necessary). These editorial citations would not be included in citation indices, but they would let readers know about other papers on the topic.

I have no issue with editors wanting to point to articles in their journal, but I have a big issue with those papers being disguised as citations and with rejection decisions based solely on an insufficient number of such citations.