Altmetric have released their annual Top 100 list, the articles that their measures point to as having received the most attention for the year. As in 2013, the list is fascinating for what it tells us about communication between scientists, the attention paid to science by the general public, and also for what it tells us about altmetrics themselves.

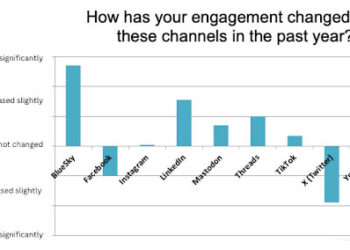

The article drawing the most attention for 2014 describes the hugely controversial study Facebook performed to see if they could emotionally manipulate users, and articles about social media appear throughout the list. This is not particularly surprising given the emphasis that Altmetric places on social media sharing, as well as the navel-gazing that dominates such forums. People using social media to talk about social media seems par for the course. Nutritional studies and various diets continue to draw a great deal of attention, as do clever joke and semi-joke articles about things like James Bond, chocolate consumption and time travelers, all of which also make the top 10.

Controversy always scores well with altmetrics. Along with the Facebook article, two retracted papers on STAP stem cells make the top 20. Schadenfreude led to an otherwise unremarkable paper on melanism being the second highest ranking article of the year because the authors and editors forgot to delete a note to themselves about whether to cite “the crappy Gabor paper.” I suspect that this would have been surpassed by the “Take Me Off Your F–king Mailing List” paper if Altmetric included predatory journals in their rankings.

And then there’s the just-plain-weird, which always gets people talking. Odd sexual development, brain-to-brain communication and mysteriously moving rocks all make the top 15. This is all well in line with what we saw last year, and then as now, if you dig past the noise, you can start to see some of the more important stories of the year that reflect the public’s concern (the Fukushima meltdown in 2013 and Ebola in 2014).

Altmetrics tell us a great deal about what captures the attention of the public, as well as what scientists are talking about among themselves. It would be interesting to try to separate out the two–which results here show the gossip within the scientific community, and which represent the interface between the community and the general public? It’s all interesting stuff, ripe for speculation as to how this reflects modern society. Also of interest, according to Altmetric there’s no evidence in this list that open access publishing makes an article more likely to get shared and discussed.

But what altmetrics don’t tell us is much about the best or the most important science done during the year. To be fair, Altmetric is very up front about this, stating:

“It’s worth mentioning again that this list is of course in no way a measure of quality of the research, or of the researcher.”

There’s general consensus that the Impact Factor should not be the primary methodology for measuring research impact. No one, however, seems particularly sure about what to use in its place. While a deep, expert-led understanding of a researcher’s work is always the ideal, practical considerations make this difficult, if not impossible in many ways. As funding agencies and research councils struggle with these questions, there are often suggestions that altmetrics should play some role, in particular as a way to measure a work’s “societal impact”.

But looking at this list, it would seem that altmetrics are not yet up to that task. People finding something funny or interesting does not necessarily translate into “impact”, no matter how fuzzy that term may be. Actual impactful research, a new diagnostic technique put into broad use or a historical examination leading to a change in legal policy, is likely to be drowned out by a paper claiming that dogs line up with magnetic fields when they defecate (number 5 for the year). How do we separate the serious wheat from the chaff when the chaff performs so much better in the favored measurements?

These year-end lists serve as great reminders that altmetrics isn’t quite there yet. The kitchen sink approach, including nearly anything that can be measured, still needs refinement. The big questions remain–what do measures of attention really tell us, does this in any way correlate with importance, quality or value to society, and is there something else we should be measuring instead?

Discussion

20 Thoughts on "Altmetric’s Top 100: What Does It All Mean?"

Although “the crappy Gabor paper” was evidently a careless oversight, it’s an interesting one because it points to a flaw in the most traditional metric of all — citation count. (For whatever it’s worth, I think that despite its flaws, it remains the least inadequate of all metrics.)

The issue here is that we cite papers for many different reasons. The two main ones, perhaps, are appropriate for counting. First, giving credit for an idea that someone else had or explained before, as when I write “Sauropods were not primarily aquatic but terrestrial (Coombs 1975)”; and second, claiming authority for an assertion that I am making without giving my own evidence, as when I write “animals do not habitually hold their necks in neutral pose (Vidal et al. 1986)”.

But we also cite papers just because they’re there and it seems churlish not to — as in the case of the crappy Gabor paper. So I might write “Sauropods were the largest terrestrial animals (Upchurch et al. 2004)”. I am not crediting them for that insight, nor claiming their support for my assertion — everyone has known it’s true for a century. I am just tossing in what seems like an obligatory citation in an introduction. Certain papers accumulate a lot more citations than they “deserve” for this reason.

And finally, there’s our old friend, citing a paper because of its flaws, as in “animals do not habitually hold their necks in neutral pose, as Stevens and Parrish (2005) have claimed”.

I’ve often wished that we had a way to distinguish between these four modes of citation (and others that I’ve no doubt missed). I don’t have a solution to propose, and I’d be pleased to hear anyone else’s.

Have you heard of SocialCite?

http://www.social-cite.org/

Mike, one can to some degree distinguish these different types of citations by a combination of where they occur in the article plus semantic analysis. There is active research along both lines. But what is the value of the distinction as far as impact is concerned? Are you proposing some sort of complex weighted impact estimating model, instead of the rough measures we already have?

Well, I’m not exactly proposing anything. But it certainly seems to me that the first two kinds of citation really do indicate that the cited paper is “better”, whereas the last kind suggests it’s less good. You could imagine a metric that scores +1 for citations of the first two types and -1 for the last type (and nothing for the third type). My guess is that it would better match subjective expert opinion of how important and valuable the paper is.
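To make the idea concrete, here is a minimal sketch of that scoring rule in Python. It assumes each citation has already been labeled with one of the four modes described above, which is the hard part and is not attempted here. The names `CitationMode`, `WEIGHTS` and `weighted_citation_score` are hypothetical, invented purely for illustration.

```python
from enum import Enum


class CitationMode(Enum):
    """The four citation modes from this thread (labels are illustrative)."""
    CREDIT = "credit"          # crediting an idea someone else had first
    AUTHORITY = "authority"    # leaning on the cited paper as evidence
    OBLIGATORY = "obligatory"  # cited because it is there
    CRITICAL = "critical"      # cited because of its flaws


# +1 for the first two modes, 0 for the third, -1 for the last.
WEIGHTS = {
    CitationMode.CREDIT: 1,
    CitationMode.AUTHORITY: 1,
    CitationMode.OBLIGATORY: 0,
    CitationMode.CRITICAL: -1,
}


def weighted_citation_score(modes):
    """Sum the weights of a paper's incoming citations."""
    return sum(WEIGHTS[mode] for mode in modes)


# Hypothetical paper with five incoming citations, labeled by hand.
example = [
    CitationMode.CREDIT,
    CitationMode.AUTHORITY,
    CitationMode.OBLIGATORY,
    CitationMode.CRITICAL,
    CitationMode.CREDIT,
]
print(weighted_citation_score(example))
```

A raw citation count would give this made-up paper 5; the weighted version gives it less, because one citation is neutral and one is negative.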

I think the last type (negative citation), while identifiable semantically, is relatively rare. I am not sure that the third type is defined well enough to even be meaningful, much less identifiable. Thus trying to factor out these two types probably would not be worth the effort. It is the case, however, that citations do not measure who has directly influenced whom and this may be their greatest weakness as evaluation tools. Most citations occur in the context of explaining the background for the research (your first type) and these are often found after the research is done. Most of the rest are, as you point out, providing links to support specific stated facts.

What bothers me is that there are a host of citation-based metrics for individual articles and authors, but people talk as though the IF were the only metric in use. That, I think, is simply false. Beyond that I think that there are semantic ways to track the flow of ideas and they would be superior to citations. New ideas always change what we say.

What I find most interesting about the list is the different metrical patterns that get different items onto the list, because they suggest different flows of information in society. For example, some have lots of news coverage but few tweets, while others have the opposite. Why may be an important scientific question.

As for importance, it looks like society has many things to do with science besides ingesting serious stuff, laughing for instance. So societal importance is different from scientific importance, and if altmetrics are being sold as measures of the latter then that is incorrect.

I do have to point out that if dogs are indeed sensitive to the magnetic field that is potentially very important scientifically. That paper is part of a series of studies of different mammals, doing different things. Of course it is also very funny, so here we have a case of widespread societal awareness of potentially important science because of the flow of humor. Is this process good or bad? And is that an important new question, thanks to altmetrics?

I can see the newsroom now. Editor: “We have to fill a 6″ double column! Frank, go do a story on some science thing.” Frank: “Any ideas, boss?” Editor: “Nah, just something weird – you know those science types, frizzy hair and thick glasses.” Frank: “OK.”

Let’s see, here’s something on something Facebook did, and lots of people use Facebook, and it looks like it will fill 6 inches double column! And so the altmetric blossoms.

Yet, for all the talk in political circles about climate change that topic did not make the top 15! I guess there is just too much math when it comes to climate change.

Lastly, when I see this list I am reminded of the SNL skit in which Chevy Chase is reading the news when someone unexpectedly hands him a piece of paper torn from a teletype. He stops, gets very serious and says: Scientists have just discovered that saliva causes stomach cancer, but only after being swallowed in small amounts over a long period of time.

Altmetric do argue from their Top 100 that OA articles are no more likely to be shared and discussed. Their evidence is that “just 37 of the 100 articles featured were published under an Open Access license.”

But this is roughly double the OA share of all articles (estimated at 15-20% recently by Richard van Noorden):

http://scholarlykitchen.sspnet.org/2014/11/17/additive-substitutive-subtractive/#comment-148071

Thus, OA articles are in fact roughly twice as likely to be highly shared and discussed as TA ones.

This is consistent with Altmetric’s previous study, which found that OA articles in Nature Communications received more attention than TA ones:

http://www.altmetric.com/blog/attentionoa/

It’s hardly surprising that people are more likely to share things that can legally be shared.
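The arithmetic behind the share comparison in this comment can be spelled out in a short sketch. It uses only the figures quoted above (37 of 100 articles, and an overall OA share of 15-20%); the function names are hypothetical. It separates two numbers that are easy to blur: how over-represented OA is in the list, and how much more likely an OA article is to make the list than a TA one. It describes a correlation only and says nothing about cause.

```python
def over_representation(oa_in_top, top_size, oa_share_overall):
    """Ratio of the OA share in the list to the OA share of all articles."""
    return (oa_in_top / top_size) / oa_share_overall


def relative_likelihood(oa_in_top, top_size, oa_share_overall):
    """P(in list | OA) divided by P(in list | TA).

    The total size of the literature cancels out, so only the overall
    OA share is needed.
    """
    ta_in_top = top_size - oa_in_top
    ta_share_overall = 1 - oa_share_overall
    return (oa_in_top / oa_share_overall) / (ta_in_top / ta_share_overall)


# Figures quoted in the comment: 37 of 100, overall OA share of 15-20%.
for share in (0.15, 0.20):
    print(
        f"OA share {share:.0%}: "
        f"over-representation {over_representation(37, 100, share):.2f}x, "
        f"relative likelihood {relative_likelihood(37, 100, share):.2f}x"
    )
```

Under these assumptions the "roughly double" figure is the over-representation; the per-article likelihood ratio comes out somewhat higher, because the 63 TA articles are drawn from a much larger pool.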

I never know quite what to conclude from observational studies like this. Phil Davis has written about some of the dangers in attributing causation to OA when one sees a correlation like this, because it’s so difficult to control for many other factors that might be playing a role:

http://scholarlykitchen.sspnet.org/2014/08/05/is-open-access-a-cause-or-an-effect/

The papers at Nature Communications may be under the same selection bias (wealthy labs that can afford $5K for an APC may be doing better work than poor labs, labs may save their OA funds for only the papers they think are really important) as seen in other observational studies.

Further confounding any such study, I know that at OUP if any article gets any sort of press coverage or action on social media, we almost always immediately make it freely available, and this is a fairly standard practice for most publishers. Some of the more liberal acceptance policies at some OA journals (methodological soundness versus significance) may lead to more of the sorts of funny or weird articles that draw social media attention than the demands that other journals place on papers.

Also, since Altmetric does not cover the entirety of the research literature, one would have to adjust one’s comparative numbers to reflect the proportion of OA journals among the total number of journals that Altmetric samples, rather than looking at the entire market.

Right now, all of the altmetrics observations are interesting, some are tantalizing and most are curious. The world is full of curious things to observe.

What can and will turn this into science is taking the observation and trying to uncover its cause, and test the mechanism.

This is what Phil Davis did in his randomized controlled studies of OA. He addressed not the observation that OA articles had more citations, but the speculation that OA caused the articles to have more citations.

It is endlessly fun to guess at why and how any particular item gets on the Altmetric Top 100, but until it is a testable hypothesis, we still don’t know what it means. And this will take some doing – probably with the best, first approach being to parse the data inputs a bit: what articles are most tweeted? What do they have in common? Which are most picked up by news? Do these differ from the blogged ones? Does a place in an “academic sharing” top 100 correlate with later citation? Is academic sharing an earlier time-point in the same information usage? (Privacy concerns could limit this, but I’d love to see if who shared = who cited.)

D Wojick, above, calls this the “different metrical patterns that get different items onto the list, because they suggest different flows of information in society.” But this seems to be a necessary step towards making altmetric science a contender with citation science.

Altmetric has made the data file available as a csv download on Figshare (thanks to Euan Adie and Cat Chimes!): http://figshare.com/articles/Altmetric_data_on_top_100_articles_by_citations_in_2014/1230027

Thanks Marie – to clarify, that file linked above is Altmetric data for the top 100 most cited articles of all time (see our blog post on this at http://www.altmetric.com/blog/most-cited-papers/).

The dataset that we used to populate our Top 100 list (ranked by the amount of attention alone) is available here: http://figshare.com/articles/Altmetric_Top_100_2014_article_data/1269155

“There’s general consensus that the Impact Factor should not be the primary methodology for measuring research impact. No one, however, seems particularly sure about what to use in its place.”

No single indicator can ever be useful on its own. The JIF can’t. Citation counts can’t. Altmetrics (***when considered only as a single-number indicator, rather than a group of interrelated metrics***) also can’t.

Metrics _can_ be useful when considered as a group of metrics that can tell us interesting or important things about the different dimensions of impact research has had.

That is–Facebook shares alone don’t tell us much, but Facebook shares plus certain F1000 ratings can tell us that it’s likely that your work is being found useful by educators [1]. Or your work might be considered a “popular hit” when it’s got a lot of shares on Twitter and Facebook but no cites, whereas it’s likely an “expert pick” if it’s got citations and F1000 ratings [2].

These are called composite indices, and they’re much more useful for understanding research impact than single numbers alone. And as David W. points out, when you add semantics to the numbers, things can get even more meaningful.
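As a rough illustration of how several metrics might be read together, here is a minimal sketch of the “popular hit” versus “expert pick” distinction described above. The thresholds, field names and sample numbers are all assumptions made up for this example; they are not taken from the cited papers or from any real altmetrics service.

```python
from dataclasses import dataclass


@dataclass
class ArticleMetrics:
    """Hypothetical per-article counts; field names are illustrative."""
    tweets: int
    facebook_shares: int
    citations: int
    f1000_ratings: int


# Assumed cut-off for "a lot of shares"; a real index would need to
# calibrate this by field and by article age.
SHARE_THRESHOLD = 100


def impact_flavor(m: ArticleMetrics) -> str:
    """Label an article by reading several metrics together."""
    social_shares = m.tweets + m.facebook_shares
    if m.citations > 0 and m.f1000_ratings > 0:
        return "expert pick"
    if social_shares >= SHARE_THRESHOLD and m.citations == 0:
        return "popular hit"
    return "unclassified"


# Made-up numbers, for illustration only.
print(impact_flavor(ArticleMetrics(tweets=900, facebook_shares=300,
                                   citations=0, f1000_ratings=0)))
print(impact_flavor(ArticleMetrics(tweets=12, facebook_shares=3,
                                   citations=40, f1000_ratings=2)))
```

The point of the sketch is only that the label depends on the combination: neither the share count nor the citation count alone decides it.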

Altmetrics is an evolving field of both study and practice. Let’s not throw the baby out with the bathwater and dismiss altmetrics as being “not yet up to the task” because some of the current implementations of metrics don’t always show what we want them to. (Though, to be clear, the Altmetric 2014 list does show us many useful things that–were we to look at citations alone–we otherwise wouldn’t know about.)

[1] http://arxiv.org/abs/1409.2863

[2] http://arxiv.org/html/1203.4745v1

The top chemistry paper … “Volatile disinfection byproducts resulting from chlorination of uric acid: Implications for swimming pools” made the list because of over 1000 tweets!

On reflection, I think several basic questions arise from this data. First, is the scientific community interested in the broader social impact of its work, or just the scientific impact? For example, is awareness of the research per se, even as a joke, of value to it?

Second, and more relevant to this blog, what about the publishing community? This data tells us something about those aspects of scientific output that non-scientists are directly interested in and there are far more non-scientists than scientists. Are there new publishing opportunities here? New opportunities for libraries?

Altmetrics are zeroing in on a specific set of information flows. The big question may be how important those flows are, not how important altmetrics are. Citations are looking at the direct flow of scientific information from scientist to scientist. Altmetrics are looking at the direct flow from scientists to non-scientists. The “alt” is in the flows.