[Editor’s note: This is the edited text of a presentation that Angela Cochran gave as a keynote at JATS-Con 2016, the annual user group meeting for the Journal Article Tag Suite used for XML creation. This talk was given on April 12, 2016, at the Lister Hill Auditorium at the National Library of Medicine in Bethesda, MD.]

At least once a month my colleagues and I walk out of a meeting and someone says: “Remember when we used to be publishers?” It’s become the obvious joke when all we talk about is metadata, digital content distribution, e-commerce solutions, or content licensing issues.

What we are experiencing is not only a shift in how we talk about content but also a shift in skill base. The directors in our publications department cover acquisitions, production, marketing, business operations, and publishing technology. The publishing technology group is only 5 years old but consists of long-time employees who have shifted, mostly from production, to a technology focus.

While each of us directors has clear product or service lines, it’s safe to say that we have all gained significant technology chops in the last 5-8 years. All of us have a basic to comprehensive understanding of how databases work. All of us have medium to advanced knowledge of XML. All of us know what a taxonomy is and how best to apply it to our content. All of us know a whole lot more about e-commerce than we would ever want. All of us think we know about web design.

While it is great that we all have exposure to these issues and concepts, not a single one of us has ever had any formal training in any of this. Everything is on-the-job learning: lots of Googling; lots of webinars; lots of conferences and workshops; lots of calls to our vendors and colleagues; lots of trial and error.

As this technology has invaded our happy little publishing space, it is usually my boss that reminds us, and more importantly others in the building, that this is not what we do. We are not back-office people. We are not developers or solutions architects. We are not content strategists or web designers.

So sometimes we mess up. We have built an e-commerce system no fewer than three times. It’s still not perfect, so there will be a fourth. We have been through major platform upgrades twice as well as a move. We are currently working on the third major upgrade and redesign. We have selected vendors that have promised the moon and let us down. We have been unrealistic with other vendors, which forced us to examine our own standards.

Last week I had the honor of addressing colleagues on the other side of the world at the ISMTE Asia Meeting in Singapore. I was asked to organize a session about platforms, specifically whether to build or buy and also transitions between platforms. As Rick Lee from World Scientific and I were both sharing tales of woe, there were lots of heads nodding. Clearly others were identifying with the pains. There was an acknowledgement that even standards aren’t always standard. If you think your tagging is standard, just wait until you try to move platforms. I can assure you that this is a global problem.

Another session, organized by Tony Alves of Aries Systems, looked at initiatives such as Crossref, ORCID, and CRediT. These systems were described by one attendee as the “plumbing” of the scholarly publishing system, though I prefer a more civil-engineering-related term: infrastructure.

In some retweeting back and forth, a critic of publishers made a comment to me about publishers being slow to innovate. My response was that this was a load of garbage (well, I used a different word, but you get the idea). The industry standards that publishers are creating, supporting, or paying for add up to an incredibly sophisticated and complex system.

Just take Crossref DOIs as a start. Crossref formed in 2000 as a publisher collaboration to implement the DOI system created by the International DOI Foundation as a way to provide persistent links to digital content. That was the same year that I started working in journals, having been a book production person first. Adoption of DOIs was slow, as it is for most new technical standards. Publishers or their vendors were now being asked to tag metadata in a structured way, to insert specific information into that metadata, and to deposit that metadata with a third party. Back then, linking references was a dream. Now, it’s functionality that is taken for granted. Reference links are enormously helpful to readers.

I think that anytime publishers are asked to drop everything and implement new standards, you will hear groans from the bleachers. It’s hard enough to get content imported into production systems, copyedited, and competently tagged; adding something new is asking for trouble. New standards also suffer from some “chicken and egg” problems. ORCID is a good example of this.

ORCID is attempting to solve a number of identified problems in our ecosystem but the one of most interest to publishers is the disambiguation of author names. The trick with ORCID implementation is that unlike DOIs, it requires participation by our authors, many of whom don’t understand the benefits and couldn’t care less about our plumbing problems. So we ended up in a situation where authors couldn’t see the benefits because ORCIDs weren’t appearing in the publications and profiles weren’t being automatically updated. Of course, there were no ORCIDs in the publications because the authors weren’t getting them.

In the early days of ORCID, I signed on to be an ambassador to try and educate civil engineers on the value of ORCID. Many of my editors created profiles, literally because I told them to. They found it difficult to add their publications. It’s been a few years but now we can auto-populate ORCID records with publications. Sadly, it may be too late for me to get these original adopters back into their profiles. I invited them to participate too soon.

It definitely takes time. One ORCID business model was to get institutions to use ORCID as a persistent identifier. The institutions were “creating” ORCIDs for people. This had the potential to solve the university problem of not being able to follow their alumni out to the real world. While this model helped keep ORCID afloat with cash and certainly added registrants, ORCID has discontinued this model due to low implementation by the end users — basically the faculty and/or students were getting ORCIDs whether they wanted one or not, were not understanding the benefit, and were never claiming their profile. A database full of empty or inactive profiles is not helpful to anyone.

New initiatives require a tipping point: something that pushes them from good idea to industry standard. Now that some funders and publishers are requiring ORCID, we may have hit this tipping point.

The Open Funder Registry, formerly called FundRef, is another system that is improving but still causing challenges for publishers. Having a disambiguated list of funding bodies is a clear benefit to those funding agencies who have no way of knowing how many papers are published as a result of their grants. While I still question why this is a problem for publishers to address, it is a requirement for participation in CHORUS. The issue today is that it is difficult for publishers to tease this information from the authors.

Publishers can use a Crossref widget or another kind of tool provided by manuscript submission systems, but authors still have difficulty finding their funder in the list of over 11,000 agencies. What is often entered instead are free-text agency names. Further, publishers are finding that what the author selects from the funding widget when they submit a paper does not always match what the author wrote in the acknowledgements of the paper. This leaves the publisher or their vendors to either compare the two sources or abandon the widget altogether, pull the information from the text, and query the authors in proofs. Once the funder is clear, the publisher can find the funder ID, populate the tags required, and insert the information into the metadata and Crossref feed. The tags required include the funder name as included in the registry and the associated ID.
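To make that reconciliation step concrete, here is a minimal sketch in Python. It is not how Crossref or any submission system actually does it; the two-entry registry, the function names, and the similarity cutoff are all assumptions made for illustration, and the funder IDs shown should be checked against the real registry before anyone relies on them.

```python
import difflib
import re

# Hypothetical two-entry excerpt of a funder authority file (name -> funder ID).
# A real list has thousands of entries; verify IDs against the registry itself.
SAMPLE_REGISTRY = {
    "National Science Foundation": "10.13039/100000001",
    "National Institutes of Health": "10.13039/100000002",
}


def normalize(name):
    """Lowercase and drop punctuation so minor variations still compare well."""
    return re.sub(r"[^a-z0-9 ]", "", name.lower()).strip()


def match_funder(free_text, registry=SAMPLE_REGISTRY, cutoff=0.8):
    """Return (registry_name, funder_id) for the closest name, or None."""
    by_norm = {normalize(n): n for n in registry}
    hits = difflib.get_close_matches(
        normalize(free_text), list(by_norm), n=1, cutoff=cutoff
    )
    if not hits:
        return None
    name = by_norm[hits[0]]
    return name, registry[name]


def reconcile(widget_choice, ack_funders, registry=SAMPLE_REGISTRY):
    """Compare the widget selection with funders named in the acknowledgements.

    confirmed: acknowledgement funders found in the registry
    to_query: acknowledgement funders to raise with the author in proofs
    widget_mismatch: True when the widget selection is not among the confirmed
    """
    widget = match_funder(widget_choice, registry) if widget_choice else None
    confirmed, to_query = [], []
    for text in ack_funders:
        hit = match_funder(text, registry)
        if hit is None:
            to_query.append(text)
        elif hit not in confirmed:
            confirmed.append(hit)
    mismatch = widget is not None and widget not in confirmed
    return {
        "confirmed": confirmed,
        "to_query": to_query,
        "widget_mismatch": mismatch,
    }
```

Calling `reconcile("National Institutes of Health", ["National Science Foundation", "Acme Widget Fund"])` flags the made-up "Acme Widget Fund" for an author query and reports that the widget selection disagrees with the acknowledgements. Fuzzy matching only narrows the list; a person still has to confirm the funder before the name and ID go into the tags.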

There will be errors. We know this because while publishers are starting to include funder information in their Crossref feeds, the failure rate on funding information is very high. This will improve over time and it really goes to show how difficult it is to create an authority file, develop tools for people to navigate the authority file, train authors how to use the list, and train production staff or vendors how to tag the materials.

New tags have also been added for license information so that machines can tell what is open access, free for all, free with registration, and so on. These licenses are important for all public access initiatives as well as text- and data-mining requests.
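As a rough illustration of what "machines can tell" means here, the sketch below reads a made-up permissions fragment and reports what it finds. The element names follow the NISO Access and License Indicators recommendation as I understand it to be used in JATS; the namespace URI, the sample XML, and the crude "open license" heuristic are assumptions, so check them against the current schema before reuse.

```python
import xml.etree.ElementTree as ET

# Assumed namespace for the NISO Access and License Indicators (ALI) elements.
ALI = "http://www.niso.org/schemas/ali/1.0/"

# Made-up fragment for illustration only; real articles carry more detail.
SAMPLE_PERMISSIONS = """<permissions xmlns:ali="http://www.niso.org/schemas/ali/1.0/">
  <ali:free_to_read/>
  <license>
    <ali:license_ref>https://creativecommons.org/licenses/by/4.0/</ali:license_ref>
  </license>
</permissions>"""


def access_summary(permissions_xml):
    """Summarize the access and license indicators found in a fragment."""
    root = ET.fromstring(permissions_xml)
    # free_to_read is an empty flag element: present or absent.
    free = root.find(f".//{{{ALI}}}free_to_read") is not None
    # license_ref carries the URI of the license that applies.
    refs = [
        el.text.strip()
        for el in root.iter(f"{{{ALI}}}license_ref")
        if el.text
    ]
    # Heuristic (an assumption): treat any Creative Commons URI as open.
    open_license = any("creativecommons.org" in ref for ref in refs)
    return {
        "free_to_read": free,
        "license_refs": refs,
        "open_license": open_license,
    }
```

A text-mining service or a public access dashboard could run something like `access_summary(SAMPLE_PERMISSIONS)` across a corpus to separate free-to-read content from subscription content without a human reading each license statement.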

What I have been talking about so far pertains mostly to journal content, but standards for book content are crucial too. Publishers, particularly society publishers, are deeply concerned about what effect public access mandates will have on their subscriptions. Book content offers alternatives.

Society publishers are looking for other revenue streams related to content, and better digital products and tools around book content are one possibility. Publishers are also looking for value-adds to the journal article Version of Record so that users will have a reason to want to access the subscription-only version instead of the accepted manuscript in all its double-spaced and typo-ridden glory. Being able to more richly link book content and journal content is one way to do this, and having tagging standards that are interoperable is crucial.

For those of us who publish Standards with a capital “S” (meaning technical standards for things like buildings, boiler systems, and airplane engines), there are remarkable opportunities to provide digital versions with really neat tools. Being able to connect, within these digital products, the journal research that lays the foundation for the standards brings a wider audience to journal content.

Implementing standards, or installing plumbing, can be slow, difficult and potentially expensive. It may not seem obvious at the beginning that these changes are the gateway to future innovation.

Let’s look at some of the tools or services that are only possible because of the rich infrastructure. CrossMark provides additional information about related content helping to ensure that the reader is accessing the most recent content or can easily find corrections and retractions. Finding corrections or retractions is appallingly substandard on some journal websites. CrossMark goes the extra step by providing this information on locally saved PDFs as well.

Pretty much the entire CHORUS system is based on this existing infrastructure, which is why it’s not 10 times more expensive than it is. I am not trying to make it sound like connecting articles, DOIs, Funder IDs, and licensing is easy, but the cost of implementation may have doomed the project had it not been for the existing plumbing.

Innovations are coming from third parties as well. Kudos, Mendeley, colwiz, JournalMap, ReadCube and many, many others are using information from Crossref to power these new services. The ability to make content more discoverable by including it in these products, along with significant cost savings for innovators who don’t need to keep building the same thing over and over again, has really lowered the barriers to innovation.

There are downsides to this proliferation of innovation. This problem smacks me upside the head every time an author or editor says that he has a ResearchGate account so he doesn’t see the need for ORCID. I start by trying to explain that ORCID is part of the scholarly publishing infrastructure and ResearchGate is not. Once again, our plumbing problems are not of concern to our authors.

My next tactic is to explain that ResearchGate is a for-profit entity making money off selling the information put online. Seeing as this is the exact same business model as Facebook, LinkedIn, Google, and Twitter, I lose that argument as well. We make calculated risk decisions all the time about what we share and how others can use it.

Taking the proliferation problem a step further, I am not at all convinced that the typical user knows whose tools they are using anyway. Academia.edu is a prime example. Most users don’t know that there isn’t anything .edu about them. They purchased that domain from someone who bought it before non-educational entities were restricted from having .edu suffixes.

If users don’t know who is providing the tool, they certainly don’t know which of those tools have publisher backing or cooperation.

Publishers very much want to be in the digital coffee shop where their readers hang out. Social sharing of content is definitely a defining issue of this decade. Publishers need to choose how and whether to get into this space. Elsevier made the decision to buy Mendeley even though it was chock full of copyright-violating material. I suppose there were some conversations about whether to buy it or sue it. But now that they have bought it, they are investing heavily in it with staff and development. If you have not received your Mendeley amendment to your Elsevier agreement yet, keep an eye on your inbox and make sure you give it a thorough review.

So instead of some unknown third party facilitating sharing of your content, Elsevier is doing it. Individual publishers will need to decide if this is in their best interest or not. On the plus side, Elsevier is promising usage statistics for all this sharing by the end of the year, something you won’t get from ResearchGate or Academia.edu.

If you don’t have $80 million to buy your local sharing hotspot, you can build a new one and hope the cool kids come and hang out there. Again this requires a lot of investment and to date, it seems only large societies, such as AAAS with Trellis and American Chemical Society with ChemWorx, have the resources to build these out.

While it is exciting to see the proliferation of innovation from super creative third-party start-ups, the light between some of the service offerings is thin. It wouldn’t be a proper publishing conference if we didn’t have a “Lightning Session” featuring 5-8 start-ups giving their 5-minute elevator speech. Maybe one or two of them have an idea worth pursuing, but the rubber hits the road at some point and it is almost always over money. Each service is designed to solve a problem our users might have; but because researchers are not apt to pay for these services, the start-ups want publishers to foot the bill. Again, it is up to individual publishers to decide whether the investment in money (and time in coaching these start-ups) is a good fit for them. Remember that most of the entrepreneurs are not publishing people. They are mostly researchers or postdocs. This makes them well versed in day-to-day issues for researchers but not at all knowledgeable about the infrastructure that underlies our content.

Society publishers are not known for being risk-takers, nor do they have a lot of money sitting around waiting for investment opportunities to come along, and so we wait to see which of these new start-up tools will survive. In the meantime, we take our chances that our users won’t find a home in a neighborhood that we don’t particularly like, such as ResearchGate or Academia.edu.

Applications for tagged content are now expanding to advanced analytics. This is quite exciting. Companies like ScholarlyiQ and Squid Solutions are providing advanced usage analytics, giving us a lot more information about who is using the content and how.

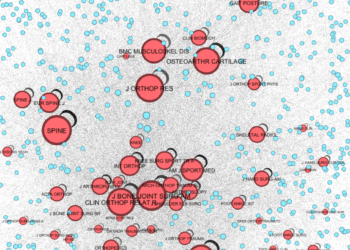

Content analytics such as HighWire’s Vizor products and similar tools in development elsewhere can tell us things that only the largest publishers had resources to tease out before. Knowing how your articles are related to each other, how they relate to competing articles, and what happens to the papers you reject can be game-changing information for any editorial office.

I am certainly not an expert on all things digital, but I can’t think of another industry that has the kind of infrastructure we have in scholarly publishing. It’s certainly not free. We all pay membership dues to Crossref and hopefully ORCID and NISO, and perhaps CHORUS. We also invest our staff resources in order to implement new standards.

John Oliver did a piece last year on Last Week Tonight about our country’s failing infrastructure. He highlighted the one thing civil engineers know and that we can take a lesson from: infrastructure is not always sexy, but it is vitally important in order to move civilization forward. As publishers, we either need to innovate or partner with those that will. Starting with structured content and maintaining our own infrastructure is the first step in the right direction.

Discussion

7 Thoughts on "Integrate to Innovate: Using Standards to Push Content Forward"

As I spend a lot of words in the above post on Crossref and the value of the DOI system, I would like to acknowledge the enormous contributions of Norman Paskin, the founder of the International DOI Foundation, who passed away last week: http://blog.crossref.org/2016/04/dr-norman-paskin.html

Excellent article! The slowness of authors to adopt innovation is one of publishing’s biggest barriers. Authors’ traditionalism extends even to the content itself. I tried for years in my journal to get authors to take advantage of newer display technology: photos, videos, interactive graphics, etc. Nothing! Authors would load their conference presentations with whiz-bang stuff but stick with the tired old PDF when it came to the journal. (This when every software package includes great on-line tutorials!)

Any ideas how we can get authors more willing to innovate?

I think we need to prove the value in spending the time to do this. I like some of the tools publishers are making available to authors, such as the video abstract, which is mostly a narrated PowerPoint that anyone can make. Podcasts were pushed for a while but it turns out no one wants to listen to an author read their paper. Kudos provides a space for authors to write a plain language summary but there is still a huge debate on exactly who is the target audience for scholarly papers!

I think there will have to be undeniable proof that your paper will be *cited* more if you do the extra work. Period. I can’t think of another carrot that would encourage most authors to go the extra mile and write a summary, post a video, write a blog about a paper, or even tweet it. The good news is that there are attempts to quantify this activity with initiatives like Impact Story, Kudos, and Altmetric. But again, the carrot is citations, not views or retweets or media mentions.

I would say that the main carrot is not the measurement (citations) as such, but the usage and application of the research. Citations have just become the (overvalued?) convenient illustration of that usage – but it is only one form of usage, and as such, much of the achievements of a piece of research go unrecorded, though not unnoticed or unvalued by the authors.

As we are painfully aware, the ‘citation’ has a certain esteem attached to it, and serves an assessable function, but most researchers I have spoken to value constructive application and practical use of their work over the accrual of citations per se.

I think we forget that the end of the project is the paper and the person writing the paper is on to something new. Innovation of presentation is costly in time. Time, that thing we can neither buy nor ever recover, is dear.

My brain is spinning trying to process everything in this great article. I never heard of Squid Solutions, CrossMark, ScholarlyiQ, or Impact Vizor before. It is a major challenge trying to get a handle on the various bibliometric and altmetric applications in the publishing world at this time. I am the engineering librarian at the U of Alberta, and am slowly working with the Faculty of Engineering to help individuals create ORCID records. This is becoming more critical as a growing number of publishers require their authors to have ORCID IDs before accepting a paper for publication. IEEE will be requiring this sometime in 2016.

I was not aware that ResearchGate is a for-profit entity, nor that academia.edu isn’t a true .edu site.

Thank you for this article.