When Artemi Cerdà assumed the position of Editor-in-Chief of Land Degradation & Development (LDD) in 2013, it ranked 10th among 34 soil science journals in the Journal Citation Reports (JCR) with an Impact Factor of 1.991. Four years later, the journal ranks 1st with an Impact Factor of 9.787.

It is rare that journals go from rags to citation riches within a few years. There is usually an explanation. In this case, LDD’s meteoric rise to prominence was fueled by Cerdà abusing his position of power to coerce authors into citing LDD and his own papers.

While the European Geosciences Union investigated and documented the extent of the editor’s abuse within its own journals, neither LDD nor any journal that participated in artificially inflating LDD’s Impact Factor was suppressed in this year’s JCR. Oddly, Clarivate Analytics, the publisher of the JCR, even celebrated LDD as one of the “world’s most influential journals of 2017.”

Threshold levels for JCR suppression are set exceedingly high, so high that even when a journal nearly quintuples its Impact Factor and moves from 10th to 1st place, it may not be sufficient to trigger suppression. According to an official response from Clarivate, reported on the Retraction Watch website, LDD did not satisfy all conditions for suppression.

Nevertheless, JCR’s own data suggest that suppression is an effective tool for curbing high rates of self-citation, even years after a journal is reintroduced.

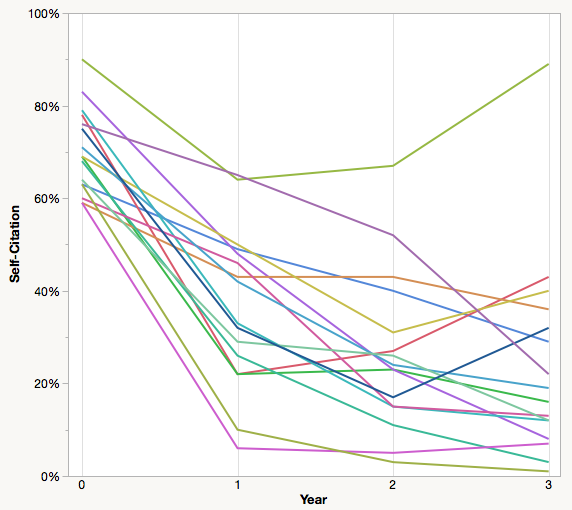

In the 2013 JCR, 16 journals were suppressed for excessive levels of self-citation. The average self-citation rate affecting their Impact Factors was 70%, with one journal, Law Library Journal, at 90% (see figure).

In the first year of reintroduction, average self-citation levels dropped to 37%; by year 2 to 26%; and by year 3 to just 24%. Three years after suppression, just one of the 16 journals, Law Library Journal (top green line), had returned to pre-suppression levels.
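To put those averages in relative terms, here is a minimal sketch of the arithmetic. It uses only the four averages quoted above; the names `rates` and `relative_drop` are illustrative, and the calculation says nothing about how the JCR itself derives these figures.

```python
# Average self-citation rates (percent) quoted in the post for the
# 16 journals suppressed from the 2013 JCR.
rates = {
    "suppression year": 70,
    "year 1": 37,
    "year 2": 26,
    "year 3": 24,
}

baseline = rates["suppression year"]


def relative_drop(rate, base=baseline):
    """Percentage decline of `rate` relative to `base`."""
    return 100 * (base - rate) / base


for label, rate in rates.items():
    print(f"{label}: {rate}% (down {relative_drop(rate):.0f}% from baseline)")
```

By this reckoning, the average self-citation rate fell by roughly half in the first year after reintroduction and by about two-thirds by year 3.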

Based on JCR citation data, suppression does appear to have a long-lasting influence on the citation patterns of journals. This is not surprising given how important Impact Factor rankings are to many authors.

While the JCR has a stick to prod editors into behaving, it has incidentally offered them a carrot in the form of a simple numbers-based guide to how suppression decisions are made.

Given the attention that LDD has received in the media, I’m particularly concerned that editors and publishers will view citation manipulation, not as a matter of ethics, but as a matter of accounting. Clarivate’s decision on LDD has legitimized the position that manipulating the citation record — even through coercion — is acceptable behavior just as long as you don’t do too much of it. Playing by these rules, an editorial board may knowingly turn a blind eye to unethical behavior if they avoid the cast of aspersion and return the right results.

Discussion

3 Thoughts on "Does Journal Suppression Reduce Self-Citation?"

Nice guys finish last. That’s about the only thing I can say to express my dismay over the outsized power of the impact factor, the fact that the corporation that most benefits from this is also its chief arbiter, and the extent that ethics seemingly must be bent in order to grow impact factor.

We’re currently wrestling with ways to ethically increase self-citations in the journals I manage. We must. Our competition has 2-3 times more self-citations, which has contributed to their passing us in the rankings even though we increased our own impact factor.

It seems that this phenomenon will only serve to accelerate the downward trajectory of trust in the work of scientists.

Perhaps we should all realize that when such “measurements become a goal, they are no measurements any more.” It is just sad when editors (like Jonathan) write: “Our competition has 2-3 times more self-citations, which has contributed to their passing us in the rankings even though we increased our own impact factor.”

With Eugene Garfield no longer with us, it is perhaps a good time to stop using Journal Impact Factors.