I asked an academic colleague, well past tenure, if he would have preferred to think of his career trajectory in terms of more individualized milestones and horizons, rather than the standard, institutional tenure and promotion process. His answer surprised me. He’s a pretty straightforward fellow, a thoughtful and accomplished scholar, an inspired teacher, and a mainstay of service on our department’s many committees — the trifecta for academic evaluation. I thought he would report that the clarity and longstanding expectations for tenure would be more appealing; after all, this was a process he had mastered. But no. He reported that he would rather have been assessed on a quite different set of criteria, including intellectual milestones such as the acquisition of knowledge and the mastery of methods of dissemination that had been a priority for him from early on.

The basic notion that we can and should apply standardized criteria for intellectual achievement and contribution is central to much of contemporary education. In higher education, such standardization in the form of metrical evaluation is patently biased, as any number of studies have demonstrated. In scholarly communications, citation metrics, which are often used as an indicator of quality scholarship (including in academic tenure and promotion processes), are among the most vexed subjects. There are technical, epistemological, and philosophical issues to consider, all of them politically freighted. How to weight the importance of citation metrics, the evident bias in metrics of all kinds, and the applicability of these metrics to the humanities versus STEM fields are issues that have prompted numerous studies, reports, and reflections. Recently in the Kitchen, Angela Cochran surveyed citation and other metrics, offering a metrical assessment of these metrical tools on a grain-of-salt scale: pinch, cup, bathtub, and classroom full. By Angela’s calculation, metric tools ought, on average, to be taken with 286 billion grains of salt. Sounds about right to me. An intensive review by the Higher Education Funding Council for England (HEFCE), an important body given the concerns about over-reliance on metrics in the Research Excellence Framework (REF), concluded in 2015 with a cautionary note. In another recent Kitchen post, Sara Rouhi pointed to the structures of power inherent in every aspect of evaluating scholarship.

But is there a way to fashion metrics differently? For academic humanists like my colleague, for whom citation metrics do not yet play a major role in their professional profiles and evaluations, there is still discomfort with the way metrics have begun to leak into all aspects of the intellectual economy. And there is a sense that there ought to be better (more humanistic) ways to assess their labors. An alternative is being workshopped. With a grant from the Andrew W. Mellon Foundation, HumetricsHSS is a kind of meta-workshop in “rethinking humane indicators of excellence in the humanities and social sciences.” A pilot phase is allowing HumetricsHSS to test a set of propositions exploring how, if an evaluative process could be rebuilt with humanities values, individuals and institutions might embrace a very different approach to assessing scholars and scholarship. Christopher Long, one of the HumetricsHSS leads and Dean of the College of Arts and Letters at Michigan State University, observed in a post on the LSE Impact blog yesterday:

“If the metrics we use are to be capable of empowering innovative research along diverse pathways of intellectual development, they must be rooted in values that enrich the scholarly endeavor and practices that enhance the work produced.”

In addition to Long, the HumetricsHSS core team is a group with primary professional affiliations in libraries and scholarly society programs but with long experience in higher education. I spoke with three of the six, Nicky Agate (Head of Digital Initiatives at the Modern Language Association), Rebecca Kennison (Executive Director and Principal of K/N Consultants and a co-founder of the Open Access Network), and Stacy Konkiel (Director of Research and Education for Altmetric), to ask them more about the premises behind HumetricsHSS, how the project is developing, and what it might ultimately offer individuals and institutions. The team’s enthusiasm and commitment are clear, and infectious.

Nicky Agate made clear that HumetricsHSS is not a product or a service but, at this point, an initiative to bring interdisciplinary HSS groups together around the potential for different standards of evaluation. The pilot phase is “exploratory not programmatic.” The HumetricsHSS workshops are meant to be iterative, allowing evolution within each workshop group and then among the workshops. A key insight for HumetricsHSS follows from the evidence that metrics can drive negative behaviors: as Stacy Konkiel puts it, could “certain measures, if well chosen and incentivized… actually drive positive behaviors?” But what, exactly, will be measured?

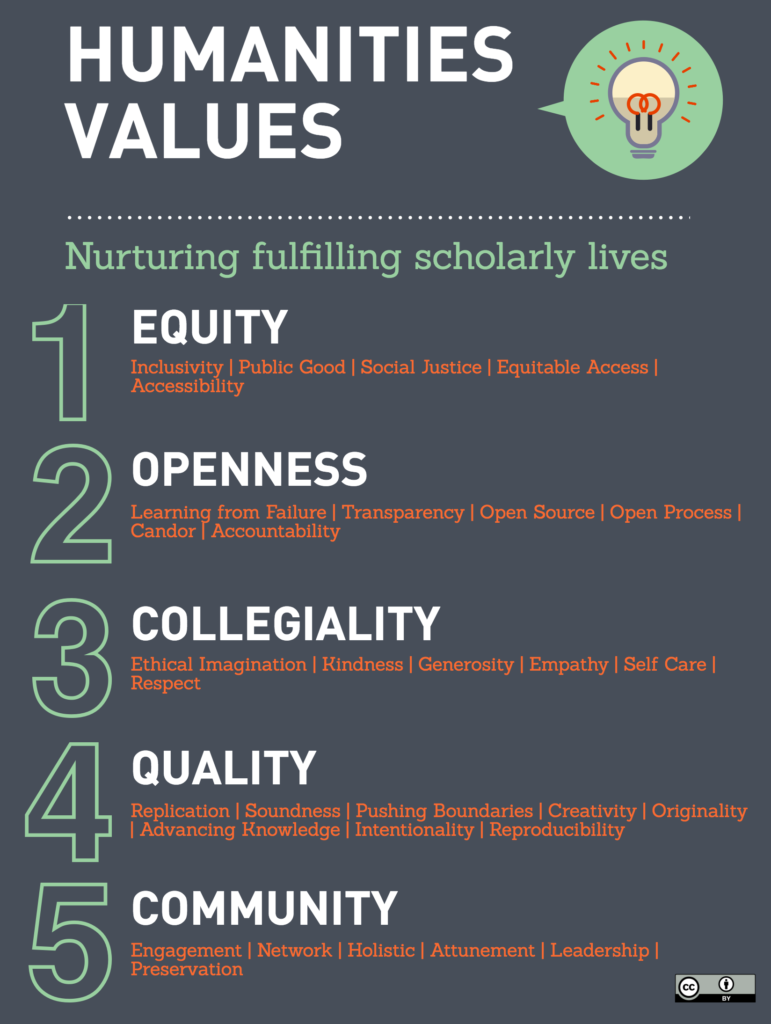

With the ever-wider array of measurable outputs, one answer might be to use more subtle measures of different kinds of outputs (more like altmetrics than the JIF, for example). But Rebecca Kennison described how, as a result of the first HumetricsHSS brainstorming, “We wanted to think about not just what we wanted to measure, but what we wanted to inspire.” The resulting “Humanities Values” graphic, now prominent in HumetricsHSS materials, is a bit misleading in that it represents only an early product of that desire to inspire. But it is illustrative. The team articulated five core values: equity, openness, collegiality, quality, and community, with some specification and elaboration of each (inclusivity, public good, social justice, equitable access, and accessibility, for example, within the general value of equity). Based on these values, presumably, one could design and employ a set of those more subtle and appropriate (hu)metrics.

The initial step, then, has been identifying the values as a foundation on which to build. In their first workshop, when new voices were brought into the discussion, the HumetricsHSS team found that there was actually little agreement on the core values they had proposed. Held last month at Michigan State University, “The Value of Values” was a three-day event that brought together two dozen humanities and social science scholars and administrators to explore the initial list of five values. Pre-reading included the January 2017 Palgrave Communications article “‘Excellence R Us’: university research and the fetishisation of excellence,” which argues that the rhetoric and infrastructure around “excellence” narrow intellectual inquiry and can reinforce existing hierarchies of all sorts. Workshop participants were asked to think about values both for individual scholars and for institutions. Adriel Trott was a participant in that workshop and blogged about the experience. She included some images of the workshop’s sticky-note and poster-paper process of sharing ideas (if not coming to consensus) around values, with an illustrative profusion of pink paper, lines, arrows, and circles suggesting the intensity of the exchanges. After the three days, she wrote, it seemed time to start thinking about how to scale and transfer the idealism encouraged (productively so) in the workshop.

This is part of the longer-term plan. Nicky Agate told me that HumetricsHSS could ultimately offer “a flexible framework that institutions, departments and individuals could use.” Long describes the initiative as looking to expand the types of scholarly contributions that are rewarded in the academy (reviewing and mentoring, for example) and to present, in words I also heard from his teammates, “a more textured story” about a scholar’s work. Through this pilot phase, HumetricsHSS has scheduled presentations and shorter workshops at other conferences and meetings, as well as further workshops.

But is there really an end in these means? Some scholarly societies have already embraced a broader range of scholarly activities and are encouraging academic departments to do the same; some universities and academic departments have long been more expansive in the ways they appreciate a variety of scholarly contributions, along with teaching and service. Surely much more is needed in this direction, but I’m not sure that will be the primary value of HumetricsHSS. In the final analysis, HumetricsHSS isn’t necessarily about metrics, or the humanities, and it’s clearly not only applicable to the academy. The value of the project may be in creating (even forcing) time and space for a more intentional, less reactive approach to professional development and to appreciating a rich diversity of scholarly contributions. Even if there is unlikely to be unanimity, or even enough consensus, about “core values” to make measurement practical beyond the individual level, the process of debating the values inherent in scholarship seems important in itself for broader scholarly communities.

Discussion

6 Thoughts on "Metrics: Human-Made, but Humane?"

I love the idea of “humanistic” assessment, but I am skeptical. Humans rely heavily on measurable metrics from the moment we are born. Babies are tested with an Apgar score; adolescents are measured and weighed, with percentiles reported to nervous parents; school-aged children are subjected to loads of assessment tests (for cognitive abilities and success at rote memorization); high school students seeking college are living for their GPAs, SATs, and ACTs; and working professionals are STILL mostly being assessed on a scale or rubric of desired performance.

Despite our being conditioned to be judged, ranked, and scaled in some way, most behavioral science studies show that being scored and ranked is counterproductive for most people. It’s demoralizing, and the majority of people wish, as your colleague does, to be judged on what they know, how they apply it, and what they can contribute, even if those things can’t be easily measured.

So if no one likes it and the metrics work against our best interest in being motivated and productive, why do we insist on relying on them? I think it’s because they’re easy and perceived as more fair. As they say, “numbers never lie.”

I hope this new approach takes off and can be a starting point for other fields.

I suspect it’s more to do with what’s easy for those doing the measuring than with what matters to actual outcomes.

Behavioural economics has led to the concept of the nudge to drive behaviours leading to desired outcomes. I used to work in medical education, and a frequent saying there was ‘assessment drives learning’; we used that insight to frame better assessment based on what people should learn to improve patient outcomes.

Thanks, Angela! I think you’re exactly right. We count because we can, and we keep doing it, and expecting it to have meaning (“From the APGAR to the JIF” is a good blog post title, fwiw). I think this project is in keeping with a lot of critical perspectives on metrics. Metrics are biased in a zillion ways, from design (because they are created by humans with biases) to interpretation (ditto). But what if we took that critique a step further, and every time we employed metrics we had to be responsible for that design and interpretation? I think this project is also in keeping with the sentiment that more reflection is not just a good thing, but a practical step.

Side note: I fully expected the colleague I queried to say no, the basic processes are good, they’re expected, they’re reasonably clear. I didn’t explain anything about humetrics, just asked “what would you think…” And he’s been in a position to manage lengthy evaluations, and surely isn’t looking to make things less efficient. So it was interesting that he was responding not about what would be ideal, but about what would better reflect the work he and our colleagues actually do.

This topic always brings me back to the academic tenure system. I just read a NY Times article suggesting that many faculty knowingly publish in predatory journals with no peer review, which really suggests a broken system. I presume the tenure system remains in place due to inertia at many levels, including perhaps the accreditation level, and perhaps also the sense that if tenured faculty had to undergo that initiation, others must as well. In the commercial sector we check in with our supervisors on a weekly and monthly schedule to make sure all is well, and then we have an annual review to tie up the year, reward performance, and offer constructive criticism. It is pretty human. Everyone knows what is expected, and where bonuses are permitted, those criteria are spelled out in advance. What is the tenure system anyway, some kind of hedge against a poor hiring and review process? Get rid of it. It would be good for the overall health and quality of science and science publishing.

I’m excited about this project. I tend to believe that we can derive measures or criteria for judgement for almost anything … but I wonder a lot about whether we are focused on the right things. There is a common mantra in the community of student learning assessment folks in higher education: we should measure what matters, because what is measured is what ends up mattering. True of scholarship as well.

If incentivization were “humanistic,” assessment would have become humanistic long ago. The entire system of how one gets appointed to academia, how teaching and research are balanced (or sacrificed), and how unequal the university ethos is, has led to the demise of a humanistic approach to incentivization and thus to assessment.