Editor’s Note: Today’s post is by Sneha Kulkarni. Sneha is the Managing Editor for Editage Insights, Editage, Cactus Communications. Her passion to bridge the communication gap in the research community led her to her current role of developing and designing content for researchers and authors. She writes original discussion and comment articles that provide researchers and publishers a platform to voice their opinion. The complete list of her published content can be found here: http://www.editage.com/insights/users/sneha-kulkarni.

Is there such a thing as too much science? The volume of global scientific output has increased exponentially. In 2016, close to three million papers were published by researchers all around the globe, as per the recently published report by the U.S. National Science Foundation. We are witnessing a boom in science publications that is both exciting and concerning. Is the deluge of scientific publications taking us closer to unraveling unanswered questions? Or is it adding to the noise that makes identifying the really significant publications difficult?

The motives behind publishing research have rapidly changed. Beyond the personal gratification of making one’s findings known and helping the field progress, an unhealthy competitiveness has intensified the pressure to publish. In the cutthroat, competitive arena that science has become for many researchers, lengthening the list of publications on one’s CV has become a necessity to survive. Despite the criticism around the “publish or perish” culture of academia, publication output continues to be the primary measure of a researcher’s success. An applicant’s publication record is one of the most important factors funders consider in decisions to grant or renew funding. Similarly, for hiring decisions, promotions, or even salary hikes, institutions tend to favor those with a lengthy publication list. Some universities have gone as far as incentivizing publications in high impact factor journals. Therefore, in their attempts to showcase worth and productivity, researchers scramble to add publications to their names. In fact, it could be said that the competitive culture of academia leaves researchers with no option but to churn out papers rapidly and consistently.

In this race to publish more, some end up cutting corners. They engage in unethical publication practices that are hard to detect at the publication stage, such as slicing a set of findings into several publications or publishing results that haven’t been thoroughly verified. Another concerning repercussion is the rise of fragmented authorship, or the “hyper-authorship” phenomenon, wherein a single paper lists up to hundreds of co-authors. Of course, multiple-author papers are a cultural norm in certain disciplines such as physics and biomedicine, but shared authorship has also risen in fields outside the natural sciences, such as the social sciences. Discerning the contribution of each author in such papers can therefore be arduous for grant and tenure review committees. Initiatives such as Project CRediT can help distinguish between the contributions of all authors listed on a paper and bring clarity to attribution.

Apart from this, the publishing boom has set researchers a very real challenge. With hundreds of papers being churned out every month, keeping up with the publications in one’s own field is becoming difficult for many researchers. “I can’t even keep up with my own relatively specialized field: the euro,” says Professor Jesper Jespersen, an economist from Roskilde University, Denmark. “I think it’s difficult to see [which papers] are really good, and [which papers] are just repetition,” he adds. Turning what could have been a single publication into a series of papers might provide a push to researchers’ career aspirations, but it can be disastrous to the field, as it can drown out meaningful results.

Discovering relevant literature in the expanding library of publications is becoming a real issue for researchers. Despite effective discovery tools, the sheer volume of search results can be overwhelming. And imagine going through a staggering number of these results to discover that most of the studies listed are repetitive or weak! We are thus faced with a multifaceted problem: Researchers are adding to the global publication output, but are they really contributing to science?

The research deluge only exacerbates the problem of low uptake of replication studies in scholarly publishing. With the constantly increasing publication output, it would be unrealistic to replicate every published study. And, of course, most researchers are inclined towards publishing novel and groundbreaking results rather than tinkering with a study that has already been published.

At the journal end, the swelling volume of publications can put immense pressure on editors who need to identify quality studies. Journals are flooded with submissions, and telling genuine studies from plagiarized ones can be challenging. No wonder, perhaps, that salami-sliced publications and other studies that do little to push the existing boundaries of knowledge still end up getting published.

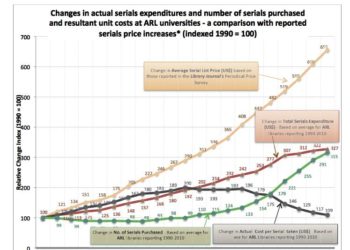

And that’s not all! The research deluge affects all stakeholders in the system; consider, for example, libraries. Around the world, libraries have to walk the tightrope between strained budgets and the ever-growing number of new journals being launched to accommodate the burgeoning research output. Adding new titles to already expensive subscription packages is a tough proposition for university libraries. And is it really worth it for librarians to even consider adding new titles, if much of the research published in them may not even be making a significant contribution?

The cumulative effect of the mass production of less valuable publications is straining science heavily. From using up often-dwindling science budgets to burdening the scholarly community with too much information, mass production of research can have a far-reaching impact. Countries across the world are increasing their R&D expenditure. However, for this investment to provide tangible returns, the misplaced focus on the volume of publications and the average output of researchers needs to be redirected to the impact a publication is likely to have on its field of study. Funding bodies and institutions should encourage researchers to publish fewer yet better papers – ones that adhere to good publication practices and are likely to significantly add to the current body of knowledge on the topic. In order to pick the best research for funding, some granting committees and institutions allow researchers to submit only a select few papers that best present their work. Making this the norm would help ensure that only the best research (and not necessarily a long list of publications) would get the researcher the grant, the job, or the position he or she is vying for.

Every year sees the addition of a new crop of researchers and every day sees the uncovering of new knowledge about the world. Therefore, while more research is welcome, there is a need for us to pause and consider whether the endless stream of publications is leading us to the solutions necessary for a better future.

Further Reading:

Science & Engineering Indicators 2018

Crisis in basic research: scientists publish too much

How Many Is Too Many? On the Relationship between Research Productivity and Impact

Discussion

21 Thoughts on "Guest Post: Research Deluge — Are Researchers Writing More yet Contributing Less?"

Two months ago we were talking about the business model of cascading journals as a means of keeping substandard papers in house rather than seeing them published by a competing journal.

Now the issue is too many substandard papers being published.

I can only hope everyone sees the connection.

Do any of these reports on this look at whether this is an effect of more scholars with the obligation to publish rather than the same number of scholars publishing more items?

That is a good question. My guess is that both causes are operative here, but a Scopus hunt should answer this.

Each field has its own characteristics. Obviously, Sneha’s focus here is science, but in a recent book (Sicilian Studies) I made the point that in the field of European medieval history we are seeing papers that are ever more ‘specialized,’ actually more pedantic, sometimes repeating work that was done a decade or two ago (which ties in with Professor Jespersen’s comment about redundancy). Certain monographs converted from dissertations are all but whimsical because doctoral candidates are forced to come up with something ‘original’ in historical areas that have been studied to death (think of the work of Chaucer or Dante, or the reigns of some English kings).

STEM fields are a different animal, but in SSH the job market for tenure track professorships is so competitive, with hundreds of candidates for one position, that ‘over-qualification’ is the norm. That means lots of papers, regardless of quality (though quality may be quite good in some cases), and a monograph even for an entry-level TT position.

Thank you for highlighting a problem that persists in many fields – the pressure of securing a position can drive researchers to publish papers that barely add to the existing knowledge about a topic.

This post documents the rise in publication volume, but does not attempt to answer its own question…are researchers contributing less?

The growth of the scientific literature is not a new phenomenon, nor is the “crisis” of libraries to attain such literature. The problem underlying both of these factors is the limited resources of the reader’s time and attention. And to this, the scientific literature has evolved into a highly specialized and highly stratified market of journals, whose structure is used by readers in the information seeking process. Without such a structure, we’d all suffer terribly with information overload.

“the scientific literature has evolved into a highly specialized and highly stratified market of journals,”

Scientific research though has also gotten more cross-disciplinary. As researchers are forced into ever more stratified reading, I worry that research will tend to be mostly incremental and that there will be fewer of the leaps that come with cross-disciplinary research.

This post raises some crucial existential questions that undoubtedly trouble many scholarly communications enthusiasts. I doubt any of us can provide an answer though. On one hand, we are witnessing advances in science and technology that we would never have imagined possible even 5 years ago. On the other hand, it’s getting more and more difficult to separate the wheat from the chaff, and retractions and cases of misconduct are rampant.

Around this time every year, we meet at industry conferences to discuss some of these problems plaguing scholarly publishing as well as innovations that are emerging to counter them. While it’s good to raise these questions and get everyone thinking about them, I wish the industry had some kind of global standards and quality control body for governance and to oversee the implementation of such innovations.

Ever wonder why tennis is played with a ball of exactly the same size and weight and a net of exactly the same height the world over, whereas no two journals in the world are expected to have the same set of acceptance/rejection criteria?

In my opinion, and looking at this article in a slightly different light, researchers need to be making their publications more “consumer friendly” through the use of attention-grabbing plain-language summaries, infographics, and video summaries, to not only bring focus on one’s research, but to provide the “social plumbing” and anchors (multimedia, DOI tags, tweet handles, journalism uptake, etc.) to make the research more discoverable. The PDF alone doesn’t cut it anymore. Yes, a PDF is portable, but is the information it contains really accessible?

Secondly, researchers need to leverage these tools to become more socially responsible themselves. They need to actively talk about their research findings with not only their peers, but with their non-traditional peers in other disciplines. Researchers also have a moral responsibility to communicate their research findings to the public and to policy-makers at all levels of administration and government.

In short, I feel that in the face of the tsunami (and I choose tsunami versus tidal wave, because it is not just one event… the swell will keep growing, seemingly with no end in sight) we are perhaps failing at “communicating” research value. We need to develop ways to distinguish value from worthlessness. Unless we can learn to communicate research findings better, and in a more consumable and discoverable way, we will be falling short in the mission to facilitate discovery, accelerate research, and support industrial development, to better the human condition.

Good points. Despite the rhetoric of some OA advocates, simply making work available to the public is not equivalent to having the public actually understand the importance of such work. As an example, I worked in teaching hospitals and universities for decades, and am quite familiar with general medical terminology, but I am completely baffled by the meaning of the average genetics papers after reading the first couple of paragraphs.

Without a translation of such papers into comprehensible English (or Chinese or Spanish or Arabic), it is pretty unlikely that an average reader will be able to do much with the information encoded in the dense genetic jargon in the PDF or abstract.

The requisite knowledge to interpret information, and not information itself, is the scarcest resource. What makes an EKG valuable as a diagnostic tool? Only the knowledge of the physician or cardiologist who is able to interpret it.

From the research consumption perspective, if academics find it difficult to keep up with the overwhelming number of publications, one can imagine how difficult it might be for the non-academic consumers or the general public to consume information. Research communication can fill this gap while also increasing the discoverability of science.

As a simple “consumer” of science through news feeds, I see the problem of the proliferation of bad science as another example of the commodification of everything. Higher education and medicine are also, to an alarming extent, markets offering product. Why would science be immune to cultural forces larger than itself? A field of endeavor flooded with hype, distortion, lying, intellectual theft, etc. shows the symptoms of the adoption of the methods and goals of advertising.

I’m an editor for a social sciences journal, and a key factor driving journal submissions is the increased pressure for academics in other countries — particularly China — to publish. Many of these are fairly poor quality. However, sorting through them places a fair amount of pressure on the editors, not to mention the difficulty of finding reviewers for these articles!

Sneha is to be commended for an articulate and economical description of the current publish/perish dilemma. The “highly stratified market of journals” as noted by Phil may be more of a contributor as authors “slice the salami”, add some condiments and repackage essentially the same product. As has been suggested, there is a symbiotic relationship between the academic community and the publishing community that encourages the practice and packages much of the persiflage padding the library “bundles”. It will be interesting to see which publisher “hires” one of Watson’s children as a gatekeeper that also provides readers with a well-filtered list of references. Proliferation of increasing numbers of specialized journals that need human access to scan for relevance should be early on the list of professionals replaced by AI.

Thank you so much Sneha! Your post reminded me that information overload, and our responses to filter and screen information, is ever-evolving.

Wikipedia may indicate some of this under Information Overload, where it says “As early as the 3rd or 4th century BC, people regarded information overload with disapproval. Around this time, in Ecclesiastes 12:12, the passage revealed the writer’s comment ‘of making books there is no end’ and in 1st century AD, Seneca the Elder commented, that ‘the abundance of books is distraction’. Similar complaints around the growth of books were also mentioned in China.” https://en.wikipedia.org/wiki/Information_overload

Working in the field of AI, I’m entirely biased of course, but because I know what we’re working on, I reckon technology will/should increasingly be used to help us filter and screen the growing information in new ways we didn’t think of before.

Our collective information filtering challenge is technology related in the first place, but it is also a driver of new application of technology to help filter. I suspect, we will possibly always feel overwhelmed in the current, until we look back and realize how we responded to challenges that seemed overwhelming in the past.

Indeed we live in a world that bombards us with information all the time and it can overwhelm us. Sorting through information at times might seem like looking for a needle in the haystack. And while technology can certainly help in finding the information being sought, some part of this problem could be alleviated if scientific literature is published in a more responsible and efficient way.

A couple of points to note: It is true that we have seen a rise in co-authored papers in many disciplines, but this does not have to be interpreted negatively. It indicates greater (international) interdisciplinary collaboration, and a realisation that the scientific and social problems we face today will not be solved by a single discipline alone. A development to be celebrated.

One of the reasons for the growth of scientific research is that many researchers in countries previously too poor to produce scientific outputs are now making a contribution, and this should be encouraged (facilitated mainly by society publishers). It is inevitable that as some nations come online they will initially produce research that is not of the quality/standards expected by the top journals, but that adds to the volume of content available. China and India have decided that education is the way out of poverty, and again this should be encouraged. However, it is true that it does not help that students from these countries are told that they must achieve 10 publications in international journals before they achieve their PhD, or that they and other researchers are rewarded in cash for publications in journals with high impact factors (the higher the IF the higher the reward). Search engines are improving and ML/AI will further allow more focused access to appropriate content in future. I think the real problem is not necessarily the volume of content out there, but determining and excluding the volume of fake content and duplicated content that is available.

You’ve made some really good points. We definitely need more research but, as you pointed out, we need good research that adds significantly to what we currently know.

The forces driving stakeholder behavior in the scholarly publishing ecosystem continue to push quantity over quality. Incremental research leads to more publications, which leads to more publication destinations (=more titles), which leads to bigger deals, more strain on peer reviewers, and too many discovery pathways. Incentives are far too skewed in favor of binary events (publish or perish) to account for sustainable metrics of real-world impact, whatever they might be. The sheer scale of operations in the ecosystem means we reward on the basis of what we find easiest to measure, not necessarily that which is a more accurate barometer. Technology helps track and discover better, but what we are tracking itself is arguably shrinking in terms of its contribution to science.

There is the cartoon showing two mice rapidly spinning their wheels when one says to the other: Boy do we have those guys in the white coats trained. We just hop on our wheels and they feed us. Maxwell well understood.

Congratulations on the thought-provoking piece, Sneha. You’ve identified a phenomenon that has been noticed by many. Several of the comments here have pointed out very specific aspects that could be identified as the root cause of the problem. A few things that seem to stand out for me…the expression “glass half empty or half full” comes to mind. On the one hand, we have great positives, thanks to better research infrastructures and publication workflows globally: some fantastic breakthroughs that we might never have been able to fathom; great international collaboration; a serious focus on the need to increase research impact on society; the formation of a global scientific community; movements like open access that questioned our own value systems in research; tools that make magical things happen and increase discoverability like never before; better communication among all stakeholders in scholarly publishing…this list runs long. If the glass is half full, isn’t it half empty too? Along with the positives we have the challenges and problems: the need to publish or risk dealing with a very mediocre academic career, the pressure to use any means possible to attain the end goal, the superficial importance given to “how many” over the “how good”, the prevalence and growth of opportunistic predatory publishers who feed on researchers’ fears of missing out on that next grant or promotion, the fact that journal publishing is a business model which has its own strengths and weaknesses, excessive focus on the impact factor…this list runs long too.

I feel that perhaps it’s time to push for better conversations between science and policy. When will the requirements for a certain number of publications change? When the change happens at the institutional level after a policy influence. When will researchers be able to focus on societal impact and outreach (perhaps by sharing lay summaries or engaging with the non-scientific community on social media) more consciously? When aided by policymakers and relevant mandates. Of course other factors will play a major role in addressing many of the problems (technological innovations such as AI come to mind here), but in my view, a better, more harmonious, and more symbiotic science-policy relationship might help a great deal.