Editor’s Note: this post is based on a talk the author gave at the 2018 Society for Scholarly Publishing Annual Meeting.

The reason our governments and charities fund research, or why anybody bothers to devote their lives to it, is to make the world a better place. We benefit from the generation of new knowledge, as it leads to things like better healthcare, powerful new technologies, and a stronger understanding of who we are as a species and a society. A better world is the end goal of research, so it’s not surprising to see funders and governments increasingly demanding proof of “real world” or “societal” impact. But while the idea of measuring real world impact makes sense, objectively measuring it is not a simple or straightforward process, and it raises some red flags about falling into the same sorts of traps we’re already in from the ways we already do research assessment.

Funders and research institutions set goals for researchers (“here’s how we’re going to evaluate your performance”), and while these goals are meant to provide qualitative measures, they are inevitably reduced to quantitative metrics. In an ideal world, every grant officer, every tenure committee member, and every person on a hiring committee would read every one of every applicant’s papers and develop a rich understanding of the importance and meaning of their work. But we know that’s not going to happen, and in practice, even six years after DORA, they instead rely heavily on numerical metrics, for two main reasons: there are too many things to evaluate, and no one has all the necessary subject knowledge.

We have an enormous number of people doing an enormous number of research projects. Grant offerings and job openings routinely get hundreds, if not thousands of qualified applications. In 2014, the UK’s REF review assessed 191,150 research outputs and 6,975 impact case studies from 52,061 academics. The NIH alone awards around 50,000 grants to more than 300,000 researchers, which represents a tiny fraction of the applications they receive.

Even if you had the time to read everything, many decisions have to be made by non-experts. University administrators, grant officers and others can’t be expected to analyze high level research. If I don’t know a thing about Keynesian economics, how am I going to judge the importance of your work on the subject?

Real world impact cannot be effectively reduced to a numerical ranking for a number of reasons:

- It’s often subtle and non-obvious, requiring an explanation, rather than a yes/no tick box.

- It’s slow, in many fields better measured in decades rather than months.

- It’s widely variable, and there’s no one standard measure that makes sense for a particle physicist and an economist.

- Trying to metric-ize real world impact leads to short term thinking rather than doing what’s best for research and for society over the long term.

Probably one of the most impactful sets of experiments of the last century was done in the early 1950s, when Salvador Luria and Giuseppe Bertani were looking at how bacteria protect themselves from viruses. They realized that there were differences in how well the virus could take hold in different strains of bacteria. Maybe kind of interesting, but where’s the societal impact?

Cut to the next decade. In the 1960s, Werner Arber and Matt Meselson’s labs showed that the restricted growth in some strains was caused by an enzyme chopping up the viral DNA, something they called a “restriction enzyme”. Again, it’s bacteria, so where’s the “real world” impact?

A decade later, in the early 1970s, Hamilton Smith and Daniel Nathans, among others, isolated these enzymes and showed that you could use them to cut DNA in specific places and map it. This quickly led to the notion of “recombinant DNA,” and genetic engineering. Herb Boyer and Stanley Cohen filed a patent, and Boyer co-founded a company called “Genentech”, which started producing synthetic human insulin. Until this time, the only insulin available to diabetics was purified animal insulin, which was hard to get and expensive. This made an incredible difference in the lives of the people suffering from this condition. Arber, Nathans and Smith were awarded the Nobel Prize for this work in 1978. The patent, by the way, wasn’t officially awarded until 1980.

That seems a pretty significant piece of real world impact. But going back to Luria and Bertani, how would we have measured the value of what they did? What metric would have captured that impact?

Real world impact can be subtle and slow. It took decades for the real payoff from the initial experiments. R01 grants are offered for 1 to 5 years. How does that time scale jibe with the course of these experiments? In today’s funding environment, would Luria and Bertani have kept their grants, their jobs?

Altmetrics are often mentioned as a way to measure real world impact. The first thing we must absolutely be clear on, though, is that attention is not the same thing as impact. Just because something is popular or eye-catching doesn’t mean it’s important or of value. Cassidy Sugimoto summed up the state of Altmetrics and societal impact in a recent tweet:

I have not seen strong evidence to suggest that #altmetrics – as presently operationalized – are valid measures of social/societal impact. Rather, they seem to measure (largely unidirectional and concentrated) attention to articles generated by publishers, researchers, and bots.

The key to understanding why Altmetrics, or any metrics, are problematic comes down to a concept that’s so important it has been codified under three separate names (Campbell’s Law, Goodhart’s Law, the Lucas Critique): essentially, if you make a measurement into a goal, it ceases to be an effective measurement. As currently constructed, Altmetrics measure as much the level of publicity efforts from the publisher and author as they do the actual attention paid to the work.

Some caveats should be considered when thinking of Altmetrics though, as there are a couple of interesting and potentially relevant things they do capture. One important indicator is when a paper is referred to in a law or policy document. This shows the research is directly affecting society’s approach to a subject. However, this is not a universal metric for all fields – there’s not a lot of policy being written about string theory or medieval poetry studies. Going back to recombinant DNA, in the mid-70s, a number of communities, notably Cambridge MA, passed laws banning or strictly regulating recombinant DNA research. That’s certainly a sign of societal impact, but I’m not sure how much a funder or institution would reward a researcher for inspiring laws that essentially ban their research.

Another interesting metric with some potential is tracking connections between research papers and patents. In theory, a patent shows a practical implementation of something discovered during the research process. Turning an experiment into a product, a methodology, or a treatment would certainly qualify as real world impact.

But again, this is not universal. Not all research lends itself to patentable intellectual property. Campbell’s Law comes into play again – if you get credit for patents, then researchers are going to start patenting everything they can think of, regardless of its actual utility. Do we really want to lock up more research behind more paywalls? If everything that’s discovered gets patented, then does that stop further research – I can’t take the next steps in knowledge gathering because I’d be violating your patent if I try to replicate or expand upon your experiments. That goes against the progress we’re making toward open science.

Time scales come into play here as well. It takes about 12 years to go from the laboratory bench to an FDA approved drug. Most funders aren’t going to wait 12 years for you to prove societal impact to get your grant renewed. Remember also that only about 1 in every 5,000 drugs that enters preclinical testing will reach final approval. If your drug gets tested and fails, is that real world impact?

Exerting pressure to productize research, and to strive for small, short term gains over creating long term value is bad for research.

This gets us to one of the other roots of society’s ills, short term thinking. In the US, schools are willing to trade off having a generation of citizens who haven’t been properly educated for a quick infusion of local tax dollars, and so focus on the metric of standardized test scores. Kent Anderson wrote a post about this for The Scholarly Kitchen last month, where he called it a “race to the bottom”, with people keen to pay lower prices now even if they know it will hurt them in the long run.

We already have some major issues getting funding for things like basic science. The technology and health gains we’re putting together today are based on the basic science of the last few decades. Luria and Bertani weren’t trying to cure diabetes, they were investigating defense mechanisms of bacteria. You probably couldn’t get that project funded today, as researchers are increasingly asked to show practical results of their work – as noted sage Sarah Palin put it,

You’ve heard about some of these pet projects, they really don’t make a whole lot of sense and sometimes these dollars go to projects that have little or nothing to do with the public good. Things like fruit fly research in Paris, France. I kid you not.

The idea of codifying “real world impact” as the key to career advancement and funding puts us in even more dangerous straits. Researchers will optimize for these goals, and we’ll see less and less basic science and more and more attempts at quick wins.

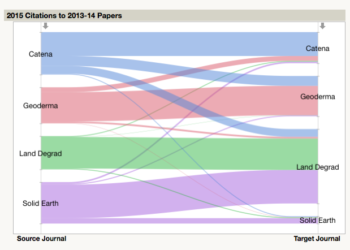

I’ll end this post with one crazy idea for measuring real world impact – hear me out, put down those torches and pitchforks! We know that science is slow and incremental, “standing on the shoulders of giants” and all that. How do we know that Luria and Bertani’s work led to synthetic insulin? From the citation record, as each step of the way, new research cited the previous research that led to it.

We know that citation is an imperfect metric, it lags, and we know that the Impact Factor distorts it and remains deeply problematic. But there is valuable information in the citation record that can help us track the path of research. We shouldn’t be so quick to dismiss that value just because we don’t like the way academia has chosen to use the Impact Factor.

Discussion

13 Thoughts on "Measuring Societal Impact or, Meet the New Metric, Same as the Old Metric"

Many years ago there was an international survey asking, in part, whether an academic would rather be teaching or doing research. The weight favored the teaching. There are now institutions awarding the equivalent of Ph.D.’s that are not driving the recipients into the pub/perish market place. This alternative path may have a greater societal impact than the micro results published in academic journals. Additionally, the secondary impacts within the scholarly community would be significant.

Hi David, thanks for this post – lots of great points! The things you’ve raised here definitely align with the thinking and approach we’re trying to take here at Altmetric.

We’d certainly support the argument that altmetrics can only ever be used as an indicator of influence and reach, as opposed to conclusive evidence of societal or real-world impact (unless the impact that you are trying to achieve is knowing that a certain person or audience has been made aware of/engaged with your research, of course!).

We think a critical part of what both citations and altmetrics can offer is in the transparency of the data. In making it possible to explore who is engaging with research, and how, we’re aiming to provide useful information that researchers can use to develop their networks, create new opportunities, and ultimately deliver benefits that can impact their work for the better.

The way we try to do this is by providing a full record of all of the mentions on our details pages (https://www.altmetric.com/about-our-data/altmetric-details-page/). We definitely think citations have a valuable part to play as well, hence our addition earlier this week of the Dimensions citation data!

Evolving metrics, and most importantly, what we are really trying to measure with them, are a topic we love to talk about and would encourage anyone and everyone to contribute to.

Those interested might like to consider attending the Altmetrics Conference, taking place in London on the 26th and 27th of September (http://www.altmetricsconference.com/) or the Transforming Research event in Rhode Island in October (http://www.transformingresearch.org/).

All of our data is also made openly available for scientometrics research – anyone is invited to reach out to us at support@altmetric.com or support@dimensions.ai for more information on this. We’d love to see others build on what we have started!

Cat

I don’t see how David’s post aligns with your thinking at all. In fact, it is a repudiation of your thinking.

David Crotty writes that “Luria and Bertani weren’t trying to cure diabetes, they were investigating defense mechanisms of bacteria. You probably couldn’t get that project funded today … .” Did David Crotty check their original grant application to see what project they actually wrote up? Unlikely, but perhaps their application offered to cure diabetes and had nothing to do with bacterial defence mechanisms.

Each individual grant application competes with many others for limited funds. The problem is that the powers that be tend to regard this like competitions in general, where there is a well-defined finishing tape. If Luria and Bertani wrote for the diabetes “tape” they would get funded. If they wrote for the bacterial “tape” they would not. Like Usain Bolt in the 100 metres, they were yards ahead of the competition, but the grant agency adjudicators would not have been able to detect this.

So were Luria and Bertani just lucky, or did they do what many are forced to do: write a dishonest “marketing” application and then, when they had the cash, do experiments for which they had not sought funds? A possible solution to the problem, “bicameral review,” was presented decades ago, but as with many competitions, dishonest marketing still tends to prevail, the US election being an outstanding example. Some of the academic “Trumps” are eventually found out. Some remain to make mischief on grant and tenure committees.

I can absolutely guarantee you that researchers in the early days of phage genetics were not writing grants about producing synthetic insulin. That was decades off of their radar. Also, the 1950s were a very different funding environment than the current day, and my experience with researchers from those times is that they often landed “their” grant, which they then kept for their entire careers. There was less competition because molecular biology was not yet a recognized (or named) field. They were merely doing what other biochemists of the time were doing, trying to understand the basic mechanisms of life.

As you note, this pursuit of basic knowledge is no longer a fund-able activity in today’s grant environment, and it is likely that progress will suffer for the next few decades because of this.

A key value of citation metrics is that they are generated by scholars in the practice of their actual scholarship – research itself requires awareness of the current state of knowledge, and communicating that research effectively requires citation. While the specifics of time, volume, and type of citation vary by discipline, the fact of citation’s role in scholarly work does not. No matter what your field, you need to identify the giants upon whose shoulders you stand, and/or the giants you’re going to dispute.

The “alt” in altmetrics is meant to show that they are an alternative measure – a way to measure something differently, or to measure a different thing. Part of the difficulty there is that they ARE a bit of an agglomerate of different types of activity and different types of communication. At present, some are closer to the fundamental work of scholarship, some are closer to the more general use of scholarly outcomes, and some are entirely uncertain in their meaning or significance. The last ones, I’m afraid, are almost always the largest effects. Tweets and mentions, and bots and press releases, are the noise that blocks what might be the signal.

(Consider it duly noted that the “everything looks like a nail” use of citations is a current problem and the source of unethical citation in many forms.)

Joe, what a succinct statement!

I can only imagine the altmetric on the cold fusion paper of 1991!

In the world in which we live, one of pay to publish and Facebook manipulation, it seems to me that altmetrics lose much of their legitimacy as a tool of measurement. Or to put it another way – reality is trumping altmetrics!

I agree that metrics, of any kind, do not capture the whole story. Stories, by definition are narratives, told by people, and this meaning making is subjective. But quantitative measures are not the only ways to structure research or tell the stories of its impact.

If we set our research up to respond to the needs of people and society, then baked right into our research will be tales of impact of that research. Using action research (also known as or related to methods like cooperative inquiry, participatory action research, emancipatory research, or Indigenous research) people (not just researchers) get to ask questions of relevance to them and their communities, and often also participate in carrying out the research. The knowledge and skills gained then automatically change and improve people’s lives – impact. These case studies, while not generalizable, can be used by others in similar circumstances to improve their own lives.

Not that all research can or should be carried out using action research methods, but it is a way to immediately see positive social change, and impact.

Thanks David for continuing the conversation from our panel. As we discussed on the day, assessing qualitative change is fraught with subjective nuance with no easy shorthand–but we as publishers and institutions would be wise to respond to academic sentiments and societal forces that just aren’t supported by the current system. Maybe it’s not about finding a way to measure the real world impact of research as it is conducted presently or was in the past, but encouraging changes to the way research is done, via co-creation with practitioners and reaching beyond the ivory tower on problems that connect with wider society from the beginning. Not in all cases and not to supplant impact factors, but we want to acknowledge that there’s more we can do.

For those who haven’t already done so, I recommend looking at the UK’s national research assessments that take place every few years, the most recent being the ‘Research Excellence Framework’ in 2014. That exercise, somewhat modified, will be repeated in 2021. It includes an assessment of societal impacts, quite distinct from the assessment of academic/scholarly significance.

The societal impacts part of the assessment will be given a weighting of 25% in the 2021 REF assessment of UK university departments. These impacts assessments have added a new dimension to such assessments. They have traction, because the REF’s outcomes are tightly linked to subsequent government core funding of the universities. I am told anecdotally that the societal impacts component has allowed universities that are strong in applied and societally-directed research to do better in the ultimate rankings.

For examples of the impacts evidence provided by research groups, see the REF database (http://impact.ref.ac.uk/CaseStudies/) of about 7000 societal impacts case studies. It includes fascinating and diverse examples of impacts pathways. The corroborative evidence often comes from testimonials from those bodies who have created the impacts arising from the original research. The templated case studies also include the original research publications, but those are not the basis of the evidence for impact. Note also that, unsurprisingly, the time delay between those impacts and the original research can range from a few years to well over a decade.

After the 2014 exercise, the then-minister for research in the UK government commissioned a study of the REF’s future use of metrics (mainly in the interests of cost-saving, as I perceived it – the REF is indeed costly and time consuming).

There was a resulting report ‘The metric tide’. (See http://www.hefce.ac.uk/pubs/rereports/year/2015/metrictide/ – I was a member of the working group, which also included research-metrics experts.) We concluded (a) that there was no way of basing adequate assessments of societal impacts on any quantitative metrics; and (b) that the scope for more generally reducing human assessment and increasing the use of metrics across the REF process is very limited.

Thank you for this very important and topical post. I have an observation to contribute, mainly stemming from working with funding advisors, conversations with researchers who have been rewarded with an EU or national grant (the Netherlands) and developments in Dutch policy and institutions:

Whether and how societal impact can be measured is strongly up for discussion. Currently, funding committees seem to focus mainly (if not exclusively) on the intended impact of a research project. More than about (alt)metrics, it is, for now anyway, all about mentality: showing that, as a researcher, you can demonstrate concrete applications or links to society that your research may result in. (This is certainly true for the Horizon2020 program, which is not created to fund research at all, but “is the financial instrument implementing the Innovation Union, a Europe 2020 flagship initiative aimed at securing Europe’s global competitiveness”.)

In practice this still leaves a lot of wriggle space for researchers, but also means that most projects carried out in the name of societal impact are small-scale and often unsustainable, there for the sake of but not necessarily resulting in much actual impact.

Ultimately, also, this is a discussion not only about reward systems and metrics, but also about the role of the researcher in society (according to policy-makers and, subsequently, funders), an apparent – perceived – disconnect between academia and society, and/or a desire for researchers to become producers or entrepreneurs (this is an undeniable fact in the Netherlands).

To move away from this existential discussion, it should be interesting for all publishers and other commercial players to note that there is an awful lot of budget available for initiatives that touch on aspects of societal impact (see also, Springer’s new foray https://www.springer.com/gp/about-springer/media/press-releases/corporate/polar-sciences-in-the-spotlight–springer-launched-new-platform-to-promote-interdisciplinary-research/15860268)