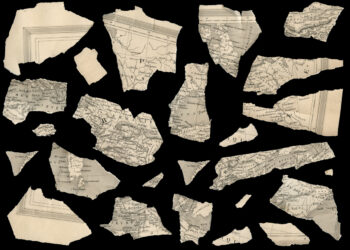

By Hein Ciere – Wikiportrait, CC BY 3.0, https://commons.wikimedia.org/w/index.php?curid=4434000

When metrics are adopted as evaluative tools, there is always a temptation to game them. Without rules and sanctions to prevent widespread manipulation, metrics lose their relevance, become meaningless, and are quickly disregarded by those who once believed that they stood for something important.

Why count Facebook Likes and Tweets when you can purchase thousands of them for just a few dollars? For these metrics to remain robust indicators of something meaningful, it is important to keep the cheats out of the system.

Each year Thomson Reuters, producers of the Journal Impact Factor, puts dozens of journals in time-out for manipulating their numbers through self-citation. This year, for the first time, they delisted several titles for engaging in a citation cartel.

Thomson Reuters has a vested interest in keeping their citation database clean for a simple reason — they profit by selling their data and services to universities, publishers, governments, and funding agencies. Sanctioning those who wish to game the system from their database puts Thomson Reuters in a position of authority, and not all researchers are pleased with their monopoly of power. Some researchers and organizations support Google Scholar Citations as a free alternative.

A recent paper uploaded to the arXiv, “Manipulating Google Scholar Citations and Google Scholar Metrics: simple, easy and tempting,” suggests that free may come with some serious data quality issues. Indeed, as the researchers write, radically altering the citation counts of one’s papers (and thus increasing one’s h-index) is open to anyone who can cut, paste, and post:

It is not necessary to use any type of software for creating faked documents: you only need to copy and paste the same text over and over again and upload the resulting documents in a webpage under an institutional domain.

For the purposes of their experiment, the researchers created a fake researcher (Marco Alberto Pantani-Contador — a reference to two infamous cyclists, Marco Pantani and Alberto Contador, each of whom was accused of blood doping). Copying and pasting text from a website, adding a few figures and graphs and lots and lots of self-citations (774 citations to 129 papers), the researchers created six fake documents, translated them into English using Google Translate, and uploaded them to a new webpage under their university’s domain. It was a process, the authors explain, that took less than half a day’s work.

All the authors needed to do was to sit back and wait for Google Scholar to index them.

Less than a month later, the citations came back, boosting everyone’s citation profile and the profiles of several journals. After demonstrating that Google Scholar could be gamed, the researchers took down the faked documents and waited for the citations to return to their previous numbers. Unfortunately, they are still waiting. Versions of their documents are still available in Google as cached documents. This suggests that the system is both vulnerable to gaming and resistant to correction. Google Scholar is on a trajectory toward chaos.

While the authors do concede that a free tool for evaluating the impact of research is empowering to those traditionally disenfranchised by commercial products, there is a tradeoff between data integrity and free. They write:

Switching form a controlled environment where the production, dissemination and evaluation of scientific knowledge is monitored (even accepting all the shortcomings of peer review) to a environment that lacks of any kind of control rather than researchers’ consciousness is a radical novelty that encounters many dangers.

Pursuing an open and unregulated evaluation system means having all of the filters at the production end. Yet without any central authority, the authors of this paper can only appeal to researchers’ good “ethical values” and guidelines for acceptable behavior. Unfortunately, the authors’ own experiment demonstrates how easy it is to game the system and how impervious the system is to self-correction.

The barrier to entry for indexing in Google Scholar is an academic domain, which, as many in academia and publishing understand very well, is largely unregulated. Students are often given webspace for their projects; departments and labs and individuals are allocated space or are permitted to run their own servers. Most institutional repositories require that those submitting new documents merely click through a generic copyright page. Subject-based repositories, like the arXiv, provide only cursory review of submitted documents. The approach for these spaces is to intervene only when someone complains. While uploading documents into these spaces is considered “publishing” in the broadest interpretation possible, these spaces lack the same filtering that goes on in the journal space. Combining citations from a largely unregulated space with a tightly regulated space is not just problematic, it corrupts the citation as an evaluative metric.

Calling on Google to tightly regulate their citation index is a call to deaf ears. Google prefers algorithms over humans, and at this time, it is still very easy to trick indexing software into thinking you’ve created an original scholarly document. Moreover, there is no reason why Google, unlike Thomson Reuters, would want to invest a huge amount of human resources into fixing their citation indexing problem. Google is in the business of selling advertisements to companies, not metrics to scientific organizations.

Discussion

28 Thoughts on "Gaming Google Scholar Citations, Made Simple and Easy"

Reblogged this on Progressive Geographies and commented:

Interesting piece about the problems with Google Scholar for counting citations, or perhaps about the idea of metrics generally.

I prefer free data that can be gamed to secure data that I cannot afford. So unless gaming becomes widespread the GS data is good enough for me, considering the alternative. Plus isn’t the community supposed to police itself in any case? Academic cheaters can be caught and punished locally. Expensive central authority may not be the answer.

I agree with some of this, in particular Google’s susceptibility to gaming. But in other parts I disagree: I think Google indexing and counting a wider range of citations is a significant strong point, which better captures actual influence. Thomson ISI only counts citations from journals to other journals, which in many fields is a small proportion of what goes on, and sometimes not even the best part of it. Google Scholar, on the other hand, correctly includes citations from things such as conference proceedings and books.

I agree that Google Scholar counts citations from other types of objects; however, it is also prone to over-counting. For example, a manuscript that was posted in the arXiv, published in a journal, made available from a laboratory website, and then deposited in an institutional repository could have each of those copies counted as a separate citation source. The issue may be even worse in economics, for example, where a working paper could be uploaded to several repositories, updated several times, and then published years later under a different title.

I’ve found that GS does collapse duplicates if they have the same title: many arXiv papers are grouped under the same Scholar entry as their subsequent journal version, and counted as alternate versions of the same paper when you click “All N versions”. I agree it’s a problem when titles get changed, though.

I do not agree that this is a problem. If version A gets X citations and version B gets Y citations, GS does *NOT* count A and B as two papers with X+Y citations each. If the h-index is the metric, having several versions of the same document may be to the detriment of the author, since none of them may make it into the top h while the combination of them might.
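The h-index point above can be checked with a small worked example. This is a minimal sketch using the standard h-index definition (the largest h such that h papers have at least h citations each); the citation numbers are invented for illustration and do not come from any real profile.

```python
def h_index(citations):
    """Return the largest h such that at least h papers have >= h citations."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


# Hypothetical profile: three papers, plus one paper that exists as two
# separately indexed versions with 3 citations each.
other_papers = [10, 8, 5]
version_a, version_b = 3, 3

split_profile = other_papers + [version_a, version_b]    # versions kept apart
merged_profile = other_papers + [version_a + version_b]  # versions collapsed

h_split = h_index(split_profile)
h_merged = h_index(merged_profile)
print(h_split, h_merged)
```

With these made-up numbers, neither version reaches the threshold on its own, but the merged record does, so the author's h-index is lower when the versions are left split.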

Clarification: Thomson Reuters does include/count citations from books (10,000 per year) and conference proceedings (3600 per year). These are part of the broader Web of Science content set and so contribute to the count of Times Cited on each article. Proceedings are also included in the Journal Citation Reports – you can see them in the Cited Journal Data tables.

Comment: We are pretty clear about how/when/why we suppress journals from appearing in the JCR due to citation anomalies. Suppression from 2011 JCR was based on analysis of 2010 JCR data – so that the data WE used is visible still to subscribers. Pick a journal off the list of suppressed titles – and go look at their last listing in JCR (most are either 2010 or 2009) – and you’re going to see pretty extreme citation patterns. Most of these are still journal self-citations – but as Phil notes, we are now also analyzing Citation Stacking patterns (I can’t and won’t call them “cartels” because that implies an intentionality and collusion that are not objectively detected in the data). These, too, are fully visible in the 2010 JCR data.

The only systems that are not active targets for gaming are systems where the outcomes do not have significant effects in their surrounding area. (To date, no one has tried to manipulate the star-ratings on my books in GoodReads.) It is therefore important not just to be transparent (fully report the data and how it affects the result), but also to ensure accountability. Journal self-citations were fully reported in the JCR from the very first publication (1975 data) – when it became clear that there were instances of extreme self-citation leading to distorted Journal Impact Factors, we closed the loop with a consistent approach to sanction the titles.

We all know that TR WoS data is being gamed too. They correct that, sure, but how, when, etc is done behind closed doors. I prefer a transparent environment where gaming is obvious. That said, it would be even more transparent and obvious if Google would provide an API to GScholar.

The question about machine-only or human-only… WoS is not all curated either, and I have had curation requests rejected. That’s not even deaf but stubborn. Such discussion would have been welcome in a comparison.

I agree with David on this one. Google Scholar isn’t the only one counting citations and making the information available for free. While I agree with Phil that the reputation of the data becomes suspect when gaming occurs, I would say this affects Thomson Reuters right now more than anyone. At every single editorial board meeting my editors have (we have 33 journals) there is a conversation about IFs and how we can increase them to be more competitive with journals in our field (published mostly by Elsevier). The editors then lament about other journals they submit to or review for where the decision letters make it clear that authors should cite the journal. Overall, my editors don’t condone this behavior and they refuse to go down this path. That leaves them with one option: publish better papers. This is, of course, not a problem but will take forever. I wish I could help them by providing lists of papers that are highly cited from competing journals so they know which topics and authors to solicit, but I can’t justify the $30,000 subscription to TR to have access to this data. Users/readers should be able to rely on metrics from Google and Microsoft Academic and other sources, if they consider them in a composite view. Counting on just one metric is dangerous but taking them all into consideration should even things out a bit.

Google and other popular search engines have been dealing with the gaming problem since their inception. What applies to journals applies to web sites. I would be surprised and disappointed if Google Scholar did not apply some of the anti-gaming algorithms to journal searches.

On the other hand what these folks are describing is pure scientific fraud, namely posting a bunch of fake papers. I cannot see real researchers taking this risk just to generate citation numbers as it would quickly end one’s career and it requires a public display, but it is not Google’s job to catch fraud.

I am also surprised that they published their method. The cyber security folks do lots of research on hacking but I do not think they publish the methods. Or do we need a Journal of Cheats and Hacks? Is this a new tenure track?

I agree it’s fraud. And website developers have been posting fraudulent pages for years to get better ratings. The techniques and counter-techniques are well known to Google. Shame on Google if they let a fine application like Google Scholar get fooled. Maybe one branch of Google needs to talk to another?

I don’t think GS should spend millions of dollars developing fraud detection algorithms just because of this little stunt. There is no evidence that scholars are actually doing this kind of fraud and I doubt they are. Unfortunately I think this stunt has generated a false rumor that there is something wrong with GS citation counts because scholars are dishonest. This reflects badly on everyone.

Hi Phil,

As of today there is no Marco Alberto Pantani-Contador on Google Scholar (at least he does not show in the search results). Also, the authors were unfair to Google: they gave them 1 month (25 days) to index and only 17 days to remove. Who knows, maybe the docs were removed from Google after 25 days?

Google does a nice job allowing scientists to select their publications, and they would do even better if they allowed users to “report” or “flag” a publication or an author like Marco Alberto Pantani-Contador. Maybe it is in their plans, who knows?

I do not share your praise of using manual labour to index scientific documents, though:) I have a lot of examples in Computer Science where a conference takes place regularly and only some editions, not all of them, are indexed by ISI or Scopus. Or one year is indexed by ISI, another only by Scopus, another by both. Or the “extended proceedings” stored in some private digital library are indexed and the selection of the best papers is not. Basically, little has changed since Informatics Europe pointed out the shortcomings of such manual labour-based but non-transparent approaches.

http://informaticseurope.wordpress.com/2011/07/28/scopuss-view-of-computer-science-research/

http://www.informatics-europe.org/docs/research-eval.php

Cheers,

Alex

First of all, as one of the authors of the discussed paper, I would like to thank Phil for ‘unleashing’ the discussion we tried to provoke when we produced that experiment. It is interesting that this should happen now, as an English and Spanish version of the paper was deposited in May in our institutional repository (http://hdl.handle.net/10481/20469). This raises many questions regarding the impact of repositories and their analogy with journals, as the paper has only had a real impact now that it has been deposited in the arXiv, but I guess that is another matter to discuss elsewhere.

Regarding the discussion above, our intention was not to discredit GS, but to draw attention to the consequences and responsibility that creating tools such as GS Citations or GS Metrics may carry in a research evaluation context, and to the measures that need to be taken. Of course gaming citations cannot be stopped, and this has been proven many times with Thomson Reuters Web of Science. But that is no excuse not to develop the necessary measures to try to avoid it. One can game the Web of Science with citation cartels, etc., but in this case we wanted to stress how easy it was to do so.

Also, regarding the transparency of GS, free does not equal transparent, and in fact GS is not transparent at all! GS Metrics, for instance, does not allow us to see all the documents published by each journal, but only those contributing to its h-index. That is far from transparent…

On the comparison between TR Web of Science and GS, both have errors, that is undeniable. But not to the same extent. In GS Citations you can even find the same document in different co-authors’ profiles with different citation counts. These are serious errors, which call the quality of the product into question.

And finally, I would like to take the opportunity to tell the end of the story which is missing in the paper. This experiment was a diversion in both senses, but we would like very much to prepare something more serious:

– Marco Alberto Pantani Contador does not exist anymore, I am afraid. As Aliaksandr indicated, our creature has been erased from the web.

– Two days after we uploaded our manuscript to our institutional repository, most (but not all) of the affected researchers’ profiles were temporarily removed from the web along with our faked researcher. After a few days, though, they were made available again without the fake citations.

– However, an earlier faked paper, authored by me as our first test (it is not mentioned in the paper), still remained, directing approximately 30 citations to each member of our research group.

– Some months later (mid-August), these citations and the last traces of our experiment were finally deleted.

So GS did in fact correct its errors, as indicated above, but not transparently: we had no contact with them whatsoever, and it was ‘done behind closed doors’.

Nicolas, as I said in my first comment it may be acceptable, even expected, that a free computer generated product will have more errors than an expensive monitored product. It is like computer translation versus human translation.

My question is do you think it realistic to expect that real researchers will commit this kind of fraud enough to be of concern? If so then why tell them how to do it? If not then what is the point?

I hope not, it would be very unwise! And damned those who do it!

But I have some reservations with journals. The GS products have raised great expectations, as for the first time the ‘invisible impact’ (that outside of TR) is now visible. Non-English-speaking countries, and especially journals from the Social Sciences and Humanities, can now ‘be measured and ranked’, and these tools are embraced as research evaluation tools by journals and researchers without any thought. Of course, the launch of GS Citations and GS Metrics is good news, as they bring alternatives to the traditional journal indexes and especially the JCR.

But we believe that it is still too early to adopt these tools as valid. The Impact Factor and the JCR are still the main yardstick for research evaluation, and citations found in GS still have to be verified. A computer generated product such as this can have many errors, and this is expected, but when Google develops such tools its real intentions are to compete with TR and Elsevier, and for this they have to take many precautions, which they have not implemented yet. Surely they will; I am very optimistic on this.

I prefer not to guess at Google’s intentions. They may be satisfied with a good computer generated product. I would be.

There is actually a possibility to see all articles published by a journal in Google Scholar using their “advanced search” feature. E.g. this one would return all articles published by Springer’s International Journal of Information Security in 2009-2010. The numbers match with ISI!

http://scholar.google.it/scholar?as_q=&as_epq=&as_oq=&as_eq=&as_occt=any&as_sauthors=&as_publication=International+Journal+of+Information+Security+springer&as_ylo=2009&as_yhi=2010&btnG=&hl=en&as_sdt=0%2C5

Of course – this is a rather primitive and not convenient way of searching, one can do way better search – e.g., Microsoft Academic Search is already more convenient to use, but I would assume Google had different priorities with Scholar (at least so far). Finding articles published in a journal in a convenient manner can be added later – on top of the all-the-time-growing bunch of scholarly data they have…

I don’t understand the claim that this (a) would cost Google many millions to fix, and (b) is not in their interest to avoid. As mentioned in previous comments, Google is an expert at dealing with a much more prevalent, easier to execute (at least to the level described in the paper), and profitable form of cheating: search engine optimization. I would trust the tools they have developed to deal with this over any human-guided curating on the part of TR. Plus, unlike TR’s approach, this scales as academic publishing continues to grow.

This brings me to the more fundamental issue. The only reason we are using citations as a metric to begin with is (like too much in academia) an unfortunate historic accident. When publishing was young and small, this was the only way to carry on. Now that we have a large amount of network-wide data on publishing, why are we still sticking to these archaic ways? There is a reason why Google uses page-rank (plus some magic) instead of the number of incoming links (citations) for rating websites. Incoming links are inherently not a robust measure, and will always be easy to game. If not by these “obviously cheating” ways then by simply publishing lots of bad papers in poor journals to build up citations on your other papers… under pseudonyms if necessary.
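To make the contrast between counting incoming links and a PageRank-style score concrete, here is a minimal sketch on a toy citation graph. It uses the textbook power-iteration form of PageRank with a damping factor of 0.85, not whatever Google actually runs, and every node name is hypothetical. It also assumes the fake documents receive no citations of their own, which is a simplification.

```python
def in_degree(links):
    """Count raw incoming citations for every node in {source: [targets]}."""
    counts = {}
    for source, targets in links.items():
        counts.setdefault(source, 0)
        for target in targets:
            counts[target] = counts.get(target, 0) + 1
    return counts


def pagerank(links, damping=0.85, iterations=100):
    """Textbook power-iteration PageRank over {source: [targets]}."""
    nodes = set(links)
    for targets in links.values():
        nodes.update(targets)
    nodes = sorted(nodes)
    n = len(nodes)
    rank = {node: 1.0 / n for node in nodes}
    for _ in range(iterations):
        # Rank held by nodes that cite nothing is spread evenly over all nodes.
        dangling = sum(rank[node] for node in nodes if not links.get(node))
        base = (1.0 - damping) / n + damping * dangling / n
        new_rank = {node: base for node in nodes}
        for source, targets in links.items():
            if not targets:
                continue
            share = damping * rank[source] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank


# Toy graph (all names hypothetical): three uncited fake documents cite
# "gamed"; "real" is cited by two papers that are themselves cited.
citations = {
    "fake1": ["gamed"], "fake2": ["gamed"], "fake3": ["gamed"],
    "s1": ["p1"], "s2": ["p1"], "s3": ["p2"], "s4": ["p2"],
    "p1": ["real"], "p2": ["real"],
}

counts = in_degree(citations)
ranks = pagerank(citations)
print(counts["gamed"], counts["real"])
print(ranks["gamed"] < ranks["real"])
```

In this toy graph the gamed paper wins on raw citation count, while the PageRank-style score favours the paper whose citing documents are themselves cited. Whether that holds in practice depends on how well the fake documents can be tied into the rest of the graph.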

If we want to stick to the citation approach, then even there TR doesn’t have some magic sauce that Google lacks. Google already determines the journal that published your paper (and to make this more reliable, crowdsources it to researchers by allowing us to combine papers/edit info in our Scholar profiles). If they wanted to provide two citation counts, one from “official publications” and one from official publications + preprint/academic-website, then they already could. It would probably take a summer intern all of a few days to deploy, if such a tool was desired.

Artem: As an algorithm developer I see the issue of detecting faked articles as a difficult problem, hence probably costing several million to solve. This is very different from what Google’s search algorithms do. But maybe I am missing something. If you have a simple algorithmic solution in mind I would love to hear it and so might GS.

David, no magic solution is needed to correct problems like the ones we denounce in our working paper. It is as easy as allowing filtering by document type. This is a serious flaw for GS Citations and Metrics mainly because, contrary to what was stated before, we did not commit scientific fraud. In fact, we didn’t even enter the scholarly communication system. We did not try to trick anyone; we simply uploaded a bunch of papers, which included many references, to a website. What is wrong with this? Instead of inventing a false author, it could simply be a reference list intended for a class, or just a way to keep track of our publications.

The problem is that GS thought it was academic material and thus included it in our GS Citations profiles. However, as suggested before, maybe it is not GS’ role to detect what is real from what is not, but it must allow the user to detect it. Right now, Google can identify journal articles and preprints reasonably well, so why can’t I see which of the citations I receive come from journal articles and which don’t?

This lack of transparency can become a serious flaw and it can be fixed without spending many millions.

First of all, if a scholar did what you did and loaded a bunch of fake papers in order to boost their citations, it would certainly be fraud. That is why the title of this article says “gaming”. Beyond that, designing an algorithm that accurately distinguishes a review article with many references from a class reference list, etc., strikes me as very difficult. I recently developed an algorithm that estimates the grade level of scientific material and it was a big project. This is artificial intelligence, so not easy.

Is uploading PDFs to a website fraud? I don’t know. GS is ‘gamed’ because it considers every document in institutional domains as academic; that is the problem.

Regarding distinctions between document types, there is no need to go as far as distinguishing reference lists from reviews and so on; you only have to distinguish by source (journal articles, repository documents and miscellaneous, for instance). GS Metrics already detects articles published in journals, so no further development is needed. I insist, it is just a matter of making their products transparent.
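The source-level split proposed in this thread can be sketched in a few lines, assuming each citing document already carries a source label. The records, field names, and the three categories below are hypothetical; nothing here reflects how Google Scholar actually stores or exposes its data.

```python
from collections import Counter

# Hypothetical citing documents for one paper, each tagged with the kind of
# source it was found in (labels follow the three categories suggested above).
citing_documents = [
    {"title": "Citing article A", "source_type": "journal"},
    {"title": "Citing article B", "source_type": "journal"},
    {"title": "Working paper C", "source_type": "repository"},
    {"title": "Uploaded PDF D", "source_type": "miscellaneous"},
    {"title": "Uploaded PDF E", "source_type": "miscellaneous"},
]


def citation_counts_by_source(documents):
    """Return the journal-only count, the overall count, and a per-type breakdown."""
    by_type = Counter(doc["source_type"] for doc in documents)
    return {
        "journal_only": by_type["journal"],
        "all_sources": sum(by_type.values()),
        "by_type": dict(by_type),
    }


summary = citation_counts_by_source(citing_documents)
print(summary)
```

Reporting both numbers side by side would let a reader see how much of a citation count rests on journal articles and how much on material from unfiltered web space, without anyone having to judge whether a given document is fake.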