I sat in a session yesterday at a scientific meeting and listened to an enthusiast for open data paint rosy pictures of optimal workflows and “idealized data models” (I love that term), talking about unlocking the data hidden within studies and ossified print charts locked in PDFs in order to open up a new day of eScience, in which statistical models and computerized discovery could generate new insights into the world around us.

And as I listened to this, I kept thinking, “The most important insights in science seem to come from outsiders looking at commonly available data in new ways. The assumptions and biases people hold seem as important as the data. How do you account for that?”

If only we could have a reliable database of assumptions and intellectual biases as well.

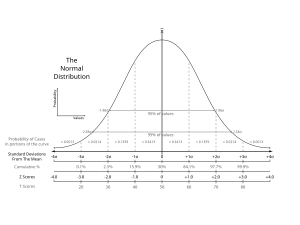

Statistics provides an important intersection of mathematics and assumptions, with interpretation a key variable that lies somewhat outside the realm of statistics. A recent article in Science News by Tom Siegfried breaks apart some of the reasons we should take a harder look at what we think scientific studies really say, especially when terms like “significance” are thrown around with false confidence.

In fact, “significance” is <ahem> a significant problem in the public understanding and portrayal of science. As Siegfried writes:

A new drug may be statistically better than an old drug, but for every thousand people you treat you might get just one or two additional cures — not clinically significant. Similarly, when studies claim that a chemical causes a “significantly increased risk of cancer,” they often mean that it is just statistically significant, possibly posing only a tiny absolute increase in risk.
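To make Siegfried's distinction concrete, here is a minimal sketch using a standard pooled two-proportion z-test. The cure rates (50.0% versus 50.2%, or two extra cures per thousand) and the trial sizes are invented for illustration, and the function name is my own. The point is only that the same tiny difference can go from unremarkable to "significant" once the trial is large enough.

```python
import math

def two_proportion_p_value(cures_a, n_a, cures_b, n_b):
    """Two-sided p-value for a difference in cure rates (pooled z-test)."""
    rate_a, rate_b = cures_a / n_a, cures_b / n_b
    pooled = (cures_a + cures_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_a - rate_b) / std_err
    # Two-sided tail area of the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))

# Hypothetical trials: the new drug always cures 2 more patients per 1,000.
for patients_per_arm in (1_000, 1_000_000):
    old_cures = patients_per_arm * 500 // 1000
    new_cures = patients_per_arm * 502 // 1000
    p = two_proportion_p_value(new_cures, patients_per_arm,
                               old_cures, patients_per_arm)
    print(f"{patients_per_arm:>9} per arm: p = {p:.4f}")
```

With a thousand patients per arm the difference is nowhere near the conventional 0.05 threshold; with a million per arm it clears it easily, even though the clinical benefit is exactly as small as before.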

It’s not surprising that laypeople or reporters misuse or misunderstand statistical terms, but do scientists themselves understand them? One of the slipperiest is the vaunted p-value. Invented by a mathematician measuring agricultural improvements from fertilizers, the p-value is the likelihood that results like those observed would turn up by fluke alone if no real effect existed, not, as some think, the chance that the hypothesis isn’t true. As Andrew Gelman writes in his excellent summary of the comment storm Siegfried’s article started in the relatively anemic statistics blogosphere:

A p-value is the probability of seeing something as extreme as was observed, if the model were true. It is not under any circumstances a measure of the probability that the model is true.
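Gelman's definition can be worked through with a coin. The sketch below is my own illustration, not anything from his post: it assumes the "model" is a fair coin and computes an exact two-sided p-value by adding up the probability of every outcome that is no more likely than the one observed. The function name and the example counts are hypothetical.

```python
from math import comb

def binomial_p_value(heads, flips, p_null=0.5):
    """Exact two-sided p-value under the null model of a coin with P(heads) = p_null.

    Sums the probability of every outcome that is at most as likely
    as the observed one, i.e. everything "as extreme" or more so.
    """
    def pmf(k):
        return comb(flips, k) * p_null**k * (1 - p_null) ** (flips - k)

    observed = pmf(heads)
    # Small tolerance so floating-point ties with the observed outcome count.
    return sum(pmf(k) for k in range(flips + 1)
               if pmf(k) <= observed * (1 + 1e-9))

# Hypothetical observation: 60 heads in 100 flips.
print(binomial_p_value(60, 100))
```

Whatever number comes out, it answers "how often would a fair coin do this?" It says nothing directly about how likely the coin is to be fair, which would also depend on how plausible a biased coin was to begin with.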

But aside from the potential for misinterpretation, the problem with p-values is that each one is achieved under very particular circumstances, yet we tend to want to generalize from studies the same way the law generalizes from Supreme Court decisions, as if a study creates intellectual precedent rather than simply ruling on a situation that’s unique and hard to replicate. As Alex Tabarrok writes on Marginal Revolution:

. . . the problems with the p-value is not so much that people misinterpret it but rather that the conditions for the p-value to mean what people think it means are really quite restrictive and difficult to achieve.

This fact becomes all the more glaring when studies are thrown together into a meta-analysis. As Siegfried writes:

Meta-analyses have produced many controversial conclusions. Common claims that antidepressants work no better than placebos, for example, are based on meta-analyses that do not conform to the criteria that would confer validity. Similar problems afflicted a 2007 meta-analysis, published in the New England Journal of Medicine, that attributed increased heart attack risk to the diabetes drug Avandia. Raw data from the combined trials showed that only 55 people in 10,000 had heart attacks when using Avandia, compared with 59 people per 10,000 in comparison groups. But after a series of statistical manipulations, Avandia appeared to confer an increased risk.

In principle, a proper statistical analysis can suggest an actual risk even though the raw numbers show a benefit. But in this case the criteria justifying such statistical manipulations were not met. In some of the trials, Avandia was given along with other drugs. Sometimes the non-Avandia group got placebo pills, while in other trials that group received another drug. And there were no common definitions.

“Across the trials, there was no standard method for identifying or validating outcomes; events . . . may have been missed or misclassified,” Bruce Psaty and Curt Furberg wrote in an editorial accompanying the New England Journal report. “A few events either way might have changed the findings.” [boldface mine]
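Siegfried's remark that a proper analysis can, in principle, find a risk where the raw totals show a benefit is easier to see with numbers. The two trials below are entirely invented (they are not the Avandia data) and are rigged so that one trial enrolls low-risk patients and puts most of them on the drug, while the other enrolls high-risk patients and puts most of them in the comparison group. This is a rough sketch of the arithmetic only.

```python
# Hypothetical trials: (heart attacks, patients) for each arm.
trials = {
    "low_risk_trial": {"drug": (32, 8000), "control": (6, 2000)},
    "high_risk_trial": {"drug": (24, 2000), "control": (80, 8000)},
}

def rate(events, patients):
    """Events per patient within a single trial arm."""
    return events / patients

def pooled_rate(arm):
    """Raw rate for one arm after lumping all trials together."""
    events = sum(trial[arm][0] for trial in trials.values())
    patients = sum(trial[arm][1] for trial in trials.values())
    return events / patients

for name, trial in trials.items():
    print(f"{name}: drug {rate(*trial['drug']):.2%}, "
          f"control {rate(*trial['control']):.2%}")

print(f"pooled: drug {pooled_rate('drug'):.2%}, "
      f"control {pooled_rate('control'):.2%}")
```

Within each invented trial the drug group fares worse, yet the lumped totals make the drug look better, simply because the arms are unevenly sized across populations with different baseline risk. Adjusting for that is legitimate only when the trials are comparable in the first place, which is exactly the condition Siegfried says was not met.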

The ways in which statistics blend math and assumptions, especially in fields in which indirect observations are often portrayed as direct observations, present high hurdles for dreams of useful open data. And if we’re only probing the data and not factoring in the assumptions that generated them or the uniqueness of the conditions under which the data were generated, we are going to be misled.
