The map of science, as measured by the flow of manuscripts, is an efficient and highly structured network, a new study reports. Three-quarters of articles are published on their first submission attempt; the rest cascade predictably to journals with marginally lower impact factors. On average, articles that were rejected by another journal tend to perform better, in terms of citation impact, than articles published on their first submission attempt.

The article, “Flows of Research Manuscripts Among Scientific Journals Reveal Hidden Submission Patterns,” was published online last Thursday in the journal Science by French ecologist Vincent Calcagno and others.

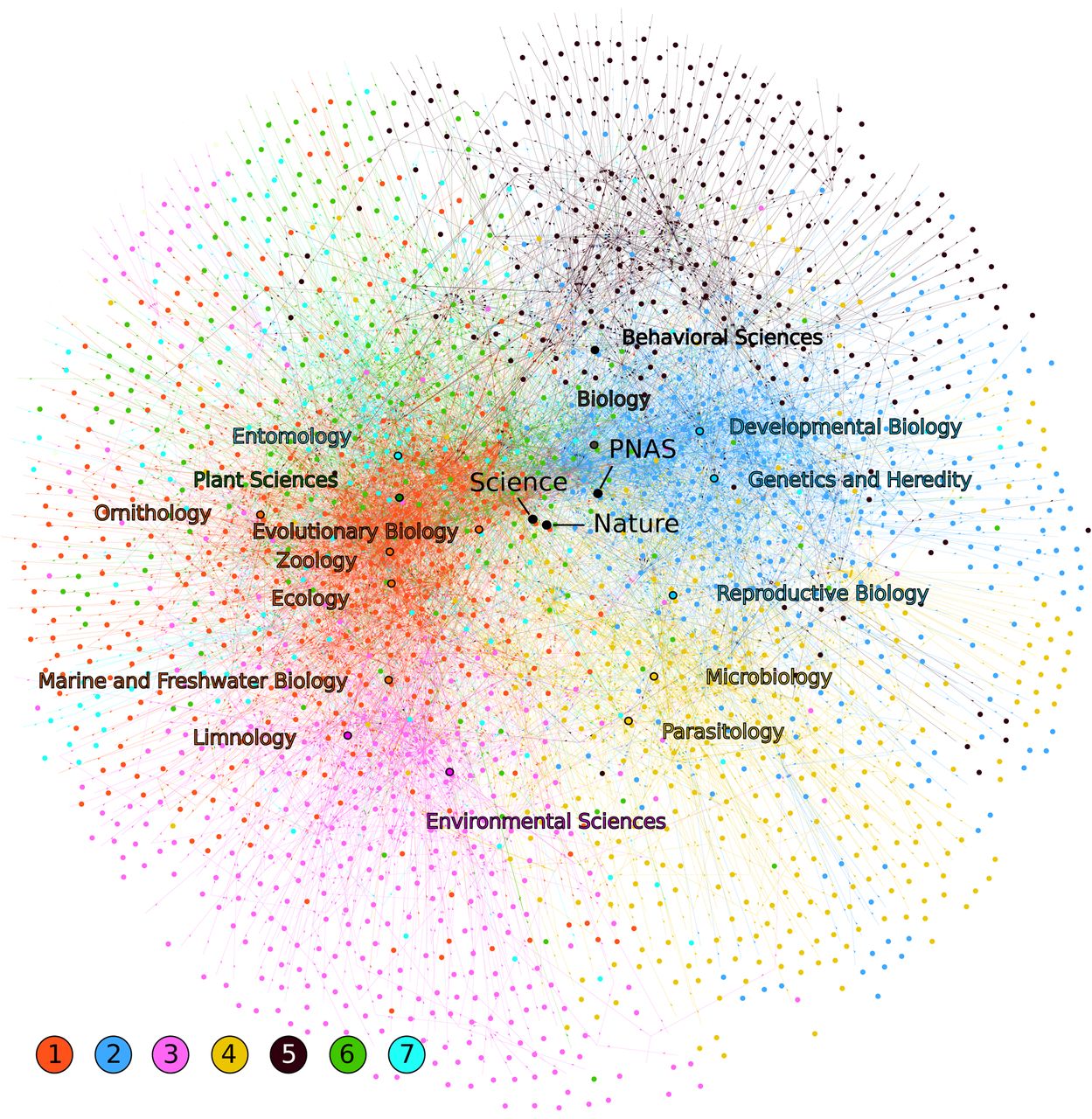

Calcagno’s approach to studying the journal system is entirely novel. Starting with articles that were published between 2006 and 2008 in 16 fields of biology (encompassing 923 scientific journals), the researchers surveyed corresponding authors on whether their article was rejected by another journal prior to being accepted. Retrieving the names of the prior journals in the submission chain allowed the researchers to create a huge network map showing the directional flow of manuscripts through the journal system. Not surprisingly, Science and Nature form the center of that map.

The researchers requested only the preceding journal in the chain of rejection, so it was impossible to calculate the average number of times an article was rejected before ultimately finding publication. Starting from published articles also precludes the researchers from studying submissions that were ultimately abandoned after serial rejection. Still, they report that 75% of survey respondents indicated that their articles were published on their first submission attempt. Based on the math, average submission pathways are likely to be short, indicating that the journal system as a whole is working efficiently. When rejection did happen, authors selected journals with marginally lower impact factors, with few exceptions. Taken together, these findings imply that authors generally target the appropriate outlet for their submissions and are risk-averse when it comes to resubmission.
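To make "based on the math" concrete, here is a back-of-the-envelope sketch. It rests on an assumption the study does not make: that every submission of an eventually published paper is accepted independently with the same probability, so the number of attempts follows a geometric distribution. The function names are hypothetical and the only figure taken from the study is the 75% first-attempt rate.

```python
def expected_submissions(first_try_rate: float) -> float:
    """Mean number of submissions per published paper under a geometric model.

    Assumption (not from the study): each attempt is accepted independently
    with probability `first_try_rate`.
    """
    if not 0.0 < first_try_rate <= 1.0:
        raise ValueError("first_try_rate must be in (0, 1]")
    return 1.0 / first_try_rate


def share_published_within(first_try_rate: float, attempts: int) -> float:
    """Fraction of published papers accepted within `attempts` submissions."""
    if attempts < 0:
        raise ValueError("attempts must be non-negative")
    return 1.0 - (1.0 - first_try_rate) ** attempts


if __name__ == "__main__":
    p = 0.75  # share of respondents published on their first attempt
    print(f"mean submissions per paper: {expected_submissions(p):.2f}")
    for k in (1, 2, 3):
        print(f"published within {k} attempt(s): {share_published_within(p, k):.1%}")
```

Under this simplified model the mean pathway is about 1.33 submissions per published paper. If acceptance odds fall after a first rejection, or if some authors resubmit many times, the true mean would be longer.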

While resubmission costs authors time and effort, it also comes with real benefits. Articles previously rejected by another journal received significantly more citations than articles published on their first submission attempt. Calcagno interprets this finding as evidence that the peer-review process is doing its job. Indeed, two large surveys on peer review, by Sense about Science (2009) and Mark Ware/PRC (2007), both indicate that scientists overwhelmingly agree that the peer-review process improves the quality of their work. Proponents of the publish-first-review-later model argue that it is better to produce more publications than improve the quality of one’s work.

James Evans, a University of Chicago sociologist of science, suggests an additional explanation for the improved citation impact findings. As quoted in The Scientist:

“Papers that are more likely to contend against the status quo are more likely to find an opponent in the review system”—and thus be rejected—“but those papers are also more likely to have an impact on people across the system,” earning them more citations when finally published.

Scientists cite the work of others for various reasons, not all of which are considered valid. In contrast, the decision of where to submit one’s manuscript (and, if rejected, where to resubmit) is based on careful and deliberate decision-making. Within any field, researchers are keenly aware of the pecking order of journals. Publication, after all, makes or breaks careers.

Calcagno proposes using his analytic measures to develop a new journal ranking index based on the flow of manuscripts. Such an index, he argues, would more closely reflect authors’ perceptions of journal quality. Yet their findings suggest that collective submission choices essentially explain the same underlying phenomenon as collective citation behavior, so it is not clear that such a new index would provide any additional information. Authors may be making blind submission choices based entirely on the impact factor of the journal. To me, this study suggests that the impact factor is a reliable indicator of the pecking order of scientific journals.

Discussion

27 Thoughts on "Mapping the Flow of Rejected Manuscripts"

Hi Phil,

Very interesting study but I wonder about the quality of the data. That said, I haven’t yet read the study.

The fact that three quarters of the papers were accepted on the first try concerns me. I wonder if there was a significant response bias in his survey data, with people getting rejected on their first try being less likely to respond. Top journals tend to have acceptance rates ~ 10% and, at least in the fields I am associated with, good journals usually accept under 50%. Obviously there are a lot of variables such as the quality of the submissions but a 75% acceptance rate on the first try sounds very high to me. Any thoughts?

The authors discuss potential bias in their paper. They conclude that the effect size is large enough that it could not be an artifact of response bias. I imagine one reason why the acceptance rate is so high is that they are only observing those articles that were published. If they also observed manuscripts that were abandoned (i.e. the author gives up trying to get published or the paper gets superseded by a new manuscript), the rate would be lower.

But this implies that a great many papers are never published which seems a problem in itself and is contrary to conventional wisdom. Perhaps they go elsewhere. These findings are odd but interesting.

David, also remember that the researchers start with articles indexed in Web of Science, which indexes just about a half of the total number of journals. This may also explain the high acceptance rate.

Phil, if you mean that many of the rejected papers get published in the other half of the journals then that is part of my suggestion. Some may also get published in generic megajournals or in other fields, especially if they are interdisciplinary. Call it the case of the missing rejected papers. If 75% were never rejected but the rejection rate is over 50% then something is up.

On the other hand maybe their responses are skewed. What is their response rate across all articles published in their chosen population?

Their response rate was 37%. I’ve sent you a copy of their paper and supplemental materials.

The main contribution of this paper is not about acceptance rates in biology journals, which are well documented, but about the flow of manuscripts between journals, which has not yet been documented, at least not publicly and systematically.

Thanks Phil, I will read it with interest. But the fact remains that there is something strange going on if their results are correct. Try building a model with, say, a 50% rejection rate where 75% of papers are never rejected. The only way is if a lot of rejected papers leave the system. If they are never published then this might support the popular hypothesis that peer review kills radical science. I am not claiming this, just pointing it out.

On the data side it is possible that a lot of respondents lied because being rejected is potentially damaging information.

There are three reasons why their general reported acceptance rate may be higher than industry standards for biology journals:

1. Their target population is authors of published articles. They are unable to identify articles that were rejected but never eventually published. This creates an upward bias on their acceptance rate.

2. Their target population is selected from Thomson-Reuters’ Web of Science. They are unable to identify articles that started their rejection cascade from WoS journals and eventually published in non-WoS-indexed journals. Again, this creates an upward bias on their acceptance rate. Identifying their target population from BIOSIS, say, would have reduced this bias, but the researchers wanted to analyze citation rates as well, hence, they focused on the WoS dataset.

3. Non-response bias. Authors who were published upon first submission attempt may be more likely to respond.

Also my model statement is incorrect. The model works if many rejected papers are rejected many times. Surprising results are the best results.
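The arithmetic behind this exchange can be sketched in a few lines. The sketch assumes, purely for illustration, a closed system in which acceptance rates are pooled across all journals (so the pooled rate is acceptances divided by total submissions) and uses the 50% and 75% figures from the comments above. The function names and the abandonment parameters are hypothetical, not taken from the paper.

```python
def pooled_acceptance_rate(first_try_share: float,
                           mean_attempts_if_rejected: float,
                           abandoned_per_published: float = 0.0,
                           mean_attempts_if_abandoned: float = 1.0) -> float:
    """Acceptances divided by total submissions, counted per published paper.

    first_try_share: fraction of published papers accepted on the first attempt.
    mean_attempts_if_rejected: mean submissions for published papers that were
        rejected at least once (includes the final, successful attempt).
    abandoned_per_published: never-published papers per published paper.
    mean_attempts_if_abandoned: mean submissions made before giving up.
    """
    submissions = (first_try_share * 1.0
                   + (1.0 - first_try_share) * mean_attempts_if_rejected
                   + abandoned_per_published * mean_attempts_if_abandoned)
    return 1.0 / submissions


def attempts_needed(first_try_share: float, target_rate: float) -> float:
    """Mean attempts by once-rejected papers that gives `target_rate`
    when no paper is ever abandoned."""
    total_submissions = 1.0 / target_rate
    return (total_submissions - first_try_share) / (1.0 - first_try_share)


if __name__ == "__main__":
    # No abandonment: how persistent must the rejected quarter be?
    print(attempts_needed(first_try_share=0.75, target_rate=0.50))
    # With abandonment: 3 abandoned papers per 8 published, 2 attempts each.
    print(pooled_acceptance_rate(0.75, 2.0, abandoned_per_published=0.375,
                                 mean_attempts_if_abandoned=2.0))
```

With no abandoned papers, a pooled 50% acceptance rate and a 75% first-attempt share are compatible only if the rejected quarter averages five submissions each. Allowing some papers to leave the system brings that number down, which is the trade-off the two comments describe.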

While the majority of articles may have short publication pathways, perverse incentives (such as a policy that rewards authors financially with cash bonuses) would lead to very long pathways for some authors. If the authors had asked for the full publication pathway, instead of just the last rejecting journal, they’d be able to calculate average path length and see if it differs by field or by country of corresponding author.

One other possible factor might be that some authors tend to publish in the same journals, and/or their papers are less likely to be rejected by the journals. In other words, authors who have published in a journal before or are known to the editors (i.e., are “known entities” in some way) might have a higher-than-average acceptance rate. And this set of authors may have been more likely to respond, causing a large upward bias.

Could this be a possible cause of bias?

I’ve been researching trends in manuscript submission acceptance/rejection rates over the last couple months and also find the 75% acceptance rate highly questionable. The most reliable data I found came from the Thomson Reuters study of ScholarOne submissions (http://scholarone.com/media/pdf/GlobalPublishing_WP.pdf). In looking at over a million submissions from over 4,200 journals, the average acceptance rate was 37.7%. This was from data actually pulled from the system.

The study didn’t break down the data by subject area, but no country achieved more than a 51% acceptance rate. Peer review may be doing its job of improving the papers, but I believe it’s taking more cycles than this study indicates.

Keith, I think you are confusing acceptance rates of journals (which include articles that never reach publication, or reach publication in a journal that is not indexed in Web of Science) with acceptance rates of manuscripts that have been published in a WoS-indexed journal. There might also be some response bias that leads to an upwardly biased estimate. The main contribution of this article is not about acceptance rates, which, as you note, are much better measured through other means, but about the trajectory of manuscripts through the journal network.

The study I referenced isn’t from Web of Science, but rather ScholarOne Manuscripts (owned by Thomson Reuters). Many of the journals using ScholarOne for peer review aren’t indexed in the Web of Science. I do think you are correct about some bias leading to an increase in the estimates.

Keith, thanks for pointing me to this report. I hadn’t seen it before. You’re not the only one who is equating manuscript acceptance rate with journal rejection rate. I think the authors could have made it clear that they are not the same thing since it seems to be distracting readers from their main results.

Phil, I do not understand the significance of the distinction you are making, as it relates to this issue. Nor do I think I am distracted. They have a central finding that does not seem to make sense and that is always interesting. How people choose the last journal they submit to is less interesting to me, but that is just me.

It also depends on what authors considered as rejection. Is getting a paper sent back by the editor as being “out of scope”, not interesting enough or for other ‘unscientific’ reasons a rejection? In my opinion, actually no. To me a rejection is when it goes through peer review and gets rejected on scientific merit. I imagine most of the rejections of journals that eventually only publish ~10% of submissions are of the former type. This misunderstanding can substantially skew the data.

“Articles previously rejected by another journal received significantly more citations than articles published on their first submission attempt.”

Statistically significant, but the effect is tiny: https://twitter.com/joe_pickrell/status/256756126140477442

The authors could only pull this out because they had a data set of tens of thousands of papers. There is almost no predictive power.

I agree. I looked at the supplementary files in more detail and I find their method of comparing citation performance somewhat confusing. I would have preferred to see an ANOVA with logCitations as the dependent variable, Resubmitted as a dummy (indicator) variable, and journal as a random effect. This would allow them to estimate the citation effect, calculate its percent difference and provide a confidence interval of the estimate.
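A rough sketch of that kind of analysis follows. To stay within the Python standard library it swaps in a within-journal (fixed-effects) estimator rather than the random-effects ANOVA proposed above: log citations and the resubmission dummy are centred on their journal means and a single slope is fitted. The data are synthetic, the 0.05 effect is an arbitrary illustrative value, and all names are hypothetical; nothing here reproduces the study's data or results.

```python
import math
import random
from collections import defaultdict


def within_journal_effect(rows):
    """Estimate the effect of `resubmitted` on log citations.

    rows: iterable of (journal, resubmitted, log_citations), resubmitted in {0, 1}.
    Both variables are centred on their journal means, then one OLS slope is
    fitted. Returns (slope, standard_error).
    """
    by_journal = defaultdict(list)
    for journal, resubmitted, log_cites in rows:
        by_journal[journal].append((resubmitted, log_cites))

    xs, ys = [], []
    for papers in by_journal.values():
        mean_x = sum(x for x, _ in papers) / len(papers)
        mean_y = sum(y for _, y in papers) / len(papers)
        xs.extend(x - mean_x for x, _ in papers)
        ys.extend(y - mean_y for _, y in papers)

    sxx = sum(x * x for x in xs)
    slope = sum(x * y for x, y in zip(xs, ys)) / sxx
    rss = sum((y - slope * x) ** 2 for x, y in zip(xs, ys))
    dof = len(xs) - len(by_journal) - 1  # one mean per journal plus the slope
    return slope, math.sqrt(rss / dof / sxx)


def simulate(n_journals=50, papers_per_journal=200, true_effect=0.05, seed=1):
    """Synthetic data only: journal baselines differ, resubmission adds `true_effect`."""
    rng = random.Random(seed)
    rows = []
    for journal in range(n_journals):
        baseline = rng.gauss(2.0, 0.8)  # journal-level mean of log citations
        for _ in range(papers_per_journal):
            resub = 1 if rng.random() < 0.25 else 0
            noise = rng.gauss(0.0, 1.0)
            rows.append((journal, resub, baseline + true_effect * resub + noise))
    return rows


if __name__ == "__main__":
    slope, se = within_journal_effect(simulate())
    low, high = slope - 1.96 * se, slope + 1.96 * se
    print(f"effect on log citations: {slope:.3f} (95% CI {low:.3f} to {high:.3f})")
    print(f"percent difference: {100 * math.expm1(slope):.1f}% "
          f"({100 * math.expm1(low):.1f}% to {100 * math.expm1(high):.1f}%)")
```

Because the outcome is on a log scale, `expm1` converts the slope and its interval into a percent difference in citations. A mixed-effects package would be the closer match to the suggestion above; the within-journal version is used here only because it needs no third-party libraries.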

Responding to criticism, the authors present more details (and a new figure) on the citation advantage of articles previously rejected by other titles. See:

http://vcalcagnoresearch.wordpress.com/2012/10/23/the-benefits-of-rejection-continued/

I think your characterization of those who prefer the publish-first-and-review-later model is somewhat unfair. At least the people I know who argue for this position are not out to trade quality for speed of publication. Rather, they want to increase dissemination and allow open peer review to provide feedback from a wider range of scholars. That feedback can be used to revise the original article, which will be a dynamic, not static, piece of research, revised every time the author receives feedback that seems to justify some important revision.

For those interested in knowing more about the impact of pre-publication history on post-publication citation count, and effect sizes, I have posted more data and explanations on my research website at

http://vcalcagnoresearch.wordpress.com/2012/10/23/the-benefits-of-rejection-continued/

Best, Vincent (and coauthors)