How much of the literature goes uncited? It seems like a simple enough question that deserves a straightforward answer. In reality, this is one of the hardest questions to answer, and the most appropriate response is “it depends.”

A citation is a directional link made from one paper to another. In order to count that event, that link must be observed. And while counting a citation confirms that an event took place, not observing a citation does not confirm that it didn’t.

To use an analogy, if I observe a tree falling in the forest, I can confirm that it indeed took place. However, trees fall all the time in different parts of the forest, and if I wasn’t there to view the event (or the aftermath), I cannot confirm that they fell. When I report on how many fallen trees I observed, the context is just as important as the observation.

In a 1991 piece in the journal Science, science journalist David Hamilton reported on a study undertaken at ISI that quantified uncitedness in its citation indexes. Ninety-eight percent of Arts & Humanities papers remained uncited, according to ISI’s data, with the Social Sciences not far behind at 74.7%. The intended message was that much of the sciences doesn’t matter, and the humanities barely matter at all. The article attracted stern responses from Eugene Garfield and David Pendlebury (both from ISI), who argued that Hamilton’s piece lacked the context of the analysis: the percentage of uncited articles included all types of items indexed by ISI, not just research articles. Remove letters, editorials, obituaries, corrections, news, and other ephemera, and the percentage of uncited articles drops like a stone. Moreover, citations were counted just four years after publication and were extracted from the ISI index, which at that time indexed just 10% of the scientific journal literature.

If the researchers waited 10 years instead of four, based their analysis on a more comprehensive index, focused just on research articles, and included citations from books—the source of many citations in the humanities—we would imagine that the results would come out very differently.
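To make the point concrete, here is a minimal sketch of how the same set of papers can yield very different uncited percentages depending on the citation window, the document types counted, and the citing sources included. Everything in it is an assumption made for illustration: the `Item` and `Citation` records, the `uncited_share` function, and the toy data are invented and do not reproduce ISI’s data or method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    item_id: str
    doc_type: str  # e.g. "article", "letter", "editorial"
    pub_year: int


@dataclass(frozen=True)
class Citation:
    cited_id: str
    citing_year: int
    source: str  # "journal" or "book"


def uncited_share(items, citations, window_years, doc_types=None, sources=("journal",)):
    """Fraction of items with no observed citation under the chosen context."""
    # The denominator depends on which document types we decide to count.
    pool = [i for i in items if doc_types is None or i.doc_type in doc_types]
    if not pool:
        raise ValueError("no items match the chosen document types")
    pub_year = {i.item_id: i.pub_year for i in pool}

    cited = set()
    for c in citations:
        year = pub_year.get(c.cited_id)
        # Skip citations to items outside the pool or from unindexed sources.
        if year is None or c.source not in sources:
            continue
        # Only citations inside the observation window are "observed".
        if year <= c.citing_year <= year + window_years:
            cited.add(c.cited_id)
    return 1 - len(cited) / len(pool)


# Hypothetical toy data: four research articles and three pieces of ephemera.
items = [
    Item("a1", "article", 1984),
    Item("a2", "article", 1984),
    Item("a3", "article", 1984),
    Item("a4", "article", 1984),
    Item("l1", "letter", 1984),
    Item("e1", "editorial", 1984),
    Item("o1", "obituary", 1984),
]
citations = [
    Citation("a1", 1986, "journal"),  # early journal citation
    Citation("a2", 1992, "journal"),  # arrives after a four-year window closes
    Citation("a3", 1987, "book"),     # invisible to a journal-only index
]

# Narrow context: every item type, four-year window, journal citations only.
narrow = uncited_share(items, citations, window_years=4)
# Broad context: research articles only, ten-year window, books included.
broad = uncited_share(
    items, citations, window_years=10,
    doc_types={"article"}, sources={"journal", "book"},
)
print(f"narrow: {narrow:.0%}, broad: {broad:.0%}")  # narrow: 86%, broad: 25%
```

The papers never change between the two calls; only the observation period and the universe of observation do.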

Garfield also argued that uncited literature is not necessarily a bad thing. Much of science progresses by making incremental improvements and advances over older studies. Reference lists are not supposed to be historical chronologies of everything that preceded a paper, but carefully selected lists of relevant sources.

There are other explanations for uncitedness.

Citation errors. Authors misspell journal names or include errors in the volume or page numbers. While DOIs and disambiguation software at the indexing stage can help correct well-intentioned mistakes, they still happen. Errors essentially prevent a directional link from being made from the citing article to the cited article, which means that the citation cannot be counted. Or, stated more directly, counting assumes good metadata.

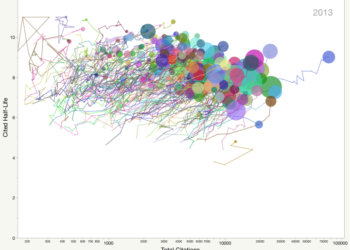
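As a rough illustration of the citation-error point, the sketch below resolves a reference against a tiny index, first by DOI and then by an exact match on journal, volume, and first page. The index contents, the DOI, and the `resolve` helper are all hypothetical; real indexing services use far more forgiving matching than this.

```python
# Hypothetical index of known papers, keyed by (journal, volume, first page).
index = {
    ("journal of examples", "12", "345"): "paper-001",
}
# Hypothetical DOI lookup for the same paper.
doi_index = {
    "10.0000/example.001": "paper-001",
}


def normalize(text):
    """Lowercase and collapse whitespace so trivial differences still match."""
    return " ".join(text.lower().split())


def resolve(ref):
    """Return the cited paper's ID, or None if no link can be made."""
    doi = ref.get("doi")
    if doi and doi.lower() in doi_index:
        return doi_index[doi.lower()]
    key = (normalize(ref["journal"]), ref["volume"].strip(), ref["page"].strip())
    return index.get(key)


good = {"journal": "Journal of  Examples", "volume": "12", "page": "345"}
typo = {"journal": "Journal of Examples", "volume": "21", "page": "345"}
typo_with_doi = dict(typo, doi="10.0000/example.001")

print(resolve(good))           # paper-001
print(resolve(typo))           # None: the citation exists but is never counted
print(resolve(typo_with_doi))  # paper-001: the DOI rescues the link
```

A transposed volume number is enough to turn a real citation into an unobserved one.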

Citation takes time. An article may wait years for its first citation. Referred to as “Sleeping Beauties,” some papers go unnoticed for decades until they are awakened by a citation event (the kiss from a handsome prince), after which they attract a lot of attention. Generally speaking, the longer a paper waits to be cited, the less likely it is to be cited. Conversely, the earlier a paper is cited, the more likely it is to be cited again. Citations beget citations.

Citation is limited by the universe of indexed papers. Thomson Reuters’ datasets follow a policy to index the “core” literature, meaning a smaller collection of elite journals. Scopus, in comparison, is based on a much broader selection of journals. And Google Scholar, as mentioned in a recent post, has a much broader definition of a scholarly document. All three indexes provide different citation counts.

How much of the literature goes uncited? Answering such a question ultimately depends on providing context to the observation period and the universe of observation.

Fortunately, such an approach can also be extended to questions such as, “How much of a library’s collection never circulates?” and “What percentage of manuscripts are never published?”

The answer to all of these is, “It depends.”

Discussion

11 Thoughts on "How Much of the Literature Goes Uncited?"

Absolute numbers don’t matter too much, but comparisons between specialities, journals, authors and even countries do. Being from Spain and having a shocking name, I know about it (one Finnish colleague told me when I met her: “it’s funny to know that you really exist”).

http://www.uned.es/dpto_log/jpzb/jesuszamora_inv.html

I puzzled for a while over why they “dropped like a stone” (paragraph 4), until I realized you meant obits, editorials, etc. were removed as “things being cited,” not “places being counted for citation purposes.”

As an editor, I consider every zero cite to be a failure. This isn’t as bad as it sounds: you have to swing the bat to get a hit. Now, if only I could find a way to identify the zero cites articles in advance!

Has it not occurred to you that industry does not cite, undergrad students don’t cite, citations in conference papers and non-indexed journals don’t get counted, etc.? How can you be sure zero cite papers are failures and don’t have any impact? Does getting cited as a blurb in a review article make one paper much better than one with zero cites? Shouldn’t papers be published based on their content and quality, not how many citations they will bring? Would you rather publish controversial or incorrect garbage that gets cited many times than well done papers on narrow subjects? Much to reflect on in your role as editor.

If an article were discovered because of its inclusion in an anthology and were cited as part of the book rather than the original journal where it first appeared, would it be counted if the study included only citations to journals?

A piece of work may have significant impact because it influences the practice of some subject or discipline, for example in many branches of medicine – yet not get cited. As an Editor of a research based Journal I also see ‘zero cites’ as relative failure, but colleagues who edit practice based journals might not have such a view.

So how to measure usage? Much harder . . . .

Certainly cites should not be seen as “the be all, and end all”!

Thanks for the interesting article. One should mention that Garfield already published about Uncitedness before, see the article Uncitedness III – The importance of not being cited. In: Current Contents 8, Feb 1973, p. 5-6. Relevant to your question one should distinguish at least two types of uncitedness:

First, a publication is not cited because it is irrelevant, and second, it is not cited because it was forgotten or never found. I think the second category also originates from the fact that most researchers don’t do a proper literature review but stick to the journals, colleagues, and indexes they already know. The German information scientist Walther Umstätter suggested another source of uncitedness: a publication can go uncited on purpose. For instance, an author, journal, or institution may look too doubtful to someone, or someone does not want to cite specific sources. Sometimes the actual content of a publication does not matter but it’s the form that influences whether it is cited or not.

Your final question “How much of a library’s collection never circulates?” could be answered at least more easily than citation counts because libraries should know exactly whether a book is on loan or not. I asked this question at libraries.stackexchange for further discussion.

Using the Smithsonian/NASA Astrophysics Data System (ads.harvard.edu)

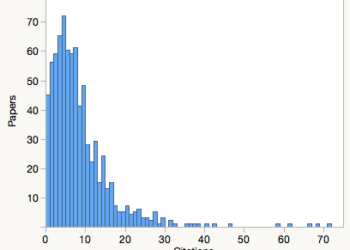

Taking today’s citation counts for each source’s entire 2007 output:

The Astrophysical Journal (a 1st tier journal) <~ 1% were not cited

Astrophysics and Space Science (a 2nd tier journal) ~15% were not cited

Astronomical Soc. of the Pacific Conf Ser (a 1st tier conference series) ~50% were not cited

Bulletin of the Am. Astronomical Soc. (abstracts only) ~91% were not cited

I removed all non-papers (errata, editorials, …), so these numbers are reasonably accurate, but clearly one can get almost any desired result by properly choosing the definition of a scholarly publication.