Greetings from the blue heart of a blue state, where the daily protests rage. To give you a sense of the local post-election atmosphere, our schools and child-oriented businesses felt the need to send around emails to parents on how to talk to children about recent events, essentially recycling the same messages they used after the Sandy Hook shooting and 9/11.

The Scholarly Kitchen is not a political blog, but we are all affected by political events (see Brexit as an example). There’s almost no way to predict the impact we’ll see on scholarly publishing, as a minority of US voters has elected a completely unpredictable President. Very few forthcoming policies have been discussed and many of those that have are already being walked back. This unpredictable nature is at the heart of the anxiety that many of us feel.

Maslow’s Hammer tells us that “it is tempting, if the only tool you have is a hammer, to treat everything as if it were a nail,” and I’m sorry to say that this is the best I can offer you today: a look at two post-election analyses that I think relate directly to scholarly publishing.

The first is the role of social media in the election, or more directly, that of Facebook and the impact of how it curates content for users. Mark Zuckerberg insists that the fake news stories so prevalent on Facebook did not influence anyone’s vote:

> Personally I think the idea that fake news on Facebook, which is a very small amount of the content, influenced the election in any way — I think is a pretty crazy idea. Voters make decisions based on their lived experience.

Zuckerberg has offered no evidence to support his claims, while others have shown how prevalent even a small percentage of fake stories can become. Regardless, this seems to put Facebook in a position of arguing against the pitch it uses to lure advertisers. If the site has no influence on its users, then why waste your advertising dollars? I’d argue that the problem caused by Facebook and social media in general is less the fake news stories and more the isolation they cause, the filter bubble created as they expose users to just the information that confirms their previously held beliefs. The great promise of social media is that no matter how weird you are, you can find community with like-minded individuals and see that you are not alone. The great flaw of social media is that once you find that community, it tends to insulate you from the rest of the world.

Facebook’s struggles with the truth are the result of a move from curation based on human judgement to the use of algorithms, a shift that has led to the spread of a great deal of misinformation. Rather than considering the role for human judgement in editorial decisions, Tim O’Reilly suggests doubling down on algorithms, arguing that all of Facebook’s problems can be solved through the creation of a magical program that can separate truth from lies. Exhibiting the worst of Silicon Valley’s “there’s an app for that” myopia, he suggests that a stronger curation effort is not the answer and that Facebook should instead emulate Google’s process for ranking search results.

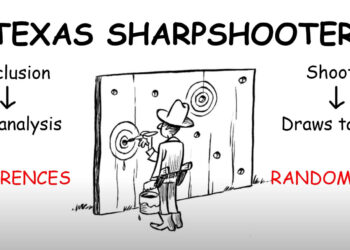

The problem with this sort of thinking is that Google is a poor arbiter of the truth. Perform a search for “vaccines cause autism” (and please sign out of Google and/or use private browsing mode so Google doesn’t feed you results meant to match your previous reading behavior). Three of the top six results I see offer “undeniable scientific proof” that the vaccine/autism link is factually true. Try a search on “Hillary Clinton killed Vince Foster” and the percentage of reputable sources drops precipitously.

Google’s rankings are based primarily on popularity, not accuracy. Your site gets a higher ranking when others link to it, not when what you say is shown to be true. Google is apparently so aware of this that they hired a team of human medical curators to sort out the nonsense, doing just the opposite of what O’Reilly’s faith in automated algorithms would suggest.

Further, Google’s business is not built around offering the best, most accurate search results to users. Google is an advertising company, and their customers are the advertisers. Their efforts center around meeting the needs of advertisers, not truth seekers. Paid placement, the favoring of results from Google’s other owned services, and the ever-dwindling space given to actual results offer clear evidence of more holes in O’Reilly’s argument.

As publishers and editors, curation is at the heart of what we do. Our books and journals are valueless unless they contain accurate information. For this, we rely on expert opinion, through the advice offered by qualified peer reviewers and, ultimately, an editor’s experienced worldview. These decisions are informed opinions based on human judgement, and that can be difficult to accept for some members of the research community, which generally favors quantitative results over qualitative ones. But the questions “is this work any good?” or even “is this work methodologically sound?” are qualitative questions, and at best, quantitative methods can only offer an approximation of the opinion we are seeking. That’s one of the main reasons the Impact Factor is so flawed a tool, and also why attempts to replace it with similarly flawed tools are no better.

With this latest evidence of the effect that spreading misinformation can have on society, I would argue the opposite of O’Reilly. This is no time to abandon our responsibility for curating the world’s research in hopes that a computational solution will somehow make these difficult decisions for us. We must carefully consider the societal effects as we experiment with new business models that focus on serving the needs of authors rather than readers, and as we pare down the review process and the requirements for publication in order to increase speed and decrease costs.

The other piece of post-election analysis that has stayed with me comes from novelist and screenwriter Jesse Andrews. There’s no one reason why the election went the way it did, and trying to reduce everything to a simple answer is to ignore the many complex factors involved. But Andrews makes a convincing point about the power of story, and how one candidate did a much better job of telling a simple story than did the other.

> Like I’m doing right now, Trump told a grossly simplistic story in his campaign… But as a story, it was super effective. It was very easy to understand. You could remember all of it, and it was about America…
>
> What was Clinton’s story? Clinton ran as a technocratic incrementalist, who knows tons of stuff about tons of stuff and will make well-considered technical improvements to our country here and there, continuing and sharpening our neoliberal trajectory with policies that address this thing and that thing, based on dizzying amounts of science and data. She’ll react to world events on a studied, case-by-case basis. That is both a very sound vision of a presidency, and the most boring thing I have ever typed. I had to get up two different times for coffee while writing it. It’s not a story at all.

I’ve been involved in some recent efforts to help scholarly publishers do a better job of outreach to the research community and to society at large. We are notoriously poor at telling our story. We know we do something valuable, but what we do is often subtle and unseen, and when we start describing it, we get lost in the details and the caveats. At that point, we’ve lost our audience.

For the last decade and a half, we have been trying to counter an argument that all publishers are greedy corporations, reaping massive profits, and bent on stopping cancer patients from reading about their conditions. Or one that publishers steal the hard work of researchers and then sell that work back to them at exorbitant prices. Neither of these arguments is particularly true, but both resonate emotionally. That’s hard to counter with wonky charts showing declines in cost-per-use or cost-per-citation or an in-depth explanation of the peer review process. It is much easier to root for a self-declared Luke Skywalker against someone accused of being Darth Vader than to understand the subtleties of a complex service industry.

Our industry is under an increasing burden of regulation from governments, funding agencies and universities. We can live with these regulations as long as they are rational and fair, but to achieve that, we must learn to tell our story in an effective manner.

Effectiveness means clarity and simplification; it means finding ways to get our point across in a direct and easy-to-remember manner. I won’t go so far as to suggest prevarication, but what we need most is a narrative about just what it is we do and why it is important.

Consider this an industry-wide challenge. At least it will give you something constructive to do as you hunker down in your doomsday bunker during 2017, or roam the deserts in search of water and gasoline.

Stay safe, stay positive, and support one another as we enter difficult times.

Discussion

17 Thoughts on "The US Election, a Need for Curation, and the Power of Story"

David, interesting essay; however, I am not sure I agree with your analysis. If one reads Nolte and Arendt, among others, one finds that the common thread in fascism is the foundational belief in mythology, conspiracy, anti-intellectualism and a distrust in institutions. What we have just witnessed is a successful campaign based on the preceding. Unfortunately, facts do not have the mass appeal of easy answers based on mythology, conspiracy, anti-intellectualism and a distrust in institutions. Additionally, and even more unfortunately, funding and regulations will be based on assumptions presented within the preceding construct.

Funding is done by Congress, often ignoring Executive Office requests, so I expect relatively little change in major programs. How the agencies spend the money in detail may change considerably.

A short story needs time to write, that’s all. Clinton had plenty of writers and plenty of money. There is no acceptable excuse about motives or complexity. You can’t sell any complex message without a short story and a slogan; Trump understood this very basic fact, and Clinton should have. As the Audi slogan goes: Vorsprung durch Technik (“progress through technology”)…

…Steve

CELEBRITY SCIENCE?

The Director of the National Center for Science Education recently declared that “the election of someone who thinks climate change is a hoax and whose running mate once denounced evolution from the floor of the House of Representatives, is frightening and deeply depressing. It is more than possible that the sweeping Republican triumph at the national level may embolden local efforts to undermine the teaching of evolution and climate change.”

There has long been a strong linkage between the media (entertainment industry) and election politics. The Republicans used this to their advantage with “celebrities” such as Reagan (President) and Schwarzenegger (Governor), a gamble that seemed to work. The Democrats link up with celebrities but seldom put them forward for high office. This time the Republicans went too far, and many of them, but not enough, disavowed Trump at an early stage (e.g. Romney). So first blame goes to the Republicans. Second blame goes to the media, who allowed Trump to put Hillary in the same class as Edward Snowden.

What scholarly media folk may not appreciate is that there is a similar dynamic in academia and sorting out the gold from the dross is something they have a hand in. For any who might think Trumpism does not happen in science, two accounts of the career of Niels Jerne will perhaps provide helpful reading (1, 2).

1. Söderqvist T (2003) Science as Autobiography: The Troubled Life of Niels Jerne (Yale Univ. Press, New Haven).

2. Eichmann K (2008) The Network Collective: Rise and Fall of a Scientific Paradigm (Birkhäuser, Berlin).

Scholarly publishing is largely about incremental improvements. Rarely does a radically new process or product disrupt the publishing market, and yet, talk about transforming the way publishing is done invokes the same frames as national politics.

The cognitive linguist George Lakoff summarized his work on framing in a short book (Don’t Think of an Elephant!, reissued in 2014 https://www.amazon.com/ALL-NEW-Dont-Think-Elephant/dp/160358594X/) that has become a bible for many politicians. The ideas presented are so fundamental to presenting, defending, and refuting arguments that anyone in publishing will see the parallels.

The most obvious feature of scholarly publishing to be affected is the US Public Access Program, mandated in 2013 by an Executive Office (OSTP) memo. Here the uncertainties are indeed great. They range from cancelling the program, perhaps as part of a general purge of prior Executive actions, to increased funding and mandating the use of CHORUS. It all depends on who the next Science Advisor is, as that is the person who manages OSTP.

I had a dream last night that the chief lobbyist for Elsevier was put in charge of the transition for this office. By dream, I mean an actual, asleep-while-it-happened dream, not something I think should happen! If the lobbyist for Verizon can be in charge of selecting staff for the FCC, why not?

Re the use of algorithms in evaluating truth claims, perhaps there should be a Turing Test of Truthiness where the ratings of man and machine are compared in a double-blind examination. When the algorithm becomes indistinguishable from human rating, it is ready to be deployed.

Re the art of storytelling in science, doesn’t that depend upon a thorough knowledge of 1) the audience and 2) the message? Even with that, can telling the story to fellow scientists be done in the same way as to the general public? There are folks who speak science to the general public very well, but they are usually not the originating authors.