In case you still harbor any doubts: yes, the year Two Thousand and Sixteen is going to be a pivotal one when the history of the 21st century comes to be written. This year has seen many things coalesce. My fellow chefs talk about some of them [HERE] and [HERE], and fine words they are too. If you haven’t yet, go read them. I want to talk about something that has been bothering me greatly for a considerable amount of time: the place of facts and reality in the modern world, and the place of those who seek to bring us closer to an understanding of those things. No, I can’t believe I’m typing that sentence in 2016.

In the summer, after one of the most abject periods of political campaigning I can recall, the UK voting population was asked to advise the government on whether it thought we should stay in or leave the European Union. The vote was marginal (here’s some proper data analysis for you) and yet also compelling: the UK wishes the current government to negotiate our exit from the European Union. The thing that bothered me most was the fact that one side decided to do a specific thing that the other side didn’t: The leave campaign chose to attack experts. It chose to attack, belittle and dismiss those whose business it is to predict the future from the present state of things. It chose also to indulge in a more generic framing of those experts and academics as “Elitist” and “Out of Touch” and worse. Oh yeah, it also lied.

Over on the other side of the pond (full disclosure: most of my family now consists of US citizens), the events of the last week have played out in a manner consistent with 2016 in general. One side brought facts to a culture war. That side lost. There are a few things I feel are worth pointing out about what has gone on with these two events, because those things directly affect the world we live in. Our world is the world of the reality broker. We publish research and other scholarly thinking whose entire and only goal is to better fit our understanding of reality to the way the universe actually is. Historians and mathematicians; art and literature and the big three sciences; philosophy and sociology and economics: the list goes on, all trying to better reveal the majesty of reality.

So what has happened this year?

- Expertise, knowledge, and competence are now under direct attack

- The massive scale social networks have a very serious (possibly fatally so) flaw in how they enable their users to filter and process information

- The maximum surveillance nature of the ‘free to use’ web compounds the filter problem

- The triumph of the ad-supported, cumulative-advantage-winner business model has gutted the 4th estate

And all of these things have been coming for a while. The cascade failure of 2016 has roots going back well over a decade.

Item 1)

In November 2009, the Copenhagen Summit on climate change was a few weeks away when a server at the Climate Research Unit (based at the University of East Anglia) was (carefully and precisely) hacked. The resulting dataset and email trove was disseminated across the internet and seized upon by the usual suspects, and, well, to cut a long story short, the Copenhagen Summit ended without any meaningful action being taken. The scientists were investigated really quite vigorously: although they had done nothing wrong, eight separate committees investigated the science… The hacking received a response of, “Whoa, that’s a bit tricky eh! Well wasn’t anybody in Norfolk [UK], so dunno what to do really”. The scientists were criticized for not releasing all their data more quickly and more transparently. I’ll come back to that.

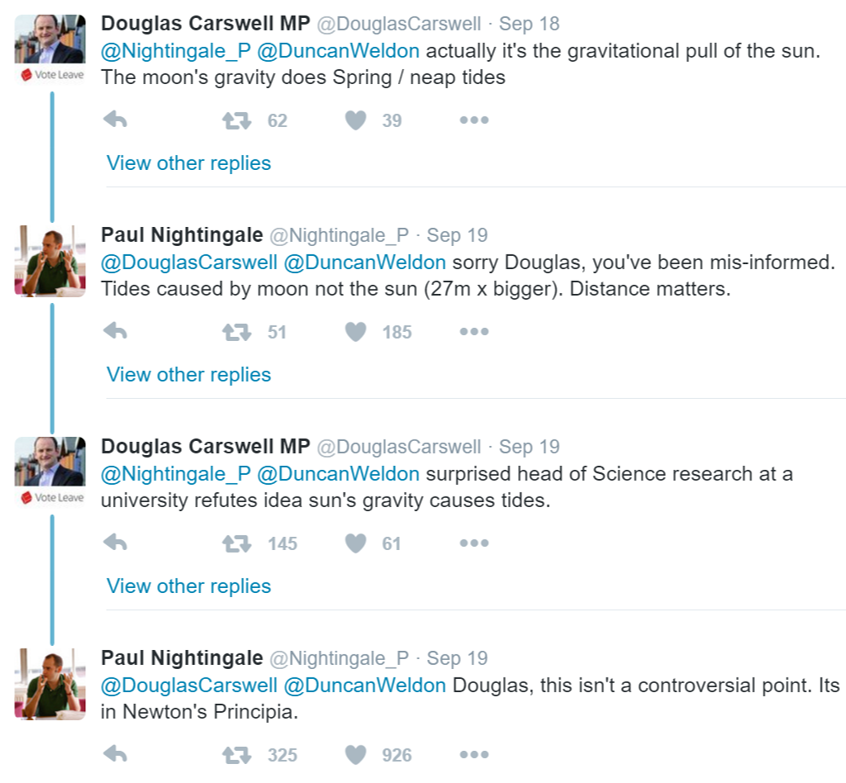

This year, we have UK MP and former Secretary of State for Education and then Justice, Michael Gove, with this quote: “People in this country have had enough of Experts”, uttered during a TV debate on the referendum. Since then, UK Independence Party MP Douglas Carswell has picked a Twitter fight with a physicist over how the tides work. You can see the whole thread here.

Conservative MP Glyn Davies has tweeted “Personally, never thought of academics as ‘experts’. No experience of the real world.” We’ve come a long way from the image of Dan Quayle, the 44th Vice President of the United States, being lampooned for his spelling. None of the people behind the examples above have suffered any political downside for them. These are people who ultimately have a say in the funding available to experts, and indeed in how experts are consulted by government…

Dr Sara Hagemann has had a taste of what this new world might look like. The UK government (the civil service) has made it very clear to her (and to other colleagues) that, since she isn’t a UK citizen, it no longer wishes her to be involved in any advisory role.

And then there’s the UK Department for Education (DfE) informing researchers using the UK National Pupil Database that they must share their results with department officials 48 hours before publication. Here’s the money quote: “This will reduce the risk that DfE are caught off guard by being asked to provide statements about research the appropriate people have not seen… [… it is] not the DfE’s role to check or approve the outcomes [but that] the right people have had time to digest it.”

I’m worried. I’ve not cherry-picked. This is stuff coming in on my infostreams, direct from the people concerned. The people we publish.

Item 2)

My fellow chefs Angela Cochran and David Crotty have written about this, so I’ll not dwell, except to point out that the rise and profusion of increasingly smart Artificial Intelligence (AI) powered info bots (very common on Twitter, at least) is very much a double-edged sword. I do believe that AI agents are going to lead to a future that we in scholarly publishing should be exploring vigorously, but right now it is the negative effects of bots designed to swamp or pollute online discourse that concern me most. The systems that Facebook and Twitter, at least, have in place seem self-evidently not up to the job of correctly curating and filtering this stuff. The problem seems particularly acute because of:

Items 3 and 4)

Facebook, Google and Twitter make their money by selling advertising off the back of a massive personalized database of intentions (I urge you to read this again… 2003 warning us of a future we now live in), predicated on maximizing the delivery of ‘information’ that you will click on. Click-bait hacks the risk/reward and pleasure centers of the brain. That’s why it’s so effective. That’s why a bot that has enough AI to ‘say’ the right things, or simply to drop any one of a number of canned comments and links, is so worrying. The power-law economics of the ad game mean that the bots win every time. This is an informational denial-of-service attack. The 4th estate has come to grief because of this. They need the clicks to survive. Nothing. Else. Matters. If you have a Google Home or Amazon Echo device, you might want to think real hard about what happens when those household AIs start feeding information back to their users in an ambient fashion. “I expect the news to find me…” Remember that quote?

I’m worried about all of this because I’m worried about how scholars are going to cope in the event of a sustained attack on their work. I’m worried about the pressure we as publishers might face. I’m worried about how the public is and will be informed about the way the world works. The weekend before the US election, I was in Washington, DC attending a superb workshop on replication and reproducibility. One of the things that struck me, though, was the failure to consider the effects of bad actors on a process of transparency. Those maligned scholars from the University of East Anglia were a harbinger. Their data was hacked, cherry-picked and distorted for the purposes of political manipulation. They had no tools or defensive mechanisms with which to cope. The same will be true for #altmetrics. The same will be true for open data. The same will be true for preprint article repositories. How do you fancy dealing with a post-publication ‘journal’ that expressly goes out and cherry-picks data and research and the names of authors for political ends? Sounds fanciful? Here’s a fake newspaper for you. To everyone working on these things, I urge this: please, please, please think about how this stuff might be misused and abused; think about the anti-patterns. Because somebody will, if they aren’t already. InfoSec isn’t just about the hardware; it needs to be about the information too.

Here at the Kitchen, we’ve had our arguments and disagreements with many over where scholarly publishing is going and should go. But there’s one thing that unites all of us. We all care very deeply about the business of the scholarly record. We ALL care about getting the research and the debate out there, so that the values of the Enlightenment can be advanced. We do. Don’t let our disagreements on the how cloud that fundamental fact. The future is here. Yes, it really is very unevenly distributed. We need to figure out, together, what we are going to do about that.

Discussion

19 Thoughts on "How To Live Safely in a Post Factual Universe"

“Post-truth” was named OED’s Word of the Year, 2016.

‘The OED defines “post-truth” as “relating to or denoting circumstances in which objective facts are less influential in shaping public opinion than appeals to emotion and personal belief.”’

Use of the term went up 2000% this past year.

Not surprising, given that accusations of lying featured prominently on both sides of both the Brexit and Presidential campaigns. But I am inclined to believe that objective facts are always less influential in shaping public opinion than appeals to emotion and personal belief. A more colorful version of this is the term “waving the bloody shirt” from the US Civil War debates.

But on a pedantic note, knowledge of objective facts lies solely in the form of personal beliefs, so this distinction is problematic.

If you believe that F = GMm/r² is a matter of personal belief, then sir! I invite you to step off the nearest tall building…. 🙂

There is a fundamental difference between facts and our knowledge of them. Humans have knowledge, not facts. I believe that all knowledge occurs in the form of personal belief. In epistemology a common definition of knowledge, which I use, is “justified true belief.” Thus some personal beliefs are knowledge while others are not. Facts and the knowledge of them are two different things. Nor do facts, per se, shape public opinion. That requires the statement of facts, knowledge of facts, observation of facts, etc.

If they are using “personal belief” to mean false belief, then they should say so. Or if they mean beliefs that are entirely subjective, such as one’s favorite color, then I would say that the claim is simply wrong. In short, in this OED definition the concept of “personal belief” is confused.

Another aspect that the OED definition glosses over is that in these sorts of political debates what the facts are is often a central issue in itself. Thus charges of lying, misinformation (and its strange cousin disinformation) are often actually reflections of a disagreement over what the facts really are. This is also a common feature of scientific debates, as Kuhn pointed out. It is actually a general feature of fundamental disagreement.

EXPERTS AND CELEBRITY SCIENCE?

There have been many excellent posts on the Scholarly Kitchen, and this is one of the best! Smith so truly states that for Brexit: “The thing that bothered me most was the fact that one side decided to do a specific thing that the other side didn’t: The leave campaign chose to attack experts. It chose to attack, belittle and dismiss those whose business it is to predict the future from the present state of things. It chose also to indulge in a more generic framing of those experts and academics as “Elitist” and “Out of Touch” and worse. Oh yeah, it also lied.”

Most of the time the “experts” are right (i.e. 90+% of them are in agreement), and we would be stupid to ignore their advice. But we must strive to increase that 90+ to 99+. What scholarly media folk may not appreciate is that the Brexit/US election dynamic sometimes operates in academia, and sorting out the gold from the dross is something they have a hand in. For any who might think Trumpism does not happen in science, please check the following:

1. Soderqvist T (2003) Science as Autobiography: the Troubled Life of Niels Jerne (Yale Univ. Press, New Haven).

2. Eichmann K (2008) The Network Collective: Rise and Fall of a Scientific Paradigm (Birkhauser, Berlin).

I looked with interest for guidance on how to live safely in a post factual universe, but did not find it. What am I missing?

What am I missing?

I would suggest that you’re missing either 1) a familiarity with the novel that the title riffs off of (https://en.wikipedia.org/wiki/How_to_Live_Safely_in_a_Science_Fictional_Universe) or 2) an appreciation of gallows humor.

This was fact in Germany in 1940

The Jews are Guilty!

by Joseph Goebbels

The historic responsibility of world Jewry for the outbreak and widening of this war has been proven so clearly that it does not need to be talked about any further. The Jews wanted war, and now they have it. But the Führer’s prophecy of 30 January 1939 to the German Reichstag is also being fulfilled: If international finance Jewry should succeed in plunging the world into war once again, the result will be not the Bolshevization of the world and thereby the victory of the Jews, but rather the destruction of the Jewish race in Europe.

http://research.calvin.edu/german-propaganda-archive/goeb1.htm

This is fact in the US in 2016

‘Bill Kristol: Republican spoiler, renegade Jew’

A post in May described a “third party effort to block Trump’s path to the White House” that Breitbart claimed was orchestrated by the prominent conservative and Trump critic Bill Kristol.

http://money.cnn.com/2016/11/14/media/breitbart-incendiary-headlines/index.html?iid=surge-story-summary

Folks these people mean what they say!

I wonder what “objective facts” means. Philosophers of science like Pierre Duhem and W.V.O. Quine have taught us that “facts” are relative to frameworks of understanding, so that observations are not “neutral” but only have meaning in relation to theories.

Indeed, Sandy, are they in contrast to subjective facts (just kidding)? The corollary is that every belief might be false, which is part of what makes science fun. But as Descartes pointed out, this does not imply that any specific belief is false.

My guess is that the OED definition was not written by an epistemologist.

And, this, ladies and gentlemen, is why most of America thinks academics are out of touch and not functioning in the same reality . . .

I think most people have thought this way for thousands of years. Academics are trying to understand things that most people do not understand. And there is no way to change this, because in an important sense academics are functioning in a different reality, especially scientists. Let’s start with quantum physics and wave-particle duality. Now there is a different reality!

As for being out of touch, I do not know what that means. Out of touch with what?