The Nominet Trust is a UK-based charity with the mission to “support initiatives that contribute to a safe and accessible Internet, used to improve lives and communities.” Recently, they published a report entitled, “The Impact of Digital Technologies on Human Wellbeing.” It’s published as a PDF, one that is so well-designed that it’s quite digestible on-screen.

The report was covered in the New Scientist where the reporter boiled the conclusion of the report down in a rather histrionic tone: “the internet has actually been the victim of some sort of vicious smear campaign.”

Reading the report by neuroscientist Paul Howard-Jones doesn’t leave you with quite so polemical an impression. In fact, the report is balanced and inclusive. It also confirms that it’s better to be careful when interpreting scientific findings.

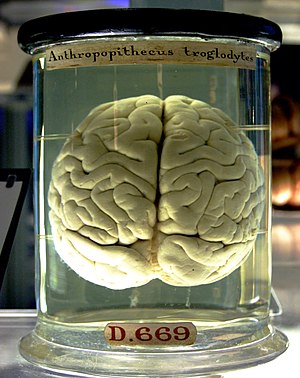

For instance, as I wrote about here recently, the notion of “Internet addiction” is shaky at best, a spin on facts at worst. Another common meme is Nicholas Carr’s extended riff on “rewiring of the brain,” with its pretentious and insidious overtones providing a fussy wrapper for anachronism.

In the new report, Howard-Jones squarely addresses the notion that Internet addiction is a cause of problems rather than an effect of problems (it’s best viewed as an effect). He also deals reasonably and authoritatively with the science behind the narrative of “the Internet rewiring our brains” (it’s not rewiring our brains any more than getting a DVR or smartphone rewires your brain; that is, you learn how to use it, then get the hang of it, and hence are rewired, just as when you learned how to read, count, walk, or type).

But don’t take my word for it. Here’s a nice excerpt about this supposed Internet addiction for your edification (references have been edited out so I don’t have to put in a bunch of superscript you can’t use — see the full report for the references):

Many discussions of internet addiction, however, fail to discriminate between what the internet is being used for, despite over-use of the internet being associated with one or more specific types of problematic behaviour. In a study of mental health practitioners, adults seeking help for excessive use of the internet focused on excessive access of pornography or online communication related to infidelity, while issues of excessive use by young people focused chiefly on gaming. . . . the significant predictors of problematic usage appear to be low self-esteem, anxiety and the use of the internet for sensation-seeking activities that the user considers to be important. . . . some researchers maintain that internet addiction is not a true addiction, but may be the product of other existing disorders such as depression, or a ‘phase of life problem’. . . . some of the chief hazards of internet addiction (marital, academic and professional problems, together with sleep deprivation) might be the cause rather than the effect of excessive internet use.

And here’s a nice excerpt involving the supposedly dangerous and insidious “rewiring of our brains”:

Gary Small and colleagues carried out a novel study of how the brains of middle-aged and older participants respond when using an internet search engine. Compared with reading text, they found that internet searching increased activation in several regions of the brain, but only amongst those participants with internet experience. Based on the regions involved, the researchers suggested that internet searching alters the brain’s responsiveness in neural circuits controlling decision making and complex reasoning (in frontal regions, anterior cingulate and hippocampus). However, because an uncontrolled task was used, it is difficult to know what cognitive processes the participants were carrying out. This is a problem when attempting to draw conclusions about neural differences. It is possible that, even when they were supposed to be searching, less experienced users were spending more time reading text while their ‘savvy’ users who had learnt how to use search engines were using sophisticated search strategies. After five days of training for an hour a day, the internet-naïve participants were producing similar activations as their more experienced counterparts. . . . Changes in neural activation in different regions can be expected when learning any task for the first time. For example, after adults learned to carry out complex multiplication, the brain activity produced by carrying out this task shifted from frontal to posterior regions (suggesting less working memory load and more automatic processing).

As you can see, this is much more objective than the hyperbole so often used to sell magazines, secure book deals, or dominate television time. Written in a calm and authoritative voice, the entire report is worth perusing. It touches on questions like:

- Do multitaskers have an advantage?

- Can you train your brain to work more efficiently online?

- Why are video games so attractive?

- Can you learn more using video games?

- Do violent or social games make you more violent or social?

- Does the Internet lead to attention problems?

- Does the Internet make us more sedentary?

- Are we losing sleep over the Internet?

Because the report is broken out nicely into summaries around “We Know That . . . ” and “We Do Not Know . . .” you can quickly imbibe some perspective before diving into each section’s detailed prose and citations.

The bottom line? Despite the warnings from self-appointed experts, there is less to worry about with the Internet than there is with — well, with automobiles, guns, drugs, social isolation, aging, recessions, wars, food poisoning, or pollution. So, while it’s nice to fret about the new, it’s even better to put things into the proper perspective and priority.

The Internet is a big deal, but it’s certainly not dangerous.

Discussion

24 Thoughts on "Is the Internet Bad for Our Brains? The Answer Is Subtle and Complex, But Quite Reassuring"

I am struck by this point: “it is difficult to know what cognitive processes the participants were carrying out” which is made with respect to experienced searchers. This should be one of the great research questions of our day, given the central cognitive role that search now plays, especially in the scientific community. Is there even a journal on this topic? Or a major research program somewhere? Should I search for them?

what we risk losing in a non-linear world is context. the best example of this is the rise of public figures making completely incorrect and unsubstantiated statements (see Palin, Sarah) that at worst become magically true or at “best” go unchallenged. the news reports i read about Sarkozy being assaulted failed to mention he was traveling in a largely Basque region … did anyone (in the NEWS MEDIA?!) bother to think critically or make this connection? anyone, anyone, bueller? it seems that currently we are not educated to effectively seek out context, so in the new world order, education and pedagogy have got to change.

I don’t know that I blame that on the Internet. In fact, where did you find out Sarkozy was traveling in a Basque region? (I’m betting the answer is “the Internet.”)

I use the Internet all the time to learn more than the boneheaded 24/7 media report. They are officially pitiful at this point, I agree — polemical, superficial, and reporting like they’re at a fight club. I get more reasoned opinions from blogs, tweets my peeps share, etc.

Broadcast news is choking on its own vomit, but that’s not the Internet’s fault.

I agree, but perhaps you are being polemical. If the news industry is being gutted by the Internet you can’t expect the quality to go up, can you?

I admit, I get frustrated with mass media, but the problems they’re mismanaging started back in the 1980s, with the rise of “right vs. left” and “life as a sports metaphor” reporting (winners, losers, contests, etc.). The 24-hour news channels that took off in the 1990s only exacerbated these trends. Then FOX News came along as a clear puppet of the Republican party’s message/memorandum machine, further making the media into a distortionplex.

The media’s litany of sins predated the Internet. The proliferation of cable television channels probably did more to further distortions than anything else. The Internet is to some extent providing a counterweight by allowing discussion, facilitating long-form stories again, linking materials together, allowing narrowcasting, and offering unique expert opinions instead of blow-dried talking heads.

speaking as one who watched this all come down at Times Mirror in the 1990s, the main issue with the media is (was) not mismanagement, it’s greed. when the business people took over editorial, the race to the bottom began.

my point is that if we use the internet without formally evolving how our brains work = bad juju.

How do we in scholarly publishing get people to use our books the way many Internet users addictively access pornography and video games? 🙂

“Where in the World Is Carmen Sandiego?” managed to make geography fun and educational. “MythBusters” manages to make scientific testing fun and interesting. Some of the author abstract videos being published now hint at a more interesting and engaging way to get ideas across. Instead of “books” in your question, maybe substituting the word “information” might loosen things up?

That sort of publishing is all well and good, and there are great benefits to making current research accessible to the non-expert. That said, much of what we do is publish high level material written by experts for experts. If I’m doing experiments at the cutting edge of my field, I can’t write about them in a detailed and meaningful way for others doing the same if at the same time I must write to engage the general public. There’s a shorthand that comes when one assumes one’s audience has a certain knowledge level, otherwise you’ll have scientific papers that are hundreds of pages long as they’ll need to fill in all the basic background.

So while there’s certainly room for fun and educational material, there’s also a need for high level complicated stuff, which doesn’t really translate.

Matching the approach to the audience is what I was implicitly proposing. I think we’re all locked in to one approach in STM publishing, despite the audience. Video abstracts and other experiments have shown that technical information can be conveyed in an engaging and useful manner.

Video does have its uses (particularly helpful for explaining methods and techniques) but I’m not sure how “useful” things like video abstracts are, despite their being presented in an engaging manner. In an age of information overload, isn’t efficiency more valuable than entertainment? I can read an abstract and decide if I want to read the paper much faster than I can sit through a 5 minute video explanation. It’s awful hard to add notes in the margin of a video or to copy and paste a section of text out of it.

Aren’t most of these things just technology for technology’s sake without an actual use case?

I don’t know if the internet is making us stupid, but it is certainly changing our behavior and society. I worry less about the possible re-wiring of our nervous system and more about the character of our interactions and quality of life that results. Without a doubt, much of what the internet does is a vast improvement, particularly in terms of reach and efficiency. As an example, I can’t imagine how anyone did my job (or most of my previous jobs) without the internet.

I do worry though that it is making us socially lazy. It’s much easier to sit in a room by yourself clicking away on Facebook than it is to deal with real human beings in person. In some ways we’ve turned over the determination of social norms to the likes of Mark Zuckerberg and others who are perhaps further out along the Asperger’s continuum than most of us. As such, we lose deep interpersonal interactions for a higher quantity of less meaningful ones. Messy and real is being exchanged for tidy, neat and unreal.

An interesting article here on how this growing fear of intimacy manifests in a world where it is becoming more difficult to translate attention into recognition without relying on social media:

http://www.popmatters.com/pm/post/144490-/

“I have this feeling that people are going to become more and more wary of direct face-to-face attention because it will seem like it’s wasted on them if it’s not mediated, not captured somehow in social networks where it has measurable value. I imagine this playing out as a kind of fear of intimacy as it was once experienced—private unsharable moments that will seem creepier and creepier because no one else can bear witness to their significance, translate them into social distinction. Recognition within private unmediated spaces will be unsought after, the “real you” won’t be there but elsewhere, in the networks. “

I agree that the report provides a useful and balanced overview of what we know and don’t know about the effects of videogaming, and the discussion about the fraught issue of internet “addiction” is good as well. The other sections of the report, particularly those on multitasking, learning, and neuroplasticity, seem cursory, however. The author describes, for instance, the potential benefits of the multimodal delivery of information without mentioning the possible negative consequences of interruptions and working-memory overload, both of which are extensively documented in the scientific literature.

For parents, to whom the report is geared, the big news is the author’s support of the AAP guideline of no more than two hours of non-school-related screen time a day, with the additional caveat that nighttime screen time be avoided as it’s associated with sleep problems. Many parents will struggle with such restrictions, since on average kids are spending far more time looking at screens than 2 hours a day and much of it takes place during the evening hours. I wonder, Kent, whether you support those recommendations or consider them too restrictive?

My own summary of the videogame research can be found here (if you’re interested):

http://www.roughtype.com/archives/2011/04/grand_theft_att.php

Hi Nick,

Sorry, your comment got caught in spam again. I think it’s the links. Happily, David Crotty rescued it. Thanks, David.

To your question about the AAP’s recommendations about what they call “screen time,” I think it’s important to note that when they wrote that policy statement, “screen time” pretty much meant TV time. It was written at a time when a lot of parents were letting their kids have TVs in their rooms, which probably explains the sleep problems too. Now, with our world moving quickly to a screen-based information world, I think that recommendation needs to be restructured. I don’t think they intended it to mean that you shouldn’t look at cell phone screens, computer screens, iPads, or Kindles for more than 2 hours per day. As parents, my wife and I differentiate not based on “screen vs. not” but on a balance of stuff — video game allotments, TV time, iPad time, reading time, family time, outside time, board game time, baking time, dog time, etc. So, in spirit I agree with my old friends at the AAP, but I think their wording has become a vestige of an age of scarce screens (i.e., screen = TV). My son is reading the second “Hunger Games” book on one of our Kindles. Do I mind that he spends 4 hours one day reading it that way because it’s a screen? Not at all.

What the AAP’s statement touches on but doesn’t grapple with sufficiently, I think, is that “screen time” in the sense they were using it at the time was often a proxy for social isolation and/or poverty. That is, latchkey kids or kids in unsafe neighborhoods or both were choosing to stay inside watching TV because their neighborhoods weren’t safe or they felt socially isolated or both. And don’t even get me started on what those damned milk carton “Have you seen me?” alerts did to our sense of letting our kids out to play. I think being diverted from core issues like poverty, pollution, too many cars driving too fast, social isolation, and poor nutrition by blaming stuff on TV or “screen time” isn’t going to help us solve root causes.

Kent,

I agree there are differences between TV viewing and computer use. But, just to be clear, the AAP policy is not a relic of pre-net days. It was updated this year, and it reads (in part): “Pediatricians should continue to counsel parents to limit total non-educational screen time to no more than 2 hours/day, to avoid putting TV sets and Internet connections in children’s bedrooms, to coview with their children, to limit night-time screen media use to improve children’s sleep, and to try strongly to avoid screen exposure for infants under the age of 2 years.”

It should also be noted that TV viewing by kids (and adults, for that matter) has not decreased since the arrival of the web. So computers (in their various forms) have been additive to total screen time rather than substituting for TV time.

Nick

Great information here and in the report. I’ll take some time to digest.

As usual, very interesting comment thread.