The psychology literature might be suffering from a complex by now.

Back in April, I wrote about the Reproducibility Project and PsychFileDrawer.org, two initiatives created to test the reproducibility of studies in the psychology literature. Since then, both sites seem to have fallen off the radar, if the stats at the Reproducibility Project are any indication (the low number of views of papers at PsychFileDrawer.org isn’t encouraging, either).

The need for initiatives like these may be as great as ever, however, as a new paper finds a suspiciously high number of p values that only just reach statistical significance in the psychology literature.

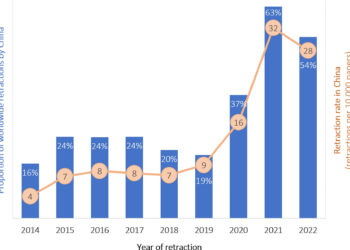

The study, published this month in the Quarterly Journal of Experimental Psychology, examined 3,557 p values between 0.01 and 0.10 published in 12 consecutive issues across three psychology journals – the Journal of Experimental Psychology: General, the Journal of Personality and Social Psychology, and Psychological Science. The authors – one from Wake Forest and one from the University of Quebec at Chicoutimi – each independently recorded the p values from Psychological Science first, in order to uncover any problems in recording or analyzing them. Their two sets of records agreed 96.8% of the time, and they learned how to eliminate the discrepancies, which would not have changed their findings in any event.

There is an error in the paper. The authors state that they analyzed 3,627 p values, but their raw numbers add up to 3,557. I don’t think this matters, but it’s something that could have been caught.

The hypothesis of the study was that if the p values occurred naturally across independent studies, there should be no deviation from an expected distribution of p values based on mathematical models appearing in the literature. If there were “disturbances” around certain values, then perhaps something other than factual record-keeping and straight-up statistical calculation was going on.

Sure enough, the researchers found significant disturbances around some p value ranges, but most consistently and significantly around those in the range between 0.045 and 0.05 (p < 0.001).

The authors then looked more closely, dividing the 0.045-0.050 range first into 0.0025 zones and then into even finer 0.00125 zones. This allowed them to measure precisely where the disturbances were occurring. A high proportion of the disturbances seemed to appear between 0.04875 and 0.05.
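To make the zoning step concrete, here is a minimal Python sketch of how reported p values could be counted in ever finer zones. It is not the authors’ code: the function name `count_by_zone`, the choice to include the lower edge of each zone and exclude the upper edge, and the sample values are all assumptions made for illustration.

```python
from decimal import Decimal

def count_by_zone(p_values, low, high, n_zones):
    """Count p values in n_zones equal-width zones covering [low, high).

    Values are passed as strings and handled as Decimal so that zone
    edges such as 0.04875 are exact rather than floating-point estimates.
    Assumption: each zone includes its lower edge and excludes its upper.
    """
    low, high = Decimal(low), Decimal(high)
    width = (high - low) / n_zones
    counts = [0] * n_zones
    for raw in p_values:
        p = Decimal(raw)
        if low <= p < high:
            counts[int((p - low) / width)] += 1
    return counts

# Made-up p values for illustration only (not data from the paper).
sample = ["0.0300", "0.0451", "0.0462", "0.0481", "0.0490", "0.0499", "0.0500"]

halves = count_by_zone(sample, "0.045", "0.05", 2)    # 0.0025-wide zones
quarters = count_by_zone(sample, "0.045", "0.05", 4)  # 0.00125-wide zones
print(halves, quarters)
```

A pile-up in the last element of the finer list would correspond to the 0.04875 to 0.05 zone the authors singled out.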

Breaking the data down by journal, the Journal of Experimental Psychology: General seemed much less prone to these disturbances in reported p values, while the other two shouldered the blame for the overall effect about equally.

To further check their calculations, the authors also used an unidentified rater who was blinded to journal title. This person was asked to calculate the data from Psychological Science. The same patterns emerged in this independent analysis.

The authors offer a number of possible explanations for their findings, including publication bias on the part of authors, editors, and/or reviewers; an excessive emphasis on statistical significance over other statistical measures like effect size or reproducibility; or how researchers might consciously or subconsciously control their degrees of freedom and thereby nudge their data in a favorable direction. The authors write:

. . . researchers may engage in the “repeated peek bias” (Sackett, 1979) or “optional stopping” (Wagenmakers, 2007), which amounts essentially to capitalizing on random fluctuations in one’s results – one monitors one’s data continuously, ceasing only when the desired result appears to have been obtained.
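The effect of optional stopping is easy to demonstrate with a small simulation. The sketch below is an illustration under stated assumptions, not anything taken from the paper: every simulated study has no true effect, observations are drawn from a normal distribution with a known standard deviation of 1, a two-sided z-test is run after every 10 observations up to 100, and the function names and default settings are my own.

```python
import math
import random

def two_sided_p(sample_sum, n):
    """Two-sided z-test p value for H0: mean = 0, assuming known sd = 1."""
    z = sample_sum / math.sqrt(n)
    return math.erfc(abs(z) / math.sqrt(2))

def simulate(n_studies=2000, looks=range(10, 101, 10), alpha=0.05, seed=1):
    """Return (peeking rate, fixed-n rate) of false positives under the null.

    Peeking: a study counts as significant if ANY look gives p < alpha.
    Fixed n: the same study counts only if the FINAL look gives p < alpha.
    """
    rng = random.Random(seed)
    look_set = set(looks)
    final_n = max(look_set)
    peek_hits = fixed_hits = 0
    for _ in range(n_studies):
        total = 0.0
        any_look = False
        final_look = False
        for n in range(1, final_n + 1):
            total += rng.gauss(0.0, 1.0)  # null data: true mean is 0
            if n in look_set:
                significant = two_sided_p(total, n) < alpha
                any_look = any_look or significant
                if n == final_n:
                    final_look = significant
        peek_hits += any_look
        fixed_hits += final_look
    return peek_hits / n_studies, fixed_hits / n_studies

peek_rate, fixed_rate = simulate()
print(f"stop at first p < 0.05: {peek_rate:.3f}   fixed n = 100: {fixed_rate:.3f}")
```

Because a researcher who peeks stops at the first significant look, the peeking rate can never be lower than the fixed-n rate, and with ten looks it should come out well above the nominal 0.05 even though no effect exists.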

It’s also interesting that one journal in the set fared better than the other two. This suggests that there are editorial practices at that journal which may counteract the concerns the authors articulate. Is there more rigorous statistical review? Is there a different set of editorial priorities? Are authors with borderline data afraid to expose themselves to that journal’s editors? I couldn’t find anything obvious to explain this observed difference, but there is something to be said for the Journal of Experimental Psychology: General when it comes to publishing papers flirting with significance.

The authors also speculate that the observed disturbances between 0.045 and 0.05 may be part of the explanation for why researchers are hesitant to share data:

. . . [others have] found that researchers were especially unlikely to share their published data for reanalysis if their p values were just below 0.05. Such a pattern may be indicative of researchers who are reluctant to share their data fearing that erroneous analyses of weak data be exposed.

The authors conclude that the continued fixation on p values fostered by null hypothesis testing in the psychology literature may be distorting results, especially when combined with the powerful and growing incentives to publish.

In other words, perhaps our obsession with 0.05 has disturbed even the statistics we use to measure research in psychology.

Discussion

4 Thoughts on "Sort of Significant: Are Psychology Papers Just Nipping Past the p Value?"

To me, the effect size is much more interesting (and important) than the p-value. With a large enough sample, even small effects can have high statistical significance but very low practical significance.

Effect size estimates suffer from the same problems as p values:

http://psychologicalstatistics.blogspot.co.uk/2012/08/yet-more-on-p-values.html