The March 28 issue of the Times Literary Supplement contains an op-ed piece by Malyn Newitt, formerly of King’s College London and Exeter University. Its title is “Out of Bounds: the National Trust’s Libraries,” and Newitt opens it by quoting the National Trust’s mission statement:

We take care of historic houses, gardens, mills, coastlines, forests, woods, fens, beaches, farmland, moorland, islands, archaeological remains, nature reserves, villages and pubs – and then we open them up for ever, for everyone.

But Newitt then points out that while the National Trust’s work in this regard has been invaluable and in many ways exemplary, it has left one important component of many of these properties far short of “open… for everyone”: the books that are housed in their libraries.

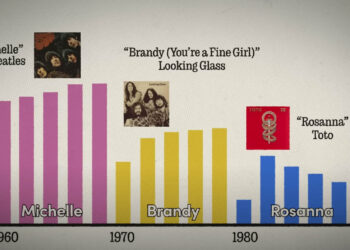

The historic houses that have been preserved by the National Trust and made available to the general public include, according to the Trust, “140 historic libraries (around 230,000 books in 400,000 volumes)…Many are country house libraries, some collected by wealthy bibliophiles, others containing more practical everyday books, including rare provincial printing.”

Newitt observes that in recent years the Trust’s Curator of Libraries has set out to catalog these collections, making it increasingly possible to search, identify, and locate their holdings via COPAC (a national online union catalog), and that concerted efforts are also underway to repair and conserve the books themselves.

All of these efforts Newitt praises, but he points out that while these books are, in many cases, now discoverable and theoretically available for scholarly examination by appointment, in reality they are effectively inaccessible given their scattered locations and the restrictions involved in arranging access to them. He calls on the National Trust to go one large step further towards its stated mission: to make the books available for reading by interested members of the public. He proposes a number of ways in which the Trust might move in this direction:

A printed version of the catalogue available in each library for consultation by visitors… “open days” when a library can be visited and the books inspected… a programme of exhibitions… programmes, developed with schools and colleges, based on interesting items from the library; digital images of rare and important items made available on the internet; a separate membership scheme for those wanting to use the libraries; or simply a reprographic service.

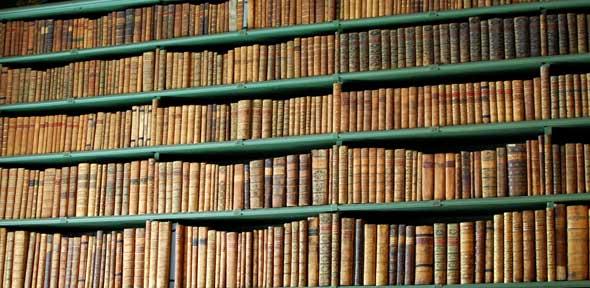

Any scholar, book lover, or librarian will empathize with Newitt’s irritation: the thought of these hundreds of thousands of volumes – many (if not most) of them in the public domain, and many (if not most) of them representing rare or functionally unique content – sitting in 140 now publicly-owned libraries where visitors are unable to read or even touch them, is teeth-grittingly frustrating. It seems to me, though, that most of his proposals would result in only very small and incremental increases in public access.

For these books – at least the ones in the public domain – to become truly accessible to the scholarly and general public, what really must happen is for them to be digitized and put online, where their intellectual content can be found and read freely. Seeing their pages on a computer screen is not, of course, the same thing as holding the books physically – but online access is radically better than no access at all (which is what the world has now), and in any case, digitizing the books would not make them any less physically accessible than they currently are.

So what is stopping the digitization from happening? My guess, based on experience working in academic libraries that hold collections of similar materials, is that there are two major barriers, one of them both inevitable and understandable, and the other one much harder to defend:

- Money. Purely functional digitization can be fairly cheap, but it is not free. High-quality digitization, on the other hand, is relatively expensive, as are the processes of metadata creation, search engine optimization, formatting and organizing images to make them easily usable, and storing them robustly and reliably. On the conservative assumption that only half of the books housed in these National Trust properties are in the public domain, we’re talking about creating a digital collection of 200,000 volumes – that’s not an inconsequential undertaking, and the money would have to come from somewhere: either redirected from other public projects, or brought in by a private entity. More about this in a moment.

- A desire for institutional control. This is the less-defensible explanation, and it’s one that I have encountered too often in libraries themselves. Very often, there is among librarians and curators a sense that creating free online access to these materials will harm the host organization by (in the short run) reducing the number of visitors and (in the long run) taking away the potential for future revenue streams. Sometimes these concerns are not very well articulated – they are expressed simply as a generalized sense that giving up institutional control over the content of these documents would be unwise.

As for the concern over money: that’s life. The reality is that resources are always limited, they tend to be particularly so where projects in the public sector are concerned, and a dollar (or pound) can only be directed to digitization projects by being taken away from some other endeavor – unless some outside entity can be convinced to underwrite the project. This, of course, is exactly how the Google Books project came about. Could the National Trust lure Google (or some similarly deep-pocketed organization) into its historic houses to digitize their public-domain books and make them available to the public under mutually-beneficial terms? And if such an organization were willing to do that, how would the academic world feel about it? What if the project created a significant net gain in public access, but also allowed the corporate underwriter to make money in some way – perhaps by selling printed copies on demand, or by licensing some kind of enhanced access to the online images? There are some in the scholarly world who would focus on the net benefit to scholarship and the net gain in public access, and would welcome such a partnership. My experience suggests that there are also many who would focus on the possibility of corporate gain under such a scenario, and would therefore oppose it in principle, regardless of its potential benefits to scholarship.

As for the concern about institutional control: I have little sympathy for it. While it’s possible that providing free online access to the books in these houses would reduce visitation levels, it seems highly unlikely: the books’ content is inaccessible to visitors now, so access to it can hardly be attracting visitors. And unless the Trust has plans to digitize the books itself and somehow generate revenue from the digital images, there is little or no opportunity cost to letting someone else create the images and potentially profit from their public availability.

There may well be other barriers to the Trust engaging in a project like this. I hope they can be overcome. As of now, the public-domain books housed in these historic properties are a public treasure to which the public that owns them has effectively no access.

Discussion

6 Thoughts on "Public Access to Public Books: The Case of the National Trust"

You, or rather the NT, needn’t worry about increased access to libraries affecting visitors to the properties in the NT. It is such a minor reason for people to visit them (and this perhaps reflects why libraries aren’t in the NT’s mission statement). The houses and bountiful gardens themselves are attraction enough (especially for families with young kids who have energy to expend).

But the books should be made accessible of course. That said, the reason they are not a general pulling point is how obscure and, by today’s terms, niche they are. A few volumes on a single decade’s history of a specific English county, for example. From a time when most folk tilled the land for a living. They are more a statement of how the moneyed gentry felt little or no pressure to, you know, earn a living – as they had the time to write and/or read these tomes, as well as wander in those wonderful gardens.

And of course there will be some genuine social history deep within them for local historians.

But so many are so obscure that it’s hard to see how a for-profit organization could make money. Brand capital out of philanthropic gestures, yes. But hard cash? Nope.

But anyone from overseas visiting the UK should check out the NT for some genuine insights into a world gone by. (And I’m not anything to do with NT other than a grateful member).

Let’s not overlook the wonderful work that volunteers might do. Project Gutenberg is a clear indication that volunteers can make a big difference. As well, those volunteers might well do a better job than a Google staffer who has little interest in what he or she is scanning and OCR-ing. Rasterized page images should only be the start of the digitization process. There is much more that can and should be done to preserve and extend access to these works.

As a regular visitor to National Trust properties, I find Malyn Newitt’s criticisms of the Trust’s libraries slightly unfair. While the Trust does, perhaps almost by accident, own “one of the greatest collections of books in Britain” (Newitt’s description), it is physically constituted as around 140 smaller collections distributed widely around England, Wales and Northern Ireland. Perhaps there was a tendency in the past for the Trust to treat its libraries in much the same way as it treated its paintings or furniture, but times have changed. In recent decades, the Trust has made great progress in promoting the use of its collections, e.g. through cataloguing and the addition of bibliographic records to COPAC. The consequence of this is that the collections are probably far more accessible to scholars now than they have ever been before. Without massive investment in digitisation (or perhaps involvement in ILL), it is difficult to see how access to collections like these could ever realistically be organised, except by appointment. Property-specific catalogues on site or library open-days might have their uses, but do not really solve the core accessibility problem.

As you point out, digitisation would represent one potential solution. However, the impediments are not just financial. The nature of the collections (and their storage environments) means that there are likely to be considerable collection care issues. There would also be the purely logistical challenges associated with digitising highly-distributed collections, e.g. whether mobile digitisation studios would need to be set up in-house, or whether items would be able to be taken off site for digitisation elsewhere. These are probably not insurmountable problems, but they all require dedicated resource, e.g. for inventory control or conservation. These are far more likely to be impediments to digitisation than a misguided desire for institutional control.

Finally, I’m not sure to what extent these collections really are owned by “the public.” The works themselves may mostly now be in the public domain, but the National Trust’s libraries no more belong to the public than do the collections of a university library. The National Trust is not a government body, but an independent charity that gains most of its income from membership subscriptions, property and legacies. Nor does membership lead to additional privileges. The antique chairs in the Trust’s properties may (in some sense) belong to its members; that doesn’t, however, give you the right to sit on them!

Thanks for clarifying the National Trust’s private nature — I was indeed operating under the mistaken assumption that it’s a government entity. (I guess I assumed the word “national” meant, you know, “national.”) So you’re right that the sense in which the public-domain books contained in National Trust properties are “public property” applies only to the content of the books — not to the volumes themselves.

The US Dept. of Energy’s OSTI had an interesting solution to this challenge, called Adopt-a-doc. Patrons paid to have selected documents, mostly older scientific reports, digitized and got an acknowledgement on the digital result. It is a prioritizing filter of sorts. Unfortunately the program was just killed to make way for the emerging US Public Access program.

Ironically OSTI’s public collecting of open access journals was also killed, so open access is taking a hit from Public Access.

Michaeldaybath makes many of the points that occurred to me while reading this interesting article. I have been closely involved with digitising books from a number of institutions for several years, and a couple of additional comments spring to mind. Firstly, duplication. Many books will be found in more than one NT library. A proper catalogue of the entire holdings across the country will highlight this. Somebody would then have to decide (a) whether a few locations might in fact contain most of the material that needed digitising, (b) which copy was in the best state of conservation to be digitised, (c) if different editions existed, which to select (or whether to deliberately digitise all of them, at extra cost), and/or (d) whether marginalia etc. should be included (digitising marginalia legibly brings additional challenges for the photographer). Secondly, akin to this: if a particular work has already been digitised by Google or Project Gutenberg or archive.org, or even a commercial organisation like ProQuest or Cambridge University Press, why would you bother to do it again? Thirdly, don’t even think about this being something for amateur volunteers to do – the kit is expensive and the books valuable; scanning is a highly skilled job (which is why there is a lot of debate out there about the poor quality/incompleteness of many existing scans). This is why you would be talking big money. Several million to make any serious inroads at all. Fourthly, it would be impractical to produce separate print catalogues for each NT library, but it should be possible to put a filter on a unified electronic catalogue that would enable those interested in a particular property to see only the holdings from that library. There are several systems available out there that deal with multiple libraries in the same institution – most UK universities run a union catalogue of this kind – and if the NT is contributing to COPAC, it must be using one of the normal standard formats. Finally, a reality check on ‘public access’ to the actual books. Antiquarian books tend to be both fragile and valuable. Books in special collections, even at the British Library and other truly public institutions, are normally kept in climate-controlled secure facilities, and may only be read in a supervised area after credentials have been presented by the reader. They need to be re-shelved in the right place too, or they will be unfindable next time. There are many issues around potential damage (intentional or unintentional) and theft: the cost of supervision, the cost of insurance and so on. As Michael says, you can look at the chairs but you can’t sit on them! And would it all be worth it for the number of people that would actually want to look at those copies rather than the ones in their usual library or on Google?