While public access to research articles is a fact of life for much of the scholarly community, access to research data – while a top priority for many governments and other funders, who see it as the key to future economic growth – remains a challenge. There are many reasons for this, both practical (eg, lack of infrastructure) and professional (eg, lack of credit, getting scooped). The publishing community can and does already help with the former, for example through support for NISO, CrossRef, CODATA, and other organizations and, increasingly, the development of data sharing and management solutions. Resolving the professional issues, however, will almost certainly require action by research funders and institutions.

While public access to research articles is a fact of life for much of the scholarly community, access to research data – while a top priority for many governments and other funders, who see it as the key to future economic growth – remains a challenge. There are many reasons for this, both practical (eg, lack of infrastructure) and professional (eg, lack of credit, getting scooped). The publishing community can and does already help with the former, for example through support for NISO, CrossRef, CODATA, and other organizations and, increasingly, the development of data sharing and management solutions. Resolving the professional issues, however, will almost certainly require action by research funders and institutions.

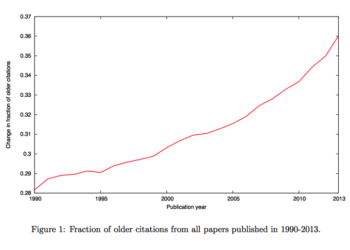

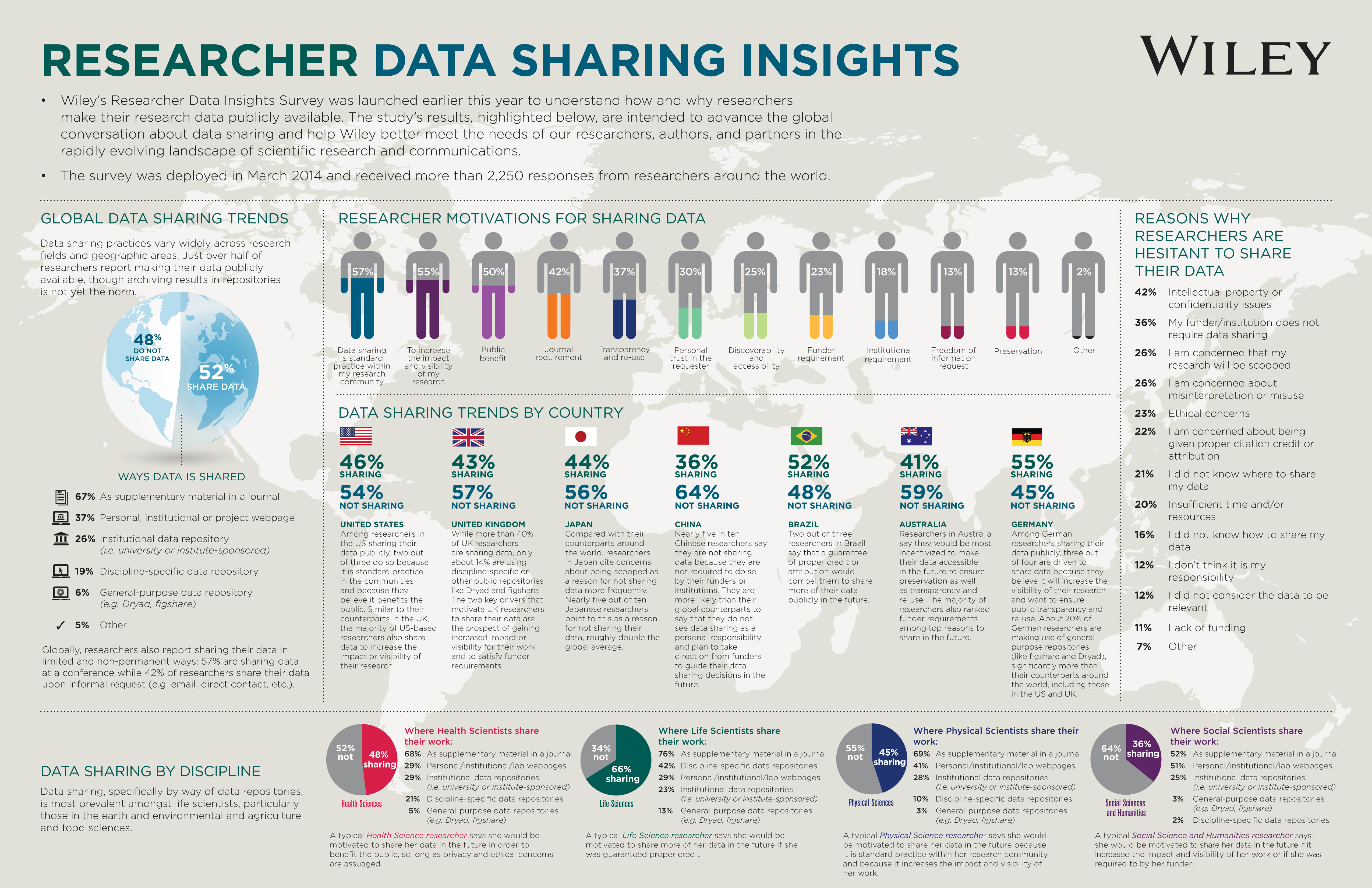

Earlier this year, in an effort to establish a baseline view of data-sharing practices, attitudes, and motivations globally, across a cross-disciplinary set of researchers, Wiley surveyed around 90,000 recent authors of papers in health, life, physical, and social sciences, and humanities. For the purposes of our survey, in order to better understand how researchers themselves view data sharing, rather than imposing any definition of either data or data sharing on them, we allowed the respondents to decide what it means to them. Several of the more common activities researchers see as data sharing – such as sharing at conferences, or on request – would fall short of many definitions of data sharing, which demonstrates why it is so important to listen to the views of individuals in this discussion.

2,886 researchers responded (3.2%), of whom 2,255 (2.5%) were actively working on a research project or had been in the last two years. The respondents skewed towards the Americas (52%), with 30% from Europe, Middle East & Africa, and just 18% from Asia Pacific. The vast majority (87%) were from universities, colleges, and research institutes, with the remainder working in a mix of industry, government, medical, and other organizations. In terms of disciplines, most respondents (37%) are working in life sciences, compared with social sciences and humanities (25%), physical sciences (22%), and health sciences (16%).

So, based on what our respondents told us, who is sharing what, how, and why (or why not)?

The overwhelming majority – 82% – produce data in spreadsheets and CSV files, etc, with only 12% of respondents creating relational databases. Thirty-eight percent of respondents create two-dimensional images as data in the course of their work; 3D images, 12%. Twenty-two percent of respondents are creating executable code/models, 14% collect transcripts and other data from interviews, and 11% are generating video/audio recordings. Surprisingly (to me anyway), for the most part, these files are (relatively) small – over 60% are less than 10GB and only 3.5% are larger than 2TB.

How researchers report that they are sharing data is also revealing. Over two thirds (67%) of the 52% who report sharing (ie around 38% of all respondents) stated that they have done so as supplementary material in journals (which broadly matches Wiley’s own experience), compared with only 19% who say they use a discipline specific repository and just 6% who report using a general repository, such as Dryad or figshare. As mentioned above, many researchers report sharing data in informal, often impermanent ways, that wouldn’t meet formal requirements, such as sharing at a conference (57%) and sharing on request via email, direct contact, etc (42%). In addition a somewhat staggering 37% say they are using a personal, institutional, or project website to share data – again, unlikely to meet any data sharing mandates, and certainly not the best way of ensuring any kind of long-term preservation of the data.

Perhaps most interesting is why researchers say they share data – and why they don’t. For example, German researchers reported sharing the most (55%), with three quarters of those respondents doing so in order to increase the visibility of their work and to ensure public transparency and reuse. Chinese researchers, however, reported being least likely to share (36%), with half the respondents stating that this is because it’s not a funder requirement. Chinese researchers are also less likely than their peers in other countries to say that they see data sharing as a personal responsibility. Other reasons given by respondents to share (or not) data highlight some interesting cultural differences. For example, twice as many Japanese researchers say they worry about being scooped; two thirds of Brazilian researchers say they’d be willing to share their data if they got proper credit or attribution for this; and only 14% of UK researchers say that they are sharing data via public or discipline-specific repositories, compared with a global average of 25%.

The main reason respondents cited for not sharing data is around IP/confidentiality issues, especially in health science, where it was cited as a reason by 68% of respondents, compared with an average of 42% across all disciplines. No funder requirement was the second biggest reason given for not sharing data (36%), while concerns about being scooped, and about possible misuse or misinterpretation of data were ranked third by respondents (26% each).

Unsurprisingly, respondents from different disciplines report different concerns and motivations. For example, as you can see from the infographic, respondents from social sciences and humanities as well as the physical sciences would be motivated to share their data in order to increase the visibility and impact of their work. Life scientists, however, would be more motivated to do so if they were guaranteed to get credit, while respondents working in health science, told us that they are most concerned about privacy and ethical issues around data sharing.

So what are the overall lessons learned from our survey?

Publishers have, by accident rather than design, hosted data in the form of supplementary material for a number of years now. However, this compares poorly with other services which are far better suited to the curation and long-term preservation of research data. We could undoubtedly do more, therefore, for example, through requiring journal authors to archive their data. Some journals and societies already do this, including Molecular Ecology, which introduced a data archiving policy in 2011 when it adopted the Joint Data Archiving Policy. Since then, the journal has deposited over 1,000 data packages in Dryad – an extraordinary achievement in just a few years. Examples of societies that have introduced data-sharing policies for their journals include the American Geophysical Union, the Society for the Study of Evolution, and the British Ecological Society, whose Executive Director Hazel Norman recently wrote an excellent article about their experience. And most genetics journals require authors to deposit any DNA sequences they reference in GenBank.

Next, with lack of funder requirements cited by respondents as a major reason for not researchers not sharing their data, governments and other funding bodies need to play a more active role. Although some already require their researchers to submit data management plans, these are not always enforced – and many funders have not yet even developed their requirements. Support for and investment in the data repository infrastructure is also critical – discipline-specific and general repositories offer researchers a simple, safe, and permanent way to share their data, but they are underused at present and more investment will be needed in order to support any significant growth in future demand.

Last, but by no means least, we urgently need to find ways to credit and attribute researchers for data sharing – again, an area where institutions and funders need to show leadership. Not only will this require adoption of data citation standards, but also a cultural change in how researchers are rewarded for creating, analyzing, and preserving data. If adopted, the Contributor Roles Taxonomy CRediT initiative, led by Amy Brand and Liz Allen (see my interview with Amy) could help significantly with this. Varsha Khodiyar, Karen Rowlett, and Rebecca Lawrence also make some useful suggestions in their recent Learned Publishing article, including the expansion of data publishing opportunities and implementation of – and recognition for – data peer review.

There is certainly no one-size-fits-all, silver bullet solution to the challenges of data sharing – different communities, researchers, organizations, and countries are likely to continue to need different solutions. But there is a real opportunity here for the wider scholarly community – funders, institutions, publishers, societies, and others – to work together to develop data-sharing standards and best practices, as well as to encourage the use of existing repositories and create new ways of sharing data.

A full report including additional data will be available in due course. Thanks to colleagues Laura Fedoryk and Liz Ferguson for their help

Stop Press: A Knowledge Exchange report, Sowing the seed: Incentives and motivations for sharing research data, a researchers’ perspective, published yesterday (November 10) provides a much more in-depth look at many of the issues we covered in our survey, and includes recommendations for how all stakeholders – funders, societies, publishers, research institutions, data centers and repositories, and Knowledge Exchange itself – can encourage and support research data sharing. (Note, link was updated on December 18, 2014 as original report was amended)

Discussion

24 Thoughts on "To Share or not to Share? That is the (Research Data) Question…"

This article reflects a great deal of advocacy for greatly increased data sharing, but is that really what we want? Some of the policies being advocated are highly intrusive on the researcher, especially the use of mandates. Is this the way we want science to work, with funders and institutions acting as regulators of researcher behavior?

I am particularly concerned by the so-called culture change called for in this statement: “Last, but by no means least, we urgently need to find ways to credit and attribute researchers for data sharing – again, an area where institutions and funders need to show leadership. Not only will this require adoption of data citation standards, but also a cultural change in how researchers are rewarded for creating, analyzing, and preserving data.”

The implication seems to be that the reward system of science today is wrong and that urgent action is required to fix it. I am not convinced.

The US Government considered these issues in detail via the Interagency Working Group on Digital Data (IWGDD), which I did staff work for. Our result was the concept of the Data Management Plan (DMP) which is now mandated by OSTP. The idea of the DMP is that researchers will consider data sharing then make their own judgement about which way is best. This is very different from forcing science to change how it works, which some data sharing advocates apparently want.

Question: do you have any insights as to the effect of data sharing on replication? Is research more or less likely to be replicated when the data is readily available. Whatever the effects, if there are any, do those effects advance or retard the goals of science?

I would be interested to learn if researchers are complaining that they do not have access to data they need. In short, is data available to those who need it?

Good question Frank. It’s not something we specifically addressed in our survey, nor, as far as I can see, did Knowledge Exchange, whose report only mentions replication in passing as a benefit of data sharing. If anyone has looked at this I’d be very interested and, if not, then it’s something we should attempt to gather evidence on in future.

I am surprised by the negative attitude to data sharing shown in the first comment. To my knowledge most publishers encouraging data sharing and have no problems with accepting the usefulness of data management mandates. It is often said that for most researchers the maxim which best fits their attitude to data sharing is a positive – your data is my data – with a negative – my data is my data but I cannot see whether any researcher should not only to be willing to deposit data to make it available but also structure the data (as far as possible) to make it usable by other scholars, It is obviously not unreasonable for researchers to ask for an embargo so that they can make first use but then why should anyone want to stand in the way of other people using it for their own research. Eefke Smit who (inter alia) is the data person for STM has written on this topic – for example in http://www.dlib.org/dlib/january11/smit/01smit.html,

Anthony

First, the comment to which you refer was not written by a publisher.

Second, I don’t see much opposition to this from the publishing world, except in that there are policies being floated around that ask publishers to act as the enforcers of data availability rules. This creates additional cost in terms of employee effort, overhead, monitoring systems and compliance systems for journals, costs for which no funds have been offered.

Like many other policies that have recently been proposed around science publishing, it is a broad brush that puts responsibilities on parties further down the line. Rather than crafting a nuanced policy that emphasizes data types that are reusable, or that provides significant funding for mechanisms for all of this data to be made publicly available to everyone forever, the policies just basically ask for everything and then pass the buck, hoping someone down the chain can figure out the details (universities, publishers, researchers).

Indeed I am not a publisher. As I tried to make clear, I am an analyst of federal policy, in this case data policy, where I was an active participant in policy development. My point is that many of the more radical proposals for data sharing have been carefully examined and rejected as US policy. It is important to understand the government’s reasoning or “negative attitude” toward these proposals. A major part of this is the belief that scientific communities, not government funders, should decide how to handle data sharing. Perhaps our final report will be helpful in this regard. See https://www.nitrd.gov/About/Harnessing_Power_Web.pdf.

This is a complex and nuanced subject, so much to digest here. A few thoughts:

If the goal is reproducibility, then data is less valuable than requiring authors to publish a detailed methodology for how they produced those results. It always strikes me as odd that funders are asking for one without the other. Publish your data and I can look at it and see if it supports your conclusions, but I can’t necessarily tell if the data itself (and hence the conclusions) are valid. Publish your protocols and I can collect my own data to see if what you collected was accurate.

If the goal is reuse of data, then this matters in some areas more than others. There are types of data (DNA sequence, economic data, sociological surveys, computer code) that lend themselves very easily to reuse. Other types of data, often collected under very specific conditions to ask a very specific question, has little value for reuse. And yet, for reasons of simplicity, we are again facing a set of policies that treat all data as equal. This sort of blunt, brute force approach to policy is problematic and creates waste along with the good that is offered.

Last, but by no means least, we urgently need to find ways to credit and attribute researchers for data sharing – again, an area where institutions and funders need to show leadership.

Don’t things like authorship and citation work here?

David,

Absolutely agree with you about reproducibility. I noticed that in the debate on reproducibility many people take the path of the least resistance, suggesting all kinds of IT-based solutions for better storage and sharing of the data. It is true that more data can help in some computational areas (e.g. bioinformatics) but have little use for the majority of experimental scientists. For them, the ability to reproduce is mostly dependent on the knowledge of methods.

For example, in biological sciences, the introduction of genomics methods in early 2000s generated a lot of new data, but it did not improve the reproducibility.

Is it possible that the debate is going toward the data because IT solutions are perceived as easy, quickly to implement and sexy (aka “disruption”)?

Moshe

Thanks Alice, great posting, interesting results with China, perhaps there are also cultural issues there that might factor with the results. As another example point/benefit for data sharing (and publishers being proactive here), the PLoS supp data portal powered by figshare had more than 250,000 views of the data last month. This is a strong SEO factor/benefit pointing traffic back to the journal article.

Thanks for a very informative posting Alice and very insightful.

I really like your observation that the culture of reward needs to shift in order to make it possible for researchers to share more of their work. This doesn’t just apply to data of course but to all kinds of intellectual outputs including things like techniques and analysis algorithms.

Yesterday, I was at a workshop organized by CCC on the subject of content sharing. One of the sessions was a Q+A with a researcher who at one point was asked about her attitude to sharing data, both positive and negative. She was nervous about the idea, citing concerns about what might happen to the that data and how it might be used by competitors. She also mentioned how she had, a couple of years ago made an observation during her work that she had not made public because she was keeping it back in order to have it be part of a bigger story which could be published in a higher impact journal. Despite the fact that the data could be useful for science, she found herself unable to make it available or even discuss it, for fear of somebody else replicating it and claiming it for their own discovery. In effect, fear of being denied credit for her work.

One way in which we might, as an industry, accelerate the progress of science is to enable the ‘publication’ of data, ideas, techniques, code, or whatever type of intellectual output in such a way that researchers can be sure that they will get the appropriate credit for their work and are therefore be less fearful of sharing.

I thought that what is being asked for is the specific data behind a published piece of research. If something is found while doing research that is not discussed in the paper, then I would think that data can remain with the researcher and need not see the light of day until the researcher desires to do so.

The US government policy includes datasets behind papers, but appears to go beyond that (interpret at your own risk):

To the extent feasible and consistent with applicable law and policy2; agency mission; resource constraints; U.S. national, homeland, and economic security; and the objectives listed below, digitally formatted scientific data resulting from unclassified research supported wholly or in part by Federal funding should be stored and publicly accessible to search, retrieve, and analyze. For purposes of this memorandum, data is defined, consistent with OMB circular A-110, as the digital recorded factual material commonly accepted in the scientific community as necessary to validate research findings including data sets used to support scholarly publications, but does not include laboratory notebooks, preliminary analyses, drafts of scientific papers, plans for future research, peer review reports, communications with colleagues, or physical objects, such as laboratory specimens.

How can you say all that without taking a breath? I would use the 2d Amendment citing the clause set off by commas “,a well regulated militia,” as a defense and example for not citing everything.

Citing the Bayh-Dole Act (http://en.wikipedia.org/wiki/Bayh–Dole_Act) might be more effective if you don’t want to make things publicly available.

Not surprisingly, the Federal data policy is ambiguous. On the one hand it says “…digitally formatted scientific data resulting from unclassified research supported wholly or in part by Federal funding…” which suggests that all data resulting from research should be accessible. But then it defines data (for the purposes of this rule) “…as the digital recorded factual material commonly accepted in the scientific community as necessary to validate research findings…” which suggests that absent specific findings there is no data to be made accessible. (Enter the lawyers, stage left.) The fact is that “data” is a confusing concept.

The link to the full report (published November 10th) as referred to in the STOP PRESS, is broken.

I have managed to find http://www.knowledge-exchange.info/Default.aspx?ID=733 but it does not have a link to the pdf of the report, just a short description.

Can you please advise the correct location to see the full report.

Thanks

Thanks Aaron, we’ll get a corrected link up as soon as possible.

Apologies for the delay – apparently the study has been taken down due to “significant errors and omissions” – see

http://www.knowledge-exchange.info/Default.aspx?ID=733