Recently, PBS NewsHour ran a fascinating story called “The Internet’s Hidden Science Factory.” The reporter, Jenny Marder, detailed how Amazon’s Mechanical Turk has created a shadow network of experienced science survey respondents, and why these semi-pro respondents create problems for researchers, editors, reviewers, and the research itself.

Mechanical Turk is Amazon’s distributed workforce service, which (air quotes required) allows “employers” to “hire” “workers” to complete tasks for a small fee per completed task. In most cases, it rewards volume of work. One successful use of Mechanical Turk has been by researchers looking for readily identifiable pools of research subjects to take online surveys and interact with online tools. Because there are plenty of requests to be had, “Turkers” like to sign up for these online research opportunities, and many do it again and again.

As Marder writes in the online version of the story:

These aren’t obscure studies that Turkers are feeding. They span dozens of fields of research, including social, cognitive and clinical psychology, economics, political science and medicine. They teach us about human behavior. They deal in subjects like energy conservation, adolescent alcohol use, managing money and developing effective teaching methods.

People who have analyzed the work done by Turkers notice a few problems. Their mid-survey dropout rates are higher, which can skew the results. There are no environmental controls, so survey participants could be watching television, drinking, or letting their 7-year-old do the work. But the biggest problem is repeated exposure to research surveys and tools. This robs the research of spontaneous or “gut level” responses, which fouls the results. As one Turker who frequently takes surveys is quoted as saying:

It’s hard to reproduce a gut response when you’ve answered a survey that’s basically the same 200 times. You kind of just lose that freshness.

If you sense by now that a study was conducted around this very phenomenon, you’re right. A group of researchers compared Turker and non-Turker behaviors in a classic psychology experiment (the “public goods game,” which tries to gauge altruism levels). Published in Nature Communications, the study found that Turkers had lost the altruistic impulse the game usually detects in novice participants, largely because they’d learned that altruism isn’t rewarded at the end. As Marder writes in her story:

The first is that frequent Mechanical Turk workers are fluent in these experiments on arrival. They know how to play the game. But also, perhaps more importantly, their natural human impulses from daily life, as they apply to the game, no longer exist.

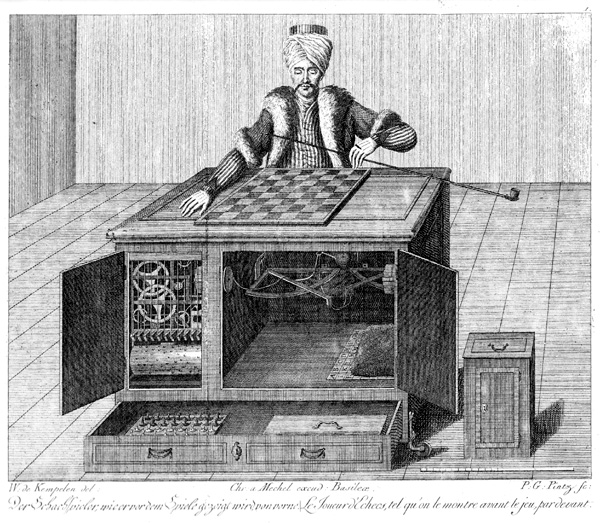

Perhaps we shouldn’t be surprised by this. After all, the inspiration for the name “Mechanical Turk” was an 18th-century chess-playing contraption known as the Turk, designed to fool audiences into thinking a machine was playing chess. In fact, it was an illusion: a skilled human chess player hidden inside operated the machine. Once again, we see a clear example of how what looks like technology really boils down to humans working through technology, with some new problems emerging because of it.

We ask a lot of questions about how a study was conducted. Do we need a new filter on methodology to eliminate studies done in science factories like Mechanical Turk? It’s an intriguing story, and the issues it raises bear attention.

Discussion

4 Thoughts on "Loaded Dice — The New Research Conundrums Posed by Mechanical Turk"

Do any serious scientists, or for that matter any serious people, pay attention to data generated by something like Mechanical Turk?

Perhaps I speak too soon. I wonder if Karl Rove used data derived from MT for his Ohio predictions!