This past week at its Annual Conference in Washington DC, the International Association of STM Publishers (STM) released its 2015 Tech Trends, the result of an exercise held during STM Week in London last December. Gathering together representatives of 26 organizations, the STM organizers asked these individuals to identify the top three technological trends they saw impacting their organization’s publishing activities over the next three to five years. The mix of organizations was relatively even, consisting of non-profits, scholarly societies, well-established university presses and commercial entities. Through a process of iterative discussions, an interesting view of what’s changing for this sector of the information industry emerges.

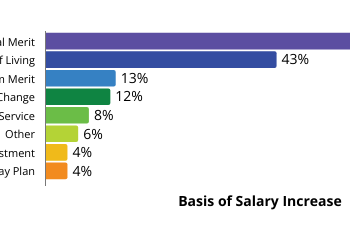

Presented as a colorful infographic, the STM Tech Trends consists of three inter-related core trends:

- The first is the emergence of Data as a First Class Research Object. For those unclear as to the significance of that phrase, such objects are key to ensuring the ongoing reproducibility and reusability of scientific research material. In that light, such objects must be validated, made discoverable, made accessible, curated, and preserved in the interests of making them reusable by other researchers across time and geographical boundaries. The result is that, as the STM Report, Fourth Edition (March 2015) said in its executive summary, publishers are challenged by the explosion in data-intensive research “to create new solutions to link publications to research data…, to facilitate data mining, and to manage the data set as a potential unit of publication.”

- A second inter-related trend pertains to the emerging importance of Reputation Management. In the interest of accountability and transparency, funding bodies seek to better gauge the return on investment of grant dollars while institutions seek to evaluate and reward faculty contributions in attracting those dollars. Over the past decade, an array of new metrics has emerged, been tested for validity, and is now being tracked. Consequently, the researcher’s impact is assessed by more than just a publication track record. At the same time, there is a recognition that any metrics must be constructed in ways that guard against gamification and other misuse. Publishing has always played an important role in career management for researchers. Publishers see ways that they may continue to assist researchers with career management while demonstrating their contribution to institutions.

- Driven to some extent by the previous two is a third nascent trend: the scholarly article as a crucial element in a hub and spoke model. While perhaps the least obvious of the three to external eyes, the developing form of the scholarly article as published output encompasses a variety of non-textual forms of content (video, data, software methods, other media, etc.). Those elements will ultimately be packaged, presented, and preserved in a smart network of connections that more effectively meets the needs of specific communities. In such a smart network, is the traditional article still recognizable as it was in the print environment? Not necessarily, and even the term “article” may be a misnomer of sorts. But whatever those packaged elements may be called, it is clear that STM publishers are thinking about what form the evolving scholarly record may take in science and in academia. They recognize the forces of change and are anticipating how they can most appropriately serve the interests of the scholar and move most nimbly to serve the researcher. But there is a key question that still requires an answer. How rapidly will each community or field of science push the evolution of long-standing practices surrounding appropriate documentation and presentation of results?

The infographic presentation offered up by STM shows these three trends as multiple wheels or cogs driving change, but the connectors between those wheels reflect some of the uncertainties that arose in the minds of those participating in this brainstorming session. How might publishers better support the researcher’s intellectual output for purposes of establishing priority and certification? Who will fund the various infrastructures required in support of this new type of publishing? How to solve issues of integration, standardization and preservation? How to best help protect the researcher in an Open Science and sharing environment against misuse or gaming? As we envision the future of STM and gauge where the technology may take us, these questions are entirely valid and require — if not firm responses — at least a sense of potential collaboration amongst all stakeholders.

It is all too easy to misrepresent these valid concerns from publishing professionals as springing from a desire to preserve a more traditional status quo. That is, however, a far too facile characterization. Publishing professionals, those from scholarly societies in particular, have a vested interest in understanding and acting on the concerns of their members. At the moment, due to the various disruptive forces discussed here at the Scholarly Kitchen, research and the scholarly communication of results between colleagues are changing. Naturally, we see new approaches and tools emerging as researchers themselves tinker with creating tools and resources that better support different tasks and workflows. But tinkering and nurturing new approaches exacts a price. As Eefke Smit, Director of Standards and Technology, STM, noted to me during our conversation about the 2015 Top Tech Trends, the scholarly publishing landscape is full of publications, tools and databases that were initially created by a working researcher dissatisfied with the tools or resources available at hand. It was only when such initiatives became successful and more demanding of the researcher’s time and attention (conflicting with his or her primary research goals) that the operations often would be taken on by a more adequately resourced publishing entity.

As a researcher who joined the STM Tech Trends 2015 brainstorming session reportedly remarked, “I had perhaps expected the publishers to focus on the question of how might they make more money from these trends. But the key question was actually what is it that the researcher really wants and needs.”

Viewed in that context, the results of the STM brainstorming session make clear a commitment from the publishing sector to continue to adapt to and support this on-going shift in the communication of scholarship by whatever means may best serve the broader research communities’ requirements. What’s needed is a joining of such efforts with those of information professionals and scholars in order to manage needed changes with the result of a more effective out-pouring of new knowledge and scientific understanding.

Discussion

15 Thoughts on "Emerging from the STM Meeting: 2015 Top Tech Trends"

There look to be over a hundred issues, sub issues, etc., in the graphic, which seems right. There is also a latent issue tree structure, plus a network of sorts, both also right. It is very interesting that OA only gets one minor mention — OA funding. Is OA off the table? However, I would not call these tech trends, but rather tech driven issues. For example, reputation management is an issue, not a technology.

It seems to me that the items mentioned are available, in development or being implemented as I type. So what we can say is that the items will be refined. There is very little new here!

Regarding speaking about money. The publishers were talking about money. None of what has been accomplished, is in the process of being instituted or in the planning stages was/is only being done in order to make money!

Eefke Smit noted that “the scholarly publishing landscape is full of publications, tools and databases that were initially created by a working researcher dissatisfied with the tools or resources available at hand. It was only when such initiatives became successful and more demanding of the researcher’s time and attention (conflicting with his or her primary research goals) that the operations often would be taken on by a more adequately resourced publishing entity.”

This also applies to Scholarly Kitchen. With the support of some publishers I initiated Bionet.journals.note in the 1990s with a mandate not too dissimilar to SK. While put to good use by Harnad when addressing the open publication issue, the pursuit of my primary research goals left no time to gate-keep and the quality of contributions soon degenerated. Bravo SK and good luck!

I remain concerned about the characterization of data as a “first class” research object. Obviously data publishing and data availability should be on this list of emerging trends, but I worry about that label. Data is not the end goal of hypothesis-driven research, and will always be secondary to the understanding and interpretation of that data. A technician collects data, a scientist understands data. Research institutions and funders are unlikely to give equal weight to raw data collection as compared with actual conceptual breakthroughs. Ask yourself, who you would rather hire as a new professor, the person who ran the machine that collected all the information, or the person who took that information and turned it into a new drug/technology/theory?

So while yes, data is of great importance and a rapidly advancing focus for the entire research community, should we really be placing it at the top of the pyramid, or is it a step on the way to what we wish to achieve?

Agreed, nor do I see publishers spending the kind of money on data that they spend on articles, because the revenue is not there to support such a system.

I’m not so sure about that. The drive to make data accessible is creating some really interesting new market opportunities. Some commercial publishers are building data repository-like services (Nature/Macmillan with FigShare for example). There are already several “data journals” where datasets are published like papers (usually OA, so author processing charges are paid). As funding agencies begin to require release of data, there’s also an opportunity to create author services, ways to ease the path and help make this happen in an efficient and low-effort manner. So while I don’t think of it as a direct replacement for the research paper, there’s definitely new opportunity and new revenue streams here, and we’re already seeing many publishers wading in.

I suspect the returns will never be more than small because the data associated with a paper is enormously larger and difficult to produce, while far less valuable. Many publishers wading in looks a lot like herd behavior. But one never knows. Revolutions are like that.

Perhaps the value for the publisher lies not in the value of the datasets themselves, but in the need researchers have for making them public.

As noted by Canadian Nobelist John Polanyi, data “only appears to be freely available to all. In fact, it is available only to those who understand its meaning, and appreciate its worth.” What may seem junk to some may appear gold to others. The temporal transition is worth a comment.

Decades ago one had an idea. One then had to think up experiments to test the idea, write a grant application and stock one’s laboratory with supplies and personnel. Only then was one in a position to generate appropriate data. Once the data were generated, one had to analyze them with one’s own tools. Today one can skip all this. One goes to the internet and asks two questions. Where is the data store? Where is the software to analyze that data? Both can be downloaded with a few clicks. What in the past took years can now be completed within days or weeks, provided one knows where to look, what to pull out, and what to do with it.

So by all means let us have data repositories, lots of them, but do not let them become an end in themselves, as happened in the case of the widely lauded genome project. Those of us trying to understand the human genome no more needed the entire DNA sequence than you would need an entire dictionary to understand how it worked. Paradoxically, the diversion of funds from the bioinformatics analysis of the first few thousand sequences that became available, to the human genome project, has probably delayed progress in understanding that genome. And with funds went careers. Data-gatherers are now in abundance. Analysts are thin on the ground so, to return to Polanyi, where are the folk who are going to understand meaning and worth?

One can only skip the research that generates the data if someone else has already done it, which is highly unlikely for any new question. Of course there are cases where large new data sets can be mined by many different people asking different questions, making it something like data for its own sake, but this is not the same as the data generated by a given research project, far from it. One of the big confusions in this whole data discussion is that the term “data” refers to a vast array of very different things. Among other things these different things may differ by many orders of magnitude in size. This makes the term “data” virtually meaningless, which unfortunately does not keep people from using it to make strong claims.

The Tech Trends analysis seems excessively “object” focused rather than “process” focused. A manuscript is not the end point. If a manuscript has imperfections (e.g. poor analysis, shoddy data etc.), in most cases the solution is not to “correct” the original manuscript, but to publish another manuscript that highlights the failings of the first. Scholarly journal publishing is not about manuscript assembly, it’s about curating a process.

It is not surprising to see non-traditional media of scholarly output as one of the upcoming trends. In this age of digitalization, an increasing number of organizations and scholars wish to widen their outreach as well as mine for relevant information, and social media and non-print forms of communication serve as the vehicles. One major change this has brought is the shift in the primary source of information. While traditional print sources of information dominated for years, digital platforms have taken over. Though this has vastly improved data accessibility and availability, it also raises the question: To what extent can one rely on digital platforms as the trusted primary source?