Editor’s Note: The Chronicle of Higher Education recently published an article on the struggles libraries are facing with digital infrastructure costs, which included this quote:

“In the beginning, we all thought, everything is going online, it will save us a ton of money. But that’s not true. Now that’s pretty widely understood.”

It brought to mind Kent Anderson’s post from last year on why digital publishing is so expensive, and why we shouldn’t expect that to change any time soon (also worth revisiting are Kent’s earlier pieces on the costs of keeping your systems up to date and on accounting for technology costs). Meanwhile, the RA21 project promises much-needed upgrades in authentication and user experience, but these will likely come with their own costs. We sit in a precarious position, trying to respond to demands for new and better ways to present information while regularly being told that prices are too high and that libraries don’t have any money. This may be leading us further down the path to market consolidation, as the largest and most profitable publishers are best suited to absorbing these extra costs, while smaller publishers with thinner margins may not be able to keep up.

A core conceit behind the new information economy is that delivering content becomes much cheaper when the content is in digital form. This conceit has informed, and continues to inform, a lot of thinking, from how publishers charge to what it should cost to host or launch a journal online. A lot of price sensitivity stems from the assumption that digital is cheaper than print. Recently, eLife was caught up in its own financial contortions around technology costs, setting aside capital expenditures in order to demonstrate lower per-article charges, even though capital and operating expenses both need to be paid for, whatever the financial sleight of hand.

The idea that technology is cheap persists despite abundant evidence to the contrary — high broadband bills for businesses and households; publications and publishers struggling to make ends meet in the digital age; and higher costs to society overall due to hacking, phishing, and other security breaches. Most of these costs are passed along indirectly through higher credit card interest rates, bank charges and fees, and so forth, but the overall trend is undeniable.

Put another way, if providing information on the Internet is so cheap and easy, why all these costs and financial challenges?

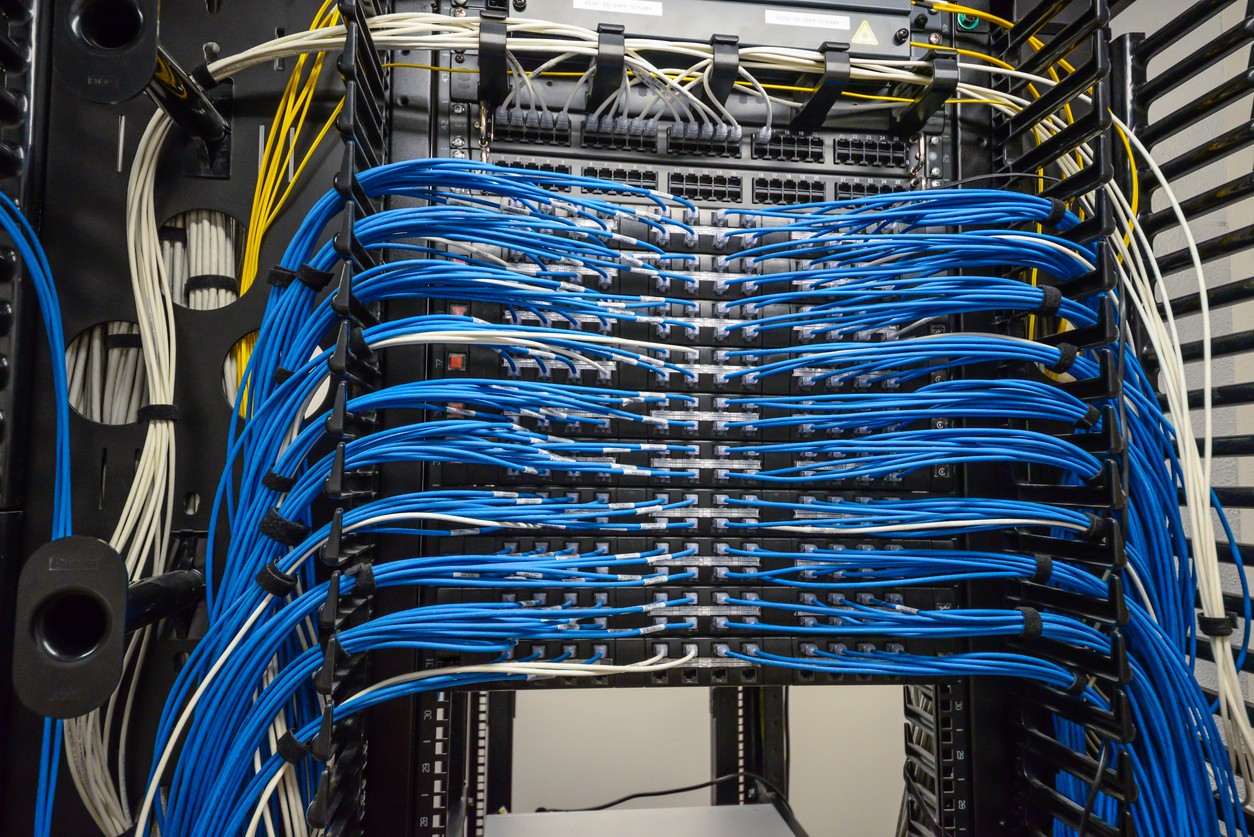

In scholarly and academic publishing, we face increasing platform costs, staff expenses, consultant fees, software licenses, and infrastructure costs because running a digital operation is expensive. And the costs tend to be fixed rather than variable, which means margins have to be higher to withstand the fluctuations that revenues inevitably undergo. The expense line can’t be managed as easily to match revenues in the digital age, which means an axe is often wielded where a scalpel might have been used before.
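To make the fixed-versus-variable point concrete, here is a minimal sketch in Python. Every figure in it is hypothetical and chosen only for illustration; none comes from a real publisher. The function name `operating_margin` and the two example cost structures are assumptions of this sketch. It compares two publishers that start with the same revenue and the same 20% margin, one with mostly variable costs and one with mostly fixed costs, and shows what a 15% revenue dip might do to each.

```python
def operating_margin(revenue, fixed_costs, variable_cost_ratio):
    """Return operating margin as a fraction of revenue.

    fixed_costs are paid regardless of revenue; variable costs are
    assumed to scale linearly with revenue (a simplification).
    """
    variable_costs = revenue * variable_cost_ratio
    profit = revenue - fixed_costs - variable_costs
    return profit / revenue


# Hypothetical cost structures; both yield a 20% margin at $1,000,000.
MOSTLY_VARIABLE = {"fixed_costs": 200_000, "variable_cost_ratio": 0.6}
MOSTLY_FIXED = {"fixed_costs": 700_000, "variable_cost_ratio": 0.1}

baseline_revenue = 1_000_000
reduced_revenue = baseline_revenue * 0.85  # a 15% revenue dip

for label, structure in [("mostly variable", MOSTLY_VARIABLE),
                         ("mostly fixed", MOSTLY_FIXED)]:
    before = operating_margin(baseline_revenue, **structure)
    after = operating_margin(reduced_revenue, **structure)
    print(f"{label}: margin {before:.1%} -> {after:.1%}")
```

Under these assumed numbers, the mostly variable publisher's margin slips from 20% to about 16.5%, while the mostly fixed publisher's margin falls to about 7.6%. The same revenue dip does far more damage when the expense line cannot shrink along with it, which is why a fixed-cost operation needs a thicker margin to begin with.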

Three trends indicate that the costs of the digital information economy are only going to rise over the next decade.

First, there is the trend represented by commercial entities like Google, Amazon, and Facebook laying transoceanic cables — that is, bandwidth becoming more precious, scarce, and expensive.

Second, there is the reality that software engineers (back-end, full-stack, and so forth) are going to continue to command higher salaries as competition for their services increases.

Finally, there will be costs to greater online security — and if you don’t think people are willing to pay for that now, you haven’t been watching the US elections.

Amazon, Google, and Facebook are moving to lay dedicated transoceanic cables between the US and Europe, the US and Asia, and the US and Australia/New Zealand in 2017. With the oceans largely unregulated when it comes to laying cable, this approach proves cheaper than satellites, balloons, or other schemes the companies have tried to increase their reach. But increase their reach they will, because bandwidth cannot be assumed, and paying others for it on the open market doesn’t make sense for these major players. It’s too unreliable and expensive. Facebook and Google are collaborating on a trans-Pacific cable, while Facebook and Microsoft are working with a Spanish telecom on a trans-Atlantic cable.

This kind of infrastructure is not cheap to create or operate. Repairs need to be made, upgrades contemplated, and so forth. But increasing bandwidth is the path to lower costs and more efficiency for these giant Internet companies, and will provide them with yet another advantage in the next decade.

As Mark Russinovich of Microsoft is quoted as saying:

“It’s about taking control of our destiny. We’re nowhere near being built out.”

How will this drive up costs for everyone else? Directly and indirectly. First, these companies will need to recoup their investments and operating expenses, which means passing costs along in various ways. Most of these won’t be felt directly, as a $5 increase in a Microsoft license or a $5 increase in Amazon Prime membership will likely go unnoticed. But the costs will be passed along, and then some. Second, as this bandwidth comes online, costs for Amazon Web Services, Microsoft Azure, and the Google Cloud Platform will also rise, and many publishers use these services to host various parts of their infrastructure. Third, some publishers will want to maintain their online delivery advantages, which means they may want to buy into infrastructure like this to guarantee reliable delivery. Finally, in a larger sense, this signals the end of assumptions about infinite bandwidth. Reality itself will bring a de facto end to net neutrality, no legislation needed.

This is emblematic of the increasing build-out of infrastructure generally, a trend that has accelerated over the past three to four years in scholarly publishing, from various Crossref initiatives to CHORUS to ORCID to COUNTER to Kudos and so forth. For publishers, most of whom support these via a membership plan, the costs are real. And as data initiatives bring new supporting services online, costs will only increase.

Within these services and overall, the other cost driver pervades — the expensive computer scientists, engineers, programmers, designers, and database experts behind the scenes. Hiring for these positions can be time-consuming and difficult, as finding the talent in the first place isn’t easy, and competing for it can be harder still. This is why so many services are emerging to provide programmers and engineers. But even these are often torn asunder when one of “the bigs” shows up in a market — for example, when Facebook decides Elbonia is a good hunting ground for engineers, you can bet that the contract shops there empty out pretty quickly as big signing bonuses, offices with espresso machines and climbing walls, and fat paychecks draw staff away.

The expense, difficulty, and transience of engineering talent make two things less likely for most publishers: independence and lower prices. Most publishers can thrive only by partnering with providers who can spread the risk and costs of hiring across multiple customers, and even then, prices are unlikely to fall unless there is a great business model on the provider side.

As the infrastructure becomes more robust, new services and possibilities will emerge, giving the engineers new things to do, while also adding various stakeholders who will increase requirements on publishers and their systems. Security is already a big issue, and it’s bound to get bigger and more costly to manage. Just recently, Google announced that, starting in January 2017, Chrome will shame sites that don’t comply with https:// security protocols, which may cause problems for many scientific and scholarly journal sites, as most are not currently in compliance. From a top-of-mind scan of the major journals, NEJM, Science, BMJ, and Nature do not meet Chrome’s upcoming standard, and they (and their third-party ad services) will need to dig in over the coming weeks and months to adjust if they wish to comply. (To their credit, eLife and PMC do use https://, but they don’t have advertising to offset their costs.)

The move to two-step authentication by Apple, Google, and others is another sign of security as a layer receiving increased attention and generating greater costs. Sending thousands or millions of text messages to cell phones to close the loop on two-step authentication costs money. Building, maintaining, and monitoring secure systems costs money. And since security is a moving target — every new system deployment invites intrusion and engineered defeat — costs tend to gravitate upwards.

We’re also seeing new requirements with various data initiatives, and areas like disclosure, open review, annotation, marketing, and so forth are adding wrinkles to our environment. The rampant astroturfing of social media by non-US sources, including many in Russia, has also raised awareness of the pernicious aspects of anonymity online. Thus, we have a virtuous cycle of infrastructure and possibility creating new demands and expectations for publishers to meet. To respond, they will need new technologies, which will require more scarce engineers, who will be able to charge more, and these costs will need to be borne.

In short, any assumptions that technology costs will fall significantly, that digital publishing will become a cornucopia of free and cheap resources, or that some steady state of technological satisfaction will emerge will very likely lead you to the wrong financial conclusions. You will budget inaccurately, charge too little, or both.

Given increasing user demands, infrastructure requirements, editorial requirements, and customizations for our market, it’s safe to say that “we are nowhere near being built out.”

Discussion

2 Thoughts on "Revisiting: Why Technology Will Not Get Cheaper"

Coincidentally, this misguided belief persists among academic editors, or at least this PeerJ academic editor in a blog interview (https://peerj.com/blog/post/115284879575/online-publishing-should-be-less-expensive-than-print-interview-with-peerj-editor-kenneth-de-baets/). Unfortunately, the publisher does nothing to disabuse the editor of this belief.

I think the semi-polite term for these people is “tourists.” Because we interact with a lot of academics, we have a lot of tourists in scholarly publishing, some of whom are the equivalent of Ugly Americans — they think the native population is backwards and so they SPEAK ENGLISH LOUDLY TO US because they think doing so makes them more intelligible.

It’s better all around if the visitors are interested in learning about the economy they’re visiting, and are open to learning how and why it developed, and where it is headed. It’s also a missed opportunity when a tourist invites an education like this person did, and nobody bothers to explain why things are headed in a different direction than they believe.