I’ve been excoriated a few times in comment threads when I’ve asserted that scientific information made accessible through open access (OA) publishing doesn’t necessarily give users access to the information — that actually, unless you’re well-educated and possess specialist knowledge, much of the information in the scientific literature will be inaccessible simply because you can’t understand it, much less put it to use.

With some experience teaching literacy to adults and English to non-English speakers, I know you can’t take language mastery for granted. Speaking isn’t the same as reading, fluency in daily activities doesn’t equate to comprehension of abstract or specialized information, and comprehension goes beyond words and sentences to realms and signals that are often “meta” to the words and sentences used.

Most scientific papers use a vocabulary pitched to a highly educated audience. It’s then sprinkled with jargon for a specialist audience familiar with statistics and trial design (quick, name the differences among cohort, cross-over, and cluster trial designs). Making sense of even the methods requires appreciable amounts of knowledge.

But let’s begin with readability.

A common measure of basic readability is the Flesch score. Standard reading level corresponds to a Flesch score of about 60 or higher, with lower scores indicating more difficult reading. Various studies peg the scientific literature at a reading score of around 30, and one 2002 BMJ study found that British English is actually more readable than US English. A score that low makes the information difficult or impossible to comprehend for the majority of non-scientific readers.
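For readers who want to see how such a score is produced, here is a minimal Python sketch of the Flesch Reading Ease formula as it is conventionally published, together with the usual band labels (Easy, Fairly Easy, Standard, and so on). The function names are mine, chosen for illustration, and the sketch takes word, sentence, and syllable counts as given rather than extracting them from text.

```python
def flesch_reading_ease(total_words: int, total_sentences: int, total_syllables: int) -> float:
    """Flesch Reading Ease from raw counts; higher scores mean easier text."""
    if total_words <= 0 or total_sentences <= 0:
        raise ValueError("need at least one word and one sentence")
    words_per_sentence = total_words / total_sentences
    syllables_per_word = total_syllables / total_words
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word


# Conventional band labels as (lower bound, label), checked from easiest down.
BANDS = [
    (90, "Very Easy"),
    (80, "Easy"),
    (70, "Fairly Easy"),
    (60, "Standard"),
    (50, "Fairly Difficult"),
    (30, "Difficult"),
]


def flesch_band(score: float) -> str:
    """Map a score to its conventional label; anything under 30 is the hardest band."""
    for lower_bound, label in BANDS:
        if score >= lower_bound:
            return label
    return "Very Confusing"


if __name__ == "__main__":
    # Hypothetical counts: 100 words in 5 sentences with 150 syllables.
    score = flesch_reading_ease(100, 5, 150)
    print(round(score, 2), flesch_band(score))
```

Because the formula only subtracts penalties for long sentences and long words, it knows nothing about what the words mean; that limitation comes up again in the comments.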

But how difficult a challenge is readability for Wikipedia readers? A study in 2010 of cancer information on Wikipedia found that while the information was as accurate as that in a professionally curated database, its advanced reading demands made it much less accessible (the professional database required about a 9th grade reading level, while Wikipedia’s cancer information required about a college sophomore’s reading skills).

Leveraging this and other information, a group of researchers in the Netherlands analyzed the readability of Wikipedia overall. Their paper, published in First Monday, is quite readable.

Wikimedia, the parent of Wikipedia, has known for some time that its core product is too difficult to read — to address this, it introduced a “Simple English” version of Wikipedia in 2003, which contains about 68,000 articles (compared to the 3.5 million in the main wiki). The Basic English suggested for the Simple English Wikipedia consists of about 850 words. In late 2003, the Simple English version had a readability score of 80 (Easy). By 2006, this score had dropped to 70 (Fairly Easy).

One interesting finding in this study is how many Wikipedia articles are very short. In filtering a few million articles, the authors found that 40% consist of five or fewer sentences.

Eliminating these short articles, the authors found that the readability score of the majority of Wikipedia (73.5%) is 51.18 (SD = 13.84), which is lower than the goal of 60 (Standard). In addition, 45% of the articles could be qualified as Difficult or worse.

In the Simple English Wikipedia, 60% of the articles consist of five sentences or fewer. Eliminating these from the calculations, the reading score for the Simple English Wikipedia came in at 61.69, lower than the goal of 80. In fact, 94.7% of the articles scored lower than 80, and 42.3% were below the score needed to be considered Standard reading material.
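The filtering step described above (drop articles of five or fewer sentences, then summarize the rest) can be sketched in a few lines of Python. This is an illustration only, not the authors' code: the function name and the input format are hypothetical, the sample numbers in the usage lines are made up, and I am assuming a population standard deviation because the post does not say which kind the study reports.

```python
from statistics import mean, pstdev


def summarize_scores(articles, min_sentences=6, standard=60.0):
    """Summarize Flesch scores after dropping very short articles.

    articles: iterable of (sentence_count, flesch_score) pairs.
    min_sentences=6 excludes articles of five or fewer sentences.
    """
    articles = list(articles)
    kept = [score for sentences, score in articles if sentences >= min_sentences]
    if not kept:
        raise ValueError("no articles left after filtering")
    return {
        "kept_share": len(kept) / len(articles),
        "mean": mean(kept),
        "sd": pstdev(kept),  # assumption: population SD
        "below_standard_share": sum(s < standard for s in kept) / len(kept),
    }


if __name__ == "__main__":
    # Made-up (sentence_count, score) pairs, purely for illustration.
    sample = [(3, 75.0), (12, 40.0), (8, 60.0), (5, 90.0), (20, 50.0)]
    print(summarize_scores(sample))
```

Excluding the stubs matters because a score computed from a handful of sentences is noisy, and a large share of very short entries could otherwise dominate the average.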

The authors took a further step, comparing articles with the same title in the two versions. Here, the Simple English Wikipedia had an average score of 61.46, compared to 49.27 for the general Wikipedia. Both are well off their reading level goals.

As the authors write in their conclusions:

The results of this study show that the readability of the English Wikipedia is overall well below a desired standard. . . . Moreover, half the articles can be classified as difficult or worse. This finding confirms our hypothesis that numerous articles on Wikipedia are too difficult to read for many people.

The authors also created a tool you can use to test the readability of any entry in Wikipedia.

There are two simple explanations for the declining readability scores in both the standard and Simple English versions of Wikipedia. First, it’s a skeuomorph of an encyclopedia, and encyclopedias are supposed to have formal, passive language and sophisticated vocabulary. That’s what we think it should be. The second explanation — robust borrowing from authoritative resources — also likely contributes to the works’ difficulties in reaching the optimal readability scores.

In fact, experts are notoriously bad at writing for non-expert audiences. Readability problems in medicine plague patient-education materials, for example. A 1989 study of the readability of smoking cessation materials found that “a serious disparity existed between the reading estimates of smoking education literature and the literacy skills of patients.” In short, many patients couldn’t read about how to quit. A 2001 study of patient-education materials aimed at parents and discussing pediatric topics found that the reading level was about four grades higher than the intended goal. A 2004 study of patient-education materials written for family medicine patients found a similar disparity. A 2008 study of orthopaedic patient education materials found that only 10% achieved the desired readability level. It seems that if you want to find an area where every medical specialty group is failing, you need look no further than the readability of their patient-education materials.

I once worked on a publication aimed at patients that went through academic editors as part of its approval process. It quickly became clear that the writers — who initially were writing at an appropriate level — were helpless to avoid writing for these academic editors after a time, because the feedback from these “experts” was firmly and unquestionably that too many nuances and qualifications were being left out by using short sentences and a limited vocabulary. Needless to say, the publication folded, having attained a reading level only a graduate student would understand.

Even when they try, it’s difficult for advanced readers and writers to write to a lower reading level. It feels condescending, like you’re “dumbing it down,” and also prevents you from using sentence structures and vocabulary that come to you naturally. It’s hard work, and it’s unnatural. But it’s also extremely important to do it right.

Wikipedia is free, yet it presents readability barriers to many of its users. This suggests that the challenge of making scientific information approachable, and therefore accessible, is much deeper, much more difficult, and much more complex than simply removing the cost to readers.

Discussion

15 Thoughts on "Wikipedia's Writing — Tests Show It's Too Sophisticated for Its Audience"

Excellent article. It points out a major challenge for Wikipedia: remaining easy to read while including necessary nuances and qualifications. As a journal editor, I’ve been dealing with this problem in a similar way.

I don’t believe requiring a simple reading level will help most journal articles. The nuances and qualifications are just too important. There’s a big difference between “honest” and “honest most of the time!” Providing a complete picture to scientists sometimes requires excluding a lower level of readership. In other words, a journal needs to ask, “Who is our audience?”

Abstracts, however, are another matter. Readers scanning a journal see these first and use them to decide whether to access the article. Here, readability is critical. We’ve been urging authors to keep their abstracts short and to the point. Tell the reader what you did and what you found. Push the qualifications to the body of the article.

The question for Wikipedia is, should it be more of a summary or abstract service, with references to detailed articles elsewhere? Or, should it continue to provide a layman’s version of sometimes complex concepts? Perhaps some type of article self-rating for readability might better inform readers.

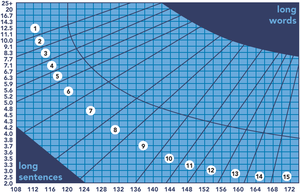

The Flesch test algorithm uses only sentence length, measured in words, and word length, measured in syllables. Technical language per se does not count (which is a shortcoming I have addressed in my readability work, but that is another story). Technical writing tends to suffer from long words, with 4 to 5 syllable words being common. Legal writing suffers from long sentences with short words, my favorite being a 162-word sentence in a regulatory definition. So one would like to know which is the problem in Wikipedia, if they just used the Flesch test, that is. Limiting sentences to 25 words or fewer is a good rule of thumb.
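To see which of the two inputs is doing the damage in a given text, the two terms of the formula can be reported separately. The Python below is a rough sketch under stated assumptions, not the tool the study's authors built: the syllable counter is a crude vowel-group heuristic of my own (real tools use dictionaries or better rules), sentences are split naively on `.`, `!` and `?`, and the function names are hypothetical. The constants are those of the standard Flesch Reading Ease formula.

```python
import re


def count_syllables(word: str) -> int:
    """Crude estimate: count vowel groups, drop a silent trailing 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def text_profile(text: str) -> dict:
    """Report sentence length, word length, and each term's Flesch penalty."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z]+(?:'[A-Za-z]+)?", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return {
        "words_per_sentence": words_per_sentence,
        "syllables_per_word": syllables_per_word,
        "sentence_penalty": 1.015 * words_per_sentence,  # points lost to long sentences
        "word_penalty": 84.6 * syllables_per_word,       # points lost to long words
        "flesch": 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word,
    }


if __name__ == "__main__":
    print(text_profile("The cat sat. The dog ran."))
```

The word term is large for any text, so the two penalties are most informative when compared across texts: a legal passage should stand out on `sentence_penalty`, a technical one on `word_penalty`.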

I blame the pesky physical universe. If it really could be summarized neatly in a few short sentences then science would have been finished decades ago. In reality, it’s endlessly complicated and largely defies description.

On a related note, everyone is always on at scientists to write more clearly and to write for a more general audience, but to be honest that’s extremely time consuming, of dubious value for your career and even then people complain it’s too complicated or that they didn’t understand it. I don’t know how common this is, but reading lay summaries of my work (most written by me) has been like hearing a symphony played on a toy xylophone – so much important detail is missing that the end product feels like a lie.

There’s a reason scientific papers are written so densely and with so much jargon. If each time you had to explain everything a reader needed to know to understand the work, then each paper would be hundreds of pages long…

Indeed, David, the words we use have the meanings they do primarily because we are trying to say what is true about the world. So-called jargon, or scientific language, exists because scientists are talking about things no one else understands. Put another way, a concept is what you have to know to use a word correctly, thus concepts are bodies of core knowledge.

So only people with specialized knowledge can understand specialized words. Science can be summarized in lay language, but it cannot be understood, not as the scientific expert understands it, because lay language lacks the necessary concepts. This is why I am dubious about the OA claims that non-scientists need access to the scientific literature.

It follows by the way that the emergence of new language can be used to track the emergence of new scientific concepts. This is my research field.

In my time working with the Little Red Schoolhouse program at UChicago, a point was made that, although writing (or, more properly, editing) should be done with an eye toward communicating intelligibly, what that means will vary by context. Writing for the press release, or for the campus newspaper, is a different matter than writing for a small cadre of experts in your field.

The “simple English” versions allow users to switch between versions, depending on their familiarity not only with English, but also with the subject matter of a particular article. I believe the design of pages could emphasize this ad hoc swapping ability more readily.

The Flesch scale does not, in my understanding, give any credit or advantage to hypertext. When a printed encyclopedia uses a sophisticated word, it can at best direct the reader to further reading, or incorporate a brief definition. When a hypertextual encyclopedia presents a new word, that word can be linked to a full article on the concept represented by that word. Complicated words can even present with definitions and related links as a cursor hovers over them.

The Flesch Index is built to assess texts given a linear understanding of reading a static text. That’s usually a pretty useful view, but is becoming less useful as literacies evolve alongside our abilities to communicate in less linear fashion.

The Flesch scale was developed in the 1940s. It only considers sentence length and word length, so neither logical nor conceptual complexity is considered, much less hypertext. An advanced quantum mechanics text could have a ninth grade Flesch score. Still, it is a good necessary condition for readability, just not a sufficient condition.

More work has been done in the last 60+ years, including vocabulary based levels. My team cataloged the scientific terms taught in American K-16 education, by grade level. This is another readability scale. Science and science education writers often use terms that are too advanced for their audience to understand. See http://www.stemed.info.

Flesch wrote “Why Johnny Can’t Read,” but the real question is why Johnny and Jane can’t learn. This is more about concepts than sentences.

I don’t mean to put you on the defensive. The Flesch scale is, despite being fairly simple, a reasonable place to start in investigating the readability of a text. I simply mean to say that, given the way communications are evolving in our digital age, writers may soon be facing a different question than the classic dichotomy of complex jargon versus simpler “plain” language.

A single word in the jargon of a specialist can be used with very nuanced meaning that the specialist may feel is lost with rephrasing for simplicity. In spoken and print media, this generally means the speaker needs to make hard choices about disregarding nuance for the sake of intelligibility (as a commercial editor, I’ve learned many tricks to getting experts to “speak plainly”).

What I’m proposing is that, in hypertextual environments, the decision becomes less binary. The question changes from a choice between simplicity and accuracy, and becomes a question of whether those important nuances can be made available to the audience without being forced into jargon or slowly and pedantically explained at the risk of wasting some readers’ time.

By way of example of the potential: When reading Wikipedia, one will come across many links within the text. If you were, in the midst of a sentence, to come across a blue, underlined and capitalized word ending in -ology, you would probably be able to infer that the term is a somewhat large subject, and that you could click that link in order to gain a better, deeper understanding of the sub-field. You could even open that link in a new tab or window, so that you don’t lose your place in the article you were reading. Meanwhile another reader, an expert in our hypothetical X-ology, could continue reading without having to skim past hundreds of words providing a background explanation of a subject she already understands well.

Sorry if I seemed defensive, when I was actually pontificating. The structure and complexity of expressed thought, including hypertext, is a major part of my research. Whether adding hypertext increases or decreases readability is a good question.

Besides my commercial editing experience, I’m coming at the topic as one who’s currently looking at how to model the possible stories told in non-linear, ergodic narratives (such as a video game, or a Choose Your Own Adventure novel). It ultimately ties back to my commercial work, thinking about “UX” (user experiences, and the design thereof). Good interactive design keeps in mind that the “story” of one reader may be much more brief than another. Wikipedia has rather famously clunky design, though, so perhaps I’m just leading you in chasing a wild goose. 🙂

But the relational structure that integrates the articles in Wikipedia is not designed, rather it is evolved, like the citation structure of the scientific literature. It is a living thing, not someone’s creation.

On the other hand, my research indicates that expository writing has a deeply non-linear structure, which I call the issue tree. Here is an example: http://www.stemed.info/Repo_Tree.pdf. By coincidence this issue tree was developed when I was converting consumer credit contracts to plain English, back in the late 1970s. I also used the Flesch test.

Given that expository text has a tree-like structure, with constantly diverging lines of thought, hypertext still has a lot of unrealized potential. After all, writing as a linear string of sentences is a 6000 year old technology. The linear string is modeled on speaking, which has to be linear.

However, stories may be another story, especially where the linear temporal sequence is part of the story.

I’d like to point out that the Flesch score for this article is 27.4 according to Microsoft Word…