Authors submitting papers to PLOS journals can now opt to transfer their manuscript automatically to the bioRxiv preprint server, according to a joint press release issued on February 6.

In this arrangement, the publisher (PLOS) will perform the initial screening, which includes checks for plagiarism, previous publication, and scope, as well as ethical and technical criteria, before manuscripts are transferred to bioRxiv. The publisher maintains that this automatic transfer will accelerate the dissemination of the authors’ work and permit community peer review, which PLOS editors will incorporate into their own evaluation. Authors may opt out of this service.

What came later in the press release was more alluring, and I should not attempt to paraphrase:

PLOS and CSHL also plan to work collaboratively towards solutions for preprint licensing that enable broad dissemination and reuse; the addition of badges to papers which signal that additional services for authors have been performed by PLOS and potentially other organizations; submission and screening standards in the biomedical sciences; and the implementation of new forms of manuscript assessment to augment or improve current methods of peer review.

Badges? Did someone ask for badges? It’s hard not to conjure a meme that existed long before the Internet. But, before someone gets overly excited and starts a gunfight, we need to clarify a few things, because the lack of details in the press release and on the bioRxiv and PLOS websites has created widespread confusion. Last week, Inside Higher Ed ran a story with the lead, “PLOS Pushes Publication Before Peer Review,” a claim that couldn’t be further from the truth. So, what’s the truth? Let’s start with the facts.

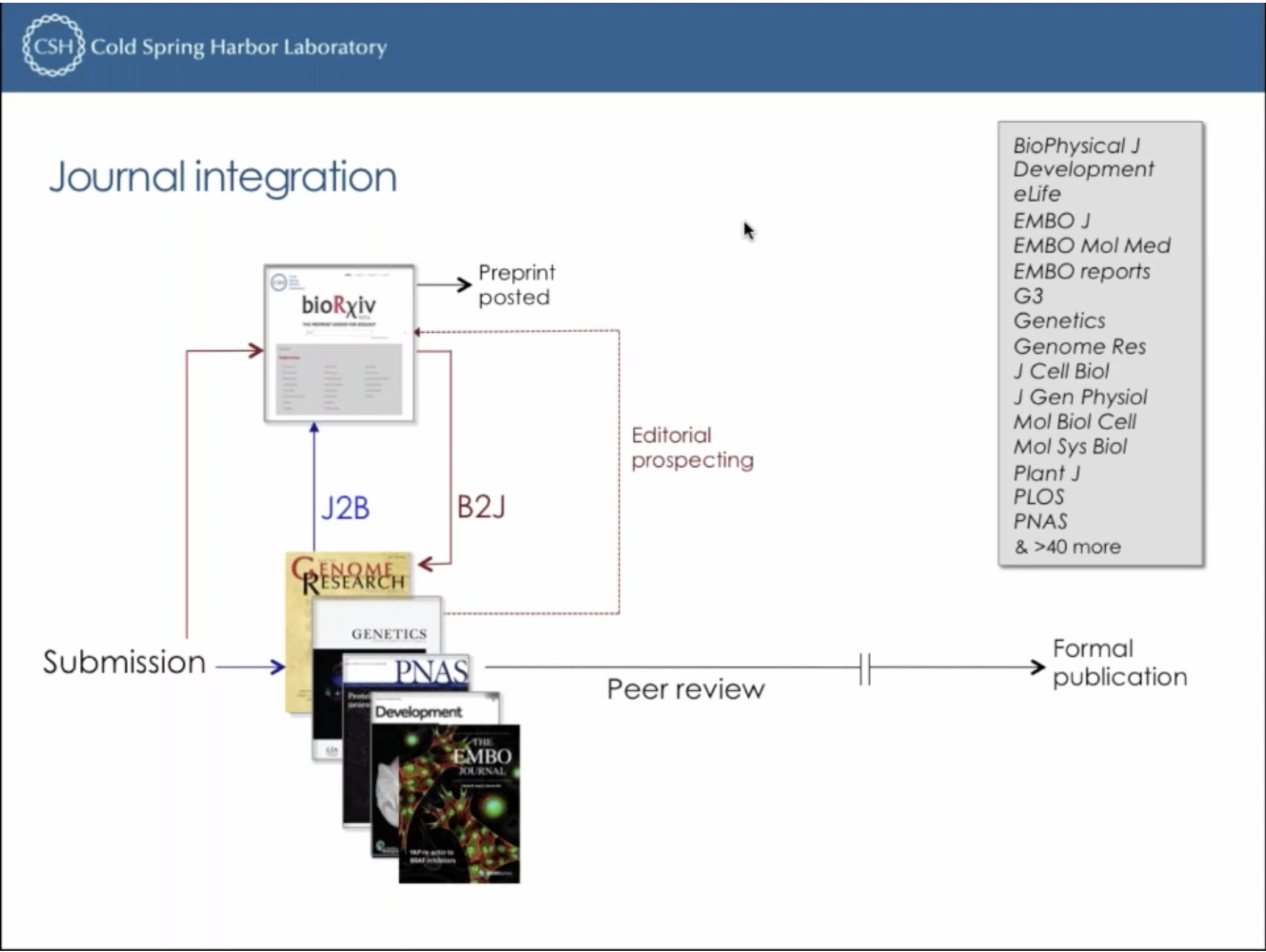

Since January 2016, an author of a bioRxiv preprint has been able to transfer the manuscript to a participating journal. bioRxiv calls this service “B2J” and maintains a growing list of participating journals on its website. There is no mention of a transfer service that goes the other way (J2B), and yet, it has existed for the past two years, according to Richard Sever, Assistant Director of Cold Spring Harbor Laboratory (CSHL) Press and bioRxiv’s cofounder. J2B was briefly described in a Crossref webinar early last year (see time 40:20). The only other public mention I could find was a press release issued by eLife in March 2017. According to Sever, there are fourteen journals that transfer manuscripts to bioRxiv.

So, PLOS announcing that its authors would be able to post their manuscripts to bioRxiv is not news, unless news means following the crowd or showing up to the party late.

Now, what about those badges?

It is not unusual these days to make big, bold claims about disrupting scholarly communication. However, it is unusual when people with a long history in science publishing make big, bold claims but fail to provide any details. If there is anything that I’ve learned about scientific publishing, it’s that details matter.

By badges, PLOS and CSHL do not mean that a manuscript will arrive with a stamp that reads “Submitted to PLOS Biology” or “Under Peer Review at PLOS Biology”. Similarly, there will be no badge telling a reader that the manuscript was rejected by PLOS Biology and transferred to PLOS ONE. The preprint will look like every other preprint in the system. It will get assigned a bioRxiv DOI, not a PLOS DOI. From the standpoint of the reader, there is no way to tell how the manuscript got into bioRxiv.

Nonetheless, the manuscript transferred initially by PLOS to bioRxiv on behalf of its author may not resemble the paper that is eventually published. Between submission and final publication, there may be rounds of revisions to the manuscript that are not reflected in the original transferred document. While the publisher took responsibility for screening the original submission, it takes no responsibility for the version that is left in bioRxiv. Updating the preprint with a revision is completely up to the author, and, as the arXiv taught us years ago, authors are not always conscientious about updating preprints.

So what are these “badges”? Responding to my request for clarification, Alison Mudditt, CEO of PLOS, wrote by email that “badging is to be developed later in partnership with bioRxiv and other stakeholders (including authors) so we don’t yet have details about how this will work.”

I was still bewildered, so Richard Sever at CSHL referred me to a proposal to reinvent publishing in the digital age by Bodo Stern and Erin O’Shea on the ASAPbio website. ASAPbio is an advocacy group whose primary goal is to promote the use of preprints in the life sciences. I should also note that the authors of the proposal are on the executive team at the Howard Hughes Medical Institute (HHMI), which is a primary backer of the journal eLife, which participates in B2J and J2B.

Stern and O’Shea propose that scientists abandon the journal publication model and adopt an author-driven publishing platform, similar to that used by F1000Research, where results become public first and are evaluated later. The evaluation model operates entirely at the article level and relies upon tagging papers with various indicators that may represent their quality, rigor, scope, or performance. The term “badge” only comes up in the comment section as a suggestion from Richard Sever to use the term instead of “tag.”

Now decoded, the press release makes much more sense. PLOS is taking a step to support a post-journal publication platform where manuscripts become publicly available as preprints upon submission and where evaluation is largely conducted post-publication as a series of publisher-provided and community-supported “badges” that are sent to the preprint server over time.

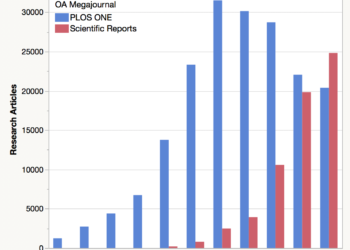

While this may sound like a radical position, PLOS is not far from this publication model already: 9 out of 10 PLOS papers are published in a megajournal where novelty, significance, or impact play no role as acceptance criteria. And, while other metrics-focused services have stolen their spotlight in recent years, I should remind readers that PLOS developed article-level metrics nine years ago specifically to support a narrative that it is the article (not the journal) that ultimately matters.

The real outlaw in this story is bioRxiv, which has been taking steps from being a preprint server to becoming a publishing platform. By incorporating post-publication validation badges into preprints, bioRxiv begins to transform itself into the largest open access megajournal the world has ever seen.

Discussion

28 Thoughts on "Badges? We Don’t Need No Stinking Preprint Badges!"

It would be helpful for readers of Scholarly Kitchen if you would clarify the notion that bioRxiv is now moving “from being a preprint server to becoming a publishing platform.” A preprint has a DOI number and is citable in the regular literature. Thus, a preprint is a publication. bioRxiv has always been a publishing platform. Surely you should use (and define) the terms “preprint publication” and “formal publication.”

The former term does not mean an article has not been subjected to some degree of peer review. The bioRxiv policy is that “every submitted manuscript is examined by affiliate scientists to determine its suitability for posting.” As I discovered to my own cost recently, “suitability,” at least in the contentious evolutionary biology field, may be in the eye of the beholder.

The bioRxiv has been very careful not to call themselves a “publisher.” Indeed, their About Page describes the bioRxiv as:

“a free online archive and distribution service for unpublished preprints in the life sciences.”

Some will argue that anything made public is “published,” as the root of the word implies. However, I take a more narrow view that scholarly publishing implies four key functions:

1. Registration

2. Validation

3. Dissemination

4. Archiving

At present, bioRxiv (the arXiv, and other preprint services) fulfill registration, dissemination, and archiving, but leave validation (editorial and peer review) to journals. (One could argue that checking for scope and duplicate publication is a weak form of editorial validation.) Nevertheless, when validation becomes incorporated into services like bioRxiv—through the kind of quality and impact validation badges they describe in their press release—they transform themselves into a publishing platform, indistinguishable from publishing outlets like F1000R and Wellcome Open Research.

Phil, I think you’re on the right track with your emphasis on validation. I’ve always thought “pre-print” was the wrong name for these services because it assumes a final outcome that a portion of the manuscripts posted will not achieve — formal publication (“print,” ugh).

“Pre-validation server” is more apt a name (for now). And for those that disagree with my reasoning, I encourage you to refer to me as “pre-billionaire Jake Zarnegar.” 😉

You’re right, Jake, the term “preprint” is outdated and inadequate. But most people in research science immediately grasp what it means (while those in other fields use different terms like “working papers”) and that’s why we used it when starting bioRxiv. And there’s no meaningful alternative yet that’s likely to catch on. NIH’s “Interim Research Product”? Your “Pre-validation Product”? Hmm…

A peer-reviewed journal with 2 or 3 anonymous reviewers does not a scientific validation make, I’m afraid.

As a postscript we can note that, as reported in the March issue of Nature Biotechnology, a January preprint paper in bioRxiv sent certain biotech stocks falling “precipitously.” By early February the preprint paper had been viewed 18,000 times. One commentator ascribed this to “the lay press” being “ahead of the peer-review process.” Another mentioned “the paper’s publication” when referring to the preprint paper. Another noted that preprint papers “will accelerate research by cutting the lengthy wait associated with peer review,” and declared this “first time a preprint has caused mayhem on the biotech markets” as “a tipping point in biology.”

Interestingly, there was a presentation just yesterday at #redux18 on Peer Review “badges” (?) following along the Creative Commons license style. All I can point to is the tweet I saw – https://twitter.com/_ChristinaEmery/status/963450259862118403 – and from it my sense that this is an initiative of MIT Press?

Wait…PRE-Validation? Badges to increase transparency of the peer review process? This is crazy talk! Several years ago I and others tried to get PRE-val, a service which provided a badge that offered info on the peer review process and other best practices, off the ground. AAAS eventually purchased it and I’ve no idea what happened to date. This is all my way of saying it’s a brilliant idea and I thought of it first. My genius should be recognized! 😉

It came up at the peer review meeting that the role of the editor isn’t always properly appreciated by some & it’s this that allows confusion between what bioRxiv is doing and an actual journal. A trained editor is required to make peer review happen at scale & that’s what journals bring to the table. Without editors, it’s just commenting on papers online. Without the behind-the-scenes mediation, managing of conflicts of interest, unprofessional behavior, and unconscious bias, badgering people to submit reviews, and so on, you don’t have a journal, badges or not.

This is the most spot-on thing I’ve read all week. The role of the editor in peer review is crucial, not least because they vouch for the competence of anonymous reviewers. There is also a world of difference between reviewers who review because they’ve been judged suitable by an editor, and people who show up and comment spontaneously on an article.

Believe it or not, we are entering a more anarchic period regarding what the role of editors and peer reviewers should be in vetting an article, moving from a heavy-handed, anonymous process that serves narrow interests to a more open, lighter touch in preprints and open access. Many editors of traditional journals have responded inappropriately to present problems by consolidating the poor past practices of anonymous review and taking on god-like traits, i.e., a trend towards discarding a large proportion of submitted papers even before they reach peer review (useless anyway), presumably based on politically motivated editorial decisions rather than deep analysis or even understanding of the potential ‘scientific’ impact of the papers (not equivalent to trumped-up default measures). I call it a new intrusion of academic realpolitik: there is an inherent fear of upsetting the big players in academia with entrenched positions. Badges of any sort, if adopted widely, can be ruinous to scientific endeavour precisely because they are not like a medal at the Olympics, which can measure standings objectively; subjectivity inevitably enters into the picture. More important than creating new badges is dismantling the system of what has become, to a large degree, arbitrary vetting by impact factor default. There is a strong analogy between the two pursuits of impact factor science and badge awarding. Transparency is an issue wherever you look in academia.

To me the point of editorial badges is that they just make preprints more useful to readers. If a paper has undergone some vetting either at a journal, or from an independent source such as Research Square (where I’m from), then it is useful for that information to be conveyed to readers. Given that funders are increasingly allowing preprints to be submitted for grant applications (in order to speed things up), it seems reasonable to provide means of demonstrating the level of vetting that a preprint has undergone, without having to wait for the paper to pass through full peer review.

I understand your point completely; however, editorial badges applied to preprints create a potential problem. If they are too generic (checked for scope, ethics violation, duplicate publication, image manipulation, etc.), we don’t know WHO did the vetting and at WHAT LEVEL. Without context, the reader may not be able to trust the badge. On the other hand, if a journal puts its own stamp on the preprint and it is rejected (for whatever reason), this information becomes public. And, just imagine all of the stamps that would go on a paper that starts at Nature and ends up at Scientific Reports!

I agree about the who and the what. To work well, the badges would need to link out to a page that gives more information on the checks. This is actually more than journals do currently, so it has the potential to shed some light on what goes on within the editorial office walls, and thus allow journals to demonstrate the value they add.

I hear your point and think linking would solve the context and trust problem. However, it still doesn’t solve the rejection problem. Imagine that a preprint received a badge from PLOS that it failed a plagiarism check. The paper is rejected from the PLOS journal, revised, and resubmitted to another journal, which then passes the paper on the plagiarism check. There are now two badges (one positive, one negative) both associated with the same preprint. One could go much farther with this story, but the problem remains. Complete transparency requires a complete history of all one’s failed attempts. I’m not sure this is what PLOS, bioRxiv or, more importantly, what authors want.

Why would a preprint be the right place for this sort of information? Why would a journal go to all that effort for a paper it is rejecting? If it’s accepted, then why send people to the preprint version, which may not have been revised to include the changes required to the final published version?

I get your point on making peer review more transparent, but fail to see why the preprint is the right place to do this. If it’s seen as being editorially vetted, it might be considered “published” by many journals.

Analogous badges are implicit in any publication: it’s called the impact factor, and it does an even greater disservice to science.

I don’t find this analogous at all.

The analogy is that short-term vetting of any sort is somewhat arbitrary and only academically useful, i.e., career enhancing, but not scientifically useful. Most vetting has become smoke and mirrors and subserves an illegitimate academic hierarchy, as we are slowly and painfully discovering. The authenticity of preprints should be encouraged free of any obstructionist system of vetting. If it is being considered by bioRxiv at all, it’s probably being introduced to appease powerful political opinions, i.e., trying to enhance its prestige from a sociological, not scientific, point of view. The essential problem is we are confounding the two.

Phil, in your response to Dr Forsdyke you list the four functions Oldenburg ascribed to journals. bioRxiv’s focus is one of them – dissemination.

The fourth function, what you call validation (I prefer certification) is, as you acknowledge and the bioRxiv founders have firmly and consistently stated, the work of journals through peer review. That process is much debated but in surveys, most scientists believe it’s necessary and beneficial in publishing their work. bioRxiv is not going to “do” peer review of its posted content. It’s not going to be a journal, mega or otherwise, like F1000 or anything else. The Kitchen itself has emphasized over the years the many and varied things that journal publishers do (eg https://scholarlykitchen.sspnet.org/2018/02/06/focusing-value-102-things-journal-publishers-2018-update/) and almost none of them are done by a preprint server.

bioRxiv plans to continue doing what it already does: pointing readers to useful information about a preprint. When a preprint has a published version, bioRxiv provides a link to that journal. One journal tells us when a version of the preprint is in press and we signal that too. When there are blogs or tweets around a preprint, a reader is given links to them. When there are notes from a journal club discussion, those can be seen in the comments section, where the community at large can also weigh in.

Last week’s ASAPBio/HHMI meeting made clear that there is a huge interest in seeding more community discussion of preprints and many different ideas about how to do it. bioRxiv will work with all concerned to make it happen. But I want to make clear, as your piece did not, that these discussions will take place off the bioRxiv site and will be conducted not by bioRxiv but by multiple, likely self-organizing entities. At bioRxiv our job will be to work with those entities to figure out ways of connecting all those conversations to the preprint and signaling to readers that those conversations exist. Our shorthand for that signal is a “badge”. PLOS is one of the organizations interested in community review, but as Alison Mudditt told you, they have not yet finalized how they will do it.

Your piece overlooked an important aspect of the PLOS-bioRxiv relationship: the service it will provide to tens of thousands of authors. They’ll be able to make their work instantly available to the community simply by submitting it to a PLOS journal, so the volume of work available for discussion by the community in preprint form at bioRxiv will greatly increase. This commitment to preprints by PLOS is a win for science and scientists. It deserved more than a passing glance.

I think the confusion comes from the lack of details that accompanied the announcement about the mysterious badges that PLOS would be providing:

http://www.stm-publishing.com/plos-and-cold-spring-harbor-laboratory-enter-agreement-to-enable-preprint-posting-on-biorxiv/

PLOS and CSHL also plan to work collaboratively towards solutions for preprint licensing that enable broad dissemination and reuse; the addition of badges to papers which signal that additional services for authors have been performed by PLOS and potentially other organizations; submission and screening standards in the biomedical sciences; and the implementation of new forms of manuscript assessment to augment or improve current methods of peer review.

From the statement, it sounds like there are ideas under development for PLOS to provide some level of services upon the preprints that stem from submissions to their journals. From your response it sounds like no definite plans are in place and that the discussions have not even happened as of yet. So perhaps the press release was premature, or at least perhaps many (I’ve heard similar questions/comments from others beyond Phil) are reading too much into it.

That’s of course separate from the deposit by PLOS into bioRxiv on behalf of authors.

Thank you for highlighting this. I work in an Editorial function and somehow the “badges” concept seems to be a sole fetish of marketing teams. Nobody who really knows science or works with researchers actually believes badges are worth their fad. The sorry state of our industry is that our marketers may very well use the same tactics for selling washing powder, as they really do not understand our audience. Until marketers also arise from science, we will have more badges to badger us.

I think you’re confusing what’s being discussed here with something like Open Science Badges (https://cos.io/our-services/open-science-badges/) which are suggested to be used as a motivational tool to drive author behavior. Here, unfortunately the same term, badges, is being used to describe something still undefined, but presumably to serve as a marker to the reader that a preprint has received some as yet unknown level of reviewer/editorial scrutiny/approval.

In our paper we proposed that badges (we called them tags) would be a useful post-publication evaluation tool. They have two potential advantages: they promise to capture long-term impact better than an editor’s decision to publish the article (which is the current ‘proxy’ for impact); and they could help to dislodge journal-based indicators like the impact factor in the evaluation of scientists and scientific work. We understand that badges are still largely undefined and existing article-specific metrics have not been able to replace the use of the impact factor. It will be critical to identify useful badges (and badges that should be avoided) and to figure out how badges can be ‘attached’ to the article. While we were initially only thinking of post-publication badges, preprint badges could also be very useful for the community and for journals. Imagine, for example, badges that certify ‘statistical analysis’, ‘absence of image manipulation’, or ‘accuracy of highly technical procedures’. And why shouldn’t it be possible to click on the badge to figure out what expert group or individual conducted the certification? To me the strong statement of this blog that we don’t need badges is premature: they are worth exploring, unless somebody comes up with better ideas for creating article-specific indicators of quality and impact.

The concept of post-publication certification is an interesting one. But the key phrase there is, “post-publication.” I’m not sure that the right place for such verifications is on the preprint, which is meant as a working paper, and is potentially subject to an infinite number of revisions. When an author revises their preprint (and John Inglis says that 29% of the preprints on bioRxiv are revised at least once), one would need to remove all certifications and start over from scratch. Further, it is likely that the preprint will differ in some manner from the published version of record, so a peer review process that results in significant changes (not to mention post-acceptance editing) will result in an article that may carry certifications not valid for the preprint. One would need to carry out different review processes for each different version of each paper.

But as for the question asked in this blog post, one must ask where the line is drawn between something being a preprint and being considered “published”. If a manuscript has gone through editorial review and has been publicly certified to have valid statistical analysis, absence of image manipulation and accuracy of highly technical procedures, I would think that most journals would consider that “published” and not be willing to accept further submission of that manuscript. Are those certifications all that different from declaring the article to be “scientifically sound”? That’s where the preprint server makes a shift to becoming a journal.

David,

you raise two valid points. Maybe preprint badges should initially focus on features that journals would find useful, like badges that have limited overlap with the journal’s general review process. Questions like ‘do the data support the conclusions?’, ‘how significant is the advance?’ would still be addressed by the journal’s review process.

Whether for a preprint publication or a formal publication, just as a thermometer reading is but one index of health status, so should each of the many indices of scientific worth be viewed. Highly important among these is post-publication review carried out without the cloak of anonymity by people with credentials (as with PubMed Commons, which stops accepting comments from today onwards). Less important is PubPeer, which accepts anonymous comments that flow abundantly in overwhelming numbers. A stated reason for PubMed Commons’ demise is that it believes in quantity of inputs rather than quality. We weep for the loss of an important scientific thermometer (and much more).